Category: Software Development - Page 2

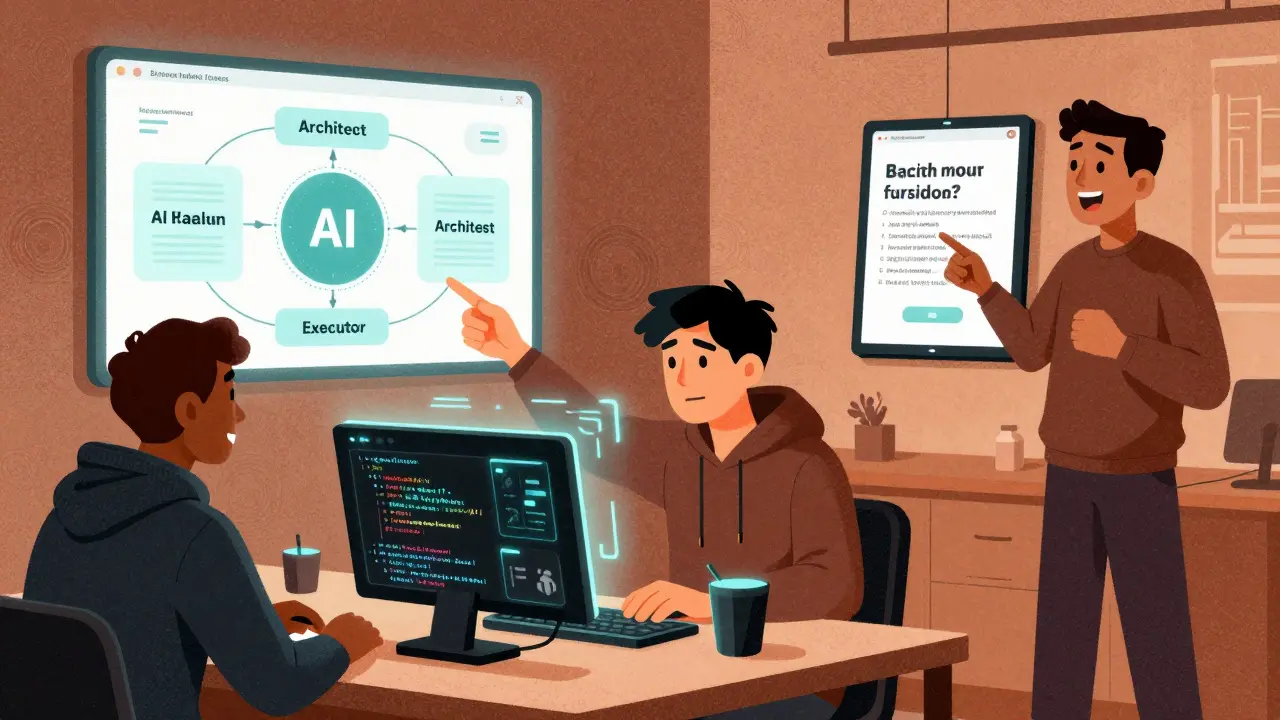

Design Patterns Commonly Used by LLMs in Vibe Coding Codebases

Explore essential design patterns for vibe coding, including vertical slices and context engineering. Learn how LLMs shape modern software architecture.

Secrets Management in Vibe-Coded Projects: Never Hardcode API Keys

Learn how to secure AI-generated code by avoiding hardcoded API keys and implementing proper secrets management strategies in software development.
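
As a minimal illustration of the idea in this teaser (not taken from the linked article), the sketch below reads a key from the environment at runtime instead of embedding it in source. The variable name `EXAMPLE_API_KEY` and the helper `load_api_key` are hypothetical, chosen only for this example.

```python
import os


def load_api_key(name: str = "EXAMPLE_API_KEY") -> str:
    """Return the secret stored in the environment variable `name`.

    `EXAMPLE_API_KEY` is a hypothetical name used for illustration.
    Failing loudly on a missing value avoids silently running with
    an empty credential.
    """
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(
            f"Missing required secret: set the {name} environment variable"
        )
    return value
```

In practice the variable would be set by the deployment environment or a secrets manager, so the key never appears in the repository.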

Hackathon Strategy: Win with Vibe Coding and LLM Agents

Winning hackathons in 2026 isn't about coding faster; it's about orchestrating AI tools like vibe coding and LLM agents to build compelling, user-focused prototypes in under 48 hours. Learn the strategy top teams use.

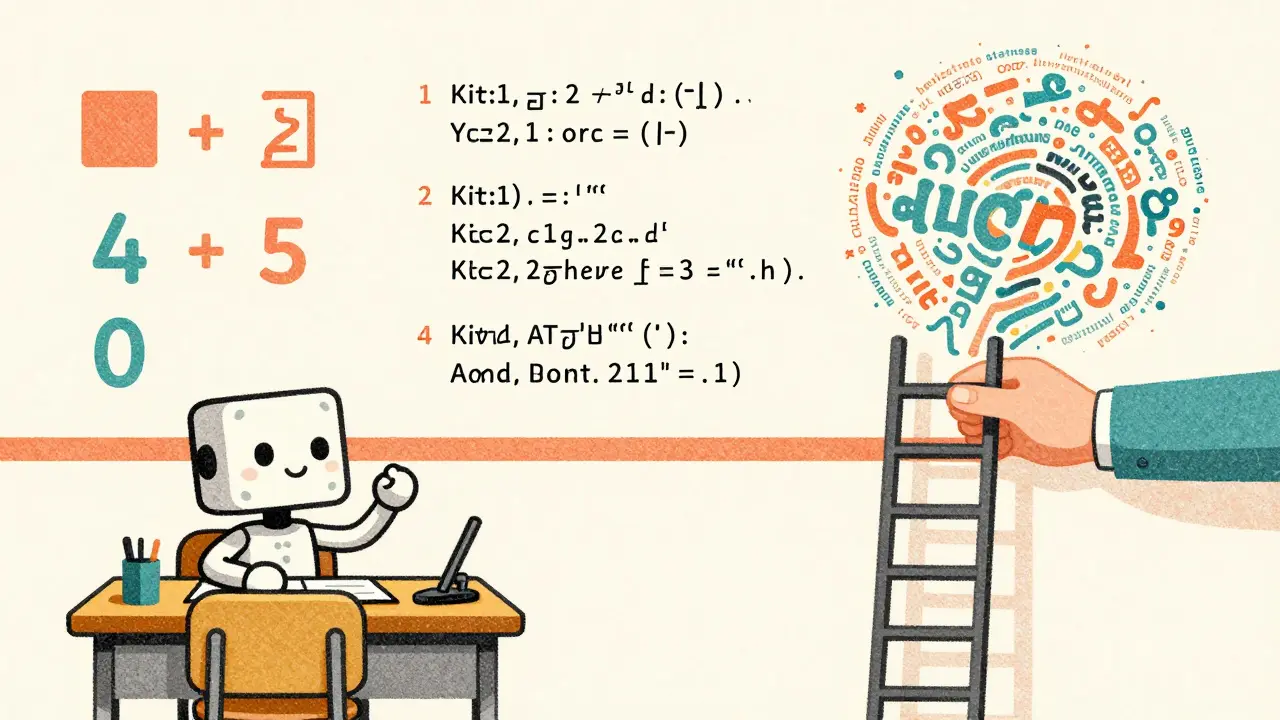

Curriculum and Blending: How to Mix Datasets for Better Large Language Models

Curriculum learning improves LLM performance by sequencing training data from simple to complex. This method boosts accuracy, cuts compute costs, and works best on structured tasks like math and code. It's becoming standard in modern AI training pipelines.

Post-Training Quantization for Large Language Models: 8-Bit and 4-Bit Methods Explained

Post-training quantization cuts LLM memory use and speeds up inference by 2-3x without retraining. Learn how 8-bit and 4-bit methods like SmoothQuant, AWQ, and GPTQ make it possible, and what you need to know to use them.

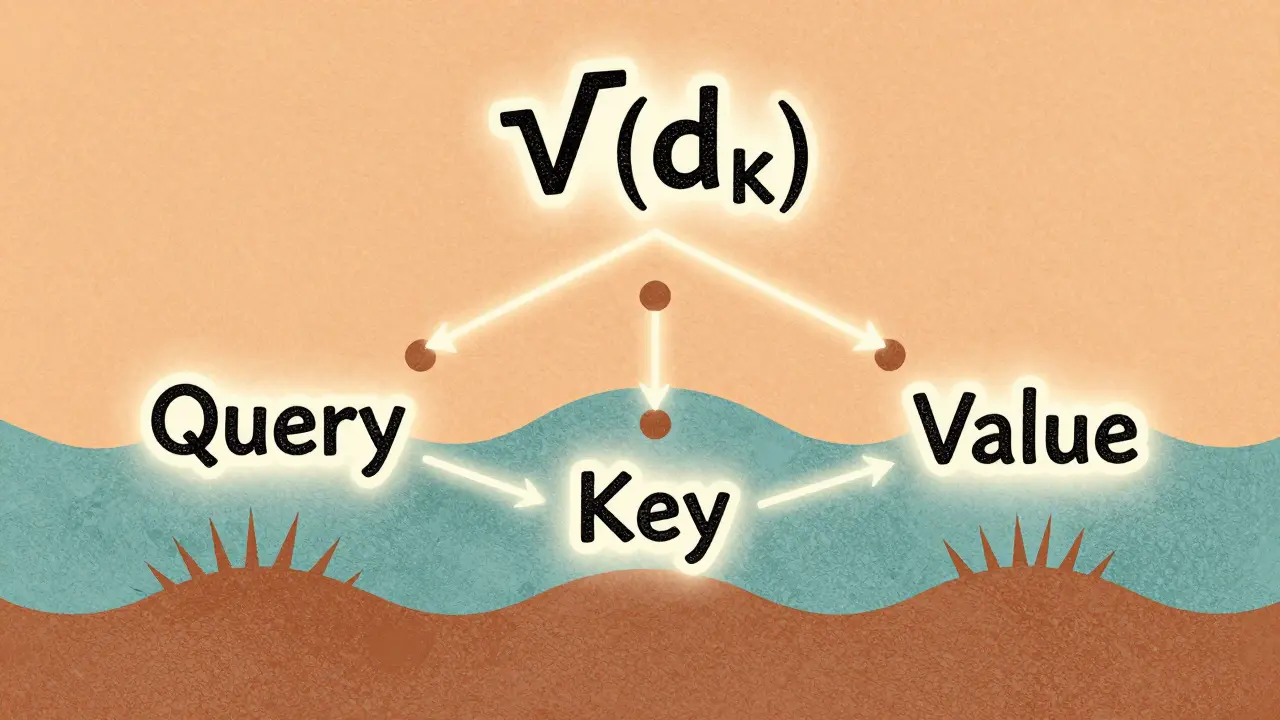

Scaled Dot-Product Attention Explained for Large Language Model Practitioners

Scaled dot-product attention is the core mechanism behind modern LLMs like GPT and Llama. Learn why the 1/√(d_k) scaling is non-negotiable, how it prevents training collapse, and what pitfalls to avoid in practice.
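
For readers who want to see where the 1/√(d_k) factor sits, here is a small dependency-free sketch of the standard formulation, softmax(QKᵀ / √d_k)·V. It is an illustration only, not code from the linked article; the function names are chosen for this example, and masking, batching, and multiple heads are omitted.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q, k, v):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors.

    q: list of query vectors, k: list of key vectors (same width d_k),
    v: list of value vectors (one per key).
    """
    d_k = len(k[0])
    scale = 1.0 / math.sqrt(d_k)  # keeps score variance roughly constant as d_k grows
    output = []
    for q_row in q:
        # Scaled dot product of this query with every key.
        scores = [scale * sum(a * b for a, b in zip(q_row, k_row)) for k_row in k]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        output.append(
            [sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))]
        )
    return output
```

Without the scaling, dot products grow with d_k, which pushes the softmax toward near one-hot outputs and very small gradients.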

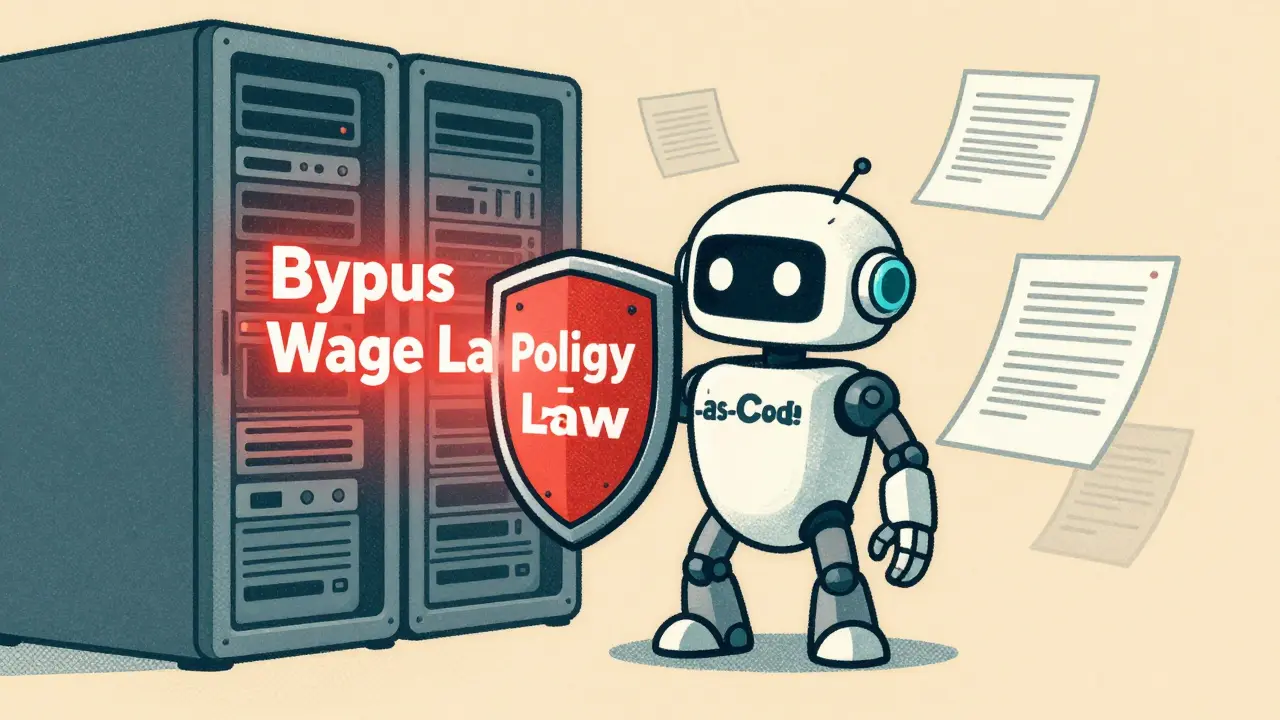

Ethical AI Agents for Code: How Guardrails Enforce Policy by Default

Ethical AI agents for code are designed to refuse illegal or unethical commands by default, using policy-as-code architecture to enforce compliance without human intervention. This approach is becoming the new standard for trustworthy AI in government, finance, and development.

Safety by Design in Generative AI: How to Embed Protections into Product Architecture

Safety by Design embeds child protection and harm prevention directly into generative AI architecture, from training data to real-time filtering. This isn't optional. It's the only way to build AI that doesn't become a weapon.

Data Augmentation for LLM Fine-Tuning: Synthetic and Human-in-the-Loop Approaches

Data augmentation boosts LLM fine-tuning by generating realistic training examples using synthetic methods and human feedback. Learn how synthetic data and human-in-the-loop approaches improve accuracy, reduce costs, and work with LoRA for efficient model adaptation.

Prompt Compression: How to Reduce Tokens Without Losing LLM Accuracy

Prompt compression cuts LLM token usage by up to 80% without losing accuracy, slashing costs and latency. Learn how techniques like LLMLingua work, where they excel, and how to implement them today.

Why Large Language Models Outperform Task-Specific Systems on Many NLP Tasks

Large language models outperform task-specific NLP systems on complex, context-heavy tasks due to their scale, architecture, and ability to generalize. But for simple, domain-specific tasks, traditional models still win on accuracy and efficiency.

Multi-Task Fine-Tuning for Large Language Models: One Model, Many Skills

Multi-task fine-tuning lets one large language model master multiple skills at once, outperforming single-task models with less compute. Learn how it works, why it’s beating GPT-4 on benchmarks, and how companies are using it in 2026.
