Category: Software Development - Page 3

SAST, DAST, and SCA for AI-Generated Code: Tools That Actually Catch Real Security Issues

SAST, DAST, and SCA tools must adapt to catch security flaws in AI-generated code. Learn how modern tools like Mend and Cycode now detect vulnerabilities in AI-written code, why traditional DAST is obsolete, and how to build a layered defense that actually works.

Curriculum Learning in NLP: How Ordering Data Makes Large Language Models Smarter

Curriculum learning in NLP improves large language models by training them on data ordered from simple to complex, cutting costs, speeding up training, and boosting accuracy on hard tasks. Learn how it works and where it shines.

Post-Training Calibration for Large Language Models: How Confidence and Abstention Improve Reliability

Post-training calibration ensures large language models express confidence accurately and know when to abstain. Learn how it works, why it matters, and how to apply it to improve reliability without retraining.

Batched Generation in LLM Serving: How Request Scheduling Impacts Outputs

Batched generation in LLM serving uses dynamic request scheduling to boost throughput by 3-5x. Learn how continuous batching, PagedAttention, and learning-to-rank algorithms make AI responses faster and cheaper, and why most systems still get it wrong.

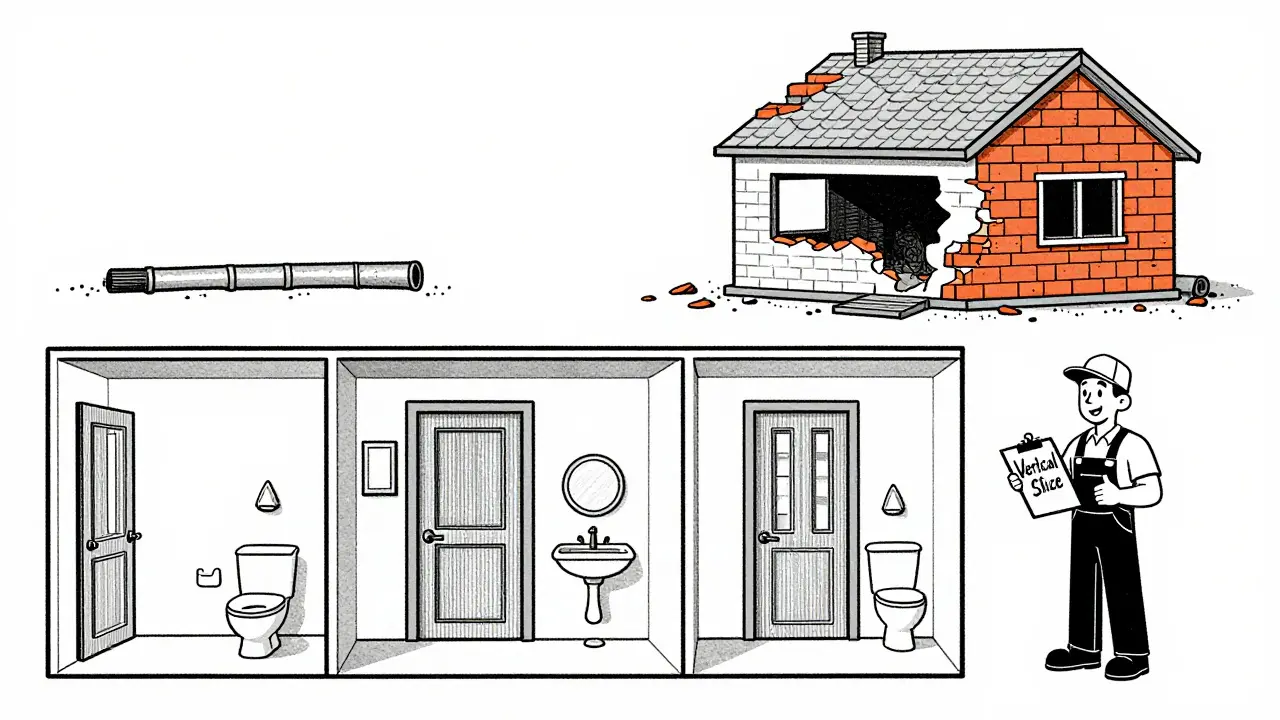

Scoping Prompts to Vertical Slices: End-to-End Over Feature Fragments

Vertical slicing delivers real user value by building end-to-end features instead of fragmented layers. Learn how this Agile method cuts development time by 40%, improves feedback, and transforms how teams ship software.

Replit: Cloud Development with AI Agents and One-Click Deploys for Vibe Coding

Replit transforms coding into a smooth, collaborative experience with cloud-based development, AI-powered agents, and one-click deploys. No setup required: just code in your browser. Perfect for startups, education, and teams wanting to ship faster. Learn how Replit’s AI handles 90% of foundational code and deploys apps instantly.

How to Use Vibe Coding for Frontend i18n and Localization

Learn how vibe coding speeds up frontend i18n setup using LLMs, and how proper prompting helps you avoid linguistic pitfalls. Real-world examples, best practices, and tools like i18next explained. Discover why hybrid approaches are becoming standard for global apps.

Style Guides for Prompts: Achieving Consistent Code Across Sessions

A coding style guide ensures consistent, readable code across teams and sessions. Learn how to build a practical, tool-driven guide that reduces review time, cuts bugs, and keeps developers sane, without overwhelming them with rules.

Post-Processing Validation for Generative AI: Rules, Regex, and Programmatic Checks to Stop Hallucinations

Post-processing validation stops generative AI hallucinations using rules, regex, and programmatic checks. Learn how to build a layered defense that catches lies before they reach users.

Quantization-Aware Training for LLMs: How to Keep Accuracy While Shrinking Model Size

Quantization-aware training lets you shrink large language models to 4-bit without losing accuracy. Learn how it works, why it beats traditional methods, and how to use it in 2026.

Memory Planning to Avoid OOM in Large Language Model Inference

Learn how memory planning techniques like CAMELoT and Dynamic Memory Sparsification reduce OOM errors in LLM inference without sacrificing accuracy, enabling larger models to run on standard hardware.

Open Source in the Vibe Coding Era: How Community Models Are Shaping AI-Powered Development

Open-source AI models are reshaping software development through community-driven fine-tuning, offering customization and control that closed-source models can't match, especially in privacy-sensitive and legacy code environments.
