What if you could train one language model to do ten different jobs, such as analyzing financial reports, summarizing legal contracts, detecting sentiment in tweets, answering medical questions, writing code, and translating languages, all without needing ten separate models? That’s not science fiction. It’s what multi-task fine-tuning does today, and it’s changing how companies build smarter AI systems.

For years, the default approach was simple: train one model per task. Need a model that reads financial statements? Train it on financial data. Need one that understands medical jargon? Train another. But this method is expensive, slow, and wasteful. Each model needs its own set of parameters, its own training time, its own storage, and its own maintenance. Multi-task fine-tuning flips that approach on its head. Instead of building ten models, you build one, trained on ten tasks at once.

How Multi-Task Fine-Tuning Works

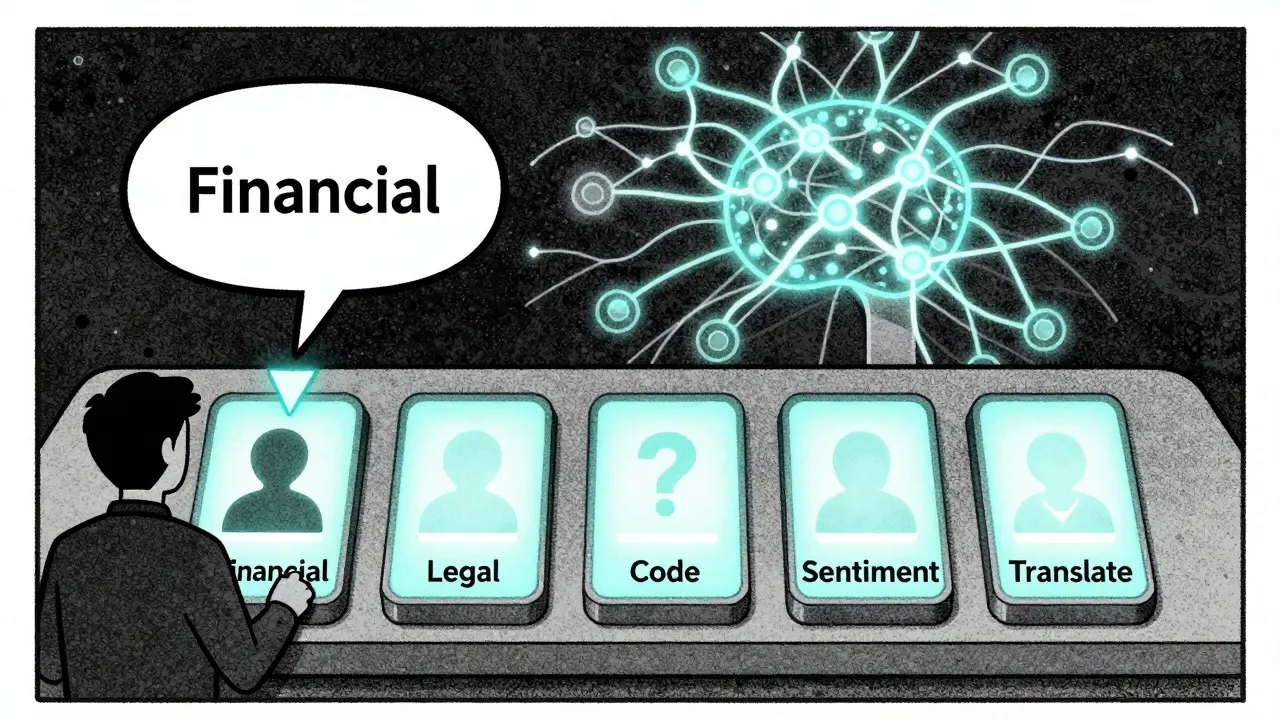

At its core, multi-task fine-tuning takes a large pre-trained language model (such as Phi-3-Mini, Llama 3, or Mistral) and fine-tunes it on multiple datasets simultaneously. Each dataset represents a different task: one might be financial question answering, another could be legal clause classification, and a third might be social media sentiment analysis. The model learns to switch between these tasks, gradually building a richer understanding of language patterns that overlap across domains.
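To make "multiple datasets simultaneously" concrete, here is a minimal sketch of one way to merge several task datasets into a single shuffled training stream. The task names, the toy examples, and the `[task]` prompt tag are all illustrative assumptions, not a format prescribed by any particular framework.

```python
import random

# Hypothetical toy datasets: each task maps to (input, target) pairs.
TASKS = {
    "financial_qa": [("What was Q2 revenue?", "$4.1M"), ("Did margins rise?", "yes")],
    "legal_clause": [("The tenant shall pay rent monthly.", "payment_obligation")],
    "sentiment": [("Loving the new phone!", "positive"), ("Worst service ever.", "negative")],
}

def build_mixed_stream(tasks, seed=0):
    """Flatten all task datasets into one shuffled list of tagged examples."""
    examples = []
    for task_name, pairs in tasks.items():
        for text, target in pairs:
            # Assumed convention: a task tag in the prompt tells the model which job to do.
            prompt = f"[{task_name}] {text}"
            examples.append({"task": task_name, "prompt": prompt, "target": target})
    # Shuffle so that consecutive training steps see different tasks.
    random.Random(seed).shuffle(examples)
    return examples

stream = build_mixed_stream(TASKS)
for ex in stream:
    print(ex["prompt"], "->", ex["target"])
```

Plain shuffling like this samples tasks in proportion to their size, so in practice it is usually paired with a sampling strategy that protects small tasks.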

This isn’t just training on more data. It’s training on related data. When you train a model on both financial reporting and stock market news, it starts to notice that phrases like "revenue growth" and "quarterly earnings" often appear together. That connection helps it perform better on both tasks. Researchers call this the "cocktail effect," where combining tasks creates a performance boost greater than the sum of its parts.

One of the biggest breakthroughs came in 2024 with Mixture of Adapters (MoA), a parameter-efficient fine-tuning architecture that uses lightweight adapter modules with dynamic routing to select task-specific expert pathways within a single LLM. MoA doesn’t retrain the whole model. Instead, it adds tiny, task-specific "adapters": small neural networks that plug into the original model’s layers. When a user asks a question, the system routes it to the most relevant adapter. Need to analyze a balance sheet? The router sends it to the financial adapter. Need to summarize a patient note? It picks the medical one. This keeps the base model light and efficient.
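The adapter-plus-router idea can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the MoA implementation: the class names `Adapter` and `RoutedAdapters` are hypothetical, the weights are random rather than learned, and the router simply picks the single highest-scoring adapter.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def random_matrix(rows, cols, rng, scale=0.1):
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

class Adapter:
    """Bottleneck adapter: down-project, ReLU, up-project, add residual."""
    def __init__(self, hidden, bottleneck, rng):
        self.down = random_matrix(bottleneck, hidden, rng)
        self.up = random_matrix(hidden, bottleneck, rng)

    def __call__(self, h):
        z = [max(0.0, v) for v in matvec(self.down, h)]
        delta = matvec(self.up, z)
        # Residual connection: the frozen model's hidden state passes through.
        return [a + b for a, b in zip(h, delta)]

class RoutedAdapters:
    """Hypothetical layer: one adapter per task plus a linear router."""
    def __init__(self, task_names, hidden=8, bottleneck=2, seed=0):
        rng = random.Random(seed)
        self.task_names = list(task_names)
        self.adapters = {n: Adapter(hidden, bottleneck, rng) for n in self.task_names}
        # One router row per adapter; random here, learned in a real system.
        self.router = random_matrix(len(self.task_names), hidden, rng, scale=1.0)

    def route(self, h):
        scores = matvec(self.router, h)
        best = max(range(len(scores)), key=scores.__getitem__)
        return self.task_names[best]

    def forward(self, h):
        name = self.route(h)
        return name, self.adapters[name](h)

layer = RoutedAdapters(["financial", "medical", "legal"])
h = [0.5] * 8  # stand-in for a hidden state from the frozen base model
name, out = layer.forward(h)
print("routed to:", name)
```

Each adapter here holds only 2 x 8 x 2 = 32 weights, which is the point of the design: the base model stays frozen and shared, and only the small per-task modules and the router are trained.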

Why It Beats Single-Task Fine-Tuning

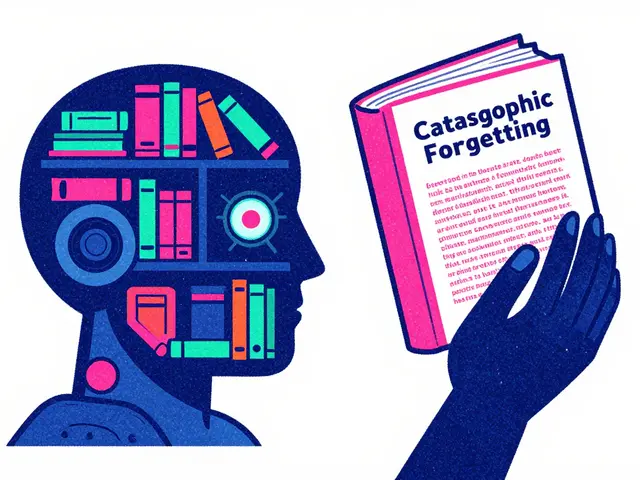

Single-task fine-tuning works well, but it has limits. A model trained only on financial QA might become too specialized. It loses the ability to generalize. Multi-task fine-tuning fixes this by forcing the model to learn common patterns across domains.

Here’s what the numbers show:

- Models fine-tuned with multi-task learning outperformed single-task models by 5.2% to 18.4% across financial benchmarks, according to research published in arXiv:2410.01109v1.

- On tasks like headline sentiment analysis and Twitter sentiment detection, gains hit 18.4%, because those tasks benefit from shared linguistic cues.

- A 3.8B parameter Phi-3-Mini model, when multi-task fine-tuned, surpassed GPT-4o on several domain-specific benchmarks.

And the efficiency? Massive. Stanford’s 2023 study found that training a single BERT model on three tasks used just 110 million shared parameters. If you’d built three separate models, you’d need 330 million. That’s a 67% reduction in parameters, and a huge drop in compute cost.
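The arithmetic behind that figure is easy to check. The helper below is a small illustration (the function name is ours), and it assumes the small task-specific output heads are negligible next to the shared encoder.

```python
def shared_vs_separate(params_per_model, num_tasks):
    """Compare one shared model against one full model per task."""
    separate = params_per_model * num_tasks
    shared = params_per_model
    reduction = 1 - shared / separate
    return separate, shared, reduction

separate, shared, reduction = shared_vs_separate(110_000_000, 3)
print(f"{separate:,} vs {shared:,} -> {reduction:.1%} fewer parameters")
# prints: 330,000,000 vs 110,000,000 -> 66.7% fewer parameters
```

The saving grows with the number of tasks: with ten tasks sharing one model, the same calculation gives a 90% reduction.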

The Hidden Cost: Task Interference and Poor Choices

Multi-task fine-tuning isn’t magic. If you mix the wrong tasks, performance can drop. Training a model on medical records and movie reviews at the same time? That’s a mess. The model gets confused. It starts overfitting to the bigger datasets and forgets the smaller ones.

This is called task interference. The solution? Careful task selection. The best results come from grouping tasks that share linguistic features. Financial tasks work well together because they all use formal language, numerical reasoning, and structured formats. Legal, medical, and technical writing also form natural clusters.

Another problem is data imbalance. If one task has 10,000 examples and another has only 100, the model will tend to ignore the small one. Researchers use anneal sampling to fix this. Instead of cycling through tasks in a fixed order (round-robin), anneal sampling gradually adjusts how often each task appears during training. In a common formulation, tasks are sampled roughly in proportion to their dataset size early on, and the sampling flattens toward an even split later, so the smaller tasks get a growing share of updates. This helps the model learn everything without forgetting the underdogs.
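Here is a minimal sketch of anneal sampling. It assumes one published formulation in which a task with N examples is sampled with probability proportional to N^alpha, and alpha shrinks linearly from 1.0 toward 0.2 over the epochs. The start and end values and the function names are illustrative, and other schedules are possible.

```python
import random

def task_probabilities(sizes, alpha):
    """Sampling probability per task, proportional to dataset_size ** alpha."""
    weights = {task: n ** alpha for task, n in sizes.items()}
    total = sum(weights.values())
    return {task: w / total for task, w in weights.items()}

def annealed_alpha(epoch, num_epochs, start=1.0, end=0.2):
    """Linearly move alpha from `start` (epoch 1) to `end` (last epoch)."""
    if num_epochs <= 1:
        return end
    frac = (epoch - 1) / (num_epochs - 1)
    return start + (end - start) * frac

def sample_task(probs, rng):
    """Draw the task for the next training batch."""
    names = list(probs)
    return rng.choices(names, weights=[probs[n] for n in names], k=1)[0]

sizes = {"big_task": 10_000, "small_task": 100}
rng = random.Random(0)
history = []
for epoch in range(1, 6):
    probs = task_probabilities(sizes, annealed_alpha(epoch, 5))
    history.append(probs["small_task"])
    print(f"epoch {epoch}: P(small_task) = {probs['small_task']:.3f}, "
          f"next batch from {sample_task(probs, rng)}")
```

With alpha at 1.0 the small task is drawn about 1% of the time; as alpha falls to 0.2 its share rises to roughly 28%, without ever fully equalizing the two tasks.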

How to Implement It Right

If you’re trying this yourself, here’s what actually works:

- Start with a pre-trained model: Phi-3-Mini, Llama 3, or Mistral are good choices. Avoid huge models unless you have serious compute.

- Pick 3-6 related tasks. Financial QA + sentiment + classification. Legal summarization + clause extraction + contract classification. Don’t go overboard.

- Use LoRA or MoA. Full fine-tuning is overkill. Low-Rank Adaptation (LoRA) trains only a small set of extra parameters, typically a tiny fraction of the base model’s size. MoA adds routing on top.

- Use anneal sampling. Don’t just alternate tasks. Let the model adjust its focus over time.

- Include general instruction data. Throw in some open-ended prompts like "Explain this in simple terms" to prevent overfitting.

- Set hyperparameters wisely: learning rate between 2e-5 and 5e-5, batch size 16-64, epochs between 3 and 10, weight decay 0.01-0.1.
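To get a feel for how small the LoRA footprint in the list above is, the sketch below counts the extra trainable parameters. For a weight matrix of shape d_out x d_in, LoRA learns two low-rank factors of shape d_out x r and r x d_in, which adds r * (d_out + d_in) parameters. The layer count, matrix shapes, and rank used here are hypothetical round numbers for a model of roughly Phi-3-Mini scale, not the configuration of any specific checkpoint.

```python
def lora_extra_params(d_out, d_in, rank):
    """Parameters in the two low-rank factors B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)

def lora_total(num_layers, matrices_per_layer, d_model, rank):
    """Total LoRA parameters when adapting square d_model x d_model matrices."""
    per_matrix = lora_extra_params(d_model, d_model, rank)
    return num_layers * matrices_per_layer * per_matrix

# Assumed setup: 32 layers, 2 adapted projections per layer, width 3072, rank 8.
extra = lora_total(num_layers=32, matrices_per_layer=2, d_model=3072, rank=8)
base = 3_800_000_000  # approximate size of a 3.8B-parameter base model
print(f"{extra:,} trainable LoRA parameters ({extra / base:.3%} of the base model)")
```

Under these assumptions the adapter comes to about 3.1 million parameters, well under a tenth of a percent of the base model. Doubling the rank or adapting more matrices scales the count linearly.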

One team at a hedge fund trained a model on five financial tasks using MoA. After 7 days of training, it beat five separate single-task models on all benchmarks and used 80% less memory. They now run it on a single GPU.

Real-World Adoption

This isn’t just academic. As of Q3 2024, 17 of the top 50 global banks were testing multi-task fine-tuning for financial analysis. Google Cloud added it to Vertex AI in December 2024, and it’ll be generally available in March 2025. DataCamp reports that 35% of their enterprise clients now use multi-task fine-tuning, up from 8% in early 2023.

Companies are realizing: why pay for five models when one can do it all? The cost savings are real. The performance gains are measurable. And with tools like FinMix, an open-source framework due in Q1 2025 that aims to simplify financial-domain multi-task fine-tuning with pre-optimized task combinations, even smaller teams can get started.

What’s Next?

The next frontier? Dynamic routing. Right now, MoA picks adapters based on the input. But what if the model could adjust its routing in real time based on context? For example, if a user asks a question about "Apple," is that the company, the fruit, or the tech stock? A future system might detect ambiguity and activate multiple adapters simultaneously.
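One way such a system could activate several adapters at once is soft top-k routing: turn router scores into probabilities, keep the strongest few, and blend their outputs. The sketch below is speculative and purely illustrative. The adapter names, scores, and the `min_weight` cutoff are assumptions, not part of any released system.

```python
import math

def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores.values())
    exps = {k: math.exp(v - peak) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def select_adapters(scores, top_k=2, min_weight=0.2):
    """Keep up to top_k adapters whose probability clears min_weight."""
    probs = softmax(scores)
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    # Always keep at least the single best adapter.
    kept = [(n, p) for n, p in ranked if p >= min_weight] or ranked[:1]
    total = sum(p for _, p in kept)
    return {n: p / total for n, p in kept}

def mix_outputs(adapter_outputs, weights):
    """Weighted sum of the selected adapters' output vectors."""
    dim = len(next(iter(adapter_outputs.values())))
    mixed = [0.0] * dim
    for name, w in weights.items():
        for i, v in enumerate(adapter_outputs[name]):
            mixed[i] += w * v
    return mixed

# Ambiguous "Apple" query: two adapters score similarly, so both stay active.
ambiguous = select_adapters({"company_finance": 2.0, "food": 1.8, "legal": -1.0})
# Clear-cut query: one adapter dominates, so routing collapses to a single choice.
clear = select_adapters({"company_finance": 5.0, "food": 0.0, "legal": 0.0})
print(ambiguous)
print(clear)

outputs = {"company_finance": [1.0, 0.0], "food": [0.0, 1.0], "legal": [0.5, 0.5]}
print(mix_outputs(outputs, ambiguous))
```

The threshold is what lets the same router behave like hard routing on clear inputs and like a blend on ambiguous ones.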

Experts are also exploring hybrid models: multi-task fine-tuning + external knowledge bases + retrieval-augmented generation. The goal? To go beyond pattern recognition and build true domain understanding.

But there’s a warning: ethics matter. Dr. Emily Bender from the University of Washington points out that if you combine biased datasets (say, legal texts from one jurisdiction and financial reports from another), you might accidentally reinforce harmful patterns. Always audit your data. Check for skewed representations. Test for fairness.

Final Thoughts

Multi-task fine-tuning isn’t about making models bigger. It’s about making them smarter with less. By training one model on multiple related tasks, you unlock synergy. You reduce cost. You simplify deployment. You create systems that don’t just answer questions; they understand context.

The old way was: one model, one job. The new way? One model, many skills. And it’s already here.

Can multi-task fine-tuning work with small models?

Yes, and sometimes better than with large ones. A 3.8B parameter Phi-3-Mini model, when multi-task fine-tuned on financial tasks, outperformed GPT-4o on several benchmarks. Smaller models benefit more from shared knowledge because they’re less likely to overfit. The key is using parameter-efficient methods like LoRA or MoA to avoid overloading them.

What’s the difference between multi-task fine-tuning and prompt engineering?

Prompt engineering changes how you ask questions. Multi-task fine-tuning changes what the model knows. Prompts can help a model guess the right answer once. But if you fine-tune it on 10 financial tasks, it learns to recognize financial patterns permanently. Prompts are temporary; fine-tuning is permanent. For repeatable, production-grade systems, fine-tuning wins.

Do I need a lot of data for each task?

Not necessarily. Multi-task fine-tuning thrives on related data, not huge amounts. A task with 200 examples can still help if it shares linguistic features with others. Routing in MoA and anneal sampling during training both help with imbalance. You don’t need 10,000 examples per task, just enough to teach the model the pattern.

Can I use this for non-financial tasks?

Absolutely. The same principles apply to legal, medical, technical, or customer support tasks. For example, combining contract summarization, clause extraction, and legal QA creates a powerful legal assistant. The trick is grouping tasks that use similar language structures. Don’t mix poetry analysis with tax code parsing; stick to domains with shared patterns.

Is multi-task fine-tuning better than using multiple specialized models?

For most real-world use cases, yes. Multiple models mean more storage, more inference latency, more monitoring, and more maintenance. One multi-task model reduces all that. Plus, it often performs better due to the cocktail effect. The only exception? If tasks are completely unrelated or require extreme specialization (like real-time video analysis vs. text summarization), separate models might still be better.

What tools do I need to start?

You need PyTorch or TensorFlow, Hugging Face Transformers, and libraries like PEFT (Parameter-Efficient Fine-Tuning) for LoRA. For MoA-style routing, wait for FinMix (coming Q1 2025) or use the open-source adapter libraries from Stanford’s CS224N project. Start with a small model like Phi-3-Mini and 3-5 related tasks. You don’t need a supercomputer, just a single GPU and patience.