Business Technology AI Scripts: Deploy LLMs, Cut Costs, and Stay Compliant

When you build AI into your business tech, you're not just writing code. You're managing large language models (LLMs): AI systems that process and generate human-like text based on massive datasets. They power chatbots, automate reports, and even guide field technicians, but only if you handle them right. Most companies fail because they treat LLMs like regular software. They spin up a model, throw in some prompts, and hope for the best. Then the bills spike, the legal team panics, and the engineers are stuck debugging a black box that costs $2,000 a day to run.

That’s where smart business tech comes in. It’s not about having the fanciest model. It’s about knowing how cloud cost optimization works: strategies like autoscaling and spot instances that reduce AI infrastructure expenses without losing performance. It’s about understanding how AI compliance affects your bottom line: the rules around data use, export controls, and state-level laws that govern how AI models are trained and deployed. And it’s about using LLM autoscaling, automated systems that adjust computing power based on real-time demand, so you’re not paying for idle GPUs during slow hours. These aren’t theoretical ideas. They’re the difference between a prototype that dies and a tool that makes your team 10x more efficient.
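
As a rough illustration of the autoscaling idea, here is a minimal Python sketch of the core decision: how many GPU replicas to run for the current load. The function name `replicas_needed`, the per-replica capacity figure, and the replica limits are all hypothetical and not taken from any particular cloud provider's API. A real autoscaler would also smooth the demand signal and account for startup time.

```python
import math


def replicas_needed(requests_per_second: float,
                    capacity_per_replica: float,
                    min_replicas: int = 0,
                    max_replicas: int = 8) -> int:
    """Return how many replicas to run for the current demand.

    capacity_per_replica is an assumed figure: the requests per second
    one GPU replica can serve. Measure it for your own model.
    """
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    # Round up so demand is always covered, then clamp to the allowed range.
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


# Hypothetical load levels: a quiet hour and a busy hour.
quiet = replicas_needed(0, capacity_per_replica=5)
busy = replicas_needed(12, capacity_per_replica=5)
```

With `min_replicas=0`, the quiet hour scales down to zero replicas, which is the "not paying for idle GPUs" case. Setting `min_replicas=1` trades some idle cost for faster response to the first request.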

You’ll find posts here that show you exactly how to fix the biggest headaches in business AI: why your LLM bill jumps when users ask long questions, how California’s new law forces you to track training data, how spot instances can slash your cloud costs by 60%, and how field service teams use AI to cut repair times in half. No fluff. No buzzwords. Just real strategies used by teams running AI in production—where mistakes cost money, time, and trust.
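
To make the first of those headaches concrete: hosted LLM APIs typically charge per token for both input and output, so a longer question costs more even when the answer is the same length. The sketch below assumes that pricing model. The function name `request_cost` and the per-1,000-token prices are hypothetical placeholders, so substitute your provider's actual rates.

```python
def request_cost(input_tokens: int,
                 output_tokens: int,
                 price_in_per_1k: float,
                 price_out_per_1k: float) -> float:
    """Estimate the cost of one request under per-token pricing."""
    input_cost = input_tokens / 1000 * price_in_per_1k
    output_cost = output_tokens / 1000 * price_out_per_1k
    return input_cost + output_cost


# Hypothetical prices in dollars per 1,000 tokens (not real vendor rates).
PRICE_IN = 0.003
PRICE_OUT = 0.015

# Same 300-token answer, but a 200-token question vs. a 4,000-token question.
short_question = request_cost(200, 300, PRICE_IN, PRICE_OUT)
long_question = request_cost(4000, 300, PRICE_IN, PRICE_OUT)

print(f"short question: ${short_question:.4f}")
print(f"long question:  ${long_question:.4f}")
```

Under these assumed prices the long question costs roughly three times as much per request, and that difference is multiplied by every request your users send.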

What follows isn’t a list of tools. It’s a roadmap. A way to move from guessing what your AI will do next to knowing exactly how it behaves, how much it costs, and whether you’re breaking any rules. If you’re building, managing, or paying for AI in your business, this is where you start.

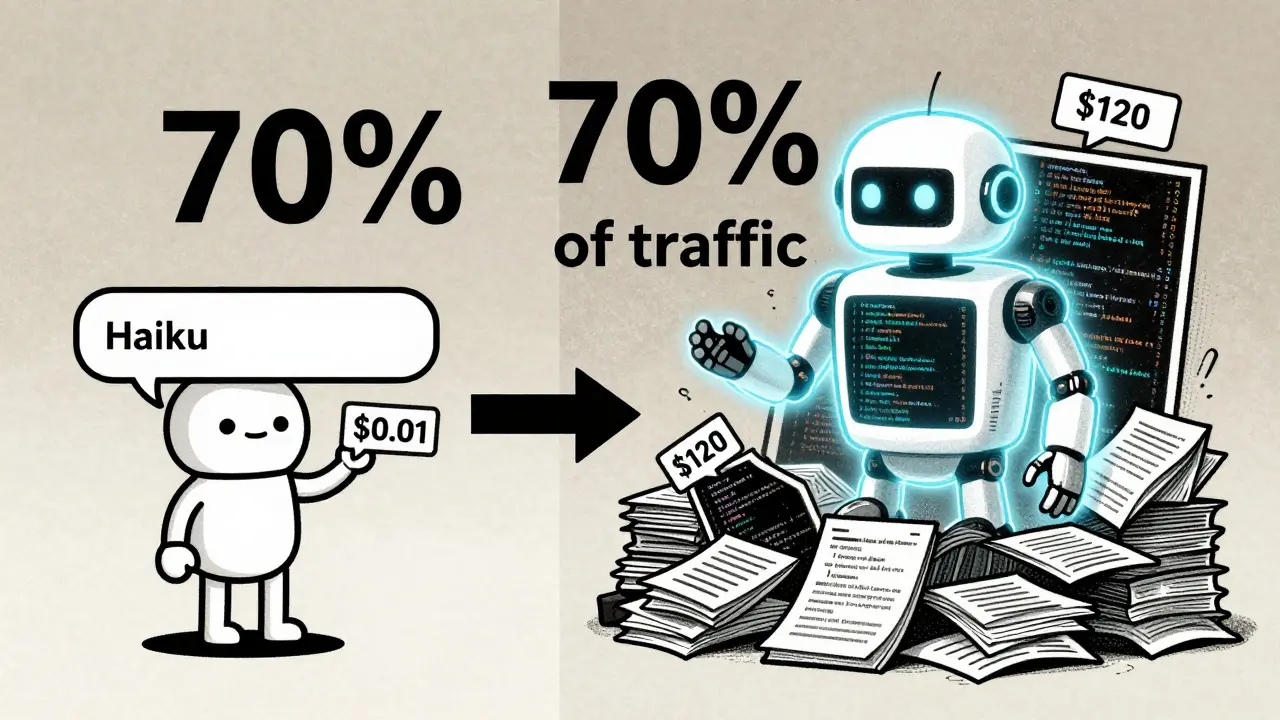

How to Lower LLM Costs: Prompt Length, Batching, and Caching Strategies

Learn how to slash LLM costs by up to 80% using prompt optimization, batching, and semantic caching. A practical guide to reducing token spend without losing quality.

Colorado SB24-205 Guide: AI Impact Assessments and Risk Management

A practical guide to Colorado SB24-205. Learn how to handle AI impact assessments, risk management, and compliance for high-risk AI systems in Colorado.

Cybersecurity and Generative AI: Threat Reports, Playbooks, and Simulations

Explore the intersection of Generative AI and cybersecurity in 2026. Learn about AI-powered threats, agentic AI risks, and how to use AI-driven playbooks for defense.

Autonomous Ticket Resolution: Scaling IT Support with Domain-Specific LLM Agents

Learn how domain-specific LLM agents are transforming IT support by automating ticket resolution, reducing redundancies, and improving routing accuracy to 95%.

Next-Gen Generative AI Hardware: The 2026 Guide to Accelerators, Memory, and Networking

Explore the 2026 landscape of Generative AI hardware, from NVIDIA's Rubin and AMD's Helios to HBM4 memory and TSMC's 1.6nm A16 process.

How to Use Multimodal Generative AI for Cohesive Cross-Channel Marketing Campaigns

Learn how to use multimodal generative AI to create cohesive, high-converting marketing campaigns across all channels while maintaining brand consistency.

Generative AI for Healthcare Providers: Notes, Authorizations, and Care Plans 2026

Explore how healthcare providers are leveraging generative AI to automate note drafting, streamline prior authorizations, and optimize patient care plans while managing costs and compliance.

Agentic Generative AI: Mastering Autonomous Planning and Workflow Execution in 2026

Explore Agentic Generative AI, the shift from reactive chatbots to autonomous workflow execution. Learn how it works, real-world use cases, and implementation challenges in 2026.

Estimating Monthly Costs for a Production Large Language Model Application

Estimating monthly costs for a production LLM application requires understanding infrastructure, model routing, and development expenses, not just API pricing. In 2026, smart architecture cuts costs by 90% compared to brute-force approaches.

Enterprise Integration of Vibe Coding: Embedding AI into Existing Toolchains

Enterprise vibe coding embeds AI into development workflows, cutting time-to-value by up to 40% while maintaining security. Learn how companies like ServiceNow and Salesforce are using it to build internal tools faster, with guardrails that prevent chaos.

Internal Marketplaces for Vibe-Coded Components and Services

Internal marketplaces for vibe-coded components let non-engineers build and share AI-generated tools safely. With proper governance, companies save hundreds of hours, reduce shadow IT, and turn every employee into a creator.

Legal Services and Generative AI: Automate Documents, Review Contracts, and Manage Knowledge

Generative AI is transforming legal services by automating document creation, speeding up contract review, and unlocking instant access to legal knowledge. Firms using these tools save hundreds of hours per lawyer annually while improving accuracy and client trust.
