The race to build the most powerful AI isn't just about who has the best code; it's about who has the most silicon. We've reached a point where software is outstripping the physical hardware it runs on, leading to what some call "computation inflation." To keep up with massive models, the industry is moving away from general-purpose chips toward a hyper-specialized stack of accelerators, memory, and networking that can handle trillions of parameters without melting the power grid. If you're planning infrastructure for 2026, the game has shifted from simply buying more GPUs to optimizing the entire data path.

| Provider | Flagship 2026 Tech | Key Attribute | Primary Focus |
|---|---|---|---|
| NVIDIA | Rubin Platform | HBM4 Integration | Full-stack Training/Inference |
| AMD | Helios (MI400/MI450) | 19.6 TB/s Bandwidth | Enterprise Inference |
| Microsoft | Maia 200 | FP4 Tensor Cores | Token Generation Economics |
| AWS | Trainium3 | 3nm TSMC Process | Energy-Efficient Training |

The New Era of AI Accelerators

Generative AI hardware is the physical computational layer: specialized processors designed to execute the massive matrix multiplications that deep learning models require. The H100 was the gold standard for a while, but 2026 is all about the transition to native 4-bit and 8-bit precision to squeeze more performance out of every watt.
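To see why lower precision matters, here is a rough back-of-the-envelope sketch of weight storage at different bit widths. The one-trillion-parameter model is a hypothetical example, and the calculation ignores scale factors, activations, and other overhead.

```python
# Bits per parameter for a few common numeric formats.
BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}


def weight_footprint_gb(num_params: float, bits: int) -> float:
    """Weight storage in gigabytes (10^9 bytes), ignoring scales and metadata."""
    return num_params * bits / 8 / 1e9


# Hypothetical one-trillion-parameter model (an assumption for illustration).
params = 1e12
for name, bits in BITS_PER_PARAM.items():
    print(f"{name}: {weight_footprint_gb(params, bits):,.0f} GB of weights")
```

Halving the bit width halves the bytes that must be stored and moved, which is where most of the performance-per-watt gain comes from.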

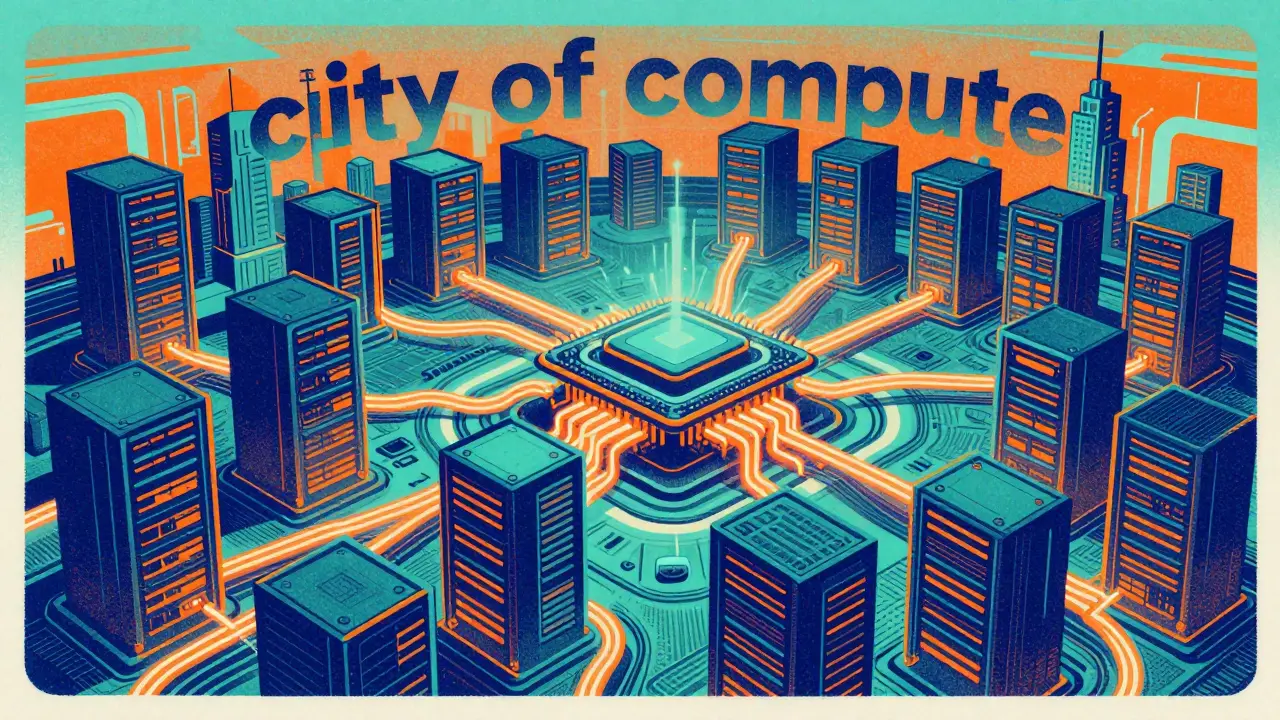

NVIDIA is doubling down with its Rubin AI platform. This isn't just a speed bump; it's a response to the sheer scale of models being deployed. When you see OpenAI committing to 10 gigawatts of power for their systems, you realize we aren't talking about "servers" anymore, but essentially small cities of compute. Rubin focuses on integrating the latest memory standards to prevent the processor from idling while waiting for data.

Meanwhile, AMD is attacking the inference bottleneck with the Helios systems (MI400/MI450). They've realized that for many businesses, the cost of running a model (inference) is more critical than the cost of training it. By pushing bandwidth to nearly 20 TB/s, Helios aims to make real-time AI feel instantaneous, removing the "stutter" often seen in complex generative tasks.
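A simple way to see why bandwidth dominates inference: when generating one token at a time, the accelerator has to read roughly all of the model's weights for each token. The sketch below estimates the resulting ceiling. It assumes the 19.6 TB/s figure is memory bandwidth on a single accelerator, and the 70-billion-parameter model is hypothetical; real throughput will be lower once KV-cache reads and other overheads are counted.

```python
def decode_tokens_per_second(bandwidth_tb_s: float, weight_bytes: float) -> float:
    """Upper bound on single-stream decode rate if each token reads all weights once."""
    return bandwidth_tb_s * 1e12 / weight_bytes


# Hypothetical 70B-parameter dense model (an assumption, not from the article).
params = 70e9
bandwidth_tb_s = 19.6  # figure quoted for Helios; assumed to be per accelerator

for name, bytes_per_param in {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}.items():
    ceiling = decode_tokens_per_second(bandwidth_tb_s, params * bytes_per_param)
    print(f"{name}: at most ~{ceiling:,.0f} tokens/s per stream")
```

Batching many requests together raises total throughput, because one pass over the weights then serves several users at once.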

The hyperscalers (AWS, Google, and Microsoft) are no longer just customers; they are competitors. Microsoft's Maia 200 is a prime example of "vertical integration." Instead of paying the NVIDIA tax, they built a chip specifically for token generation using FP4 precision. This allows them to generate text faster and cheaper, which is the only way to make consumer-facing AI chatbots profitable at scale.

Breaking the Memory Wall with HBM4

If the accelerator is the engine, memory is the fuel line. The biggest problem in AI today isn't raw compute power; it's the "memory wall." The processor can calculate results faster than the memory can feed it data. To fix this, the industry is moving to HBM4 (High-Bandwidth Memory generation 4).
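The memory wall can be made concrete with the roofline model: attainable performance is the lower of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs performed per byte moved). The peak compute and bandwidth numbers below are hypothetical placeholders, not specifications of any chip named here.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline model: capped by compute or by memory traffic, whichever is lower."""
    return min(peak_tflops, bandwidth_tb_s * flops_per_byte)


def ridge_point(peak_tflops: float, bandwidth_tb_s: float) -> float:
    """Arithmetic intensity (FLOPs/byte) above which a kernel becomes compute-bound."""
    return peak_tflops / bandwidth_tb_s


# Hypothetical accelerator: 1000 TFLOPS peak, 8 TB/s memory bandwidth.
PEAK, BW = 1000.0, 8.0
print(f"ridge point: {ridge_point(PEAK, BW):.0f} FLOPs/byte")
for intensity in (1, 50, 500):
    perf = attainable_tflops(PEAK, BW, intensity)
    bound = "memory-bound" if perf < PEAK else "compute-bound"
    print(f"{intensity:>4} FLOPs/byte -> {perf:,.0f} TFLOPS ({bound})")
```

Workloads that sit left of the ridge point leave compute units idle, which is why adding bandwidth can help more than adding TFLOPS.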

HBM4 allows for much denser stacking of memory layers directly on top of or next to the GPU/NPU. For the Rubin and Helios platforms, HBM4 is the secret sauce that allows them to handle massive context windows, which you can think of as the AI's short-term memory. Without enough fast memory, the system cannot hold a long document in context at once, so the AI effectively "forgets" the beginning by the time it reaches the end.
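To get a feel for how much memory a long context window consumes, the sketch below estimates the size of the key/value cache a transformer keeps for each token. The layer count, head count, and head dimension describe a hypothetical model, and the formula assumes a standard attention layout with 16-bit cache entries.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int, seq_len: int,
                   bytes_per_value: int = 2, batch: int = 1) -> int:
    """Bytes needed to cache keys and values for seq_len tokens."""
    # Factor of 2: one key tensor and one value tensor per layer.
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value * batch


# Hypothetical model shape (assumed for illustration only).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

for tokens in (8_192, 131_072, 1_000_000):
    gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:,.1f} GiB of KV cache per sequence")
```

The cache grows linearly with context length and with the number of concurrent users, so capacity and bandwidth both become limits long before compute does.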

However, getting your hands on this tech is a nightmare. SK Hynix has a virtual stranglehold on production, and supply is tight. This is why we see some players, like Microsoft with the Maia 200, sticking to HBM3e for now. They've optimized the chip to work with slightly older memory while they wait for the HBM4 supply chain to stabilize.

The Backbone: Networking and Interconnects

You can't just plug 6,000 accelerators into a standard switch and expect them to work. At this scale, the network is the computer. Traditional Ethernet was too slow, leading to the rise of proprietary fabrics. But the trend in 2026 is moving back toward specialized versions of standard protocols to lower costs.

Microsoft is challenging the status quo with a two-tier scale-up network. Instead of using a proprietary black-box system, they've built a design on standard Ethernet that still provides 2.8 TB/s of bidirectional bandwidth. This allows them to cluster up to 6,144 accelerators while keeping the hardware predictable and easier to repair.
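As a rough illustration of why link bandwidth matters at this scale, the sketch below uses the textbook bandwidth-only cost of a ring all-reduce, in which each device sends and receives about 2(N-1)/N times the payload. It assumes the 2.8 TB/s figure applies per accelerator and splits evenly into 1.4 TB/s each way; the 10 GB payload is hypothetical. It ignores latency, topology, and congestion, and says nothing about how Microsoft's fabric actually schedules collectives.

```python
def ring_all_reduce_seconds(num_devices: int, payload_bytes: float,
                            link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate for a ring all-reduce (latency ignored)."""
    if num_devices < 2:
        return 0.0
    traffic = 2 * (num_devices - 1) / num_devices * payload_bytes
    return traffic / link_bytes_per_s


# Assumptions: 1.4 TB/s usable per direction, 10 GB of gradients to synchronize.
LINK = 1.4e12
PAYLOAD = 10e9
for n in (8, 512, 6144):
    ms = ring_all_reduce_seconds(n, PAYLOAD, LINK) * 1e3
    print(f"{n:>5} accelerators -> ~{ms:.1f} ms per all-reduce")
```

The bandwidth term barely changes with cluster size, so at thousands of devices the per-hop latency that this sketch leaves out is what a two-tier design has to keep under control.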

NVIDIA's approach is more ecosystem-driven. Their MGX program allows companies like Dell and HPE to build custom server chassis around NVIDIA's chips. This means the networking is tightly integrated into the motherboard and the rack, so that data moving from one chip to the next takes nanoseconds, not milliseconds.

The Silicon Foundation: TSMC's 1.6nm Leap

None of this happens without the foundry. TSMC is essentially the landlord of the AI revolution. In 2026, the shift to the A16 process (1.6nm-class) is the most important technical milestone. This isn't just about making transistors smaller; it's about how they are powered.

The A16 process introduces backside power rails. In simple terms, they've moved the power delivery to the bottom of the wafer, leaving the top for data signals. This reduces interference and allows for a 15-20% drop in power consumption at the same speed. For a datacenter using 10GW of power, a 20% efficiency gain isn't just "green"; it is potentially worth billions of dollars in electricity and cooling over the life of the facility.
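The arithmetic behind that savings claim can be sketched as follows. The electricity price is a hypothetical assumption, and the calculation optimistically applies the chip-level gain to the entire facility draw, so treat the result as an upper bound rather than a forecast.

```python
HOURS_PER_YEAR = 8760


def annual_savings_usd(facility_gw: float, efficiency_gain: float,
                       usd_per_kwh: float) -> float:
    """Yearly electricity savings if the whole facility draw shrinks by efficiency_gain."""
    kwh_per_year = facility_gw * 1e6 * HOURS_PER_YEAR  # 1 GW = 1e6 kW
    return kwh_per_year * efficiency_gain * usd_per_kwh


# 10 GW and 20% come from the text; $0.08/kWh is an assumed price.
savings = annual_savings_usd(facility_gw=10, efficiency_gain=0.20, usd_per_kwh=0.08)
print(f"~${savings / 1e9:.2f} billion per year")
```

Under these assumptions the saving is on the order of a billion dollars a year, which becomes multi-billion only when summed over several years of operation.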

We are also seeing the rise of System-on-Wafer technology, pioneered by companies like Cerebras. Instead of cutting a silicon wafer into hundreds of tiny chips and wiring them together, they keep the whole wafer as one giant chip. This eliminates the networking lag between chips entirely, though it requires incredibly complex cooling solutions to prevent the giant slab of silicon from overheating.

Edge AI and the Shift to On-Device Processing

While the giant datacenters get the headlines, a parallel revolution is happening in your pocket. Qualcomm and Intel are fighting for the "AI PC" and mobile market. The goal here is to move the inference from the cloud to the device. Why send a prompt to a server in Virginia if your phone can handle it locally?

Qualcomm's Hexagon NPU (Neural Processing Unit) is designed for this. It's not as powerful as an H100, but it's incredibly energy-efficient. For 2026, Qualcomm's AI200 and AI250 accelerators bring this efficiency to the datacenter, offering massive amounts of LPDDR memory per card to handle leaner, distilled models that don't require the power of a full GPU cluster.

Intel is playing a different game with its Xeon 6 processors. They are leveraging their dominance in the CPU market by baking AI acceleration directly into the CPU cores. This is perfect for companies that aren't running trillion-parameter models but need their existing servers to handle small-scale generative AI tasks without adding new hardware to the rack.

What is the difference between a GPU and an NPU in generative AI?

A GPU (Graphics Processing Unit) is a general-purpose parallel processor that can handle a wide variety of tasks, making it great for training models. An NPU (Neural Processing Unit) is a specialized circuit designed specifically for the mathematical operations used in AI, like tensor multiplication. NPUs are much more energy-efficient and are typically found in mobile devices or specialized inference chips to run models locally.

Why is HBM4 so important for 2026 AI hardware?

Modern AI models have a "memory bottleneck." They can calculate faster than they can retrieve data from memory. HBM4 (High-Bandwidth Memory 4) increases the speed and capacity of this data transfer, allowing accelerators like NVIDIA's Rubin or AMD's Helios to feed data to the cores faster, which directly reduces the time it takes for an AI to generate a response.

Will proprietary chips like Maia or Trainium replace NVIDIA?

Not entirely, but they provide a critical alternative. NVIDIA's strength is its software ecosystem (CUDA). However, cloud providers like Microsoft and AWS build their own chips to optimize for specific tasks, like reducing the cost per token for inference. Most companies will likely use a mix: NVIDIA for heavy training and proprietary accelerators for cost-effective deployment.

What does "backside power rail" mean in TSMC's A16 process?

In traditional chip design, power and data wires are all mixed together on top of the silicon, which creates congestion and electrical interference. Backside power rails move the power delivery to the underside of the chip. This simplifies the wiring, reduces voltage drop in the power network, and allows the chip to run faster while consuming less electricity.

How does the Cerebras Wafer-Scale Engine differ from a standard GPU?

A standard GPU is a small piece of silicon cut from a larger wafer. To get more power, you connect thousands of these small chips via cables and switches. Cerebras doesn't dice the wafer into small chips; it uses nearly the whole wafer (the largest square that fits on the circular slice) as one giant processor. This removes the need for external networking between the cores on that wafer, drastically reducing latency and increasing the speed of data movement.

Next Steps for Infrastructure Planning

If you are an enterprise architect looking at the 2026 landscape, stop thinking in terms of "chip speed" and start thinking in terms of "system bottlenecks." If your goal is to train a proprietary model from scratch, your priority should be securing HBM4-equipped clusters and high-bandwidth interconnects. The bottleneck will be your memory bandwidth, not your TFLOPS.

For those focusing on deployment (inference), look toward the Maia 200 or AMD Helios series. The goal here is token economics. Experiment with FP4 and FP8 precision to see how much you can compress your model without losing accuracy; this will determine whether you need a massive GPU cluster or if a fleet of NPUs can handle the load more cheaply.
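One way to start that experiment is to simulate the rounding error before touching real hardware. The sketch below snaps a block of values to the eight magnitudes of a 4-bit E2M1 float using a shared per-block scale. The plain floating-point scale is a simplification (hardware formats use a constrained scale type), and the random Gaussian values stand in for real weights, so this is only a rough first look at quantization error.

```python
import math
import random

# Magnitudes representable by a 4-bit E2M1 float (1 sign, 2 exponent, 1 mantissa bit).
FP4_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block_fp4(values):
    """Scale the block so its largest magnitude maps to 6.0, then round to FP4 levels."""
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return list(values)
    scale = max_abs / FP4_MAGNITUDES[-1]  # simplified: an unconstrained float scale
    restored = []
    for v in values:
        nearest = min(FP4_MAGNITUDES, key=lambda m: abs(abs(v) / scale - m))
        restored.append(math.copysign(nearest * scale, v))
    return restored


def mean_abs_error(original, restored):
    """Average absolute difference between original and dequantized values."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# Stand-in "weights": 32 Gaussian samples (an assumption, not real model data).
random.seed(0)
block = [random.gauss(0.0, 1.0) for _ in range(32)]
print(f"mean abs error after FP4 round trip: {mean_abs_error(block, quantize_block_fp4(block)):.4f}")
```

Running the same comparison on real weight tensors, and then on end-task accuracy, gives a much better signal than the raw error number alone.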

Finally, keep an eye on the power envelope. With the shift to TSMC's A16, the energy efficiency gains are real, but the absolute power draw is still climbing. Ensure your datacenter cooling strategy is ready for the heat density of wafer-scale engines or ultra-dense Rubin racks before you commit to the hardware.

Flannery Smail

5 April, 2026 - 20:49 PM

Everyone is obsessing over HBM4 like it's the second coming, but let's be real, we're just adding more hardware to mask the fact that our algorithms are bloated and inefficient. Software optimization usually yields better results than just throwing more silicon at the wall.

Rakesh Kumar

6 April, 2026 - 05:28 AM

The idea of a System-on-Wafer is absolutely mind-blowing! Imagine the raw power of a single giant slice of silicon working in total harmony! It's like we're witnessing the birth of a digital god! I can't even imagine the cooling systems required for that beast!

Ronnie Kaye

7 April, 2026 - 09:15 AM

Oh sure, because we definitely need 10 gigawatts of power to generate a few funny poems and some AI art of cats in space. Truly a peak achievement for humanity, just burning the planet to make chatbots slightly faster. Great job everyone!

Nicholas Carpenter

7 April, 2026 - 09:51 AM

It's really interesting to see how the hyperscalers are branching out. While it's a tough road to compete with the NVIDIA ecosystem, the move toward vertical integration could actually lower costs for the end user in the long run. Looking forward to seeing the real-world benchmarks on the Maia 200.

Priyank Panchal

9 April, 2026 - 04:08 AM

Stop pretending that proprietary fabrics are the only way forward. Ethernet is evolving and these "black-box" systems are just vendor lock-in schemes designed to bleed companies dry. Wake up and look at the pricing models!

Tony Smith

10 April, 2026 - 17:47 PM

It is truly a marvel that we have reached this zenith of engineering. I am sure we shall all enjoy the benevolent efficiency of 1.6nm processes, provided the power grid doesn't simply collapse under the weight of our ambitions. How quaint that we thought the H100 was the peak.

Ian Maggs

10 April, 2026 - 18:42 PM

One must wonder... if the network IS the computer... where does the soul of the machine reside??? Perhaps in the latency... the silence between the pulses of light!!!

Michael Gradwell

11 April, 2026 - 09:25 AM

just another way to waste energy on things that dont matter. we dont need faster tokens we need better humans. total waste of silicon

Emmanuel Sadi

12 April, 2026 - 06:02 AM

Imagine thinking that a 20% efficiency gain on a 10GW system actually makes it "green." The sheer arrogance of the corporate sustainability reports is the only thing more impressive than the chip architecture here. It's an accounting trick, not an engineering miracle.

Bill Castanier

13 April, 2026 - 05:23 AM

Solid breakdown. Useful info.