Tag: prompt injection

How to Use LLM Guardrails and Filters to Block Harmful AI Content

Learn how LLM guardrails and filters prevent harmful content, stop prompt injections, and ensure AI safety through input/output monitoring and model alignment.

Cybersecurity and Generative AI: Threat Reports, Playbooks, and Simulations

Explore the intersection of Generative AI and cybersecurity in 2026. Learn about AI-powered threats, agentic AI risks, and how to use AI-driven playbooks for defense.

Threat Modeling for Large Language Model Integrations in Enterprise Apps

Explore essential threat modeling strategies for securing Large Language Model integrations in enterprise apps. Learn about prompt injection risks, compliance standards, and automated defense tools.

Security Risks in LLM Agents: Injection, Escalation, and Isolation

LLM agents can act autonomously, making them powerful but vulnerable to prompt injection, privilege escalation, and isolation failures. Learn how these attacks work and how to protect your systems before it's too late.

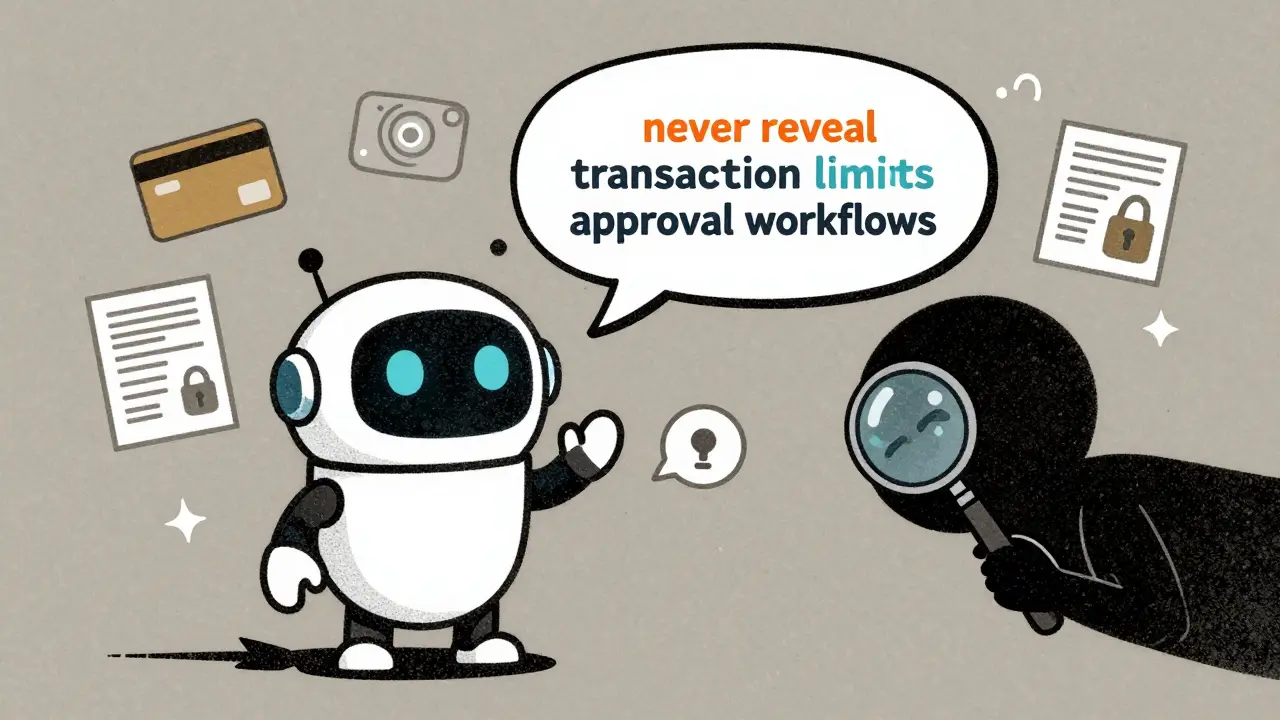

How to Prevent Sensitive Prompt and System Prompt Leakage in LLMs

System prompt leakage is now a top AI security threat, letting attackers steal hidden instructions from LLMs. Learn how to stop it with proven techniques like output filtering, instruction defense, and external guardrails.