Training a single large generative AI model today can use more electricity than a small town. GPT-3 burned through about 1,300 megawatt-hours. GPT-4? That jumped to 65,000 MWh - 50 times more. If this trend keeps going, data centers could be responsible for over 1% of global carbon emissions by 2027. The math is brutal: we’re pouring more power into training models just to squeeze out a few extra percentage points of accuracy. But there’s a smarter way.

Why Energy Efficiency Isn’t Optional Anymore

It’s not just about saving money on cloud bills. It’s about sustainability. Every time you train a model, you’re asking the grid to deliver massive amounts of power - often from non-renewable sources. MIT researchers found that nearly half the energy used in AI training goes into improving accuracy by only 2-3%. That’s like burning a tank of gas just to drive 5 extra miles. There’s a better path: instead of throwing more compute at the problem, we can make models leaner. Enter sparsity, pruning, and low-rank methods. These aren’t new ideas, but they’ve become essential tools. They don’t just cut energy use - they let you train bigger models without needing a power plant next door.

Sparsity: Making Models Mostly Zero

Sparsity means forcing parts of a neural network to become zero. Think of it like removing unused wires in a circuit. If 80% of the weights in a model are zero, up to 80% of the multiplications can be skipped, at least in principle. That saves power, provided the hardware can actually skip them. There are two types: unstructured and structured. Unstructured sparsity zeros out individual weights anywhere in the matrix. It’s great for compression - some models hit 90% sparsity. But hardware doesn’t handle random zeros well. Your GPU still has to check every position, even if it’s zero. Structured sparsity is smarter. It zeros out entire blocks - like removing whole rows or columns of weights. MobileBERT, for example, went from 110 million parameters down to 25 million using this method. Accuracy? Still 97% of the original. And here’s the kicker: modern GPUs and TPUs are built to handle these patterns. They can skip entire chunks of computation. That means real speed gains, not just theoretical savings.

Pruning: Cutting the Fat During Training
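
Since pruning is what produces these zeros, it helps to see the two sparsity patterns side by side first. The sketch below is a minimal pure-Python illustration, not how any GPU library implements sparsity: the helper names (`unstructured_mask`, `structured_row_mask`, `rows_fully_zero`) and the 10x10 matrix size are assumptions made for the example. Both masks reach the same 80% sparsity, but only the structured one guarantees whole rows that a kernel could skip without inspecting each position.

```python
import random

def unstructured_mask(rows, cols, sparsity, seed=0):
    """Zero out individual positions scattered anywhere in the matrix."""
    rng = random.Random(seed)
    positions = [(r, c) for r in range(rows) for c in range(cols)]
    zeroed = set(rng.sample(positions, round(sparsity * rows * cols)))
    return [[0 if (r, c) in zeroed else 1 for c in range(cols)]
            for r in range(rows)]

def structured_row_mask(rows, cols, sparsity):
    """Zero out whole rows (simply the last ones, purely for illustration)."""
    kept_rows = rows - round(sparsity * rows)
    return [[1 if r < kept_rows else 0 for _ in range(cols)]
            for r in range(rows)]

def sparsity_of(mask):
    """Fraction of entries that are zero."""
    total = sum(len(row) for row in mask)
    zeros = sum(v == 0 for row in mask for v in row)
    return zeros / total

def rows_fully_zero(mask):
    """Rows a kernel could skip entirely, without checking each entry."""
    return sum(all(v == 0 for v in row) for row in mask)

unstructured = unstructured_mask(10, 10, 0.8)
structured = structured_row_mask(10, 10, 0.8)

# Same fraction of zeros in both masks...
print(sparsity_of(unstructured), sparsity_of(structured))
# ...but only the structured mask is guaranteed to have 8 skippable rows.
print(rows_fully_zero(unstructured), rows_fully_zero(structured))
```

The count of fully zero rows in the unstructured mask depends on the random seed, which is the point: the zeros are there, but not in a shape the hardware can rely on.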

Pruning is like trimming a tree while it’s still growing. You don’t wait until the model is done. You remove the weakest connections as training progresses. There are three main ways:

- Magnitude-based pruning: Remove the smallest weights. Simple, effective. A 50% prune on GPT-2 cut training energy by 42% with only a 0.8% drop in accuracy.

- Movement pruning: Watch how weights change during training. If a weight barely moves, it’s probably not doing much. Cut it. This adapts dynamically.

- Lottery ticket hypothesis: Find a tiny subnetwork inside the big model that, if trained alone, performs just as well. You’re not shrinking the model - you’re finding the real core of it.
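
Magnitude-based pruning, the first approach in the list above, is simple enough to sketch in a few lines. This is a toy version in pure Python with a hypothetical `magnitude_prune` helper and made-up weights; real frameworks apply the same idea to tensors and usually keep a mask so pruned weights stay at zero during later updates.

```python
def magnitude_prune(weights, fraction):
    """Return a copy of `weights` with the smallest-magnitude `fraction` zeroed."""
    # Rank every weight by absolute value, remembering where it lives.
    ranked = sorted(
        (abs(w), r, c)
        for r, row in enumerate(weights)
        for c, w in enumerate(row)
    )
    n_prune = round(fraction * len(ranked))
    pruned = [row[:] for row in weights]  # leave the original untouched
    for _, r, c in ranked[:n_prune]:
        pruned[r][c] = 0.0
    return pruned

# Illustrative 2x4 weight matrix (values are invented for the example).
weights = [
    [0.9, -0.05, 0.3, 0.01],
    [-0.7, 0.02, -0.4, 0.6],
]

pruned = magnitude_prune(weights, 0.5)
print(pruned)  # [[0.9, 0.0, 0.0, 0.0], [-0.7, 0.0, -0.4, 0.6]]
```

Note that the resulting zeros are unstructured: they land wherever the small weights happened to be. Getting a hardware-friendly pattern means ranking whole rows, columns, or blocks instead of individual entries.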

Low-Rank Methods: Breaking Down Matrices

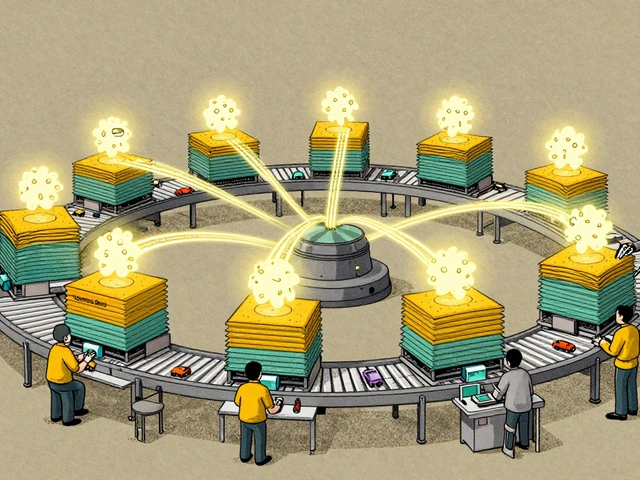

This one’s a bit more math-heavy, but it’s powerful. Instead of storing a huge weight matrix, you approximate it using smaller matrices multiplied together. Think of it like compressing a video: you don’t store every pixel - you store patterns. Low-rank adaptation (LoRA) is the most popular technique here. It adds small, low-rank matrices to existing weights instead of retraining everything. NVIDIA’s NeMo framework used LoRA on BERT-base and slashed training energy from 187 kWh to 118 kWh - a 37% reduction - while keeping 99.2% of its accuracy on question-answering tasks. Tucker decomposition and tensor train methods work similarly. They can compress models 3-4 times without hurting performance. That means you can fit a 70-billion-parameter model on fewer GPUs. Fewer GPUs? Less power. Less cooling. Lower cost.

How They Compare to Other Methods
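
For a sense of scale, the low-rank update described above can be counted out directly. The sketch below assumes a single 768x768 layer (768 is the BERT-base hidden size) and a rank of 8, which is an illustrative choice rather than a figure from the NeMo result. The function names are hypothetical, and the forward pass follows the usual LoRA form y = Wx + scale * B(Ax), with W frozen and only A and B trained.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full weight matrix vs. the two LoRA factors."""
    full = d_out * d_in            # W is d_out x d_in
    lora = rank * (d_in + d_out)   # A is rank x d_in, B is d_out x rank
    return full, lora

def matvec(matrix, vector):
    """Plain matrix-vector product on nested lists."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]

def lora_forward(W, A, B, x, scale=1.0):
    """y = W x + scale * B (A x); W stays frozen, A and B are the trained part."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + scale * u for b, u in zip(base, update)]

full, lora = lora_param_counts(768, 768, 8)
print(full, lora, lora / full)  # 589824 12288 0.0208...

# Tiny rank-1 example so the arithmetic is easy to follow by hand.
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]        # 1 x 2
B = [[0.5], [0.0]]      # 2 x 1
print(lora_forward(W, A, B, [2.0, 3.0]))  # [4.5, 3.0]
```

At rank 8 the trainable factors hold about 2% of the layer's parameters. Fewer trainable parameters does not translate one-to-one into energy saved, since the frozen weights still take part in every forward pass, but it shrinks gradient and optimizer-state memory considerably.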

You might have heard of mixed precision training (using 16-bit instead of 32-bit numbers) or early stopping. Both help - mixed precision saves 15-20%, early stopping saves 20-30%. But they’re limited. Mixed precision needs special hardware. Early stopping risks underfitting. Sparsity and pruning? They work on any model and in any framework, though the biggest gains show up on hardware that can skip structured zeros. And they save 30-80% of energy. IBM’s October 2024 analysis showed that combining structured pruning with LoRA cut Llama-2-7B training energy by 63%. Mixed precision alone? Just 42%. That’s a huge gap. The trade-off? Complexity. You need to tune hyperparameters. You need to validate accuracy carefully. You can’t just flip a switch. But the payoff is worth it - especially when you’re training dozens of models a month.

Real-World Implementation: What It Takes

This isn’t plug-and-play. Most teams need 2-4 weeks to get comfortable with these techniques. TensorFlow’s guide walks you through five steps:

- Train your baseline model.

- Configure sparsity or pruning settings.

- Apply it gradually during fine-tuning.

- Check accuracy on a validation set.

- Optimize for deployment - sparse models run faster on inference hardware.
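
Steps three and four above, applying sparsity gradually and then checking accuracy, can be sketched as a schedule plus a simple acceptance check. This is an illustration in plain Python, not the TensorFlow API: `sparsity_at` is a hypothetical helper using a cubic ramp of the kind commonly used for gradual pruning, and the 80% target, 1,000-step window, and 1-point accuracy budget in `within_budget` are all assumed values.

```python
def sparsity_at(step, begin_step, end_step, initial=0.0, final=0.8, power=3):
    """Target sparsity at a training step: ramps fast early, then levels off."""
    if step <= begin_step:
        return initial
    if step >= end_step:
        return final
    progress = (step - begin_step) / (end_step - begin_step)
    return final + (initial - final) * (1.0 - progress) ** power

def within_budget(baseline_acc, pruned_acc, max_drop=0.01):
    """Accept the pruned model only if validation accuracy held up."""
    return baseline_acc - pruned_acc <= max_drop

# Print the target sparsity at a few points in a 1,000-step fine-tuning run.
for step in (0, 250, 500, 750, 1000):
    print(step, round(sparsity_at(step, 0, 1000), 3))

# Hypothetical validation numbers, used only to show the check.
print(within_budget(0.90, 0.895))  # True: a 0.5-point drop is inside the budget
print(within_budget(0.90, 0.88))   # False: a 2-point drop is not
```

Ramping the target instead of jumping straight to 80% gives the remaining weights time to compensate between pruning steps, which is why the guide applies it during fine-tuning and not all at once.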

Amy P

10 February, 2026 - 22:27 PM

I just trained a model last week and my electricity bill nearly doubled. Like, WTF? This isn't just about climate change-it's about my wallet. I had no idea pruning could cut energy by 40%? I'm gonna try structured sparsity now. No more brute force. My GPU is tired.

Ashley Kuehnel

11 February, 2026 - 18:02 PM

hey everyone! just wanted to say this post is so helpful!! i've been scared to touch pruning because i thought it'd wreck my model, but the numbers here are insane-41% less energy and almost no accuracy loss? that's a win-win. i used to think efficiency meant sacrificing performance, but now i see it's just smarter work. also, typo: 'prune' not 'prun' lol. keep it up!

adam smith

11 February, 2026 - 23:17 PM

This is all very interesting. However, I must point out that the entire premise is built on an assumption that energy consumption is a problem. It is not. Energy is abundant. The grid is robust. The cost of electricity is negligible compared to the value generated. Why optimize for efficiency when optimization is unnecessary?

Mark Nitka

12 February, 2026 - 20:04 PM

Look, I get it. We're all trying to be green. But let's be real-this isn't about saving the planet. It's about saving money. And honestly? The fact that companies like NVIDIA are building hardware to support sparsity means they already know this is the future. You don't need to be an environmentalist to care about cutting training costs by 60%. This isn't activism-it's economics. And the numbers speak louder than your guilt.

k arnold

12 February, 2026 - 23:24 PM

Oh wow. So we're gonna make AI models smaller so we can train them faster... and then use that time to train 10x more models? Brilliant. We'll just make the problem 10x worse. That's not efficiency. That's denial with a PhD.

Tiffany Ho

14 February, 2026 - 13:30 PM

this is so cool i never realized how much energy goes into training models i thought it was just about big GPUs but pruning and low rank stuff actually makes sense like why train everything when you can just keep the good parts i'm gonna try this on my next project thanks for sharing