Most IT support teams are drowning. When a system outage hits, the flood of identical tickets doesn't just slow down response times; it creates a noise floor that hides critical, unique failures. Traditional rule-based systems try to fix this with rigid keywords and manual routing, but they lack the nuance to understand that ten different tickets might describe a single root cause. This is where Autonomous Ticket Resolution comes in: an AI-driven approach that uses specialized Large Language Models to categorize, route, and resolve support tickets with minimal human intervention. By shifting from treating tickets as isolated events to treating them as a connected web of data, companies are finally cutting through the noise.

Moving Beyond Simple Chatbots

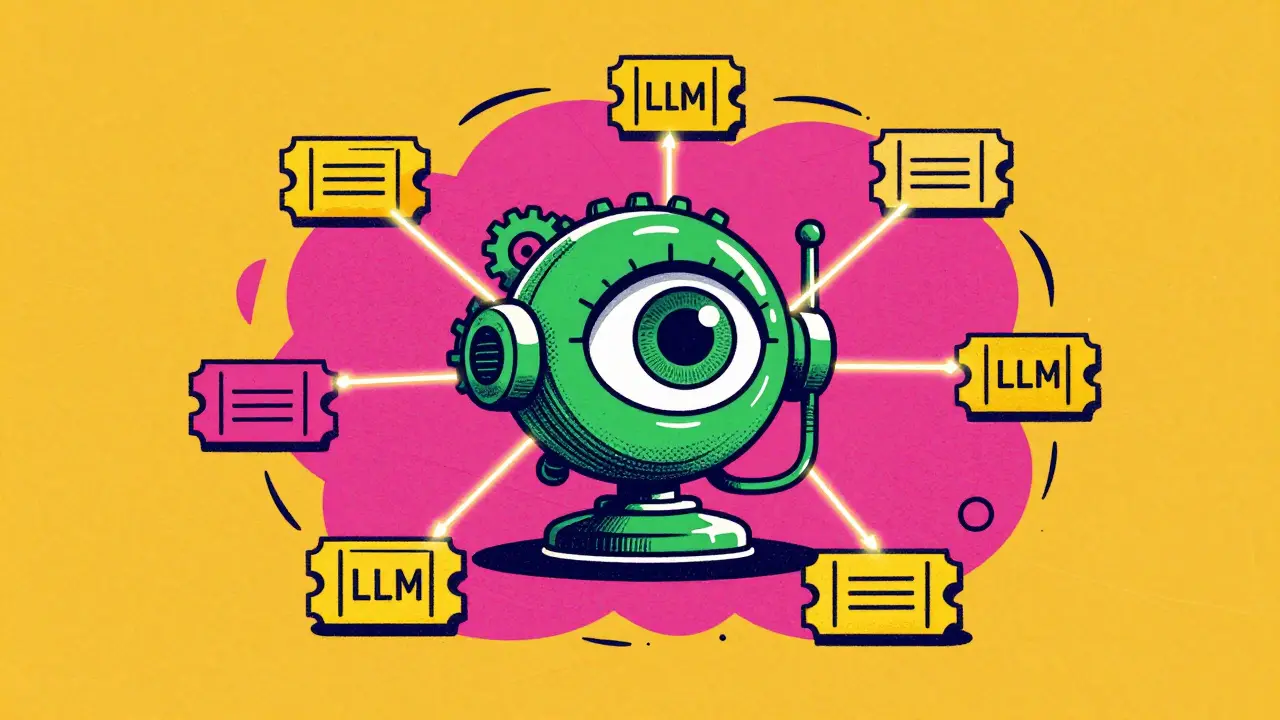

We've all dealt with the "dumb" chatbot that just points you to a FAQ page. Domain-specific LLM agents are different. Instead of general-purpose knowledge, these agents are fine-tuned on a company's own historical ticket data, documentation, and technical logs. This allows them to understand the specific jargon of a financial platform or the complex architecture of a cloud service provider.

Unlike early AI attempts that just analyzed the text of a single ticket, modern frameworks, like those deployed on Volcano Engine, use relationship-driven escalation. This means the AI doesn't just ask "What is this ticket about?" but also asks "How does this ticket relate to the five others that arrived in the last ten minutes?" By identifying these clusters, the system can automatically deduplicate issues, reducing redundant escalations by as much as 30-40%.

How the Autonomous Engine Actually Works

To make a system autonomous, you can't just give it a prompt; you need a structured workflow. Most professional implementations use a Finite State Machine to track the lifecycle of a ticket. A ticket isn't just "open" or "closed"; it moves through specific states like Analyzing, Pending, and Escalated, with transitions triggered by actual conversations between the customer and the agent.
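
The lifecycle described above can be sketched as a small transition table. This is a minimal illustration, not any vendor's implementation: the `Resolved` state, the event names, and the `Ticket` class are all assumptions added for the example; only Analyzing, Pending, and Escalated come from the description.

```python
from enum import Enum


class State(Enum):
    ANALYZING = "Analyzing"
    PENDING = "Pending"
    ESCALATED = "Escalated"
    RESOLVED = "Resolved"  # assumed terminal state, not named in the article


# Allowed transitions: (current state, event) -> next state.
# Event names are hypothetical labels for conversation outcomes.
TRANSITIONS = {
    (State.ANALYZING, "needs_customer_info"): State.PENDING,
    (State.ANALYZING, "solution_accepted"): State.RESOLVED,
    (State.ANALYZING, "agent_cannot_resolve"): State.ESCALATED,
    (State.PENDING, "customer_replied"): State.ANALYZING,
    (State.ESCALATED, "solution_accepted"): State.RESOLVED,
}


class Ticket:
    def __init__(self, ticket_id: str):
        self.ticket_id = ticket_id
        self.state = State.ANALYZING
        self.history = [State.ANALYZING]

    def handle(self, event: str) -> State:
        """Apply an event, rejecting anything the table does not allow."""
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"{event!r} is not valid in state {self.state.value}")
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state
```

Keeping the transitions in one table means an LLM agent can only propose events; whether a state change is legal is decided by plain, auditable code.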

The technical heavy lifting happens in three core modules:

- Categorization: The AI treats ticket handling as a multi-class classification task. Instead of guessing, it assigns the ticket to a predefined category (such as "Asset Loss" or "System Failure") aligned with actual team responsibilities.

- Deduplication: The system uses embedding models to turn ticket text into vector representations. By calculating the cosine similarity between these vectors, it can spot nearly identical issues even if the users used different words.

- Intelligent Routing: Rather than a simple round-robin assignment, the agent considers both the content of the ticket and the current availability of human experts to minimize misrouted tickets.
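
The deduplication step can be illustrated with a few lines of plain Python. This is a sketch under stated assumptions: the vectors are toy three-dimensional stand-ins for real embedding output, and the `0.9` threshold and the `find_duplicates` helper are hypothetical choices rather than values from any deployment.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_duplicates(embeddings, threshold=0.9):
    """Map each duplicate ticket ID to the earliest ticket it matches.

    embeddings: dict of ticket ID -> vector, in arrival order.
    """
    canonical = []   # IDs treated as the first report of an issue
    duplicates = {}
    for ticket_id, vector in embeddings.items():
        for seen_id in canonical:
            if cosine_similarity(vector, embeddings[seen_id]) >= threshold:
                duplicates[ticket_id] = seen_id
                break
        else:
            # No earlier ticket was similar enough, so this is a new issue.
            canonical.append(ticket_id)
    return duplicates


# Toy vectors standing in for the output of an embedding model.
tickets = {
    "T-101": [0.9, 0.1, 0.0],
    "T-102": [0.88, 0.12, 0.01],
    "T-103": [0.0, 0.2, 0.95],
}
duplicates = find_duplicates(tickets)  # {"T-102": "T-101"}
```

Comparing every ticket against every canonical ticket is fine for a sketch; at production volume a vector index would normally replace the inner loop.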

| Feature | Rule-Based ITSM | LLM-Based Agents |
|---|---|---|
| Analysis Method | Keyword matching / If-Then logic | Semantic understanding & context |
| Ticket Handling | Individual / Isolated | Relationship-driven / Clustering |
| Routing Accuracy | Moderate (High misroute rate) | High (Up to 95% accuracy) |
| Scalability | Manual rule updates required | Self-improving via fine-tuning |

Real-World Impact and Performance

The numbers coming out of recent deployments, particularly from firms like Tiger Analytics, show a clear shift in operational efficiency. In their 2024 implementations, they achieved roughly 95% accuracy across categorization and prioritization modules. More importantly, about 15-20% of tickets are now resolved entirely through automated self-service, meaning the human analyst never even sees them.

For the humans still in the loop, the relief is tangible. Analysts at ByteDance reported a 35% reduction in time spent on the mind-numbing task of routine categorization. When a major outage occurs, the AI's ability to link related tickets allows teams to see the full scope of an incident instantly, rather than spending the first hour of a crisis manually grouping tickets into a spreadsheet.

The "Black Box" Problem and Technical Hurdles

It isn't all seamless. One of the biggest friction points is trust. When an AI decides a ticket is "Low Priority," human agents often want to know why. This "black box" decision-making led to pushback from about 22% of agents in some studies. The fix? Transparency features that display the LLM's reasoning process, essentially showing the work, like a math student would.

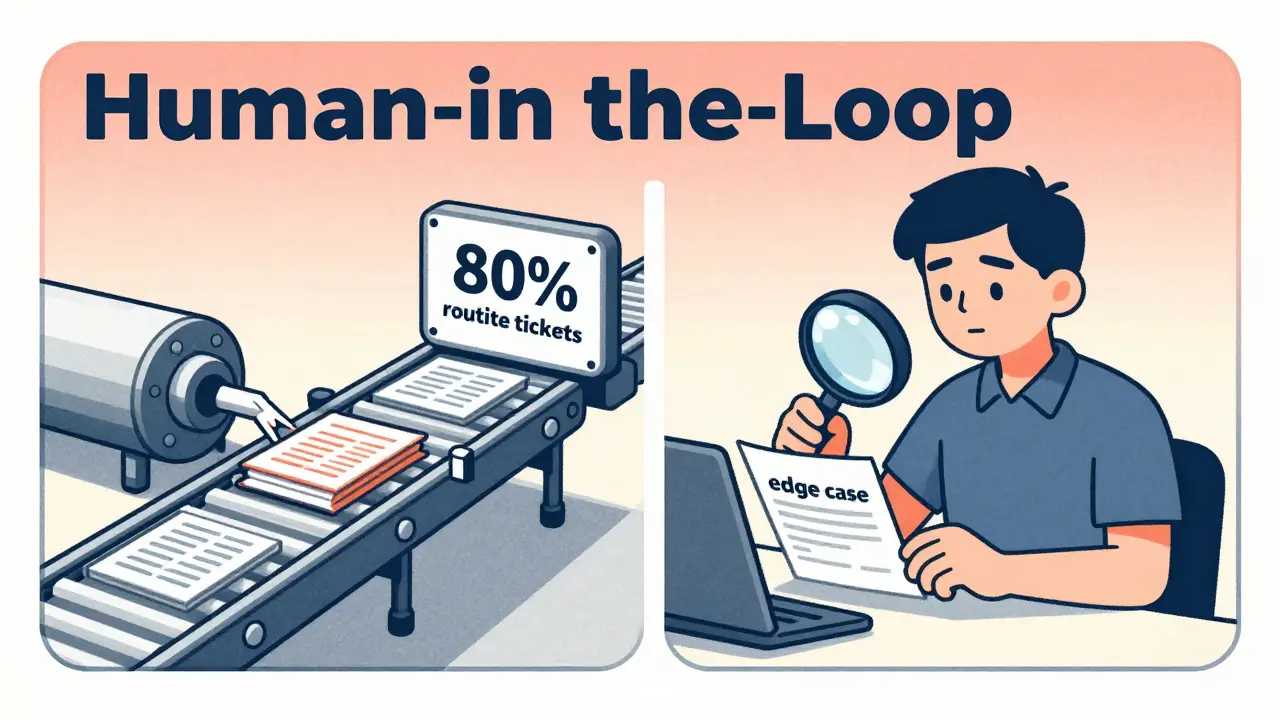

There is also the issue of "edge cases." No matter how good the model is, there will always be highly specialized technical issues, such as deep network architecture failures, that the AI can't solve. Current data suggests that about 5-8% of tickets will fall into an "Others" category that requires a human expert to step in. The goal isn't 100% automation; it's automating the 80% of routine noise so experts can actually focus on that 5-8% of critical complexity.

Implementing Your Own Agent System

If you're looking to move toward Autonomous Ticket Resolution, don't try to automate the resolution step on day one. The most successful rollouts follow a phased approach. Start with categorization and routing to prove the model's accuracy, then move toward autonomous resolution once you have a high level of confidence in the AI's labeling.
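
One way to decide when to move between phases is to run the model in "shadow mode" and compare its labels with what human analysts chose. The sketch below assumes that setup; the function names, phase labels, and the `0.95` bar are illustrative assumptions, not a prescribed standard.

```python
def agreement_rate(model_labels, human_labels):
    """Fraction of tickets where the model's label matches the human's."""
    if len(model_labels) != len(human_labels):
        raise ValueError("label lists must be the same length")
    if not human_labels:
        return 0.0
    matches = sum(m == h for m, h in zip(model_labels, human_labels))
    return matches / len(human_labels)


def next_phase(rate, threshold=0.95):
    """Stay on categorization and routing until agreement clears the bar."""
    if rate >= threshold:
        return "autonomous_resolution"
    return "categorization_and_routing"


# Hypothetical shadow-mode sample: the model disagrees on one of four tickets.
model = ["System Failure", "Asset Loss", "System Failure", "Others"]
human = ["System Failure", "Asset Loss", "System Failure", "Asset Loss"]
rate = agreement_rate(model, human)  # 0.75
phase = next_phase(rate)             # "categorization_and_routing"
```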

The biggest hurdle isn't usually the code; it's the data. About 65% of initial implementations struggle with inconsistent historical ticket data. If your old tickets are a mess of "fixed it" and "closed" with no detailed descriptions, your LLM will learn those bad habits. Expect to spend 2-3 weeks on data cleaning and standardization before you even touch a model.
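
A first cleaning pass can be as simple as dropping tickets whose resolution notes carry no information. The sketch below is a starting point under assumptions: the phrase list, the five-word minimum, and the `resolution` field name are hypothetical and would need tuning against your own ticket export.

```python
import re

# Resolution notes that say nothing about what was actually done (assumed list).
LOW_INFO_PHRASES = {"fixed it", "fixed", "closed", "done", "resolved"}


def normalize(text):
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


def is_usable(resolution, min_words=5):
    """Reject known filler phrases and anything too short to learn from."""
    cleaned = normalize(resolution).lower().rstrip(".!")
    if cleaned in LOW_INFO_PHRASES:
        return False
    return len(cleaned.split()) >= min_words


def clean_history(tickets):
    """Keep usable tickets, with their resolution text normalized."""
    return [
        {**t, "resolution": normalize(t["resolution"])}
        for t in tickets
        if is_usable(t["resolution"])
    ]


history = [
    {"id": "T-1", "resolution": "Fixed it."},
    {"id": "T-2", "resolution": "Restarted the auth service  and cleared the stale session cache"},
    {"id": "T-3", "resolution": "  closed "},
]
training_set = clean_history(history)  # only T-2 survives
```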

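To bridge the gap between a general model and a domain expert, most teams use LoRA (Low-Rank Adaptation). Because LoRA trains a small set of added weights while the base model stays frozen, you can fine-tune a large open-weight model such as Llama or Mistral on your specific dataset without needing a supercomputer or a PhD in machine learning. Closed models like GPT-4 or Gemini can only be customized through whatever tuning options their vendors expose.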
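
To see why LoRA is light on hardware, it helps to write out the update it learns. In the standard formulation, the pretrained weight matrix $W_0$ is frozen and only two small matrices $A$ and $B$ are trained. The dimensions in the worked example are illustrative assumptions, not figures from any deployment described here.

$$
W' = W_0 + \frac{\alpha}{r}\, B A, \qquad B \in \mathbb{R}^{d \times r},\quad A \in \mathbb{R}^{r \times k},\quad r \ll \min(d, k)
$$

$$
\underbrace{d \cdot k}_{\text{full fine-tuning}} = 4096 \cdot 4096 = 16{,}777{,}216
\qquad \text{vs.} \qquad
\underbrace{r\,(d + k)}_{\text{LoRA}} = 8 \cdot 8192 = 65{,}536
$$

For a single $4096 \times 4096$ layer with rank $r = 8$, LoRA trains about 0.39% of the parameters that full fine-tuning would touch, which is where the memory savings come from.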

Do I need a huge team of data scientists to set this up?

Not necessarily. While a basic understanding of LLM concepts is required, parameter-efficient fine-tuning techniques like LoRA make the process much more accessible. The most important roles are actually the people who understand your organization's ITSM platform and the subject matter experts who can validate whether the AI's categorizations are correct.

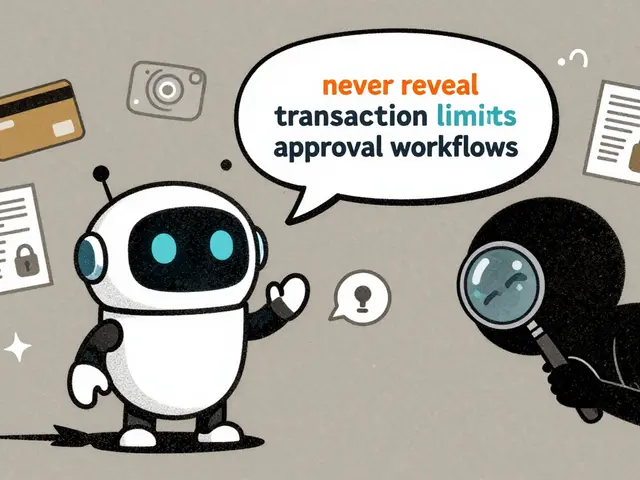

How do these agents handle data privacy?

Privacy is a major concern, with over 50% of organizations citing it as a primary challenge. To mitigate this, most enterprises use private VPC deployments or data masking techniques to ensure sensitive customer information isn't used to train public models or exposed during the inference process.
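
Data masking can start with pattern-based redaction before any text reaches a model. The sketch below covers only email addresses and IPv4 addresses, and the patterns and placeholder names are assumptions for illustration; real deployments usually need a broader set of detectors and should not rely on regular expressions alone.

```python
import re

# Hypothetical pattern set; extend it to match the identifiers in your own tickets.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
}


def mask(text):
    """Replace each matched identifier with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


masked = mask("User jane.doe@example.com cannot reach 10.0.0.12 since Monday")
# "User [EMAIL] cannot reach [IPV4] since Monday"
```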

What happens if the AI misclassifies a critical ticket?

This is why a "human-in-the-loop" system is essential. The AI should act as a first responder that suggests a priority and route, but high-impact tickets should still trigger a human notification. Furthermore, using sentiment analysis helps the AI detect frustration or urgency that might not be captured by technical keywords alone.
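
A human-in-the-loop gate can be expressed as a short list of checks on the AI's suggestion. This is a sketch under assumptions: the `Suggestion` fields, the priority names, and both thresholds are hypothetical, and the sentiment score is assumed to come from a separate sentiment model on a scale of -1.0 to 1.0.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    ticket_id: str
    priority: str      # "low", "medium", "high", or "critical"
    route: str         # team the AI proposes
    confidence: float  # model's score in [0, 1]
    sentiment: float   # -1.0 (frustrated) to 1.0 (satisfied)


HIGH_IMPACT = {"high", "critical"}


def review_reasons(s, min_confidence=0.8, frustration=-0.5):
    """Return the reasons a human should be notified; empty means none."""
    reasons = []
    if s.priority in HIGH_IMPACT:
        reasons.append("high-impact priority")
    if s.confidence < min_confidence:
        reasons.append("low model confidence")
    if s.sentiment <= frustration:
        reasons.append("frustrated customer")
    return reasons


routine = Suggestion("T-1", "low", "network", 0.95, 0.2)
urgent = Suggestion("T-2", "critical", "database", 0.6, -0.8)
```

Returning the reasons rather than a bare yes/no also gives agents the explanation they tend to ask for when the AI overrides their expectations.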

Which ITSM tools are compatible with this technology?

Most autonomous agents are designed to integrate via API with industry-standard platforms like ServiceNow and Jira. Because they act as an intelligent layer on top of these tools, you don't usually need to replace your existing software; you just connect the LLM agent to the ticket feed.

How long does it take to see a return on investment?

Early adopters in high-volume sectors like telecommunications and financial services report seeing ROI within 9-12 months. This is primarily driven by the reduction in ticket resolution time and the decrease in agent workload for routine tasks.