When you hear "AI application," you might picture a futuristic chatbot or a self-driving car. But in 2026, production large language model applications are running quietly everywhere: in customer service queues, internal knowledge bases, code assistants, and document analyzers. And here’s the hard truth: most companies have no idea how much these systems actually cost to run each month. Some think it’s $5,000. Others assume it’s $50,000. The real answer? It could be $200… or $540,000. The difference isn’t just about how much you use it; it’s about how you build it.

Why Your LLM Cost Estimate Is Probably Wrong

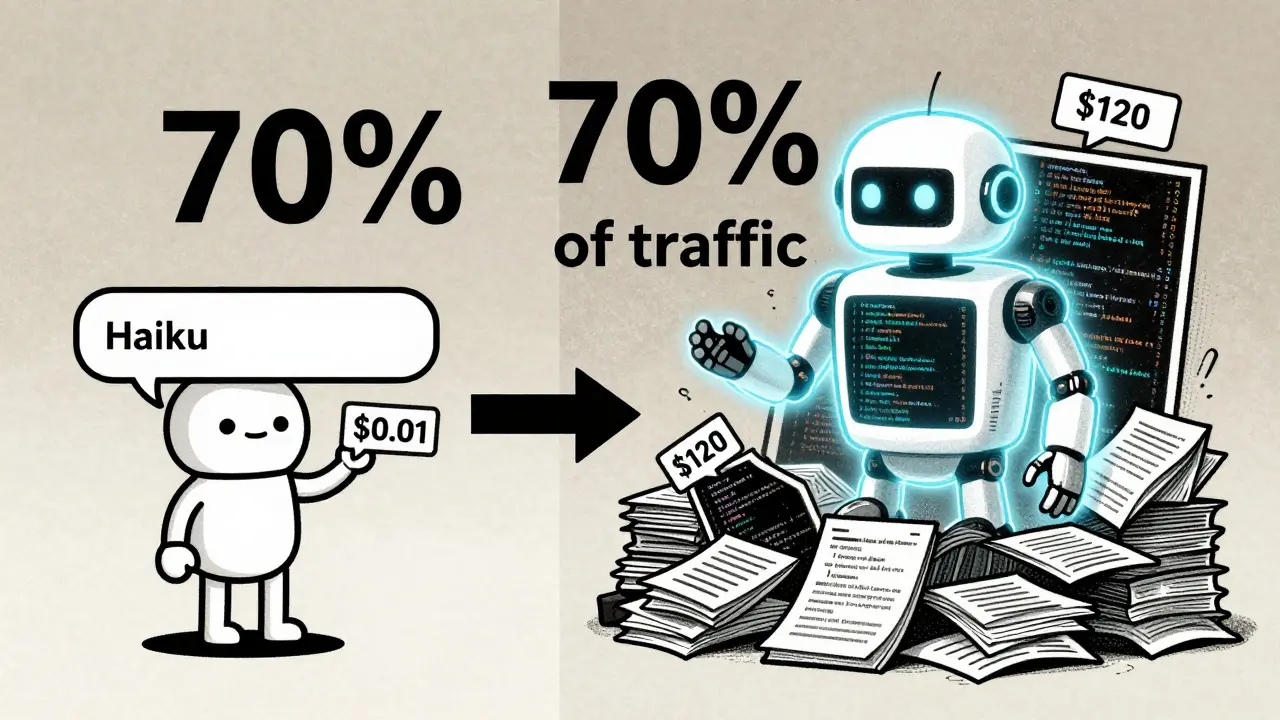

A lot of teams start by looking at API prices. "GPT-4 Turbo costs $0.03 per 1,000 tokens," they say. "We’ll make 100,000 calls a month, about 1,000 tokens each. That’s $3,000. Easy." Then they launch, and suddenly their bill hits $12,000. What happened?

The mistake is assuming API calls are the main cost. They’re not. In fact, in production systems, API usage typically makes up only about 5% of total monthly spending. The other 95%? Infrastructure. Serving models. Scaling GPUs. Managing latency. Monitoring failures. These are the hidden engines that eat your budget.

Take a startup with 10,000 monthly active users. They run a chatbot that handles 50,000 conversations, processes 5,000 documents, and assists with 10,000 code queries each month. If they route every single request to GPT-4 Turbo, that’s $8,500/month. Claude 3 Opus? $12,000. But if they use a smart cascade (simple questions go to Claude Haiku at 1/10th the cost, medium ones to GPT-4o, and only the toughest ones to GPT-4 Turbo), the bill drops to $1,200. That’s not magic. It’s architecture.

The Three Big Cost Buckets

There are three real categories of cost for any production LLM app. You need to track all three.

- API and Inference Costs: This is what you pay to cloud providers like OpenAI, Anthropic, or Mistral for each token processed. It’s the most visible line item, but it’s also the easiest to optimize. Prices have collapsed since 2023. GPT-4-quality models that cost $60 per million tokens in 2023 now run under $0.75. That’s a drop of more than 98%.

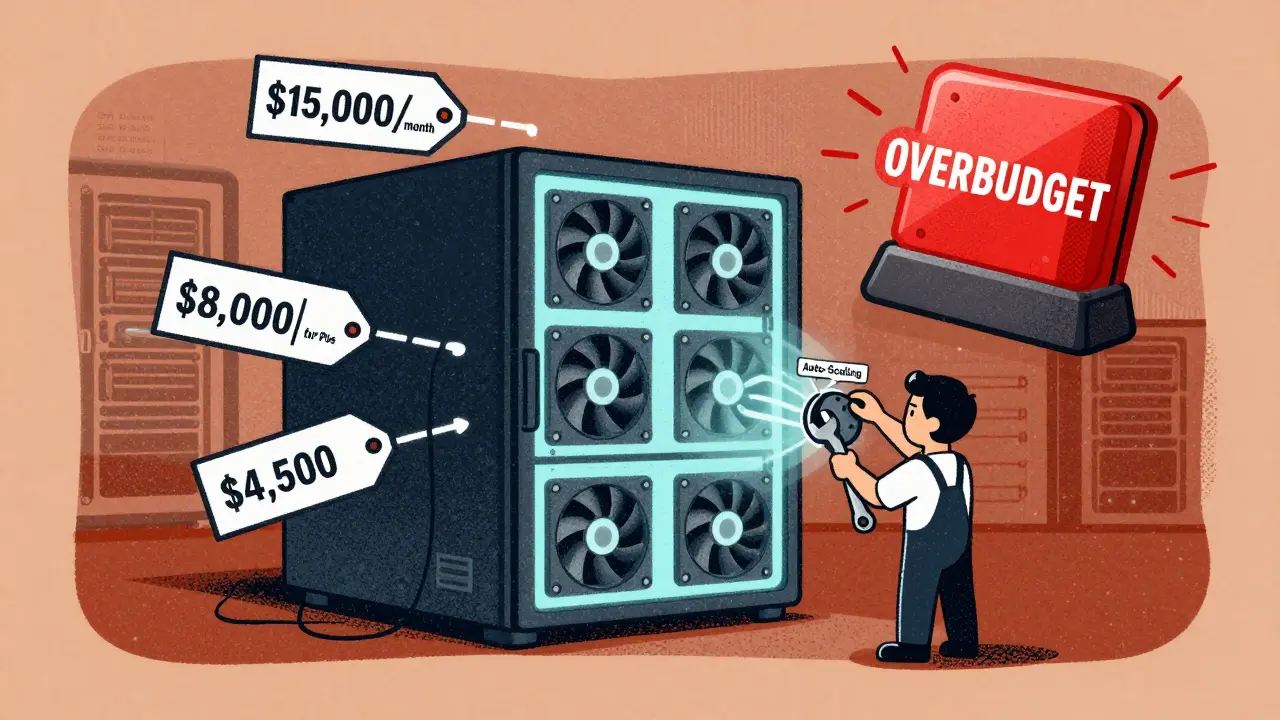

- Infrastructure and Serving Costs: This is where most budgets explode. If you’re hosting models on your own servers (say, on AWS or Azure), you’re paying for GPUs, memory, network bandwidth, load balancers, auto-scaling, and uptime monitoring. A small proof-of-concept with 1 GPU might cost $2,000/month. A full production system with 4 GPUs, 16 CPUs, and 99% uptime? $15,000/month. Enterprise-grade setups with 8+ GPUs? $45,000/month. This isn’t optional if you need reliability.

- Development and Training Costs: This is a one-time (or periodic) investment. Building a custom model from scratch? That’s $200,000-$400,000 a year just in compute. Fine-tuning a model with LoRA? $50-$300 in compute, plus $4,000-$12,000 in engineering labor. Most teams don’t need to train. They need to adapt.
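The API bucket is the easiest to estimate up front, which is exactly why the naive "$3,000" math at the top of this article is so tempting. Here is a minimal sketch of that per-token arithmetic, assuming roughly 1,000 tokens per call and a flat $0.03 per 1,000 tokens ($30 per million). The function name and the split into input and output prices are illustrative, not any provider's billing API:

```python
def monthly_api_cost(calls_per_month: int,
                     input_tokens_per_call: int,
                     output_tokens_per_call: int,
                     input_price_per_million: float,
                     output_price_per_million: float) -> float:
    """Estimate a monthly API bill in dollars from per-million-token prices.

    Illustrative helper: real bills also depend on retries, long contexts,
    and any per-request fees, which this ignores.
    """
    input_cost = calls_per_month * input_tokens_per_call * input_price_per_million / 1_000_000
    output_cost = calls_per_month * output_tokens_per_call * output_price_per_million / 1_000_000
    return input_cost + output_cost


# The naive estimate: 100,000 calls x 1,000 tokens at $30 per million tokens.
naive = monthly_api_cost(100_000, 1_000, 0, 30.0, 0.0)
print(f"${naive:,.0f}/month")  # $3,000/month
```

Note what the formula leaves out: everything in the other two buckets, which is where this article locates the gap between a $3,000 estimate and a $12,000 bill.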

Here’s the kicker: if you’re spending more than $8,000/month on infrastructure, you’re probably not using model routing. If you’re spending $50,000/month on API calls, you’re likely using GPT-4 Turbo for everything. Both are signs of poor design.

Real-World Cost Scenarios

Let’s look at two real setups from 2026 deployments.

Scenario 1: Small SaaS Startup

Users: 10,000 monthly active

Requests: 50,000 chats, 5,000 documents, 10,000 code queries per month

- All GPT-4 Turbo: $8,500/month

- All Claude 3 Opus: $12,000/month

- Optimized cascade (Haiku + Sonnet + GPT-4o): $1,200/month

That’s an 86-90% saving (relative to all-GPT-4 Turbo and all-Claude 3 Opus, respectively) just by picking the right model for the job. Haiku handles simple yes/no questions. Sonnet does document summarization. GPT-4o handles code generation. No need to throw expensive models at everything.
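Task-based routing like this can be as simple as a lookup table. Here is a minimal sketch, assuming requests arrive as plain text and that a crude keyword check is good enough to sort them. `classify`, `ROUTES`, `CODE_HINTS`, and the model labels are hypothetical placeholders, not real provider model IDs:

```python
from enum import Enum


class Task(Enum):
    SIMPLE = "simple"        # short yes/no or FAQ-style questions
    SUMMARIZE = "summarize"  # document summarization
    CODE = "code"            # code generation and debugging


# Hypothetical routing table mirroring Scenario 1. The strings are
# placeholder labels, not exact provider model identifiers.
ROUTES = {
    Task.SIMPLE: "haiku",
    Task.SUMMARIZE: "sonnet",
    Task.CODE: "gpt-4o",
}

# Toy signals that a request is about code.
CODE_HINTS = ("```", "def ", "function", "compile", "stack trace")


def classify(request: str) -> Task:
    """Toy keyword classifier; a real system might use a small model instead."""
    text = request.lower()
    if any(hint in text for hint in CODE_HINTS):
        return Task.CODE
    if text.startswith("summarize") or len(text) > 2_000:
        return Task.SUMMARIZE
    return Task.SIMPLE


def route(request: str) -> str:
    """Return the model label that should handle this request."""
    return ROUTES[classify(request)]


if __name__ == "__main__":
    print(route("Is the store open on Sunday?"))             # haiku
    print(route("Summarize this contract: ..."))             # sonnet
    print(route("Why does my def parse() raise KeyError?"))  # gpt-4o
```

A production router would likely use something sturdier than keywords (for example, a small classifier model), but the shape stays the same: classify once, then dispatch.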

Scenario 2: Enterprise Customer Support System

Users: 100,000 monthly active

Requests: 500,000 support chats, 50,000 document analyses, 100,000 internal tool queries

- Claude 3 Sonnet only: $45,000/month

- Haiku + Sonnet cascade: $8,000/month

- GPT-4o Mini + selective Sonnet: $4,500/month

Again, the same logic applies. Simple queries get routed to the cheapest model. Complex ones get the heavy artillery. The result? An 82-90% reduction in monthly spend compared with running Sonnet alone. That’s not a tweak. That’s a redesign.
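The "cheap first, heavy artillery only when needed" pattern is often implemented as an escalating cascade: ask the cheapest model, and escalate only if its answer looks unreliable. Here is a minimal sketch, assuming each model call can return an answer plus a confidence score in [0, 1]. How you get that score, whether from a grader model or a heuristic, is left open. `Tier`, `cascade`, and the `fake_*` stand-ins are hypothetical:

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

# A model call takes a prompt and returns (answer, confidence in [0, 1]).
ModelCall = Callable[[str], Tuple[str, float]]


@dataclass
class Tier:
    name: str        # label for logging/billing, e.g. "mini" or "sonnet"
    call: ModelCall  # hypothetical wrapper around a real API client


def cascade(prompt: str, tiers: Sequence[Tier], threshold: float = 0.8) -> Tuple[str, str]:
    """Try tiers cheapest-first; escalate while confidence is below threshold.

    Returns (tier_name, answer). The last tier's answer is always accepted.
    """
    if not tiers:
        raise ValueError("need at least one tier")
    for i, tier in enumerate(tiers):
        answer, confidence = tier.call(prompt)
        if confidence >= threshold or i == len(tiers) - 1:
            return tier.name, answer
    raise AssertionError("unreachable")


# Stand-ins for real model clients, for demonstration only.
def fake_cheap(prompt: str) -> Tuple[str, float]:
    # Pretend the small model is only confident on short prompts.
    return "cheap answer", (0.9 if len(prompt) < 50 else 0.3)


def fake_strong(prompt: str) -> Tuple[str, float]:
    return "strong answer", 0.95


tiers = [Tier("mini", fake_cheap), Tier("sonnet", fake_strong)]
print(cascade("What are your hours?", tiers))  # ('mini', 'cheap answer')
print(cascade("Explain " + "x" * 100, tiers))  # ('sonnet', 'strong answer')
```

One design caveat: an escalated request pays for every tier it passes through, so a cascade saves money only when most traffic stops at the first tier.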

Infrastructure: The Silent Budget Killer

You can’t avoid infrastructure if you want reliability. But you can control it.

USM Systems tracked 12-month deployments in 2026 and found:

- Small dev environments (2-4 CPUs, 1 GPU): $1,500-$3,000/month

- Medium production (8-16 CPUs, 2-4 GPUs): $8,000-$15,000/month

- Large enterprise (32+ CPUs, 8+ GPUs): $23,000-$45,000/month

- Model training clusters (16+ high-end GPUs): $35,000-$65,000/month

Here’s what most teams don’t realize: if you’re spending $35,000/month on a training cluster, you’re probably doing it wrong. Unless you’re building a proprietary model for a unique domain like medical diagnostics or legal contract analysis, you don’t need to train from scratch. Use a foundation model. Fine-tune it with LoRA. That cuts your infrastructure cost from $65,000/month to $15,000/month.
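Why is LoRA so much cheaper than full fine-tuning? Instead of updating a full weight matrix, it trains two thin low-rank matrices whose product is the update. Here is a back-of-the-envelope sketch of the trainable-parameter count for one matrix. The 4096 x 4096 shape and rank 8 are illustrative choices (4096 is the hidden size of several 7B-class models), not figures from the deployments above:

```python
def full_finetune_params(d: int, k: int) -> int:
    """Trainable parameters when updating a d x k weight matrix directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a LoRA update B @ A, with B: d x r and A: r x k."""
    return r * (d + k)


d, k, r = 4096, 4096, 8  # illustrative matrix shape and LoRA rank
full = full_finetune_params(d, k)
lora = lora_params(d, k, r)
print(full, lora, lora / full)  # 16777216 65536 0.00390625
```

Far fewer trainable parameters means far less gradient and optimizer-state memory, which is a large part of why LoRA runs fit on much smaller hardware. The frozen base weights still have to be loaded.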

And don’t forget: reserved instances, spot instances, and auto-scaling can cut your cloud bill by 40-60%. If you’re paying full price for GPUs 24/7, you’re leaving money on the table.
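To see what that range means in dollars, here is the arithmetic applied to the $15,000/month medium production tier from the list above. This is a minimal sketch, assuming the discount applies to the whole bill. Real savings depend on the provider, the commitment term, and how much interruption your workload tolerates on spot capacity:

```python
def discounted_monthly_bill(on_demand_monthly: float, discount: float) -> float:
    """Apply a fractional discount (0.4 means 40% off) to an on-demand bill."""
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return on_demand_monthly * (1.0 - discount)


base = 15_000  # upper bound of the medium production tier
for d in (0.40, 0.60):
    print(f"{d:.0%} off: ${discounted_monthly_bill(base, d):,.0f}/month")
# 40% off: $9,000/month
# 60% off: $6,000/month
```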

Development Costs: How Much Does It Really Cost to Build?

You can’t just plug in an API and call it done. Someone has to build the system. Someone has to connect it to your database. Someone has to monitor it when it breaks at 3 a.m.

According to CodeWave and Kellton’s 2026 data:

- Basic AI solution (simple chatbot): $20,000-$80,000, 1-3 months

- Intermediate (context-aware chat, document processing): $50,000-$150,000, 3-6 months

- Advanced (multi-turn, sentiment, real-time analytics): $100,000-$300,000, 6-9 months

- Enterprise platform (multi-language, voice, omnichannel): $250,000-$1,000,000+, 9-18 months

And staffing? You’re not just paying for developers. You need:

- Data Scientists: $120,000-$180,000/year

- MLOps Specialists: $125,000-$190,000/year

Hiring one of each costs roughly $250,000 or more a year in salary just to keep the system running. If your monthly infrastructure cost is $15,000, that’s $180,000/year. Add staff? You’re now spending $430,000/year. That’s why AI-as-a-service is becoming so popular. For most companies, paying $1,000/month for a managed solution is cheaper than hiring two specialists.
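The same comparison works as a quick annual calculation. This sketch uses the low end of both salary ranges above ($120,000 + $125,000 = $245,000, slightly below the rounded $250,000), the $15,000/month infrastructure figure, and a $1,000/month managed service. The function names are illustrative:

```python
from typing import Sequence


def annual_in_house_cost(monthly_infra: float, annual_salaries: Sequence[float]) -> float:
    """Twelve months of infrastructure plus a year of salaries."""
    return 12 * monthly_infra + sum(annual_salaries)


def annual_managed_cost(monthly_fee: float) -> float:
    """Twelve months of a flat managed-service fee."""
    return 12 * monthly_fee


in_house = annual_in_house_cost(15_000, [120_000, 125_000])  # data scientist + MLOps, low end
managed = annual_managed_cost(1_000)
print(f"in-house: ${in_house:,}/year, managed: ${managed:,}/year")
# in-house: $425,000/year, managed: $12,000/year
```

Even the top of the managed range quoted later ($5,000/month, or $60,000/year) stays well under the in-house total.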

How to Cut Costs Without Losing Quality

Here’s what works in 2026:

- Use model cascading. Route 70% of requests to cheap models (Haiku, GPT-4o Mini). Reserve expensive ones (GPT-4 Turbo, Claude Opus) for the 10-20% that need deep reasoning.

- Adopt LoRA fine-tuning. A full fine-tune costs $20,000+ in compute. LoRA? $300. You get 95% of the performance for a small fraction of the compute cost.

- Don’t train custom models. Unless you’re in a niche like pharmaceutical research or military logistics, you don’t need to. Use pre-trained models. They’re better, faster, and cheaper.

- Quantize your models. Converting a 13B model from 16-bit to 8-bit weights roughly halves the memory its weights need (from about 26 GB to about 13 GB). That means you can run it on half the GPUs.

- Audit your data before you start. Bad data leads to expensive retraining. If you’re paying $80,000 to annotate 100,000 legal documents, make sure they’re clean first.
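The memory math behind quantization is simple: weight memory is roughly parameter count times bytes per parameter. Here is a minimal sketch for the 13B example in the list above. It counts weights only, so real GPU requirements will be higher once the KV cache, activations, and runtime overhead are included, and the exact percentage saved depends on which precision you start from:

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for the weights alone, in GB (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("fp16", "int8", "int4"):
    print(dtype, weight_memory_gb(13e9, dtype))
# fp16 26.0
# int8 13.0
# int4 6.5
```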

Stratagem Systems analyzed 127 deployments in 2026. Teams that followed these five steps reduced their monthly costs by 86-90%. Not by using cheaper clouds. By using smarter architecture.

ROI Timelines: When Will You Break Even?

Costs aren’t just about money. They’re about time.

- Basic AI: ROI in 6-10 months

- Intermediate: 8-14 months

- Advanced: 12-18 months

- Enterprise: 14-24 months
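A break-even month count is just upfront cost divided by net monthly benefit. Here is a minimal sketch with made-up inputs for a basic chatbot (a $50,000 build, $8,000/month in savings, $1,000/month to run). Swap in your own numbers; none of these come from the article's data:

```python
import math


def breakeven_months(upfront_cost: float,
                     monthly_benefit: float,
                     monthly_run_cost: float) -> float:
    """Months until cumulative net benefit covers the upfront build cost.

    Returns math.inf if the system never pays for itself.
    """
    net_per_month = monthly_benefit - monthly_run_cost
    if net_per_month <= 0:
        return math.inf
    return upfront_cost / net_per_month


# Hypothetical basic chatbot.
months = breakeven_months(50_000, 8_000, 1_000)
print(f"break-even after {months:.1f} months")  # break-even after 7.1 months
```

The result is very sensitive to the monthly benefit, which is the number you can only measure with real users.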

Notice a pattern? The more complex the system, the longer it takes to pay for itself. And the higher the failure rate. Basic AI solutions succeed 75-85% of the time. Enterprise platforms? Only 45-60%. That’s not because the tech is bad. It’s because complexity multiplies risk.

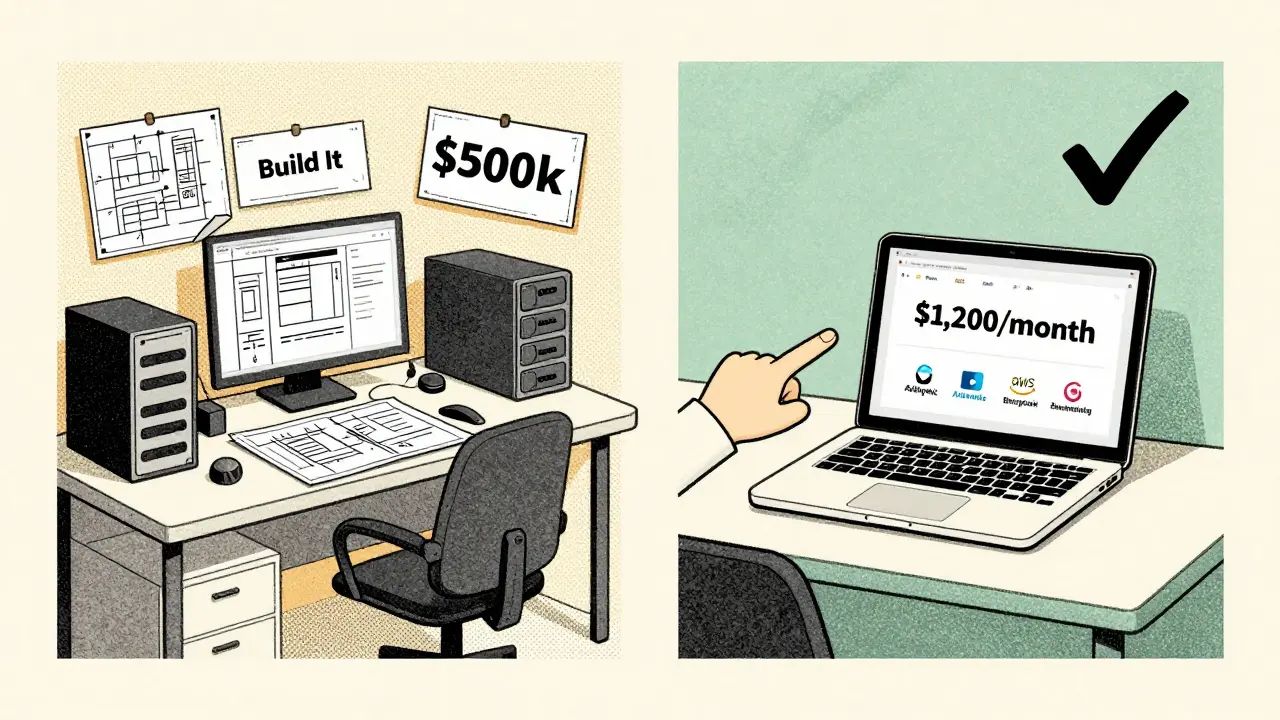

That’s why starting small matters. Build a prototype with API calls. Test it with 100 users. Measure the ROI. Then scale. Don’t spend $500,000 on a custom platform before you know if your users even want it.

Build vs. Buy: The Real Choice

The biggest question isn’t "How much does it cost?" It’s "Should you even build it?"

Managed AI services (like Inkeep, Anthropic Cloud, or AWS Bedrock) now offer:

- Pre-built chatbots

- Document summarization tools

- Code assistants

- Custom fine-tuning via API

For $1,000-$5,000/month, you get enterprise-grade performance without hiring a team. You get uptime SLAs. You get automatic updates. You get support.

Custom development only makes sense if:

- You have proprietary data that can’t be shared

- Your domain is too niche for public models (e.g., legal contracts in a specific jurisdiction)

- You need real-time learning from live user feedback

For 90% of businesses? Managed services are the smarter choice. You save money. You save time. You save headaches.

What’s the average monthly cost for a production LLM app in 2026?

There’s no average; it depends entirely on architecture. A basic chatbot using optimized model routing can cost as little as $200/month. A full enterprise system with custom infrastructure and staff can cost $540,000/month. Most small to medium businesses fall between $1,000 and $15,000/month.

Is using GPT-4 Turbo for everything a bad idea?

Yes, almost always. GPT-4 Turbo is powerful, but it’s also 10-20x more expensive than models like Claude Haiku or GPT-4o Mini. Most user requests, like answering FAQs, summarizing short texts, or tagging documents, don’t need deep reasoning. Routing those to cheaper models cuts your bill by 80-90% without sacrificing quality.

Can I save money by training my own model?

Only if you have unique data and a very specific need. Training a 6B-parameter model costs $20,000-$40,000/month in compute alone. LoRA fine-tuning on a pre-trained model achieves nearly the same performance for $300-$12,500 total. Unless you’re in a highly regulated or proprietary field, skip training and fine-tune instead.

How much does infrastructure really cost compared to API calls?

In typical production systems, infrastructure is about 95% of the cost and API calls only about 5%. That means even if you cut your API spending in half, you won’t save much unless you also optimize your GPU usage, scaling, and model serving. Hosting your own models on AWS or Azure with proper auto-scaling is often cheaper than paying for high-volume API calls at premium rates.
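Whether self-hosting beats the API comes down to volume. Here is a minimal sketch of the break-even point, assuming a fixed monthly self-hosting bill that can absorb the traffic and a single blended API price per million tokens. The $15,000 and $10/million figures are hypothetical, and staffing costs are left out:

```python
def breakeven_tokens_per_month(self_host_monthly: float,
                               api_price_per_million: float) -> float:
    """Monthly token volume at which a flat self-hosting bill equals API spend.

    Below this volume the API is cheaper; above it (and within the
    hardware's capacity) self-hosting is cheaper.
    """
    return self_host_monthly / api_price_per_million * 1_000_000


tokens = breakeven_tokens_per_month(15_000, 10.0)
print(f"{tokens:,.0f} tokens/month")  # 1,500,000,000 tokens/month
```

Under these assumptions, the fixed bill only starts to win above roughly 1.5 billion tokens a month; below that, paying per token is the cheaper path.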

What’s the cheapest way to get started with LLMs?

Use a managed AI service like Anthropic Cloud or AWS Bedrock. Start with a simple chatbot using Haiku or GPT-4o Mini. Handle 1,000-5,000 requests/month. Measure user satisfaction and cost. Then scale. You can build a working prototype for under $200/month and avoid $100,000+ in development costs.

kelvin kind

24 March, 2026 - 09:48 AM

I've seen so many teams blow $20k/month on GPT-4 Turbo for FAQ bots. Just use Haiku. It's like using a Ferrari to deliver pizza. You can, but why?

Simple.

Ian Cassidy

25 March, 2026 - 10:59 PM

The 95% infrastructure cost stat is wild. Most people think AI = API calls. Nah. It's GPU uptime, auto-scaling groups, and monitoring alerts at 3 a.m. that kill you. I've had teams run 4x A100s on spot instances and cut costs by 60%. Still got 99.8% uptime. The magic is in the architecture, not the model.

Ananya Sharma

27 March, 2026 - 6:59 PM

You're all missing the point. This whole 'model cascading' nonsense is just corporate gaslighting. Why not just admit that AI is a $10 billion Ponzi scheme built on hype and cloud credits? Companies aren't saving money; they're just outsourcing their delusion to Anthropic and OpenAI. You think Haiku is cheaper? It's just the new 'lite' version of the same scam. And don't get me started on LoRA fine-tuning. That's not innovation; it's duct tape on a nuclear reactor. You're not building AI. You're just shuffling tokens through a black box and calling it 'cost-efficient.'

Sarah McWhirter

29 March, 2026 - 04:15 AM

I love how everyone acts like this is some groundbreaking insight. I've been running LLMs since 2022. We used to pay $500k/month on GPT-4 Turbo for customer service. Then we switched to Haiku for 80% of queries. Saved $420k. Then we realized our data was garbage. So we cleaned it. Saved another $180k. Then we realized our MLOps guy was asleep at the wheel. He got fired. Now we pay $1,200. And yes, I still get emails at 2 a.m. asking why the bot said 'I love you' to a customer. But hey, ROI is 11 months. That's better than my dating life. 😘

Ben De Keersmaecker

30 March, 2026 - 08:12 AM

The distinction between API costs and infrastructure is crucial, but rarely understood. Most engineers fixate on per-token pricing because it’s quantifiable and visible. But infrastructure isn’t just about GPUs; it’s about latency, tail latency, request queuing, model versioning, and rollback protocols. A single unmonitored model drift can cost more than a month’s API bill. And let’s not forget the hidden labor cost: the engineer who stays up for 72 hours because the model started hallucinating product specs as legal disclaimers. That’s not a bug. It’s a systemic failure. Architecture matters because humans are still the final layer of validation.

Paritosh Bhagat

30 March, 2026 - 10:34 PM

I just want to say that this entire post is sooo refreshing! 🙌 I’ve been screaming from the rooftops that companies are wasting money on GPT-4 Turbo for simple tasks. I work in a call center in Pune, and we used to have 12 agents handling 300 queries a day. Now? One Haiku bot. 98% accuracy. 95% cost reduction. And guess what? The employees are happier. No more burnout. No more yelling at customers who say 'I just want to talk to a human.' But hey, maybe I’m just too emotional. 😢

Zach Beggs

31 March, 2026 - 01:39 AM

This is spot on. We did the exact same thing at my company. Started with GPT-4 Turbo. Bill hit $18k. Switched to cascade: Haiku for yes/no, Sonnet for summaries, GPT-4o for code. Now it’s $1,400. No drop in quality. Just better design. Also, spot instances + auto-scaling saved us another 30%. Honestly, if you’re not doing this, you’re just throwing money at a black box.

Chris Heffron

31 March, 2026 - 3:27 PM

Great breakdown! 😊 One thing you didn’t mention: caching. We cache 60% of repeat queries. That’s like getting free inference. Took us 2 weeks to implement. Saved $800/month. Small win, but it adds up. Also, check your tokenization-some inputs are bloated with whitespace. Clean that up, and you’ll shave off another 10-15%. 💡

Aaron Elliott

2 April, 2026 - 06:17 AM

While the empirical data presented herein is superficially compelling, it remains fundamentally predicated upon a neoliberal techno-optimist framework that obfuscates the deeper epistemological crisis of algorithmic governance. The assertion that cost reduction equates to efficiency is a fallacy of reification: one cannot optimize what one has not first interrogated. The very act of routing queries through cascading models is a form of epistemic violence: a reduction of human discourse to transactional token streams. One must ask: at what cost to meaning? At what cost to dignity? The $200/month chatbot may be economically rational, but is it ethically defensible?