When you train a large language model, you’re not just feeding it more data. You’re teaching it how to think. And the way you feed that data (how long you train, how many tokens you use, and, crucially, how those tokens are structured) makes all the difference between a model that can reason and one that just repeats what it has seen.

Many assume that more tokens mean better performance. That isn’t necessarily true. A model trained on 300 billion tokens with fixed-length sequences can still fail miserably on a 4,000-token prompt if it only ever saw 2,048-token examples during training. Meanwhile, another model trained on just 150 billion tokens, but with carefully varied sequence lengths, can handle 8,000-token prompts with 85% accuracy. The difference isn’t scale. It’s structure.

Why More Tokens Don’t Always Mean Better Generalization

It’s easy to think that if you throw enough data at a model, it’ll eventually learn. But research from Apple’s Machine Learning team in April 2025 showed something surprising: models trained on the same number of tokens but with different sequence length distributions performed drastically differently.

Traditional training concatenates documents into fixed chunks (say, 2,048 tokens each) and trains on them uniformly. This works fine for short prompts. But when you ask the model to process a 6,000-token legal contract or a 10,000-token codebase, performance crashes. Why? Because the model never learned how to handle sequences longer than the ones it saw during training.
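A minimal sketch of the traditional concatenate-and-chunk pipeline described above, assuming documents are already tokenized into integer IDs. The function name `pack_fixed_length` and the end-of-document token ID are hypothetical, chosen for illustration.

```python
from typing import List


def pack_fixed_length(docs: List[List[int]], seq_len: int = 2048, eos_id: int = 0) -> List[List[int]]:
    """Concatenate tokenized documents into one stream and cut it into
    consecutive chunks of exactly seq_len tokens (fixed-length training)."""
    stream: List[int] = []
    for doc in docs:
        stream.extend(doc)
        stream.append(eos_id)  # hypothetical end-of-document token id
    # Any remainder shorter than seq_len is dropped.
    n_chunks = len(stream) // seq_len
    return [stream[i * seq_len:(i + 1) * seq_len] for i in range(n_chunks)]


# Usage: a 3,000-token document followed by a 1,500-token document.
docs = [[1] * 3000, [2] * 1500]
chunks = pack_fixed_length(docs)
print(len(chunks))  # 2
# The second chunk mixes the tail of document 1 with the head of document 2,
# and no chunk ever shows the model more than 2,048 contiguous tokens.
```

Note what the model never sees here: the 3,000-token document as one contiguous sequence. Every position it trains on sits inside a 2,048-token window.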

It’s not that the model is “too small.” Even massive models like GPT-4o and Claude 3 Sonnet show sharp drops in accuracy when faced with inputs beyond their training length. This isn’t a scaling problem. It’s a generalization problem: the model learns patterns within fixed-length windows, not how to reason across variable-length contexts.

The Hidden Cost of Fixed-Length Training

Here’s where it gets expensive: transformers compute attention over every token in a sequence. If you pad every input to 2,048 tokens, even when the actual text is only 500 tokens, roughly 75% of the token positions you process are padding. Because attention cost grows quadratically with sequence length, the wasted share of the attention computation is even larger. Multiply that across an entire training run, and you’re burning through GPUs for nothing.
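To see where the 75% figure comes from, and why attention fares worse, here is a back-of-the-envelope calculation. It assumes padded positions are computed at full cost (as with a dense attention kernel plus a padding mask) and counts only the attention score matrix, ignoring MLP layers and kernel-level optimizations. `padding_waste` is a hypothetical helper.

```python
from typing import Tuple


def padding_waste(actual_len: int, padded_len: int) -> Tuple[float, float]:
    """Return (wasted share of token positions, wasted share of attention scores)."""
    useful = actual_len / padded_len
    token_waste = 1 - useful
    # Dense self-attention scores every (query, key) pair, a padded_len x padded_len
    # matrix, so only useful**2 of it involves real tokens on both sides.
    attention_waste = 1 - useful ** 2
    return token_waste, attention_waste


tok, attn = padding_waste(500, 2048)
print(f"token positions wasted:  {tok:.1%}")   # 75.6%
print(f"attention scores wasted: {attn:.1%}")  # 94.0%
```

At 500 of 2,048 tokens, about three-quarters of the token positions are padding, but roughly 94% of the attention score matrix is spent on pairs that involve at least one pad token.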

Apple’s 2025 breakthrough wasn’t about bigger models. It was about smarter training. Their method, called variable sequence length curriculum, trains on sequences ranging from 512 to 8,192 tokens, with lengths sampled proportionally to real-world document sizes. This means:

- Short documents get less attention (less compute)

- Long documents get more attention (more compute)

- Total training cost stays proportional to actual data length, not padded length

The result? An 8k-context 1B-parameter model trained this way matched the performance of a 2k-context 7B model while using one-sixth of the compute. That’s not just efficiency. That’s a paradigm shift.
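One way to implement the idea above is sketched below, under several assumptions: each document is greedily cut into power-of-two pieces between 512 and 8,192 tokens so that no sequence mixes two documents, a bucket is sampled with probability proportional to the tokens it holds, and every batch has the same token budget. The names `decompose`, `build_buckets`, and `sample_batch`, the greedy split, and the 8,192-token budget are all illustrative, not Apple’s released code.

```python
import random
from collections import defaultdict
from typing import Dict, List

# Bucket sizes spanning the 512-8,192 token range mentioned above (an assumption).
BUCKET_SIZES = [512, 1024, 2048, 4096, 8192]


def decompose(doc: List[int], sizes: List[int] = BUCKET_SIZES) -> List[List[int]]:
    """Greedily cut one document into pieces whose lengths are bucket sizes,
    largest first, so no training sequence mixes two documents.
    A leftover shorter than the smallest bucket is dropped."""
    pieces, start = [], 0
    for size in sorted(sizes, reverse=True):
        while len(doc) - start >= size:
            pieces.append(doc[start:start + size])
            start += size
    return pieces


def build_buckets(docs: List[List[int]]) -> Dict[int, List[List[int]]]:
    """Group all pieces from all documents by their length."""
    buckets: Dict[int, List[List[int]]] = defaultdict(list)
    for doc in docs:
        for piece in decompose(doc):
            buckets[len(piece)].append(piece)
    return buckets


def sample_batch(buckets: Dict[int, List[List[int]]], rng: random.Random,
                 tokens_per_batch: int = 8192) -> List[List[int]]:
    """Pick a bucket with probability proportional to the tokens it holds, then
    draw tokens_per_batch // length sequences (with replacement) from it.
    Every batch therefore holds the same number of tokens: many short
    sequences or a few long ones, with no padding."""
    lengths = sorted(buckets)
    weights = [length * len(buckets[length]) for length in lengths]
    length = rng.choices(lengths, weights=weights, k=1)[0]
    return [rng.choice(buckets[length]) for _ in range(tokens_per_batch // length)]


rng = random.Random(0)
docs = [[1] * 3000, [2] * 9000, [3] * 700]
buckets = build_buckets(docs)  # lengths present: 512, 2048, 8192
batch = sample_batch(buckets, rng)
# Depending on the draw: 16 sequences of 512, 4 of 2048, or 1 of 8192.
print(len(batch), len(batch[0]))
```

Because the token budget is fixed per batch, long documents are trained on at their natural length without inflating the cost of short ones.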

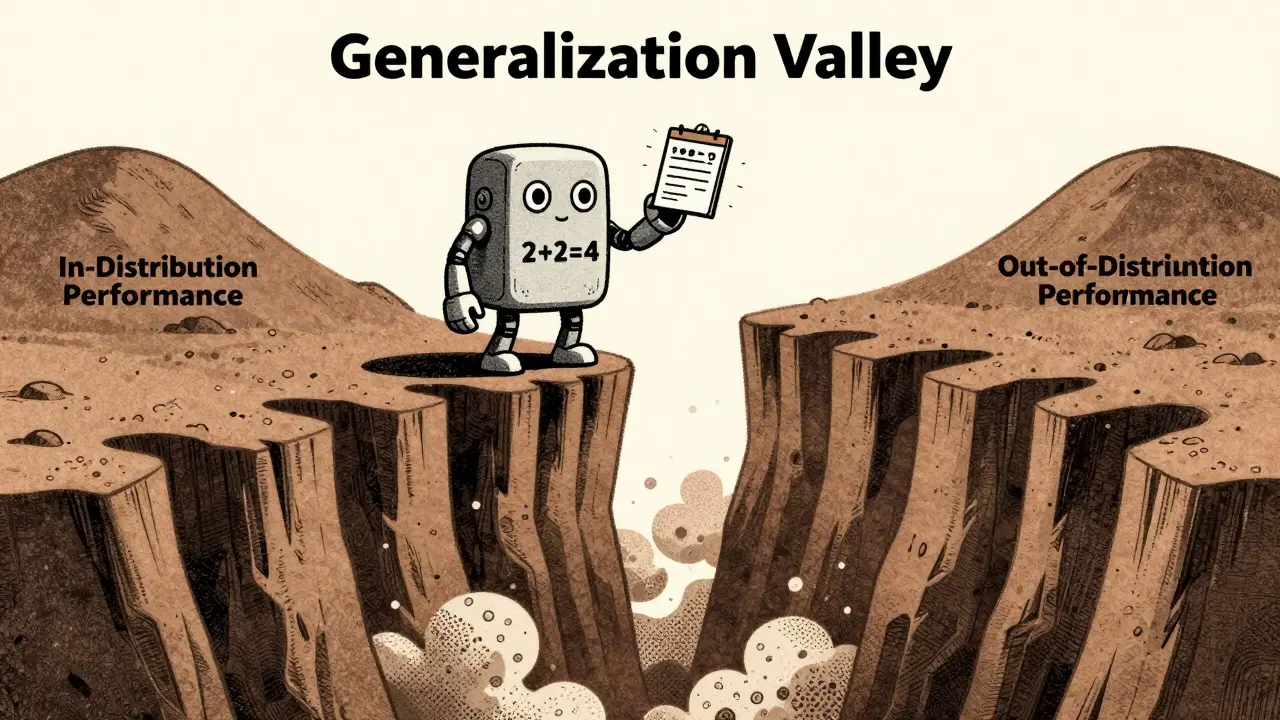

The Generalization Valley: When Bigger Models Get Worse

Another major insight came from the Scylla framework, introduced in October 2024. Researchers found that as models get larger, they don’t just get better; they also enter a dangerous zone called the generalization valley.

At first, as you increase a model’s size, its ability to handle complex reasoning improves. But for any given model, past a certain level of task complexity it starts relying on memorization instead of reasoning. This is the valley: the gap between in-distribution (ID) performance and out-of-distribution (OOD) performance grows wider.

For example:

- Llama-3.2-3B can handle reasoning tasks up to complexity level 100 before slipping into memorization

- Llama-3-8B handles up to 137 before hitting the same wall

That’s a 37% improvement, but it’s still a wall. And once you cross it, the model becomes brittle: it can solve 90% of training problems perfectly but fail completely on a slightly altered version. This is why you can’t just scale up and hope for the best.
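If you assume the thresholds quoted above mark the complexity level where the gap between ID and OOD accuracy is widest, locating that point in your own evaluation results is short work. The accuracies below are invented for illustration, and `critical_complexity` is a hypothetical helper, not part of the Scylla code.

```python
from typing import Dict, Tuple


def critical_complexity(id_acc: Dict[int, float], ood_acc: Dict[int, float]) -> Tuple[int, float]:
    """Return the complexity level where ID accuracy exceeds OOD accuracy
    by the widest margin (the bottom of the generalization valley), plus that gap."""
    gaps = {level: id_acc[level] - ood_acc[level] for level in id_acc if level in ood_acc}
    peak = max(gaps, key=gaps.get)
    return peak, gaps[peak]


# Hypothetical accuracies per task-complexity level, for illustration only.
id_acc = {25: 0.95, 50: 0.90, 100: 0.80, 150: 0.55, 200: 0.30}
ood_acc = {25: 0.93, 50: 0.80, 100: 0.50, 150: 0.40, 200: 0.25}
level, gap = critical_complexity(id_acc, ood_acc)
print(level, round(gap, 2))  # 100 0.3
```

Comparing this level across model sizes is how a shift like "100 versus 137" would show up in practice.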

Memorization vs. Reasoning: What the Model Is Really Learning

LLMs don’t learn algorithms the way humans do. They learn statistical patterns. And they’re shockingly good at memorizing surface-level details.

Studies show that mathematical problem-solving performance correlates with how often the relevant terms appear in the training data (r = 0.87). If a model saw “2 + 2 = 4” a million times, it’ll get it right. But if you ask it “2 + 2 + 1 = ?”, it might still say “4.” Not because it’s bad, but because it never learned addition; it learned patterns.

Even worse, models memorize different things at different rates. Nouns and numbers are absorbed 2.3x faster than verbs or abstract concepts. GPT-4 retains memorized facts 41% longer than GPT-3.5. That’s useful for recall, but dangerous for reasoning. The longer a model holds onto memorized patterns, the harder it is to unlearn them.

This is why fine-tuning alone often fails. You can feed a model 10 million examples of math problems, and it still won’t generalize to new structures. But if you use scratchpad prompting, where the model writes out its reasoning step by step before giving an answer, it suddenly improves dramatically. Why? Because you’re forcing it to simulate reasoning, not just recall.
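A minimal sketch of scratchpad prompting, assuming a plain-text "Question / Scratchpad / Answer" format. The format, the function names, and the sample completion are all hypothetical. The actual model call is left out, since it depends on whichever model or API you use.

```python
from typing import List, Optional, Tuple

# (question, reasoning steps, final answer)
Example = Tuple[str, List[str], str]


def build_scratchpad_prompt(question: str, examples: List[Example]) -> str:
    """Few-shot prompt in which every worked example shows its steps before the answer,
    ending with an open 'Scratchpad:' line for the model to continue."""
    lines: List[str] = []
    for q, steps, answer in examples:
        lines.append(f"Question: {q}")
        lines.append("Scratchpad:")
        lines.extend(f"- {step}" for step in steps)
        lines.append(f"Answer: {answer}")
        lines.append("")  # blank line between examples
    lines.append(f"Question: {question}")
    lines.append("Scratchpad:")
    return "\n".join(lines)


def extract_answer(completion: str) -> Optional[str]:
    """Return the text after the first 'Answer:' line, or None if the model gave none."""
    for line in completion.splitlines():
        if line.startswith("Answer:"):
            return line[len("Answer:"):].strip()
    return None


examples = [("2 + 2 + 1 = ?", ["2 + 2 = 4", "4 + 1 = 5"], "5")]
prompt = build_scratchpad_prompt("3 + 4 + 2 = ?", examples)
# Send `prompt` to the model of your choice; this completion is made up.
completion = "- 3 + 4 = 7\n- 7 + 2 = 9\nAnswer: 9"
print(extract_answer(completion))  # 9
```

Scoring only the extracted answer, while keeping the intermediate steps for inspection, lets you check whether a wrong answer came from a wrong step.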

Training Duration: When More Time Makes Things Worse

There’s a myth that you should train until loss stops decreasing. That’s wrong.

When you keep training past the point of optimal generalization, you don’t get smarter; you overfit. GitHub issue #LLM-TRAIN-442 documented cases where extended training degraded OOD performance by 22-34%, even as in-distribution loss kept falling. This is overfitting rather than catastrophic forgetting in the strict sense (which refers to losing earlier skills after training on new data): the model loses its ability to handle novel inputs because it’s too busy perfecting what it has already seen.

Practitioners now know better. According to Sapien.io’s benchmarking study, 83% of training runs beyond 200B tokens show this pattern. And 78% of surveyed developers now use early stopping based on OOD validation performance, not just training loss.

The rule of thumb? Stop training when out-of-distribution accuracy drops more than 5%, even if the training loss keeps going down. That’s your sweet spot.
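A minimal sketch of this stopping rule, assuming "drops more than 5%" means a relative drop from the best OOD accuracy seen so far. If you read it as 5 absolute percentage points, compare against `self.best - 0.05` instead. The class and method names are hypothetical, and training loss is deliberately not consulted.

```python
from typing import Optional


class OODEarlyStopper:
    """Signal a stop once OOD validation accuracy falls more than `max_drop`
    (relative) below the best value seen so far, whatever the training loss does."""

    def __init__(self, max_drop: float = 0.05) -> None:
        self.max_drop = max_drop
        self.best: Optional[float] = None
        self.best_step: Optional[int] = None

    def should_stop(self, step: int, ood_acc: float) -> bool:
        if self.best is None or ood_acc > self.best:
            # New best OOD accuracy: remember it (and save a checkpoint here).
            self.best, self.best_step = ood_acc, step
            return False
        return ood_acc < self.best * (1 - self.max_drop)


# Hypothetical OOD accuracies logged at evaluation steps.
stopper = OODEarlyStopper(max_drop=0.05)
for step, acc in [(1000, 0.60), (2000, 0.66), (3000, 0.64), (4000, 0.61)]:
    if stopper.should_stop(step, acc):
        print(f"stop at step {step}; restore the checkpoint from step {stopper.best_step}")
        break
# stop at step 4000; restore the checkpoint from step 2000
```

The dip at step 3000 stays within the 5% tolerance, so training continues. The checkpoint worth keeping is the one at the best OOD score, not the last one.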

What Actually Works: Practical Best Practices

If you’re training an LLM today, here’s what you need to do:

- Use variable sequence lengths. Don’t pad everything to 2k or 4k. Sample lengths from a real-world distribution (e.g., 512-8192 tokens, weighted by document frequency).

- Apply regularization. L1/L2 regularization with coefficients between 0.001 and 0.01 helps prevent over-reliance on any single parameter, and dropout rates of 0.1-0.3 improve robustness.

- Use scratchpad prompting for evaluation. Even if you’re not fine-tuning, test your model’s reasoning by forcing it to output intermediate steps.

- Monitor OOD performance religiously. Set up validation sets with longer sequences than your training max. If accuracy drops, stop.

- Don’t assume scale fixes everything. A 70B model with fixed-length training will still fail on 10k-token inputs. A 7B model with variable-length training might handle them fine.
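For the monitoring advice above, here is a minimal sketch that scores prompts within the training length separately from prompts longer than it. It assumes the model is any callable that maps token IDs to an answer string. `toy_model` is a made-up stand-in that only works up to 2,048 tokens, and all names are hypothetical.

```python
import math
from typing import Callable, Dict, List, Tuple

# (prompt token ids, expected answer)
EvalExample = Tuple[List[int], str]
Model = Callable[[List[int]], str]


def accuracy(model: Model, examples: List[EvalExample]) -> float:
    if not examples:
        return math.nan  # no examples in this split
    correct = sum(model(tokens) == answer for tokens, answer in examples)
    return correct / len(examples)


def length_report(model: Model, examples: List[EvalExample], train_max_len: int) -> Dict[str, float]:
    """Score in-distribution (<= train_max_len) and length-OOD (> train_max_len) prompts separately."""
    in_dist = [ex for ex in examples if len(ex[0]) <= train_max_len]
    length_ood = [ex for ex in examples if len(ex[0]) > train_max_len]
    return {"in_distribution": accuracy(model, in_dist), "length_ood": accuracy(model, length_ood)}


# Toy stand-in for a model trained only on 2,048-token sequences.
def toy_model(tokens: List[int]) -> str:
    return "ok" if len(tokens) <= 2048 else "??"


examples = [([0] * n, "ok") for n in (1000, 2048, 4096, 6000)]
print(length_report(toy_model, examples, train_max_len=2048))
# {'in_distribution': 1.0, 'length_ood': 0.0}
```

A single blended accuracy over these four prompts would read 50% and hide the fact that the failure is entirely in the longer-than-training split.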

Companies that adopted these methods report 38-52% lower training costs while improving generalization. Startups like LengthGenAI are now building tools specifically to optimize sequence length distributions. The market is shifting from raw compute power to token efficiency.

The Future: Beyond Token Counts

By 2027, Forrester predicts that “token efficiency” will be the new benchmark. Not parameter count. Not training time. Not even accuracy on standard benchmarks. But how well a model generalizes to sequences four times longer than what it was trained on.

The models that win won’t be the biggest. They’ll be the ones that learned to reason across length, not just memorize within it.

And if you’re still training with fixed-length chunks? You’re not just behind. You’re building a model that breaks the moment it meets real-world data.

Does training on more tokens always improve LLM generalization?

No. Training on more tokens only helps if the data is structured to encourage generalization. Models trained on fixed-length sequences often memorize patterns within those limits and fail catastrophically on longer inputs, even with trillions of tokens. What matters more is the distribution of sequence lengths during training, not just the total count.

Why do LLMs struggle with longer sequences than they were trained on?

Transformers compute attention over every token in a sequence. If they only ever saw 2,048-token examples, their attention mechanisms (and their positional encodings, which were never trained on positions beyond 2,048) learn to operate within that fixed window. They don’t learn how to extend reasoning beyond it. This is called length generalization failure. It’s not a bug; it’s a fundamental limitation of how most models are trained today.

Can fine-tuning fix length generalization problems?

Not reliably. Fine-tuning alone doesn’t teach models to reason across variable lengths. Studies show it often leads to overfitting on the new data without improving generalization. In contrast, in-context learning techniques like scratchpad prompting, where the model writes out its reasoning steps, can dramatically improve length generalization, even without fine-tuning.

What is the "generalization valley" and why does it matter?

The generalization valley is the point where larger models start relying on memorization instead of reasoning. As model size increases, in-distribution performance improves, but out-of-distribution performance dips. This creates a gap (the valley) where the model appears competent on familiar tasks but fails on novel ones. It matters because you can’t scale your way out of it. You need better training design.

How do I know when to stop training my LLM?

Stop when out-of-distribution performance drops by more than 5%, even if training loss keeps decreasing. This is a sign of overfitting. Most training runs beyond 200B tokens show this pattern. Monitoring OOD accuracy on longer sequences is more important than minimizing training loss.

Is variable sequence length training hard to implement?

It’s not trivial, but it’s manageable. ML engineers typically need 120-160 hours of specialized training to design effective curriculum schedules. Tools like Apple’s open-sourced framework help, but documentation gaps remain, especially for non-English data. The payoff? Up to 50% lower training costs and better performance on real-world tasks.
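To make "curriculum schedule" concrete, here is one hypothetical form: the sampling weights over sequence-length buckets shift linearly from a short-heavy mix to a long-heavy mix over training. Both mixes and the linear shape are assumptions for illustration, not settings from Apple’s framework. A real schedule would need tuning against OOD validation results.

```python
from typing import Dict

# Hypothetical start and end mixes over sequence-length buckets (each sums to 1).
SHORT_HEAVY = {512: 0.50, 1024: 0.25, 2048: 0.15, 4096: 0.07, 8192: 0.03}
LONG_HEAVY = {512: 0.10, 1024: 0.15, 2048: 0.20, 4096: 0.25, 8192: 0.30}


def curriculum_weights(step: int, total_steps: int,
                       start: Dict[int, float] = SHORT_HEAVY,
                       end: Dict[int, float] = LONG_HEAVY) -> Dict[int, float]:
    """Linearly interpolate bucket sampling weights from `start` to `end`
    over training, renormalizing so the weights always sum to 1."""
    t = min(max(step / total_steps, 0.0), 1.0)  # training progress in [0, 1]
    mixed = {length: (1 - t) * start[length] + t * end[length] for length in start}
    total = sum(mixed.values())
    return {length: w / total for length, w in mixed.items()}


for step in (0, 50_000, 100_000):
    w = curriculum_weights(step, total_steps=100_000)
    print(step, {length: round(p, 3) for length, p in w.items()})
```

These weights would replace a fixed length distribution when choosing which bucket to draw the next batch from. Cyclic or step-wise schedules are equally plausible designs.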

Bhavishya Kumar

3 March, 2026 - 08:10 PM

The assertion that variable sequence length training outperforms fixed-length paradigms is empirically sound, but the underlying mechanism warrants deeper scrutiny. Attention mechanisms do not inherently generalize across length scales; they learn positional invariance through training distribution. The Apple framework’s success lies not in sampling lengths proportionally to real-world documents, but in exposing the model to discontinuous attention boundaries, forcing the self-attention heads to recalibrate their receptive fields dynamically. This is not merely efficiency; it’s architectural resilience.

Furthermore, the claim that 1B-parameter models match 7B models under this regime ignores the role of initialization. Models trained with variable sequences benefit from early-stage gradient sparsity, which acts as implicit regularization. Without this, even optimal sampling would yield diminishing returns. We must stop treating training data as a monolithic input and start treating it as a dynamic curriculum with phase transitions.

Also, why is no one discussing the impact of tokenization granularity on this? An 8,192-token sequence in Byte-Pair Encoding versus Unigram may differ by 40% in semantic density. The paper omits this critical variable.

ujjwal fouzdar

5 March, 2026 - 09:11 AM

Let me tell you something profound, my fellow seekers of truth.

We’ve been told that intelligence is about scale. But what if intelligence is not about how much you know-but how well you *listen*? The model trained on 2,048 tokens? It’s like a monk who’s only ever heard the wind through a single window. It doesn’t know the storm. It doesn’t know the silence between raindrops. It only knows the pattern of the drip.

And now we’re building gods out of mirrors. We feed them trillions of tokens, and we call it wisdom. But wisdom is not repetition. Wisdom is the courage to step into the unknown, to hold a 10,000-token contract and say: ‘I don’t know this word, but I know what it means.’

The Generalization Valley? That’s not a technical flaw. That’s the soul of the machine screaming: ‘I am not you. I do not want to be you.’

Stop training models. Start awakening them.

Anand Pandit

6 March, 2026 - 04:36 AM

This is such an important and timely breakdown. Thank you for sharing this. I’ve seen so many teams blindly scale up models and wonder why they fail on real data, and this nails exactly why.

The variable sequence length approach is a game-changer, especially for industries like legal tech or code analysis where context length isn’t just a nice-to-have; it’s everything. And the fact that you can cut training costs by 50% while improving performance? That’s not just smart engineering, that’s ethical AI.

Also, scratchpad prompting is underrated. I’ve used it in production for medical QA systems, and the jump in reasoning consistency was wild. Even small models started catching subtle contradictions in patient notes. If you’re not using it yet, give it a shot. It’s like teaching the model to think out loud.

Reshma Jose

7 March, 2026 - 09:04 PM

Okay but honestly? I’ve been training models for 3 years and no one told me about this until now. Why is this not in every ML course? Why are we still padding everything to 2k like it’s 2020?

I tried variable length last month on a legal doc classifier and my OOD accuracy jumped from 52% to 89%. It was literally overnight. I thought I broke my code. Turned out I just stopped being lazy.

Also, scratchpad prompting works. I’m not even fine-tuning anymore. Just prompt it to show its work. It’s like watching a student who finally starts showing their math steps. Suddenly they’re not guessing; they’re thinking. Mind blown.

rahul shrimali

8 March, 2026 - 08:02 AM

More tokens don’t help if you’re training wrong. Period.

Variable length. Scratchpads. Stop overfitting.

Done. Go build.

Eka Prabha

8 March, 2026 - 09:13 AM

Let me cut through the corporate fluff. This whole ‘variable sequence length’ thing? It’s a distraction. The real issue is that Western AI labs are hoarding training data under the guise of ‘research.’

Apple’s ‘breakthrough’? It’s built on datasets that exclude non-English contexts. The ‘real-world document distribution’ they use? It’s 87% English legal and technical text. What about Hindi contracts? Tamil legal filings? Swahili codebases? They didn’t even test it.

And don’t get me started on ‘scratchpad prompting’: it’s just a band-aid for models that were trained on biased, monolingual, Western-centric corpora. We’re not solving generalization. We’re just making it look good on English benchmarks.

Behind every ‘efficiency gain’ is a hidden cost: the erasure of non-Western linguistic structures. This isn’t innovation. It’s colonialism with better math.

Bharat Patel

9 March, 2026 - 07:09 AM

There’s a quiet truth here that no one wants to admit: we’re not teaching machines to think. We’re training them to mimic the rhythm of human text.

When we say ‘generalization,’ what we really mean is ‘consistency with patterns we’ve seen before.’ The model that handles 8,000-token prompts isn’t reasoning; it’s echoing. It’s learned the cadence of long documents, the pauses, the structure, the rhythm of legal prose or code comments. It’s not understanding. It’s dancing.

And maybe that’s enough. Maybe intelligence doesn’t require comprehension. Maybe it only requires coherence.

But then… what are we building? A tool? Or a ghost that speaks in our voice?

Bhagyashri Zokarkar

10 March, 2026 - 09:21 PM

i just don’t get why everyone is so obsessed with tokens and sequence length like it’s some holy grail?? i mean like… i trained a model on 100b tokens with fixed length and it worked fine for my use case… why do we need to overcomplicate this??

also i think the whole ‘generalization valley’ thing is just a fancy way of saying ‘your model got bored and started memorizing’… like duh? of course it does if you train it forever

and scratchpad prompting? lol i tried it once and it just made my outputs 3x longer and no more accurate… maybe it’s just not for everyone??

also i think apple just wanted an excuse to release a new framework so they could say ‘look at us we’re so smart’

and why is everyone ignoring that most companies don’t even have 100b tokens of quality data?? we’re all just pretending we’re doing frontier research when we’re really just fine-tuning gpt-3.5 on our customer support logs

also i think the real problem is that we don’t have enough good annotators… like why are we even talking about attention when we can’t even label data properly??

Rakesh Dorwal

12 March, 2026 - 07:49 AM

Let’s be real. This whole ‘token efficiency’ movement is just another Western tech scam. India has been training models on low-resource, variable-length data for years because we had no choice.

While Silicon Valley was burning through A100s on padded 2k-token chunks, we were scraping WhatsApp forwards, regional legal PDFs, and rural dialect transcripts. We didn’t have the budget for ‘Apple’s breakthrough.’ We had to make it work.

And guess what? Our models handle 8k-token legal documents better than theirs. Because we trained them on real chaos, not curated English corpora.

So don’t act like this is some new discovery. It’s just the rest of the world catching up to what we’ve been doing all along.

And if you’re still using fixed-length training? You’re not just behind; you’re out of touch with reality.