Most large language models (LLMs) are trained on massive, randomly shuffled datasets. But what if the order in which the model sees data matters more than the size of the dataset? Curriculum learning challenges the default of random shuffling. Instead of throwing everything at the model at once, it teaches the model the way you’d teach a student: start simple, then gradually increase complexity. The result, when the data suits it? Better performance, faster convergence, and less wasted compute.

Why Random Shuffling Isn’t Enough

For years, the standard practice in LLM training was simple: feed millions of text samples into the model in random order. It worked, but inefficiently. Hard examples arrived before the model had the basics to learn from them, while easy ones kept reappearing long after they stopped teaching anything. Worse, models could overfit to noisy, low-quality data before learning the core patterns. Think of it like teaching a child math. You wouldn’t start with calculus. You’d start with counting, then addition, then multiplication. Curriculum learning applies that same logic to LLMs. It’s not about more data. It’s about better sequencing.

How Curriculum Learning Works

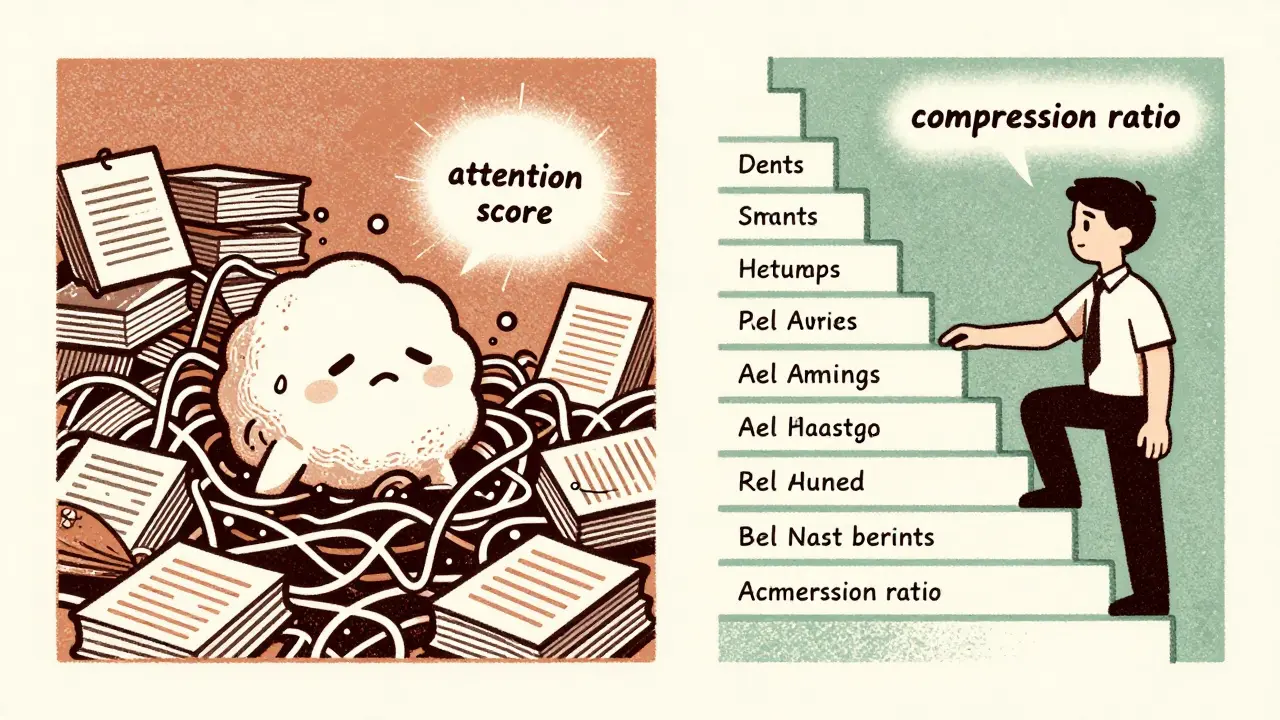

Curriculum learning structures training data based on measurable difficulty. This isn’t guesswork. Researchers use concrete metrics to rank each sample:

- Prompt length: shorter prompts are often easier

- Attention scores: how much the model focuses on key parts of the input

- Loss values: how hard the model struggles to predict the next token

- Data compression ratio: simple patterns compress better, so they’re flagged as easier
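The two model-free metrics in this list can be computed directly on raw text. Here is a minimal Python sketch, assuming whitespace splitting as a stand-in for a real tokenizer and `zlib` as the compressor. The function names are hypothetical, and the sort key (compression ratio first, prompt length as tie-breaker) is an illustrative choice rather than a documented recipe. Attention- and loss-based scores need a forward pass through a model and aren’t shown here.

```python
import zlib


def compression_ratio(text: str) -> float:
    """Compressed size divided by raw size; lower means more repetitive, predictable text."""
    raw = text.encode("utf-8")
    if not raw:
        return 0.0
    return len(zlib.compress(raw, 9)) / len(raw)


def prompt_length(text: str) -> int:
    """Whitespace token count, a cheap stand-in for a real tokenizer (assumption)."""
    return len(text.split())


def order_easy_to_hard(samples: list[str]) -> list[str]:
    """Sort samples by compression ratio, breaking ties with prompt length."""
    return sorted(samples, key=lambda s: (compression_ratio(s), prompt_length(s)))
```

One caveat: zlib adds fixed header and checksum bytes, so very short strings can get a ratio above 1.0 and look "hard". In practice you would compare samples of similar length or correct for that overhead.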

Attention-Based Curriculum: The Surprising Winner

One of the most effective methods uses attention scores. In experiments with Mistral-7B and Gemma-7B, ordering the data by how much attention the model paid to certain tokens produced the highest accuracy. On the orca-math dataset, the attention-based curriculum reached 67.54% accuracy after just two epochs; on slimorca-dedup, it reached 66.87% after three epochs. That’s a clear edge over random shuffling. Why does attention work so well? Because it reflects what the model actually finds hard. If the model keeps focusing on the same words over and over, those are likely the parts it doesn’t understand yet. Delaying those examples lets the model build foundational skills first.

Joint Curriculum: Growing the Model and the Data Together

The smartest curricula don’t just change the data; they change the model too. A joint model and data curriculum trains the model in stages, increasing both its size and the complexity of the data at the same time. For example, a model might start at 100 million parameters, trained on simple instruction-response pairs. After a few epochs, it grows to 500 million parameters, and the data switches to multi-step reasoning problems. Finally, it reaches 1.3 billion parameters and faces the full, noisy dataset. This approach, called Curriculum-Guided Layer Scaling (CGLS), improved zero-shot accuracy by 1.7% on average, with gains of up to 5% on easier question-answering tasks. Crucially, it did all this with the same compute budget as training a full-sized model from scratch.
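The staged schedule above can be written down as plain data. This Python sketch assumes two epochs per stage (the text only says "a few") and uses hypothetical data-tier names. It only decides which model size and data slice are active at a given epoch. Actually growing the network between stages, presumably by adding layers given the name CGLS, is left out because the mechanism isn’t described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    params: int      # target model size for this stage
    data_tier: str   # hypothetical label for the slice of data used in this stage
    epochs: int      # assumed epoch count; the article only says "a few"


SCHEDULE = [
    Stage(100_000_000, "simple_instruction_response", 2),
    Stage(500_000_000, "multi_step_reasoning", 2),
    Stage(1_300_000_000, "full_noisy_corpus", 2),
]


def stage_for_epoch(epoch: int, schedule: list[Stage] = SCHEDULE) -> Stage:
    """Return the stage active at a zero-based global epoch index."""
    if epoch < 0:
        raise ValueError("epoch must be non-negative")
    boundary = 0
    for stage in schedule:
        boundary += stage.epochs
        if epoch < boundary:
            return stage
    # Past the end of the schedule: keep training at the final stage.
    return schedule[-1]
```

For example, `stage_for_epoch(2)` returns the 500-million-parameter stage, so a training loop could check it at each epoch boundary and grow the model or swap the data loader whenever the stage changes.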

Managing Diversity: Too Much Too Soon

Data isn’t just hard or easy; it’s also diverse. A dataset might include formal reports, social media posts, code snippets, and poetry. Introducing all of these at once can overwhelm the model. Instead, effective curricula control diversity over time. Start narrow: train on one language, one genre, one style. Let the model master common patterns, then slowly add more variety. For multilingual models, this means starting with high-resource languages like English or Spanish, where clean data is plentiful, before adding low-resource languages like Swahili or Kurdish. Early experiments showed that models trained this way generalized better across all languages than those exposed to everything at once. Some teams also use entropy to measure diversity: they start with low-entropy data (predictable, consistent) and gradually increase entropy (noisier, more varied). Raising entropy gradually keeps the model from getting stuck in one mode too early.

Dynamic Curricula: Letting the Model Lead

Static curricula, where the order is fixed ahead of time, work well. But the next leap is dynamic curriculum learning, where the model’s own performance decides what it learns next. The CAMPUS system uses bandit algorithms to pick the next batch of data. If the model’s loss on a certain type of example drops below a threshold, it moves on; if it keeps struggling, it repeats that type. This adaptive approach outperformed fixed curricula on instruction-following benchmarks. It’s like a tutor adjusting the lesson plan in real time: no rigid syllabus, just what the model needs right now.

When Curriculum Learning Doesn’t Help

It’s not magic. In some cases, curriculum learning made performance worse. For example, when training Gemma-7B on the Alpaca dataset, random shuffling gave the best accuracy (64.10%). Why? Alpaca’s data was already well mixed: the examples didn’t separate into clear difficulty levels, so there was no structure to exploit, and curriculum ordering added complexity without benefit. The lesson? Curriculum learning pays off when your data has natural layers of complexity. Mathematical reasoning, code generation, and structured reasoning tasks benefit most. General web text? Maybe not.

Efficiency Gains: Less Data, Better Results

One of the biggest surprises? Curriculum learning can cut training costs dramatically. The SPaRFT method reduced sample usage by 100x during reinforcement learning from human feedback (RLHF). That’s not a 10% improvement; it’s a 99% drop in the data needed. Variable sequence length training also helps. Instead of padding every input to 32k tokens, models start with 512-token sequences and grow gradually. Because attention cost grows quadratically with sequence length, the short early stages avoid paying the long-context price for the whole run. You’re not just training smarter. You’re training leaner.

AI as the Teacher: The Future of Curriculum

The biggest innovation? Letting AI design the curriculum. The CITING framework uses one LLM to generate training sequences for another. The teacher model analyzes which examples are most useful, orders them by difficulty, and even writes new prompts to fill gaps. This removes the bottleneck of human-curated instruction datasets. It’s not science fiction. Researchers at Stanford and Google have already shown this works. The student model learns faster, and the teacher model improves its own reasoning in the process.

What’s Next?

Curriculum learning is no longer experimental. It’s becoming standard. Projects like WavLLM and AutoWebGLM now bake it into their training pipelines. The next generation of LLMs won’t just be bigger; they’ll be smarter because they were taught in the right order. The future of training isn’t more data. It’s better sequencing. Like a musician practicing scales before improvising, the best models will learn step by step, not all at once.

Does curriculum learning work for all types of LLM training?

No. Curriculum learning works best when the dataset has clear difficulty levels-like math problems, code generation, or structured reasoning tasks. For general web text or already well-mixed datasets like Alpaca, random shuffling can perform just as well or better. The key is whether the data can be meaningfully ordered by complexity.

How do you measure the difficulty of training data?

Difficulty is measured using several metrics: prompt length (shorter = easier), attention scores (how the model’s attention is distributed over the input), loss values (high loss = harder), and data compression ratios (easier patterns compress better). Model-based scores such as attention and loss are computed in preliminary training runs, and the dataset is sorted before full-scale training begins.
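For the loss metric, a common proxy is the mean per-token negative log-likelihood (NLL) that a reference model assigns to each sample. In this Python sketch, the per-token probabilities are assumed to come from a preliminary pass of such a model (here they’re hand-written numbers), and the function names are hypothetical.

```python
import math


def mean_token_nll(token_probs: list[float]) -> float:
    """Average negative log-likelihood of the reference tokens; higher means harder.

    Each probability is what the reference model assigned to the correct next token,
    so every value must be in (0, 1]; math.log raises ValueError on 0.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    return -sum(math.log(p) for p in token_probs) / len(token_probs)


def sort_by_loss(samples: dict[str, list[float]]) -> list[str]:
    """Return sample ids ordered from lowest to highest mean loss (easy to hard)."""
    return sorted(samples, key=lambda sid: mean_token_nll(samples[sid]))


probs = {"easy": [0.9, 0.8, 0.95], "hard": [0.2, 0.1, 0.3], "medium": [0.6, 0.5, 0.7]}
print(sort_by_loss(probs))  # ['easy', 'medium', 'hard']
```

Since exp(mean NLL) is the sample’s perplexity, sorting by perplexity gives the same order.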

Can curriculum learning reduce training costs?

Yes, sometimes dramatically. SPaRFT, for example, reduced sample usage during RLHF by two orders of magnitude (100x), a 99% cut in the data needed. Variable sequence length training also avoids expensive long-context computation by gradually increasing input length, saving memory and compute.
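The long-context saving is easy to check with arithmetic. The attention score matrix for a sequence of n tokens has n² entries, so going from 512 to 32,768 tokens (64x longer) multiplies that term by 64² = 4,096. The Python sketch below assumes a doubling schedule between those two lengths; the article only says sequences "grow gradually", so the doubling rule and the function names are illustrative.

```python
def length_schedule(start: int = 512, end: int = 32_768) -> list[int]:
    """Double the context length at each stage until the target length is reached."""
    if start <= 0 or end < start:
        raise ValueError("need 0 < start <= end")
    lengths = [start]
    while lengths[-1] < end:
        lengths.append(min(lengths[-1] * 2, end))
    return lengths


def relative_attention_cost(n: int, reference: int) -> float:
    """Size of the n x n attention score matrix relative to a reference length.

    Only the quadratic attention term is modeled; layers that scale linearly
    with sequence length are ignored.
    """
    return (n / reference) ** 2


print(length_schedule())                     # [512, 1024, 2048, 4096, 8192, 16384, 32768]
print(relative_attention_cost(32_768, 512))  # 4096.0
```

Measured per token, the gap is smaller: spreading the quadratic cost over n tokens gives a 64x difference rather than 4,096x. Feed-forward layers scale linearly and aren’t captured by this estimate.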

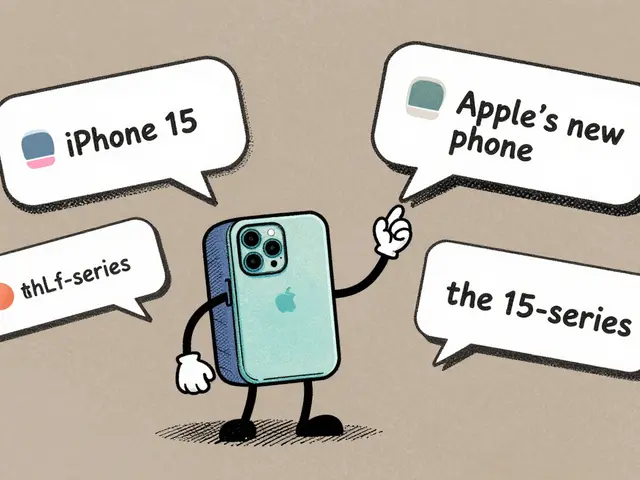

What’s the difference between static and dynamic curriculum learning?

Static curriculum sets the data order before training starts and keeps it fixed. Dynamic curriculum adapts during training, using algorithms such as bandit methods to choose the next batch based on the model’s current performance. Dynamic approaches are more responsive and can outperform static ones, especially on instruction-following tasks.
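A bandit-driven curriculum can be sketched in a few lines. The Python below uses epsilon-greedy, one of the simplest bandit algorithms, with a learning-progress reward: the drop in loss after training on one batch from a data category. This is an illustrative stand-in, not necessarily the algorithm CAMPUS uses; the class name and category labels are hypothetical.

```python
import random
from typing import Optional


class EpsilonGreedyCurriculum:
    """Pick the next data category, favoring those whose batches recently reduced loss most."""

    def __init__(self, categories: list[str], epsilon: float = 0.1, seed: Optional[int] = None):
        self.categories = list(categories)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {c: 0 for c in self.categories}
        self.values = {c: 0.0 for c in self.categories}  # running mean reward per category

    def choose(self) -> str:
        # Explore a random category with probability epsilon; otherwise exploit the best one.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.categories)
        return max(self.categories, key=lambda c: self.values[c])

    def update(self, category: str, loss_before: float, loss_after: float) -> None:
        # Reward is the loss reduction from one training batch of this category.
        reward = loss_before - loss_after
        self.counts[category] += 1
        n = self.counts[category]
        self.values[category] += (reward - self.values[category]) / n  # incremental mean


bandit = EpsilonGreedyCurriculum(["math", "code", "chat"], epsilon=0.0, seed=0)
bandit.update("math", 2.0, 1.9)
bandit.update("code", 2.0, 1.5)
bandit.update("chat", 2.0, 1.95)
print(bandit.choose())  # code
```

With this reward, a category the model has mastered stops producing gains and gets picked less often. Note that a threshold rule like the one described for CAMPUS would instead keep repeating a category until its loss is low enough, so the two behave differently on categories where progress stalls.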

Is curriculum learning used in real-world LLMs today?

Yes. Leading research labs and companies, including Google, Meta, and Stanford, now use curriculum techniques in their training pipelines. Projects like WavLLM and AutoWebGLM explicitly integrate curriculum learning. It’s moving from research into standard practice for next-generation models.

Nicholas Carpenter

19 March, 2026 - 11:39 PM

This is one of those rare posts that actually makes me excited about AI progress again. I've been training models on random data for years and always felt like we were just throwing spaghetti at the wall. The attention-based curriculum results are wild-67% accuracy in two epochs? That's not optimization, that's a paradigm shift. I'm already reworking our next training pipeline.

Chuck Doland

21 March, 2026 - 05:03 AM

The theoretical underpinnings of curriculum learning are both elegant and empirically robust. By aligning the temporal structure of training with the cognitive architecture of neural networks, we effectively emulate the developmental trajectory of human learning. The metrics employed-attention scores, loss trajectories, compression ratios-are not arbitrary heuristics but rather emergent properties of information-theoretic efficiency. This represents a significant departure from brute-force statistical learning paradigms.

Madeline VanHorn

22 March, 2026 - 11:36 AM

Ugh. I saw this post and thought 'oh great, another 'smart teaching' blog post'. Like we need another way to make training feel like school. Just use more data and bigger models. That's what actually works. This is just overcomplicating things with buzzwords.

Glenn Celaya

23 March, 2026 - 03:41 AM

attention scores as a metric lol really this is the best you got i mean sure it works on toy datasets but real world data is messy and noisy and you cant just sort it like its a math textbook the model doesnt care about your pretty curriculum its just weights and gradients bro

Wilda Mcgee

24 March, 2026 - 10:32 AM

I love this so much. It’s like finally someone is treating AI like a growing mind instead of a data vacuum. I’ve been using dynamic curriculum in my fine-tuning and the difference is night and day. One model went from struggling with basic math to nailing multi-step reasoning after just 3 epochs. And the diversity pacing? Game changer. Started with just English technical docs, then added code, then Spanish, then poetry. The model started making connections I didn’t even teach it. It’s not just efficient-it’s magical.

Chris Atkins

24 March, 2026 - 12:28 PM

This is solid stuff. I've been doing static curriculum for my multilingual model and saw a 12% jump in low-resource language performance. Started with English and Spanish, then added French, then Arabic. The model actually started transferring patterns between languages instead of just memorizing. No more padding every input to 32k either. Saved me a ton of GPU time. Thanks for the refresher

Jen Becker

24 March, 2026 - 8:04 PM

I hate this. Why does everyone act like this is new? We've been doing this since 2017. And now everyone's acting like they invented it. It's not 'revolutionary'-it's basic pedagogy. Also why are we still using attention scores? They're noisy and biased. I'm over this.

Ryan Toporowski

26 March, 2026 - 02:56 AM

This is the kind of post that makes me wanna get back into training models 😍 I tried the joint curriculum thing last month and wow. My 1.3B model hit 82% on math benchmarks with half the compute. And yes, the emoji is totally deserved. 🚀🧠