The Shift from Static to Contextual Meaning

In the early days, we used static embeddings. Tools like Word2Vec, introduced by Google in 2013, assigned a single fixed vector to each word. This was a massive leap forward, but it had a glaring flaw: it couldn't handle words with multiple meanings. Consider the word "bank." In a static model, the "bank" of a river and a "bank" where you keep money have the exact same numerical representation. The model is essentially blind to the context.

Everything changed with the arrival of transformer-based architectures. Models like BERT (Bidirectional Encoder Representations from Transformers) introduced contextual embeddings. Instead of a fixed dictionary, BERT looks at the entire sentence before assigning a vector. If the sentence is "I sat by the river bank," the embedding for "bank" shifts to reflect the geographic context. If the sentence is "I deposited money at the bank," the vector moves toward the financial sector of the vector space. This ability to adapt based on surrounding tokens is why today's LLMs feel so much more human.

| Feature | Static (e.g., Word2Vec, GloVe) | Contextual (e.g., BERT, GPT) |
|---|---|---|
| Representation | One vector per word | Vector changes based on context |
| Polysemy Handling | Poor (cannot distinguish meanings) | Excellent (dynamic representation) |
| Typical Dimensions | 50 to 300 | 384 to 4,096 |
| Computational Cost | Low/Fast | High/Resource Intensive |
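To make the static versus contextual contrast concrete, here is a minimal sketch in plain Python. It is not how BERT works internally: the 2-D vectors are invented for illustration, and `contextual_embed` is a single untrained attention-style mixing step with no learned projections. It only shows the idea that a static lookup returns the same vector for "bank" everywhere, while a context-aware step pulls it toward its neighbours.

```python
import math

# Hypothetical 2-D static vectors: axis 0 ~ "nature", axis 1 ~ "finance".
STATIC = {
    "river": [1.0, 0.0],
    "money": [0.0, 1.0],
    "bank": [0.5, 0.5],
    "the": [0.1, 0.1],
}


def static_embed(tokens):
    """Static lookup: every occurrence of a word gets the same vector."""
    return [STATIC[t] for t in tokens]


def contextual_embed(tokens):
    """One attention-style mixing step (toy, untrained).

    Each output vector is a softmax-weighted average of all token vectors
    in the sentence, weighted by dot-product similarity to the token itself.
    """
    vecs = static_embed(tokens)
    outputs = []
    for query in vecs:
        scores = [sum(q * k for q, k in zip(query, key)) for key in vecs]
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]  # stable softmax
        total = sum(weights)
        mixed = [
            sum(w * v[i] for w, v in zip(weights, vecs)) / total
            for i in range(len(query))
        ]
        outputs.append(mixed)
    return outputs


river_sentence = ["the", "river", "bank"]
money_sentence = ["the", "money", "bank"]

# "bank" is the last token (index 2) in both sentences.
static_river = static_embed(river_sentence)[2]
static_money = static_embed(money_sentence)[2]
ctx_river = contextual_embed(river_sentence)[2]
ctx_money = contextual_embed(money_sentence)[2]

print("static:    ", static_river, static_money)
print("contextual:", ctx_river, ctx_money)
```

With these toy numbers the two static vectors are identical, while the contextual vector for "bank" leans toward the "nature" axis in the river sentence and toward the "finance" axis in the money sentence.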

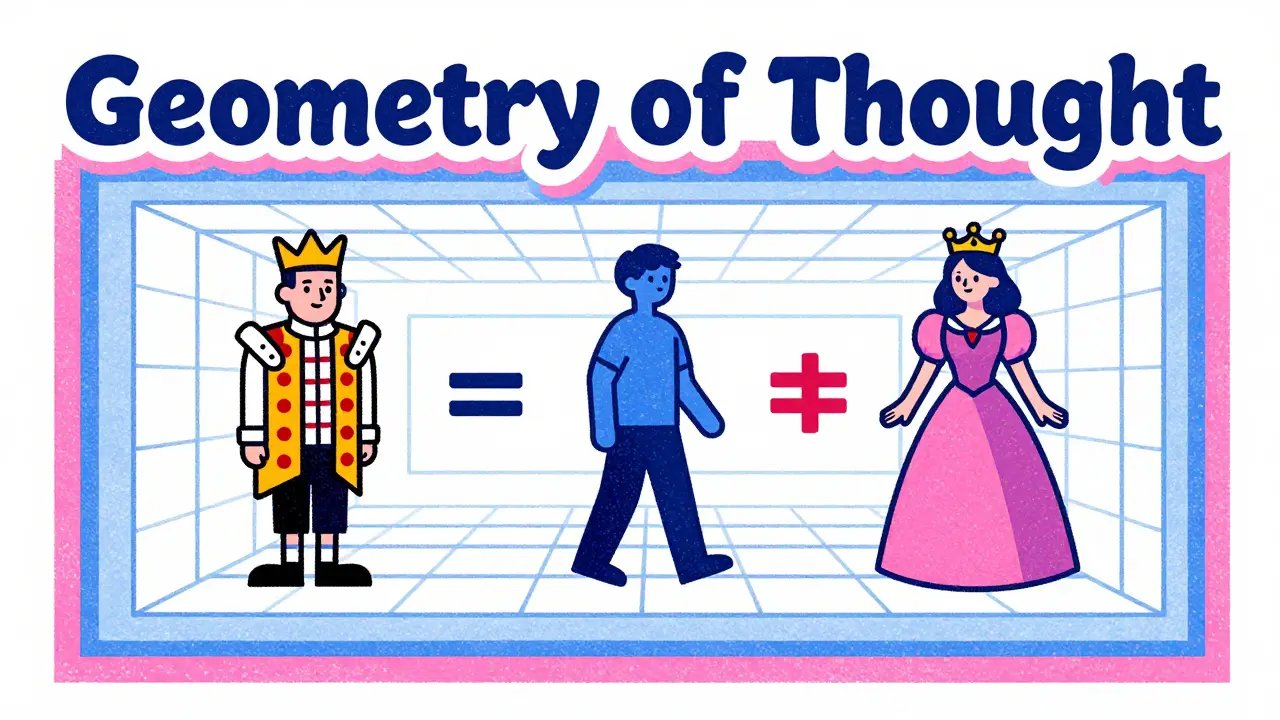

The Geometry of Thought: How Vectors Work

At its core, an embedding is just a list of numbers, like [0.2, -0.15, 0.88, ...]. While these numbers look like gibberish to us, each position (or dimension) contributes to one or more latent features. We rarely know exactly what "Dimension 42" means, and concepts like "femininity" or "royalty" are usually spread across many dimensions rather than stored in a single one. This structure gives rise to a fascinating property called vector arithmetic. One of the most famous examples in NLP is:

Vector("King") - Vector("Man") + Vector("Woman") ≈ Vector("Queen")

By subtracting the concept of "man" from "king" and adding "woman," the model lands close to the vector for "queen." This suggests that the model hasn't just memorized words, but has captured the underlying relationship between them. Word order is handled separately: transformer models add positional encodings to keep track of where words appear in a sentence, ensuring that "The dog bit the man" and "The man bit the dog" result in different vectors despite having the same words.
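The king/queen arithmetic can be sketched with a handful of hand-made vectors. Everything here is an assumption for illustration: the three axes (royalty, maleness, femaleness) and the values are invented, whereas real embeddings have hundreds of learned dimensions and the analogy only holds approximately. Excluding the input words from the candidates is a common convention when running this kind of query.

```python
import math

# Hypothetical 3-D vectors: [royalty, maleness, femaleness].
VECTORS = {
    "king": [0.9, 0.9, 0.1],
    "queen": [0.9, 0.1, 0.9],
    "man": [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c).

    The three input words are excluded from the candidates.
    """
    target = [x - y + z for x, y, z in zip(VECTORS[a], VECTORS[b], VECTORS[c])]
    candidates = [w for w in VECTORS if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, VECTORS[w]))


result = analogy("king", "man", "woman")
print(result)  # "queen" with these toy vectors
```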

Scaling the Space: Dimensions and Trade-offs

How many dimensions do you actually need? It's a balancing act between expressiveness and speed. If you have too few dimensions, the model collapses distinct concepts into the same space (collisions). If you have too many, storage and search costs grow with every added dimension, so the system gets slower and needs more GPU memory. For basic syntactic tasks, 300 dimensions are often enough. However, for deep semantic understanding, the kind needed for complex reasoning, modern models push much higher. The LLM embeddings used in high-end enterprise systems often range from 768 to 1,536 dimensions. For instance, the Sentence-BERT model 'all-MiniLM-L6-v2' uses 384 dimensions to keep things snappy for real-time similarity searches, while larger models prioritize accuracy over latency.

Putting Embeddings to Work: RAG and Vector Databases

Embeddings aren't just internal brain-matter for LLMs; they are the foundation for Retrieval-Augmented Generation (RAG). Instead of relying on the model's frozen training data, RAG allows an AI to look up facts in a private database in real time. Here is how that process works in the real world:

- Indexing: You turn your company's documents into embeddings and store them in a Vector Database.

- Querying: When a user asks a question, the system turns that question into a vector using the same embedding model.

- Similarity Search: The system looks for the vectors in the database that are closest to the query vector, usually using Cosine Similarity.

- Augmentation: The most relevant chunks of text are fed to the LLM as a prompt, which then writes a grounded, factual answer.
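The four steps above can be sketched end to end in plain Python. This is a toy under stated assumptions, not a production pipeline: `embed` is a bag-of-words counter standing in for a real embedding model, the in-memory `index` list stands in for a vector database, and the documents and prompt wording are made up. The final call to an LLM is left out.

```python
import math
import re
from collections import Counter


def embed(text):
    """Stand-in embedding: a bag-of-words count vector (NOT a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# 1. Indexing: embed every document once and store text plus vector.
documents = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Shipping to Europe takes five business days.",
]
index = [(doc, embed(doc)) for doc in documents]


def retrieve(question, k=1):
    # 2. Querying: embed the question with the same embedding function.
    query_vec = embed(question)
    # 3. Similarity search: rank stored vectors by cosine similarity.
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(question, k=1):
    # 4. Augmentation: put the retrieved text in front of the question.
    context = "\n".join(retrieve(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How long do refunds take?")
print(prompt)
```

Swapping `embed` for a real model and `index` for a vector database changes the quality and scale, but the shape of the loop stays the same.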

Where Embeddings Fall Short

Despite the magic, embeddings aren't perfect. One of the biggest hurdles is the "negation problem." In a vector space, "good" and "not good" often end up very close to each other because they appear in similar contexts. The model knows they are both talking about quality, but it often struggles to mathematically represent the flip in meaning.

There's also the issue of domain specificity. An embedding model trained on Wikipedia might struggle with a legal brief or a medical report. Studies have shown a performance drop of 15-20% when general-purpose models are used on highly specialized text. This is why experts recommend "fine-tuning" or using domain-specific embedding models for healthcare or finance applications.

Finally, we have to address the bias problem. Because embeddings learn from human-generated text, they mirror human prejudices. If a training set contains gender stereotypes, the vector for "doctor" might be mathematically closer to "man" than "woman." Researchers use the Word Embedding Association Test (WEAT) to measure this, and it's a constant battle to debias these spaces.

The Future: Beyond Simple Vectors

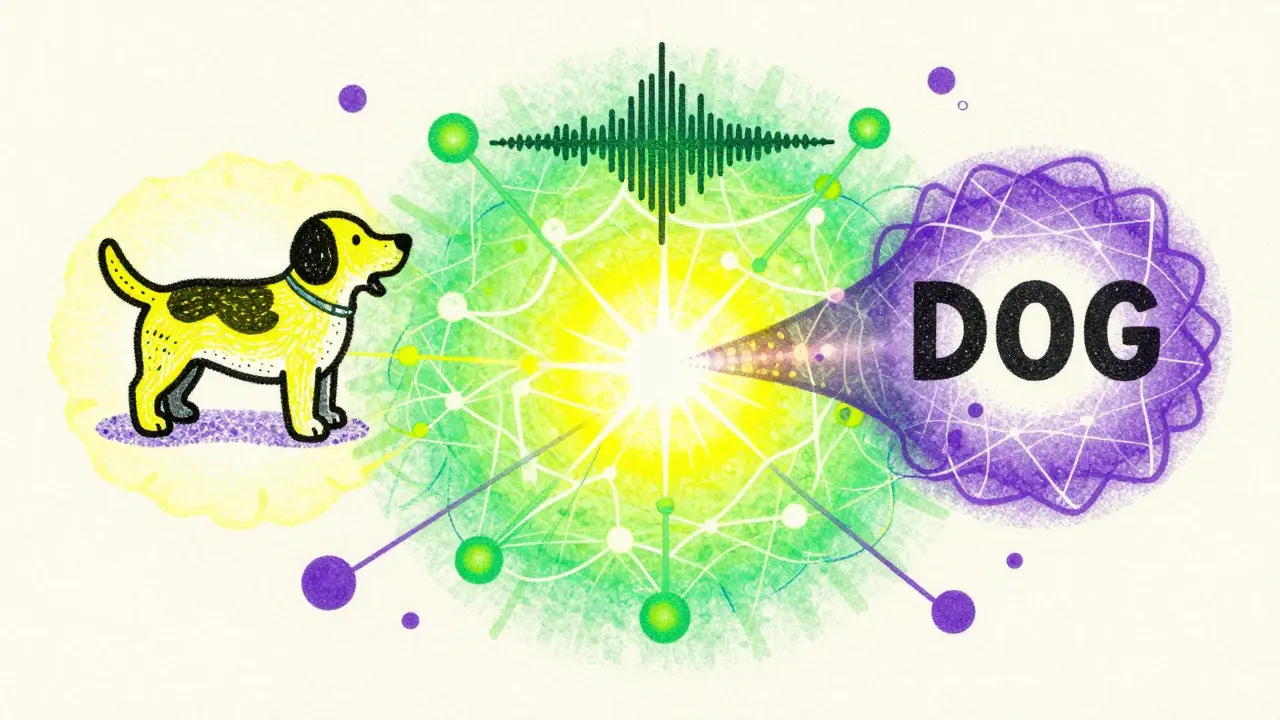

We are moving toward a world of multimodal embeddings. Meta's ImageBind is a great example: it creates a shared vector space for text, images, and audio. This means a picture of a dog, the sound of a bark, and the word "dog" all map to the same conceptual area in the AI's mind. We're also seeing the rise of sparse embeddings. Instead of a dense list of 1,000 numbers, sparse embeddings keep only a few highly significant non-zero values, cutting the number of values that must be stored by up to 75% without losing much semantic accuracy. Looking further ahead, researchers are working on dynamic embeddings that change over time, allowing AI to track how the meaning of a word like "cloud" evolved from a weather term to a computing term.

What is the difference between a token and an embedding?

A token is just a piece of a word (like "embed" and "dings") assigned a unique ID number. An embedding is the actual numerical vector that represents the *meaning* of that token. Think of the token as a library call number and the embedding as the actual content of the book.
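A small sketch of that two-step lookup, assuming a made-up four-entry vocabulary and a randomly initialised embedding table. In a real model the table has tens of thousands of rows and its values are learned during training.

```python
import random

# Hypothetical sub-word vocabulary: token string -> token ID.
VOCAB = {"embed": 0, "dings": 1, "are": 2, "vectors": 3}
DIM = 4  # toy embedding size

random.seed(0)
# Embedding table: one row of DIM numbers per token ID (random here, learned in practice).
EMBEDDING_TABLE = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in VOCAB]


def to_token_ids(pieces):
    """Step 1: map each token string to its integer ID (the 'call number')."""
    return [VOCAB[p] for p in pieces]


def lookup(token_ids):
    """Step 2: use each ID as a row index into the table (the 'book')."""
    return [EMBEDDING_TABLE[i] for i in token_ids]


token_ids = to_token_ids(["embed", "dings", "are", "vectors"])
vectors = lookup(token_ids)

print(token_ids)
print(vectors[0])
```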

Why use cosine similarity instead of Euclidean distance?

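Euclidean distance measures the straight-line distance between two points, so it is sensitive to vector length as well as direction. In embedding spaces, the *angle* between vectors is usually a better indicator of similarity, because magnitude can reflect things like text length rather than meaning. Cosine similarity focuses on this angle, which is reported to give about 12% higher accuracy for semantic tasks, though the gain varies by model and dataset. When vectors are normalized to unit length, the two measures produce the same ranking.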
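The difference is easy to see with three invented vectors: two that point in the same direction but differ in length, and one that is close in length but points elsewhere. The names and values below are illustrative only.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between a and b (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between a and b."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


short_doc = [1.0, 2.0, 3.0]
long_doc = [10.0, 20.0, 30.0]  # same direction as short_doc, 10x the magnitude
other_doc = [3.0, 2.0, 1.0]    # similar magnitude, different direction

for name, vec in (("long_doc", long_doc), ("other_doc", other_doc)):
    print(
        name,
        "cosine:", round(cosine_similarity(short_doc, vec), 3),
        "euclidean:", round(euclidean_distance(short_doc, vec), 3),
    )
```

Euclidean distance ranks `other_doc` as the nearer neighbour of `short_doc`, while cosine similarity ranks `long_doc` first because it ignores the difference in length.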

Can embeddings be used for images or audio?

Yes. Most kinds of data can be turned into an embedding. Contrastive learning models like CLIP map images and text into the same vector space, allowing you to search for an image using a text description.

What happens to words the model hasn't seen before (Out-of-Vocabulary)?

Older models would simply fail or use a generic "unknown" vector, leading to significant accuracy drops. Modern LLMs use sub-word tokenization (like Byte Pair Encoding), so a new word is split into smaller known pieces and represented through the embeddings of those pieces.
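Here is a rough sketch of the idea. Note the simplification: it uses a greedy longest-match split over a tiny hand-made vocabulary, which is not the actual Byte Pair Encoding merge procedure, and the 2-D vectors are invented. Real sub-word vocabularies also include single characters or bytes, so a split always succeeds.

```python
# Hypothetical sub-word vocabulary with toy 2-D embeddings.
SUBWORD_VECTORS = {
    "un": [0.1, 0.9],
    "break": [0.8, 0.2],
    "able": [0.4, 0.4],
}


def segment(word):
    """Greedy longest-match split into known pieces (a simplification, not real BPE)."""
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest remaining substring first, then shrink.
        for end in range(len(word), start, -1):
            if word[start:end] in SUBWORD_VECTORS:
                pieces.append(word[start:end])
                start = end
                break
        else:
            raise ValueError(f"no sub-word covers {word[start:]!r}")
    return pieces


def embed_word(word):
    """Represent an unseen word as the list of its pieces' vectors."""
    return [SUBWORD_VECTORS[p] for p in segment(word)]


pieces = segment("unbreakable")
print(pieces)  # ['un', 'break', 'able']
print(embed_word("unbreakable"))
```

The word "unbreakable" is not in the vocabulary, yet it still gets a representation because each of its pieces is.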

How do I choose the right embedding dimension for my project?

It depends on your constraints. For fast, real-time search on mobile devices, go for lower dimensions (384). For high-precision legal or medical analysis where accuracy is paramount, use 768 or 1,536 dimensions. Always benchmark your specific dataset to find the point of diminishing returns.
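One quick sanity check before benchmarking is the raw storage cost. The sketch below assumes dense 32-bit floats (4 bytes per value) and a hypothetical corpus of one million vectors, and it ignores index overhead and compression, so treat the numbers as a lower bound.

```python
def index_size_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw storage for a dense index: vectors x dimensions x bytes per value."""
    return num_vectors * dim * bytes_per_value


NUM_VECTORS = 1_000_000  # hypothetical corpus size

for dim in (384, 768, 1536):
    gib = index_size_bytes(NUM_VECTORS, dim) / 2**30
    print(f"{dim:>5} dims: {gib:.2f} GiB")
```

Storage scales linearly with dimension, so moving from 384 to 1,536 dimensions quadruples the footprint for the same corpus.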