The New Reality of Building Software

When you open your editor today, the process looks different than it did five years ago. You aren't just typing syntax anymore. You are articulating intent. Vibe coding is an approach to software development in which developers focus on high-level vision, aesthetics, and user flows and rely on Large Language Models (LLMs) to generate the code. This shift changes how we architect systems. If you treat an AI assistant like a magic box without structure, you get garbage output. Treat it like a senior partner who needs clear briefs, and you get far more dependable results.

The core problem isn't generating code; it's maintaining consistency across thousands of generated lines. Without guardrails, the model drifts. That is why specific design patterns have emerged to ground the AI in reality. These aren't the same GoF patterns from 1995. They are workflow architectures designed for the interaction between human intent and machine execution.

Vertical Slice Architecture

Many legacy projects suffer because they try to define the entire database before writing a single line of frontend logic. That layer-first habit works poorly in AI-assisted workflows. Vertical Slice Architecture implements each feature end-to-end as a full-stack "slice" that runs from the database to the user interface. Instead of building all the models first, you start with the simplest complete version of one feature.

Imagine you are building a login system. A traditional layered approach might ask you to create the User table, then the Auth service, then the Controller, then the View. An AI working without a vertical slice plan often gets lost in the middle layers. With a vertical slice, you tell the LLM to build the login flow from scratch. You ask for the database column, the API endpoint, and the input field in one go. Then you move to the next slice, like profile management.

This tends to reduce hallucination. When the AI knows the scope is limited to authentication, it has less room to invent unnecessary tables or complex dependencies that won't be used for months. The context window stays clean and focused on immediate business value. The benefit compounds over time because each slice stands as a functional unit that can be tested independently before adding the next layer of complexity.
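As a rough illustration of what a single slice might contain, the sketch below keeps the login feature's database table, endpoint logic, and input form together in one Python module. Everything in it is an assumption made for the example: the `users` table layout, the function names (`init_db`, `register`, `login_endpoint`, `render_login_form`), and the choice of SQLite and PBKDF2 are hypothetical, not something the approach prescribes.

```python
import hashlib
import hmac
import os
import sqlite3


# --- Database layer: only the columns this slice needs (hypothetical schema) ---
def init_db(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS users ("
        "email TEXT PRIMARY KEY, salt BLOB NOT NULL, password_hash BLOB NOT NULL)"
    )


def _hash_password(password: str, salt: bytes) -> bytes:
    # PBKDF2 from the standard library; the iteration count is an example value.
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def register(conn: sqlite3.Connection, email: str, password: str) -> None:
    salt = os.urandom(16)
    conn.execute(
        "INSERT INTO users (email, salt, password_hash) VALUES (?, ?, ?)",
        (email, salt, _hash_password(password, salt)),
    )


# --- API layer: a plain function standing in for an HTTP endpoint ---
def login_endpoint(conn: sqlite3.Connection, email: str, password: str) -> dict:
    row = conn.execute(
        "SELECT salt, password_hash FROM users WHERE email = ?", (email,)
    ).fetchone()
    if row is None:
        return {"status": 401, "error": "invalid credentials"}
    salt, stored_hash = row
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(stored_hash, _hash_password(password, salt)):
        return {"status": 401, "error": "invalid credentials"}
    return {"status": 200, "email": email}


# --- UI layer: the input fields for this feature and nothing else ---
def render_login_form() -> str:
    return (
        '<form method="post" action="/login">'
        '<input type="email" name="email" required>'
        '<input type="password" name="password" required>'
        '<button type="submit">Log in</button>'
        "</form>"
    )
```

Because the slice is self-contained, it can be exercised against an in-memory database (`sqlite3.connect(":memory:")`) before the next slice, such as profile management, is started.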

Context Engineering Strategies

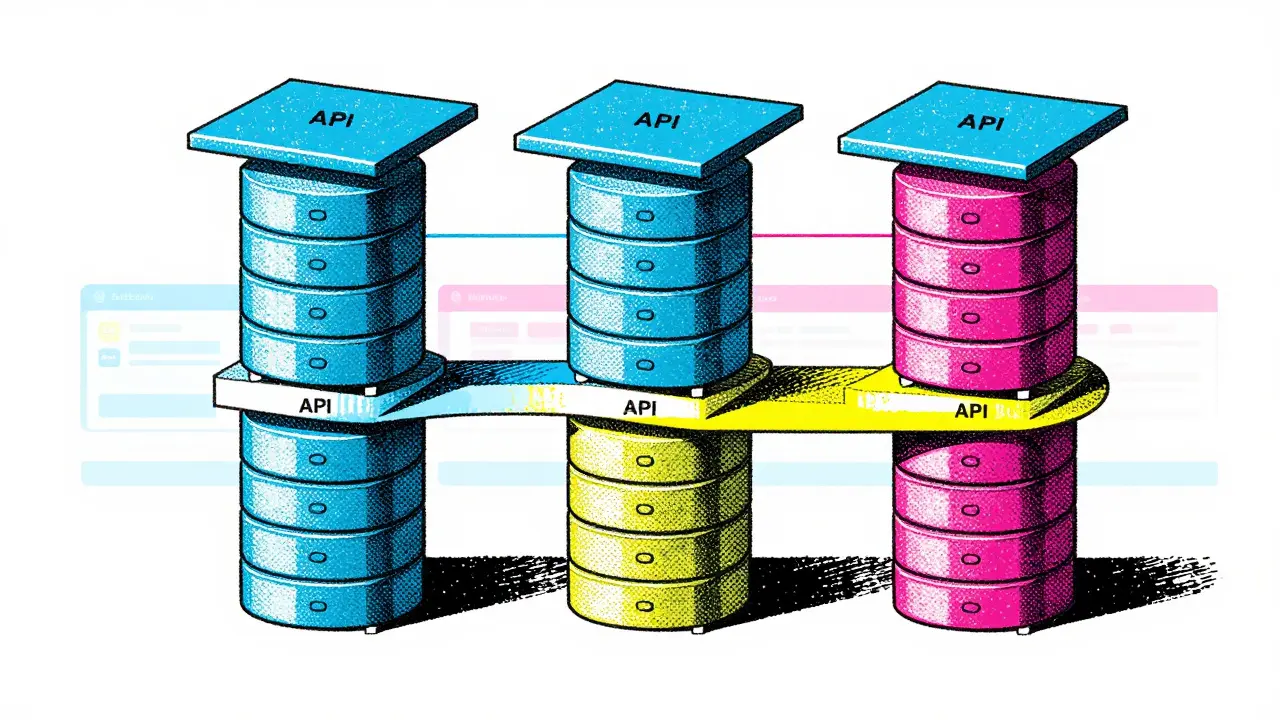

You cannot rely on the AI remembering project specifics forever. Context windows reset, and sessions end. That is why explicit documentation of rules has become a critical asset. This practice is called Context Engineering. Cursor, an AI-assisted code editor, lets you manage project context through a .cursor/rules directory. Inside your repository, you create hidden configuration files there that act as a constitution for the codebase.

You document things like naming conventions, error handling standards, and preferred library versions. For example, you might specify that all React components use functional hooks rather than classes, or that API responses always include a standardized error structure. When the AI agent starts a task, it reads these rules first. It stops guessing based on generic training data and starts adhering to your team's unique style.
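To make "reads these rules first" concrete, here is a minimal sketch of the idea: collect every rule file from a directory and place the text ahead of the task in the prompt. This is not how Cursor or any other tool is implemented internally; the helper names (`load_rules`, `build_prompt`, `demo`) and the rule file names are hypothetical and exist only for illustration.

```python
import tempfile
from pathlib import Path


def load_rules(rules_dir: Path) -> str:
    """Concatenate every rule file in rules_dir, sorted by name for a stable order."""
    if not rules_dir.is_dir():
        return ""
    parts = []
    for path in sorted(rules_dir.iterdir()):
        if path.is_file():
            text = path.read_text(encoding="utf-8").strip()
            parts.append(f"## {path.name}\n{text}")
    return "\n\n".join(parts)


def build_prompt(rules_dir: Path, task: str) -> str:
    """Put the project rules ahead of the task so they are read first."""
    rules = load_rules(rules_dir)
    header = f"Project rules:\n{rules}\n\n" if rules else ""
    return f"{header}Task:\n{task}"


def demo() -> str:
    """Create a throwaway rules directory and build a prompt from it."""
    with tempfile.TemporaryDirectory() as tmp:
        rules_dir = Path(tmp) / ".cursor" / "rules"
        rules_dir.mkdir(parents=True)
        # Hypothetical rule files mirroring the examples in the text above.
        (rules_dir / "api.md").write_text(
            "API errors use a standard {code, message} structure.\n", encoding="utf-8"
        )
        (rules_dir / "react.md").write_text(
            "Use functional components with hooks.\n", encoding="utf-8"
        )
        return build_prompt(rules_dir, "Add a profile page")
```

Calling `demo()` returns a prompt in which both rule files appear before the task. Sorting by file name is a design choice that keeps the prompt identical from run to run.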

Without this, every file feels like it was written by a different developer. With context engineering, the voice remains consistent even if you switch computers or team members. You are essentially teaching the LLM the internal dialect of your organization. It turns a generalist chatbot into a specialist engineer who knows your company's way of doing things.

Component Libraries and Template Patterns

Hallucinations often happen when styling or layout decisions are made from scratch. The safest bet is to give the AI a set of pre-approved building blocks. A component library is a collection of reusable UI components that gives the LLM a known foundation to generate from, which keeps the output consistent. Before asking the AI to build a dashboard, you give it access to a design system or a set of boilerplate templates.

Think of this like giving a carpenter a toolbox before handing them a blank wall. If you know the buttons and forms already exist in your codebase, the AI focuses purely on connecting the data to those elements. Full-stack frameworks like Wasp or Laravel help here because they come with batteries included. The infrastructure is already there, so the AI doesn't waste tokens reinventing the wheel for authentication or routing.

This pattern drastically speeds up the initial phases of development. Instead of negotiating CSS variables, the AI applies existing styles. It ensures that as your application grows, it doesn't look patchworked together. Every button looks the same. Every modal opens the same way. Consistency is the hallmark of quality software, and template patterns enforce that automatically.
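One way to enforce the "pre-approved building blocks" idea is a small check that flags any component in generated markup that is not in the library. The sketch below is deliberately naive: the component names in `APPROVED_COMPONENTS` are hypothetical, and the regular expression only looks for JSX-style tags that start with an uppercase letter, so it can misfire on things like TypeScript generics.

```python
import re

# Hypothetical names from an existing design system.
APPROVED_COMPONENTS = {"Button", "Card", "Modal", "TextInput"}

# JSX convention: custom components start with an uppercase letter,
# while plain HTML elements such as <div> are lowercase.
_COMPONENT_TAG = re.compile(r"<([A-Z][A-Za-z0-9]*)")


def unapproved_components(jsx_source: str) -> list[str]:
    """Return the component names used in jsx_source that are not pre-approved."""
    used = set(_COMPONENT_TAG.findall(jsx_source))
    return sorted(used - APPROVED_COMPONENTS)


generated = '<Card><TextInput name="q" /><FancyDropdown /><Button>Go</Button></Card>'
print(unapproved_components(generated))  # ['FancyDropdown']
```

A non-empty result is a signal to send the output back with a reminder to use the existing components rather than invent new ones.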

Refactoring-Driven Development

It is tempting to want perfect code on the first try. That rarely happens. A smarter strategy for working with AI is to separate correctness from optimization. Refactoring-Driven Development is a pragmatic approach in which you make the code correct first, write good tests, and then let the LLM refactor for performance or readability. You accept messy code initially as long as the tests pass and the feature works.

Once the logic is solid, you hand the file back to the LLM with a specific instruction: optimize for performance or readability. The AI excels at recognizing patterns within its own output and suggesting cleaner structures. It can see the redundancy in loops or suggest modern JavaScript syntax that saves characters. By deferring optimization, you avoid getting stuck in analysis paralysis during the implementation phase.
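The sketch below shows the shape of this workflow under simple assumptions: a verbose first version, a set of tests that pins its behavior, and a tidier version that must pass the same tests. The function names and the order format (prices in integer cents) are hypothetical.

```python
def order_total_v1(items):
    # First pass: verbose, but correct.
    total = 0
    for i in range(len(items)):
        item = items[i]
        if item["qty"] > 0:
            price = item["price"]
            qty = item["qty"]
            total = total + price * qty
    return total


def order_total_v2(items):
    # Refactored pass: same behavior, expressed as a single generator expression.
    return sum(item["price"] * item["qty"] for item in items if item["qty"] > 0)


def check(order_total):
    """Behavioral tests shared by both versions; prices are integer cents."""
    assert order_total([]) == 0
    assert order_total([{"price": 500, "qty": 2}]) == 1000
    assert order_total([{"price": 500, "qty": 2}, {"price": 300, "qty": 0}]) == 1000
    # Non-positive quantities are ignored rather than subtracted.
    assert order_total([{"price": 400, "qty": -1}, {"price": 100, "qty": 3}]) == 300


check(order_total_v1)  # lock in behavior first
check(order_total_v2)  # the refactor must pass the identical tests
```

The tests are what make the refactoring request safe: if the cleaned-up version changes behavior, `check` fails immediately.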

This aligns well with iterative development. You validate the idea quickly, then polish the engine later. It prevents the AI from spending cycles trying to be clever instead of being useful. Functional correctness always beats premature optimization.

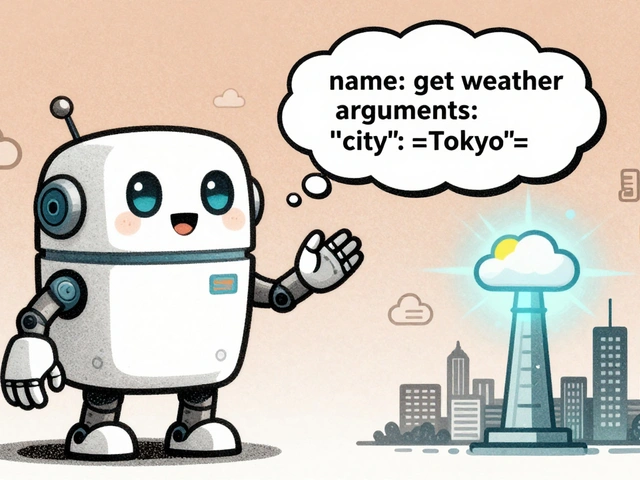

Agent-Based Workflow Management

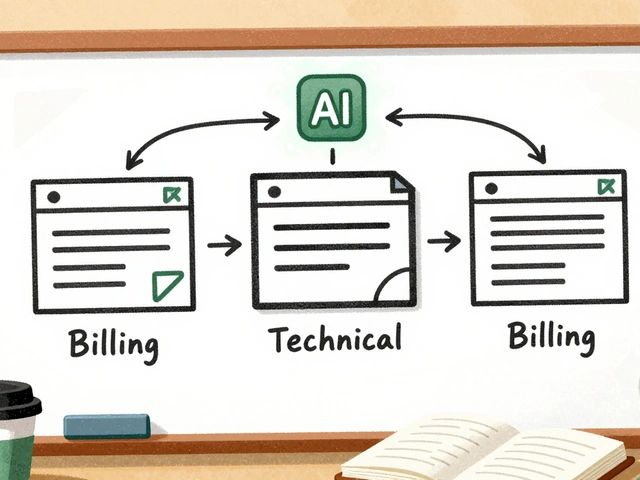

Treating the LLM like a terminal command line is inefficient. Treating it like a junior developer is much better. An agent-based workflow is a cycle: you present a problem to the agent, review the result, give feedback, and iterate toward a solution. This mirrors managing a scrum team or guiding a research student.

Instead of pasting a massive prompt, you break the request down. You say: "Here is the bug report. What do you think is wrong?" You read the diagnosis. If it looks good, you say: "Go ahead and apply the fix." If not, you critique the reasoning. This dialogue creates a feedback loop that improves the result with every turn.

Single-pass prompting rarely solves complex architectural problems. Multi-turn conversation allows the AI to reason through steps before touching the code. It gives you oversight on the decision-making process. You are the architect; the AI is the builder. You check the blueprints, and it pours the concrete.
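The present, review, feedback, iterate cycle described above can be sketched as a small loop. In this example the agent and the reviewer are plain callables so the loop runs without any model. `run_review_loop`, `fake_agent`, and `fake_review` are hypothetical names, and in practice the agent would be a call to whichever LLM tool you use, with the review done by a human.

```python
from typing import Callable, Optional


def run_review_loop(
    agent: Callable[[str], str],
    review: Callable[[str], Optional[str]],
    problem: str,
    max_turns: int = 3,
) -> Optional[str]:
    """Ask the agent for a proposal, review it, and feed criticism back.

    review returns None to approve, or a feedback string to request another turn.
    Returns the approved proposal, or None if max_turns is exhausted.
    """
    prompt = problem
    for _ in range(max_turns):
        proposal = agent(prompt)
        feedback = review(proposal)
        if feedback is None:
            return proposal
        # Carry the critique into the next turn instead of starting over.
        prompt = (
            f"{problem}\n\nPrevious proposal:\n{proposal}"
            f"\n\nReviewer feedback:\n{feedback}"
        )
    return None


# Stand-ins for a real model and a real human reviewer.
def fake_agent(prompt: str) -> str:
    if "Reviewer feedback" in prompt:
        return "Add a null check before reading user.name"
    return "Restart the server"


def fake_review(proposal: str) -> Optional[str]:
    if "null check" in proposal:
        return None
    return "That hides the bug; find the root cause."
```

With these stand-ins, `run_review_loop(fake_agent, fake_review, "Bug: crash on empty profile")` rejects the first proposal and approves the second. The `max_turns` limit is a design choice that stops an unproductive dialogue from looping indefinitely.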

Comparing Traditional vs. Vibe Coding Patterns

| Aspect | Traditional Waterfall | LLM-Assisted Vibe Coding |
|---|---|---|
| Feature Scope | Layer-by-Layer (All DBs, then Logic) | Vertical Slices (End-to-End Feature) |
| Guidance Method | Static Documentation | Dynamic Context Rules |
| Code Quality Control | Manual Code Review | AI Refactoring Pass |
| Error Handling | Fix after Compilation | Iterative Dialogue Correction |

Frequently Asked Questions

What is the main risk of using LLMs for architecture?

The primary risk is hallucinated dependencies where the AI invents libraries or methods that do not exist. Using Vertical Slices and strict Context Engineering rules limits this scope.
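As one hedged example of catching an invented library before it reaches your codebase, the sketch below parses generated Python source and reports any imported top-level module that cannot be found in the current environment. The helper name `missing_imports` is hypothetical. It only detects missing packages; a hallucinated method on a real library would still need tests or review to catch.

```python
import ast
import importlib.util


def missing_imports(source: str) -> list[str]:
    """Return top-level modules imported by source that are not installed."""
    tree = ast.parse(source)
    top_level = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            top_level.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports, which refer to the project itself.
            top_level.add(node.module.split(".")[0])
    return sorted(
        name for name in top_level if importlib.util.find_spec(name) is None
    )


generated = "import json\nimport totally_made_up_pkg_xyz\nfrom os import path\n"
print(missing_imports(generated))  # ['totally_made_up_pkg_xyz']
```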

Do I need to know code to use Vibe Coding?

Yes, you still need technical literacy to evaluate the output and understand the implications of the generated code. Vibe coding enhances skills; it does not replace the need for engineering knowledge.

How often should I update my context rules?

Update the .cursor/rules or equivalent documentation whenever you adopt a new library or change a major convention. Consistency relies on these rules being current.

Is Vertical Slice Architecture better than MVC?

They serve different purposes. MVC is a structural pattern for code organization. Vertical Slice is a delivery methodology. You can use both simultaneously, organizing each slice using MVC principles.

Which tools support Context Engineering best?

Tools like Cursor and Claude Code have built-in support for reading local rule files. Standard VS Code extensions can also load project-specific instruction sets effectively.

Ashley Kuehnel

29 March, 2026 - 08:14 PM

It's really cool that context engineering helps us keep things tidy without going crazy trying to memorize every rule ourselves.

Nicholas Zeitler

31 March, 2026 - 02:50 AM

You are doing an excellent job articulating these complex concepts clearly.

Keep up the amazing work with your team and their processes!!

Tyler Springall

1 April, 2026 - 12:21 PM

The reliance on stochastic parrots for architectural decisions demeans the discipline of engineering for the true connoisseur.

Amy P

2 April, 2026 - 05:03 AM

This literally changed my whole perspective on how I build apps now!

I never thought about treating the AI like a junior dev before reading this though.

Mongezi Mkhwanazi

2 April, 2026 - 08:44 PM

We must understand the gravity of this paradigm shift. Many developers ignore the foundational principles of vertical slicing entirely. They simply throw data into the void hoping for coherence. This negligence leads to systems that crumble under minimal pressure. It is imperative that we enforce strict boundaries on our agents. Without such guardrails, chaos inevitably ensues in the codebase. You see the decay in legacy projects everywhere you look today. The vertical slice approach mitigates this specific entropy effectively. Yet even that method requires human oversight to function correctly. One cannot simply abdicate all responsibility to a machine model. The machine is merely a tool designed for efficiency. It lacks an intrinsic understanding of business value. Therefore we must remain vigilant in our supervision duties. Ignorance is not bliss when dealing with live production environments. We must always prioritize stability over mere speed of generation.

Patrick Bass

4 April, 2026 - 08:03 PM

Your analysis regarding human oversight is technically sound and well articulated here.

Mark Nitka

5 April, 2026 - 12:04 PM

We need to stop pretending AI replaces senior engineering skills completely.

adam smith

7 April, 2026 - 08:29 AM

The documentation is clear.

Colby Havard

8 April, 2026 - 01:15 PM

Indeed, the philosophical implication of delegating architecture suggests a shift in the definition of craftsmanship itself.