Business Technology AI Scripts: Deploy LLMs, Cut Costs, and Stay Compliant

When you're building AI into your business tech, you're not just writing code. You're managing large language models (LLMs): AI systems that process and generate human-like text based on massive datasets. They power chatbots, automate reports, and even guide field technicians, but only if you handle them right. Most companies fail because they treat LLMs like regular software. They spin up a model, throw in some prompts, and hope for the best. Then the bills spike, the legal team panics, and the engineers are stuck debugging a black box that costs $2,000 a day to run.

That’s where smart business tech comes in. It’s not about having the fanciest model. It’s about knowing how cloud cost optimization works: strategies like autoscaling and spot instances that reduce AI infrastructure expenses without losing performance. It’s about understanding how AI compliance (the rules around data use, export controls, and state-level laws that govern how AI models are trained and deployed) affects your bottom line. And it’s about using LLM autoscaling, automated systems that adjust computing power based on real-time demand, so you’re not paying for idle GPUs during slow hours. These aren’t theoretical ideas. They’re the difference between a prototype that dies and a tool that makes your team 10x more efficient.
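To make the autoscaling idea concrete, here is a minimal sketch of the core decision: how many GPU replicas to run for the current load. The function name `desired_replicas`, its parameters, and the default bounds are all hypothetical and chosen for illustration; a real deployment would lean on its platform's own autoscaler and would likely smooth the signal over time.

```python
import math


def desired_replicas(requests_per_second: float,
                     capacity_per_replica: float,
                     min_replicas: int = 1,
                     max_replicas: int = 8) -> int:
    """Return how many replicas to run for the observed load.

    All names and defaults here are illustrative assumptions, not a
    specific vendor's API.
    """
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    # Round up so the fleet can always absorb the observed load.
    needed = math.ceil(requests_per_second / capacity_per_replica)
    # Clamp between a floor (availability) and a ceiling (cost cap).
    return max(min_replicas, min(max_replicas, needed))


# Example: 12 requests/s with 5 requests/s per replica needs 3 replicas.
print(desired_replicas(12, 5))
```

The floor keeps one replica warm for the first request after a quiet period, and the ceiling caps spend during a traffic spike.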

You’ll find posts here that show you exactly how to fix the biggest headaches in business AI: why your LLM bill jumps when users ask long questions, how California’s new law forces you to track training data, how spot instances can slash your cloud costs by 60%, and how field service teams use AI to cut repair times in half. No fluff. No buzzwords. Just real strategies used by teams running AI in production—where mistakes cost money, time, and trust.

What follows isn’t a list of tools. It’s a roadmap. A way to move from guessing what your AI will do next to knowing exactly how it behaves, how much it costs, and whether you’re breaking any rules. If you’re building, managing, or paying for AI in your business, this is where you start.

Legal Services and Generative AI: Automate Documents, Review Contracts, and Manage Knowledge

Generative AI is transforming legal services by automating document creation, speeding up contract review, and unlocking instant access to legal knowledge. Firms using these tools save hundreds of hours per lawyer annually while improving accuracy and client trust.

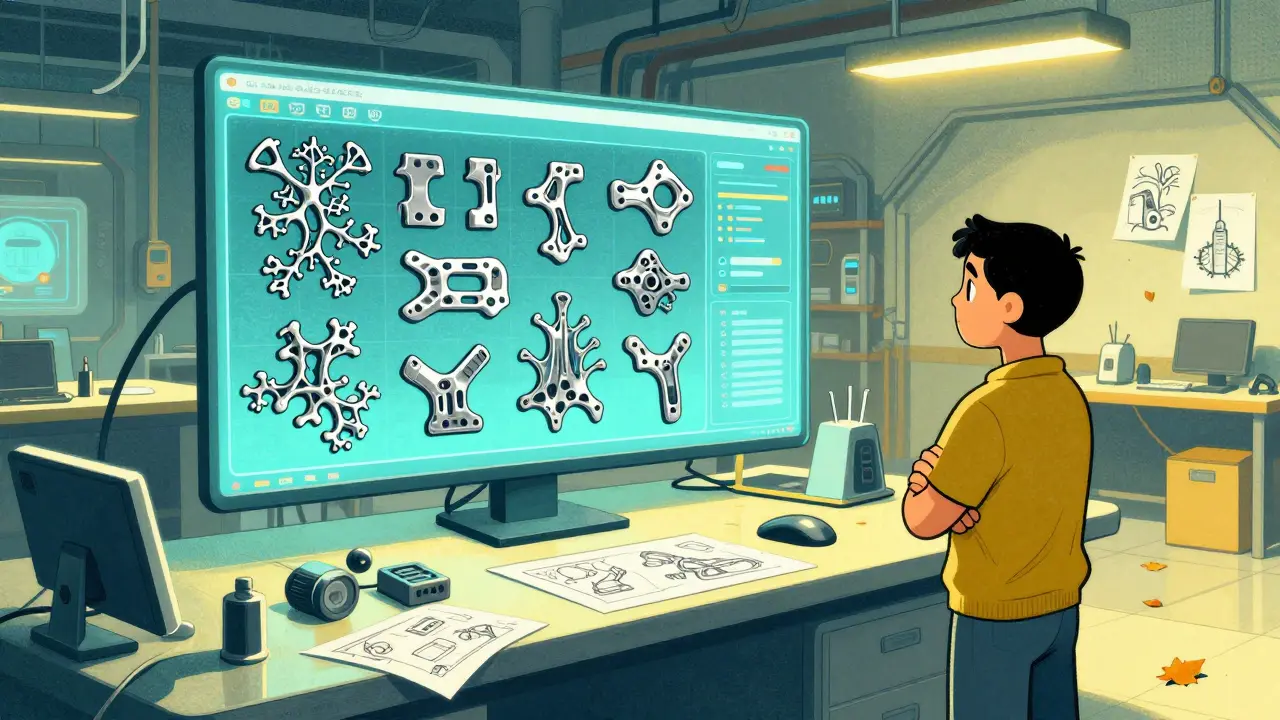

Manufacturing with Generative AI: Design, Maintenance, and Quality Control in 2026

Generative AI is transforming manufacturing by automating design, predicting equipment failures, and catching defects before they happen. By 2026, it’s no longer optional; it’s essential for efficiency, quality, and sustainability.

Open-Source Generative AI Compliance: Licenses, Attribution, and Derivative Works

Open-source generative AI offers control and privacy, but only if you follow license rules. Learn what attribution, derivative works, and commercial use really mean for your business.

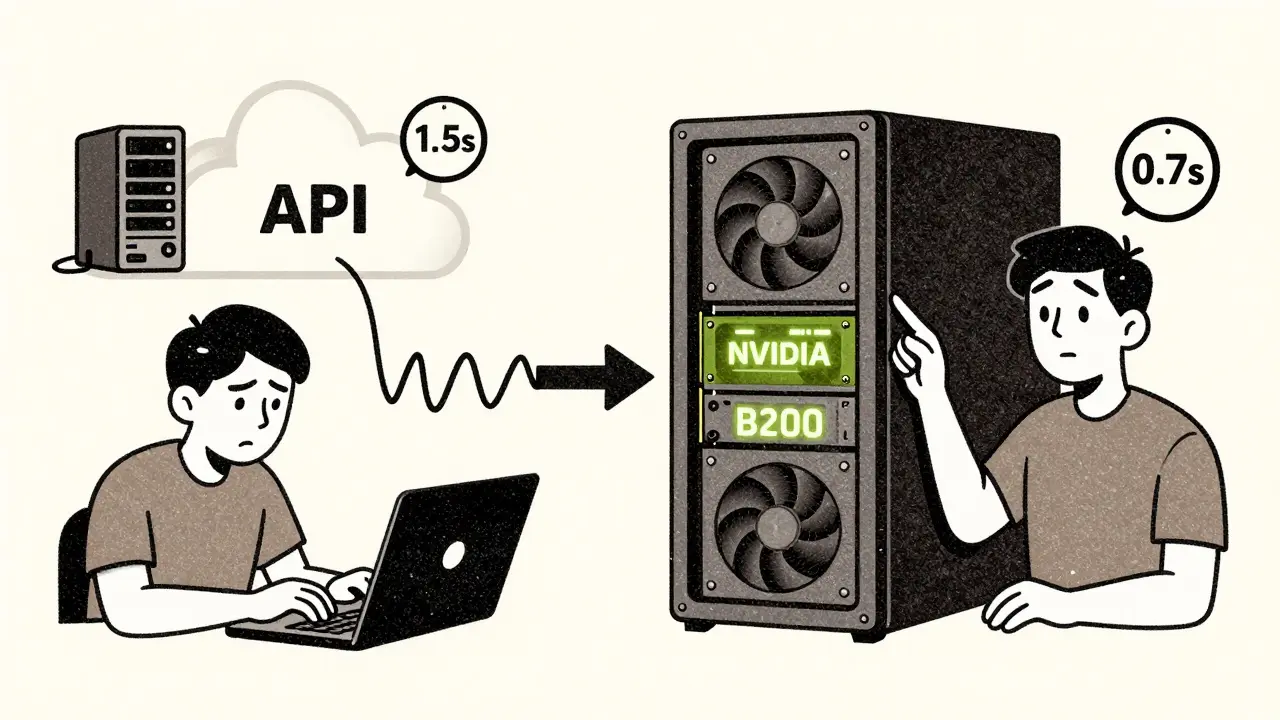

Latency and Control Tradeoffs: API LLMs vs On-Prem Deployment

Choosing between API-based LLMs and on-prem deployment affects latency, data control, cost, and scalability. Learn when to use each, and how top companies combine both for optimal results.

Consent Management in Generative AI: How Users Control Their Data

Generative AI learns from your data, but do you really control how it’s used? Learn how consent management works, what rights you have, and how to take back control over your personal information.

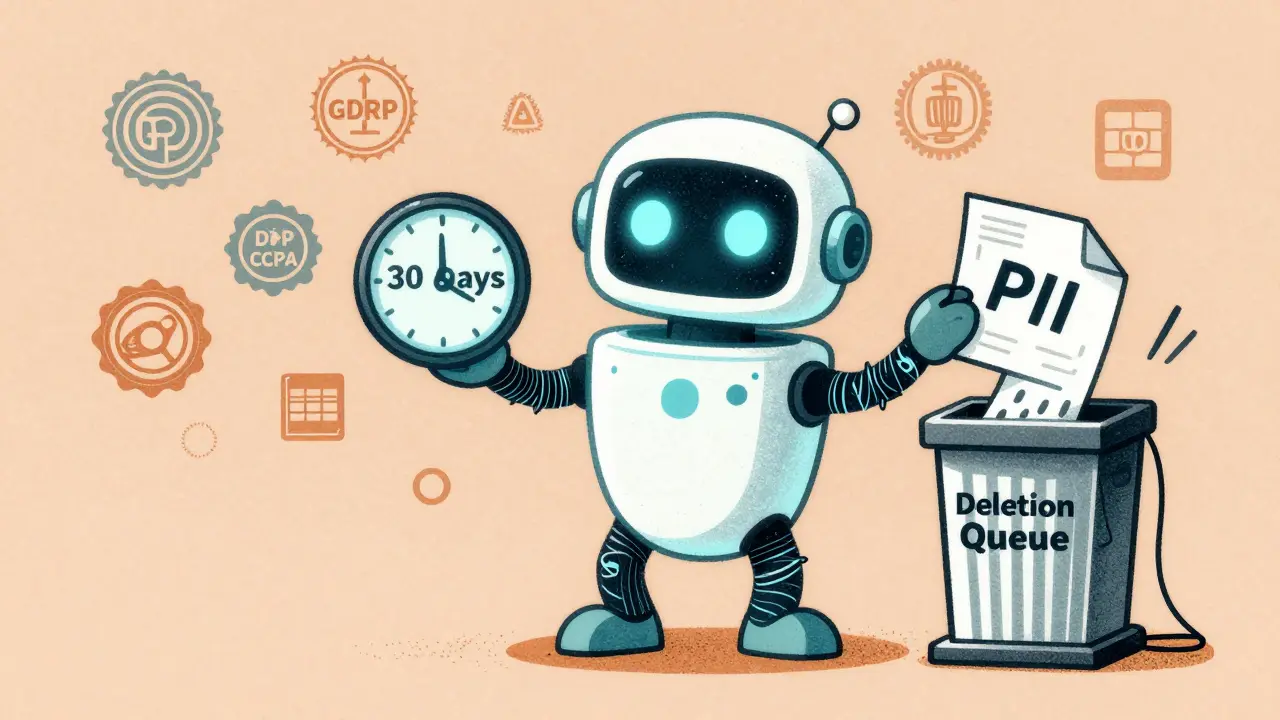

Retention and Deletion Policies for LLM Prompts and Logs: What You Need to Know

LLM prompts and logs contain sensitive data that must be retained and deleted carefully. Learn how retention timelines, encryption, and automation impact compliance and security in enterprise AI systems.

Ethical Guidelines for Deploying Large Language Models in Regulated Domains

Deploying large language models in healthcare, finance, or justice requires more than just good intentions. Ethical guidelines must include continuous monitoring, explainability, accountability, and domain-specific compliance to avoid real-world harm and legal consequences.

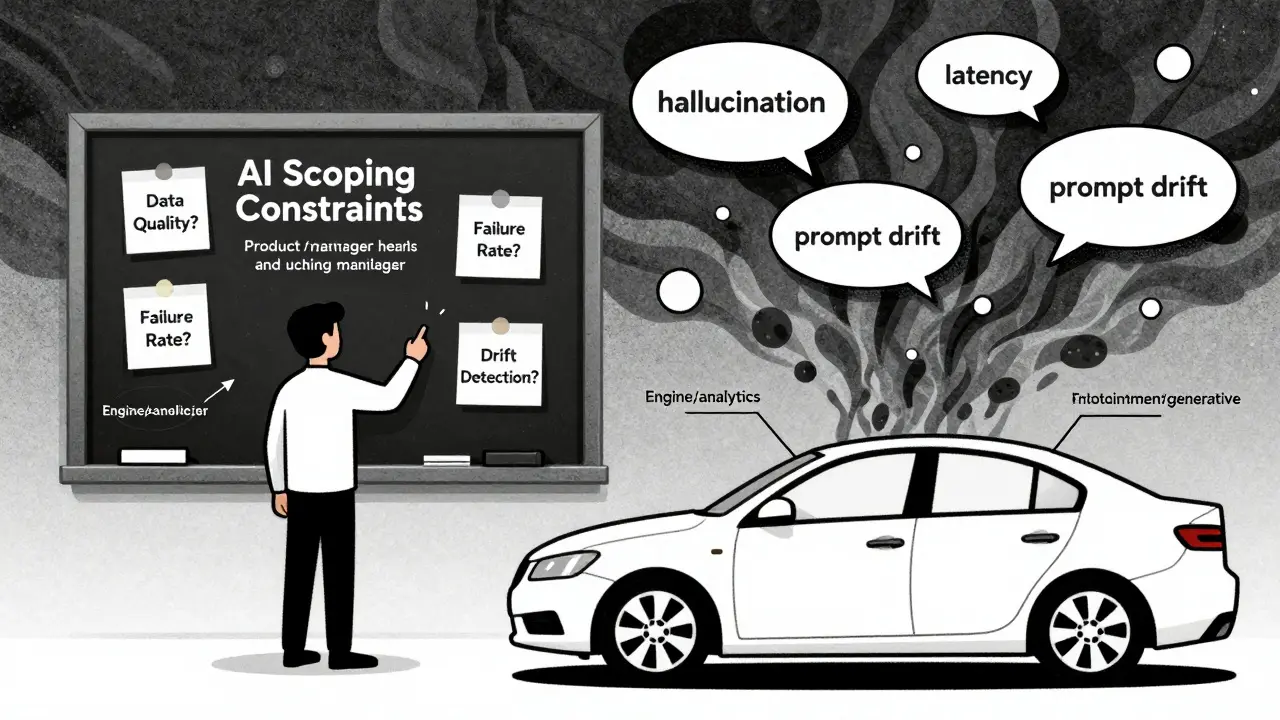

Product Management for Generative AI Features: Scoping, MVPs, and Metrics

Learn how to scope, build, and measure generative AI features without falling into common traps. Real-world strategies for MVPs, metrics, and team alignment that actually work.

Token Budgets and Quotas: How to Stop LLM Costs from Spiraling Out of Control

Token budgets and quotas are the only way to stop LLM costs from spiraling out of control. Learn how top companies use precise limits on input and output tokens to cut AI spending by up to 63%, without sacrificing performance.
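As a rough illustration of what a limit on input and output tokens can look like, here is a minimal sketch of a per-user budget check. The `TokenBudget` class and its `try_spend` method are hypothetical names invented for this example, and it assumes token counts are already known before the request is sent.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Hypothetical per-user (or per-team) token quota for one billing window."""
    limit: int      # total tokens allowed in the window
    used: int = 0   # tokens reserved so far

    def try_spend(self, input_tokens: int, max_output_tokens: int) -> bool:
        """Reserve tokens for a request; refuse it if the budget would be exceeded."""
        # Budget against the worst case: the full prompt plus the output cap.
        cost = input_tokens + max_output_tokens
        if self.used + cost > self.limit:
            return False
        self.used += cost
        return True


budget = TokenBudget(limit=1000)
print(budget.try_spend(600, 200))  # fits within the limit
print(budget.try_spend(300, 100))  # would exceed the limit, so it is refused
```

Reserving the output cap up front is a conservative choice: it can reject requests that would have finished under budget, but it never lets a long completion overshoot the limit.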

How Generative AI Is Transforming Performance Reviews and Career Paths in HR

Generative AI is transforming performance reviews and career paths by making feedback fairer, faster, and more personalized. Learn how it works, its real-world impact, and the risks HR teams must manage in 2026.

Marketing Analytics with LLMs: How AI Detects Trends and Powers Campaigns in 2026

LLMs are transforming marketing analytics by detecting trends 37% faster and cutting analysis time by 64%. Learn how top brands use AI for real-time campaign insights, the tools behind them, and why transparency and human oversight still matter in 2026.

Enterprise Adoption, Governance, and Risk Management for Vibe Coding

Enterprise vibe coding accelerates development but introduces new risks. Learn how to govern AI-generated code, enforce compliance, and manage security without slowing innovation.
