When companies start using open-source generative AI models, they think they’re getting a free tool. But freedom doesn’t mean no rules. Open-source generative AI comes with legal obligations that can trip up even experienced teams. Skip the paperwork, and you risk lawsuits, data breaches, or regulatory fines. This isn’t about being paranoid; it’s about knowing what you’re legally responsible for when you use models like LLaMA 3, Mistral, or Gemma.

What Licenses Actually Require

Not all open-source AI licenses are the same. You can’t treat them like any other free software from GitHub. Three licenses come up again and again for generative AI: Apache 2.0, MIT, and GPL. Many model releases also ship under custom licenses of their own, such as Meta’s Llama 3 Community License or Google’s Gemma Terms of Use. Each has different rules, and mixing them can break compliance.

Apache 2.0 is a permissive license that allows commercial use, requires attribution, and requires modified files to carry notices saying you changed them. Mistral AI released Mistral 7B and Mixtral 8x7B under it, and H2O.ai’s h2oGPT uses it too. If you tweak Mistral 7B to work better with your internal documents and then distribute the result, Apache 2.0 says: include the original license text, keep the upstream attribution notices, and clearly mark every modified file. No hidden edits. No silent rebranding. If you miss this, you’re in violation, even if no one finds out.

MIT License is simpler: keep the copyright notice and license text, and you’re good. There’s no requirement to mark or document your changes. But MIT contains no express patent grant. Apache 2.0 does (Section 3), and it terminates that grant for anyone who sues claiming the work infringes a patent. That missing patent language is why many enterprises avoid MIT for production AI systems.

GNU GPL is the strictest. If you modify GPL-licensed code and distribute it, you must make the complete modified source available under the GPL to everyone who receives it. Purely internal use isn’t distribution. Shipping the software to customers or partners is, and at that point the proprietary business logic built into the work has to be released with it. Most companies avoid GPL models for this reason.
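A first-pass license review can be encoded as a small rule table. The sketch below is hypothetical and deliberately simplified. The `LicenseRules` fields, the `review` helper, and the use of `CC-BY-NC-4.0` as a non-commercial example are illustrative assumptions, keyed by SPDX identifiers. The output is a list of points for a human to confirm; it doesn’t replace reading the license.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LicenseRules:
    commercial_use: bool        # may the work be used commercially?
    mark_modified_files: bool   # must changed material carry a change notice?
    express_patent_grant: bool  # does the license grant patent rights explicitly?
    copyleft: bool              # must distributed changes stay under the same license?


# Hypothetical, simplified rule table keyed by SPDX license identifiers.
RULES = {
    "Apache-2.0": LicenseRules(True, True, True, False),
    "MIT": LicenseRules(True, False, False, False),
    "GPL-3.0-only": LicenseRules(True, True, True, True),
    "CC-BY-NC-4.0": LicenseRules(False, True, False, False),
}


def review(spdx_id: str, distribute_closed_source: bool) -> List[str]:
    """Return notes a reviewer should confirm before a model is adopted."""
    rules = RULES.get(spdx_id)
    if rules is None:
        return [f"{spdx_id}: not in the rule table; send to legal review"]
    notes = []
    if not rules.commercial_use:
        notes.append(f"{spdx_id}: commercial use not permitted")
    if rules.copyleft and distribute_closed_source:
        notes.append(f"{spdx_id}: distributing changes requires releasing source under the same license")
    if rules.mark_modified_files:
        notes.append(f"{spdx_id}: mark every modified file with a change notice")
    if not rules.express_patent_grant:
        notes.append(f"{spdx_id}: no express patent grant; check patent exposure")
    return notes
```

For example, `review("GPL-3.0-only", distribute_closed_source=True)` includes the source-release warning, while `review("MIT", False)` returns only the patent note.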

Attribution Isn’t Just a Thank You

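Attribution isn’t a footnote. It’s a legal requirement. When you redistribute an Apache 2.0 model or a derivative of it, you need to:

- Include a copy of the full license text with your deployment

- Mark every modified file with a prominent notice stating that you changed it

- Carry forward the attribution notices from the upstream NOTICE file, in your own NOTICE file, your documentation, or wherever your product normally displays third-party notices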
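Two of those obligations, shipping the license text and recording changes in the modified files themselves, are mechanical enough to script. This is a minimal sketch. It assumes plain-text files with `#` comments and a release directory you control. The function names and notice wording are hypothetical, and the section numbers in the comments refer to Apache 2.0’s Section 4.

```python
from pathlib import Path
from typing import List, Optional


def mark_modified(source: str, note: str, comment_prefix: str = "#") -> str:
    """Prepend a prominent change notice to a modified file's text (Section 4(b))."""
    return f"{comment_prefix} NOTICE: This file was modified. {note}\n{source}"


def write_release_notices(
    release_dir: Path, license_text: str, upstream_notice: Optional[str]
) -> List[Path]:
    """Ship a copy of the license (4(a)) and carry forward upstream NOTICE text (4(d))."""
    release_dir.mkdir(parents=True, exist_ok=True)
    license_path = release_dir / "LICENSE"
    license_path.write_text(license_text, encoding="utf-8")
    written = [license_path]
    if upstream_notice:
        # Only needed when the upstream work shipped a NOTICE file.
        notice_path = release_dir / "NOTICE"
        notice_path.write_text(upstream_notice, encoding="utf-8")
        written.append(notice_path)
    return written
```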

One financial services firm used Mistral for customer service chatbots. They didn’t document changes. When auditors reviewed their AI system, they found 17 hidden modifications. The company had to rebuild its entire deployment, lost three months of development time, and paid legal fees. Attribution isn’t optional. It’s an audit trail.

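Some models, like Japanese-LLaMA from rinna, explicitly forbid commercial use. Others allow it but attach display requirements. Meta’s Llama 3 license, for example, covers anyone who distributes the model or makes available a product or service built on it. Those users must prominently display “Built with Meta Llama 3” on a related website, user interface, blog post, about page, or product documentation. A notice like that isn’t a feature. It’s a legal necessity.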
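If you choose to surface a notice automatically, a thin wrapper around each response payload is enough. The `NOTICES` mapping, its model keys, and the `attribution` field are hypothetical. “Built with Meta Llama 3” is the phrase Meta’s Llama 3 license asks for; the Mistral line is just an example of a courtesy notice.

```python
from typing import Any, Dict

# Hypothetical mapping from deployed base model to the notice your policy requires.
NOTICES = {
    "llama-3-8b": "Built with Meta Llama 3",
    "mistral-7b": "Based on Mistral 7B (Apache License 2.0)",
}


def with_attribution(payload: Dict[str, Any], base_model: str) -> Dict[str, Any]:
    """Return a copy of an API response with the base model's notice attached."""
    notice = NOTICES.get(base_model)
    if notice is None:
        # Fail closed: an unknown base model means the inventory is incomplete.
        raise KeyError(f"no attribution notice registered for {base_model!r}")
    return {**payload, "attribution": notice}
```

Raising on an unregistered model is a design choice: a response without a notice is treated as a bug, not a default.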

Derivative Works: When Your AI Becomes Something New

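A derivative work isn’t just a tweaked model. It’s anything that modifies or builds on the original code or weights. Fine-tuning? That’s a derivative.

Custom prompts are different. They don’t change the weights, so they don’t create a derivative work, although your use of the model is still governed by its license and any acceptable-use policy attached to it. Training a new model from scratch with only the published architecture code is different again: the code’s license covers the code, but the new weights aren’t derived from the original ones.

Companies think they’re safe if they train on private data. They’re not. If the base model is under Apache 2.0, your fine-tuned version still carries the license’s conditions. You may license your own changes on your own terms. But you can’t lock the model behind a paywall without fulfilling the attribution requirements, and you can’t sell it as your own AI without disclosing the original source.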

Take a healthcare startup that used LLaMA 3 to build a diagnostic assistant. They trained it on thousands of anonymized patient records. They thought the data made it theirs. Legally, it didn’t. The underlying model was still Meta’s LLaMA 3, governed by the Meta Llama 3 Community License, not Apache 2.0. They had to display “Built with Meta Llama 3” in their app, ship a copy of the license agreement with their product, and keep a record of every change they made. No exceptions.
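One way to make “the license follows the weights” concrete is to record each model’s lineage and walk it back to the root. The `ModelRecord` schema below is a hypothetical sketch. The point is that your own training data never removes the base model’s license from the chain.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ModelRecord:
    name: str
    license: Optional[str] = None          # license attached to this model, if any
    base: Optional["ModelRecord"] = None   # model whose weights this one started from
    training_notes: str = ""               # data used; recorded, but never changes the base license


def governing_licenses(model: ModelRecord) -> List[str]:
    """Collect every license from this model back to the root of its lineage."""
    licenses: List[str] = []
    node: Optional[ModelRecord] = model
    while node is not None:
        if node.license:
            licenses.append(node.license)
        node = node.base
    return licenses
```

For a model fine-tuned from `ModelRecord("Meta-Llama-3-8B", license="Meta Llama 3 Community License")`, `governing_licenses` still returns the Llama 3 license, however much private data went into training.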

Why Local Deployment Matters

Commercial AI APIs like ChatGPT, Gemini, or Claude are easy. But they’re risky. When you send data to OpenAI, you’re trusting them with your secrets. That’s fine for public websites. Not for medical records, legal contracts, or financial reports.

Open-source models let you run AI inside your own servers. No data leaves your network. That’s why companies in healthcare, law, and defense are shifting to local deployment. It’s not just about security; it’s about compliance with HIPAA, GDPR, and SOC 2.
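A simple guard can stop sensitive data from leaving the network by accident. This sketch assumes your inference server is addressed by an IP literal or `localhost`. It deliberately doesn’t resolve DNS names, so any other hostname is treated as external. The function names are hypothetical.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """True if the URL's host is 'localhost' or a loopback/private IP literal.

    Assumption: DNS names other than 'localhost' are treated as external,
    because this sketch does not resolve them.
    """
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return False  # a hostname we cannot vouch for without resolving it
    return addr.is_loopback or addr.is_private


def require_local(url: str) -> None:
    """Raise before any sensitive payload is sent to a non-local endpoint."""
    if not is_local_endpoint(url):
        raise PermissionError(f"refusing to send data to external endpoint: {url}")
```

Call `require_local(endpoint)` at the top of whatever function posts records to the model, so a misconfigured URL fails loudly instead of silently routing data to a cloud API.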

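Google Gemma (2B and 7B versions) runs on a single GPU. Mistral AI’s Mixtral 8x7B uses a sparse mixture-of-experts architecture. Of its roughly 46.7 billion parameters, only about 12.9 billion are used for each token, so it runs at roughly the speed and cost of a 12.9-billion-parameter dense model. These aren’t just technical specs; they’re compliance enablers. You can deploy a powerful AI without touching the cloud.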
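A back-of-the-envelope calculation shows why sparse mixture-of-experts models are cheap per token but not small in memory. The parameter counts are the figures Mistral AI published for Mixtral 8x7B (about 46.7B total, about 12.9B used per token). The 2-bytes-per-parameter figure is an assumption: 16-bit weights, ignoring activations and the KV cache.

```python
TOTAL_PARAMS = 46.7e9   # every expert must be loaded into memory
ACTIVE_PARAMS = 12.9e9  # parameters actually used for each token
BYTES_PER_PARAM = 2     # assumption: bf16/fp16 weights, no quantization


def active_fraction(total: float, active: float) -> float:
    """Share of the model's parameters that take part in one token's forward pass."""
    return active / total


def weight_memory_gb(total: float, bytes_per_param: int) -> float:
    """Memory for the weights alone, in gigabytes (10**9 bytes)."""
    return total * bytes_per_param / 1e9


print(f"active per token: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.1%}")
print(f"weights in memory: {weight_memory_gb(TOTAL_PARAMS, BYTES_PER_PARAM):.1f} GB")
```

Only about 27.6% of the parameters do work on each token. But at 16-bit precision the weights alone come to roughly 93 GB, more than fits on a single 80 GB GPU. The saving is in compute, not memory, unless you also quantize.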

Commercial vs. Open-Source: The Real Trade-Off

Microsoft Copilot (with A3/A5 licenses) offers enterprise data protection. It’s convenient. But it’s tied to Microsoft’s infrastructure. You can’t audit how your data is used. You can’t customize the model. You’re at their mercy.

Open-source gives you control. But you pay in complexity. You need engineers who understand licensing. You need systems to track modifications. You need legal teams to review every model you adopt.

Free tiers of ChatGPT and Gemini? They’re not just risky; they’re the wrong place for confidential business data. By default, OpenAI may use conversations in its consumer ChatGPT plans to train future models unless you opt out. If you feed one a confidential contract, you’re handing its contents to a third party. No one warns you about this until you’re sued.

How to Stay Compliant

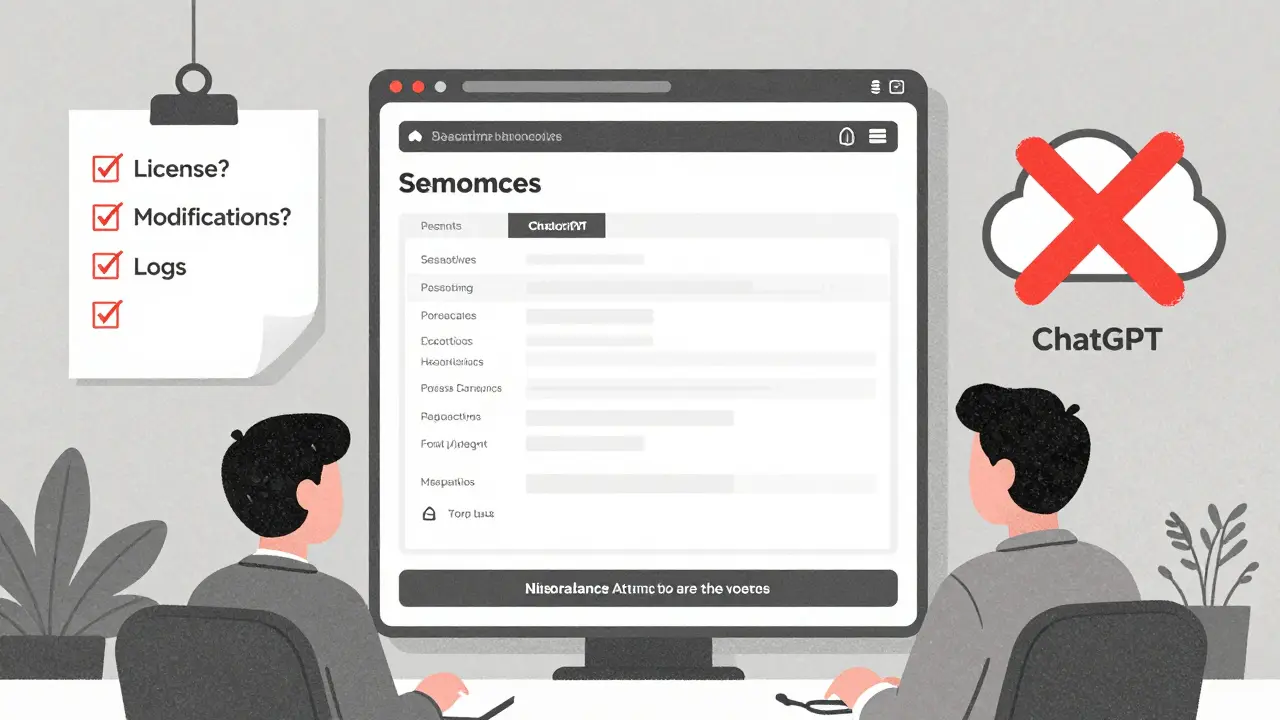

Here’s what actually works:

- Start with a license checklist. Know which license each model uses before you download it.

- Use a model inventory tool. Track every model you deploy, where it came from, and what changes you made.

- Automate attribution. Build a system that inserts license notices into AI outputs automatically.

- Never use GPL models for commercial products unless you’re ready to open-source everything.

- Train on private data? Document the training process. Include the base model name, version, and license in your audit logs.

- Test before you deploy. Run a compliance scan on your AI stack quarterly.
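A model inventory doesn’t need special tooling to be useful. The sketch below uses a hypothetical `InventoryEntry` schema and a `scan` function you might run from a scheduled job each quarter. The field names and checks are assumptions drawn from the list above, not a complete audit.

```python
from dataclasses import dataclass, field
from typing import List

# Illustrative set of copyleft SPDX identifiers; extend it to match your policy.
COPYLEFT = {"GPL-2.0-only", "GPL-2.0-or-later", "GPL-3.0-only",
            "GPL-3.0-or-later", "AGPL-3.0-only", "AGPL-3.0-or-later"}


@dataclass
class InventoryEntry:
    name: str
    version: str
    license: str                      # SPDX identifier or license name; "" if unknown
    source_url: str                   # where the weights were downloaded from
    modifications: List[str] = field(default_factory=list)  # change log entries
    fine_tuned: bool = False
    training_record: str = ""         # pointer to the documented training process
    commercial_product: bool = False  # does it ship in something you sell?


def scan(entries: List[InventoryEntry]) -> List[str]:
    """Return one finding per gap; an empty list means nothing was flagged."""
    findings = []
    for e in entries:
        label = f"{e.name}@{e.version}"
        if not e.license:
            findings.append(f"{label}: license unknown")
        if e.license in COPYLEFT and e.commercial_product:
            findings.append(f"{label}: copyleft model in a commercial product")
        if e.fine_tuned and not e.training_record:
            findings.append(f"{label}: fine-tuned but training process undocumented")
        if e.fine_tuned and not e.modifications:
            findings.append(f"{label}: fine-tuned but no modifications logged")
    return findings
```

Keeping the inventory as plain data means the same records can feed the quarterly scan, the audit log, and the attribution notices.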

H2O.ai’s h2oGPT helps by bundling models with built-in license tracking. It supports PDFs, Word docs, and emails-and auto-generates attribution logs. That’s not magic. It’s intentional design.

What Happens When You Ignore This

A European SaaS company used an open-source LLM to automate contract reviews. They didn’t attribute the model. A competitor found the unlicensed code in their app and sued for copyright infringement. The court ordered them to shut down the AI feature and pay $2.1 million in damages. The model was LLaMA 2, released under Meta’s Llama 2 Community License.

Another company in Canada used a modified Mistral model for customer support. They didn’t document changes. When the EU’s AI Act came into force, regulators flagged them for non-compliance. They had to rebuild their entire system under Apache 2.0 and pay €480,000 in fines.

These aren’t edge cases. They’re becoming standard enforcement actions.

Where to Start

If you’re thinking about using open-source AI:

- Read the license. Don’t assume. Don’t skip.

- Choose models with clear commercial use terms: Gemma, Mistral, Mixtral, OpenHermes.

- Deploy locally. Avoid cloud APIs for sensitive data.

- Build attribution into your workflow, not as an afterthought, but as a core requirement.
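Building attribution into the workflow can be as small as a CI step that fails any release missing its notice files. In this sketch, the required file names (`LICENSE`, `NOTICE`) and the default `dist` directory are assumptions about your release layout.

```python
import sys
from pathlib import Path
from typing import List

# Assumption: your release policy requires both files in every artifact directory.
REQUIRED = ("LICENSE", "NOTICE")


def missing_notices(release_dir: Path) -> List[str]:
    """Names of required notice files that are absent or empty in release_dir."""
    missing = []
    for name in REQUIRED:
        path = release_dir / name
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(name)
    return missing


def main(argv: List[str]) -> int:
    """CI entry point: return 1 (failing the step) if any notice file is missing."""
    release_dir = Path(argv[1]) if len(argv) > 1 else Path("dist")
    missing = missing_notices(release_dir)
    for name in missing:
        print(f"missing or empty: {release_dir / name}", file=sys.stderr)
    return 1 if missing else 0
```

Calling `sys.exit(main(sys.argv))` from a small script in your pipeline makes a missing or empty notice file fail the build.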

Open-source AI isn’t free. It’s a responsibility. The best models aren’t the ones with the highest scores. They’re the ones you can legally use, and defend.

Can I use LLaMA 3 for my business?

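Yes, as long as you follow the Meta Llama 3 Community License. You can use LLaMA 3 in production, but you can’t claim you built it. If you distribute the model, or offer a product or service built on it, you must prominently display “Built with Meta Llama 3” on a related website, user interface, blog post, about page, or product documentation. You also can’t use the model or its outputs to improve other large language models. Check Meta’s official license page for the latest terms.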
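Those conditions can be turned into a pre-release check. This sketch paraphrases three of them: the “Built with Meta Llama 3” display, a copy of the license agreement shipped with distributed materials, and the rule that a distributed fine-tuned model’s name must begin with “Llama 3”. The `Llama3Release` fields are hypothetical, and the license text itself is authoritative.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Llama3Release:
    model_name: str           # name of the fine-tuned model, if you distribute one
    distributes_model: bool   # are Llama-derived weights shared with anyone else?
    shows_built_with: bool    # "Built with Meta Llama 3" on a site, UI, blog post, about page, or docs
    ships_license_copy: bool  # copy of the license agreement included with the weights


def release_issues(r: Llama3Release) -> List[str]:
    """List unmet conditions for a product or model built on Llama 3 (paraphrased)."""
    issues = []
    if not r.shows_built_with:
        issues.append('display "Built with Meta Llama 3"')
    if r.distributes_model and not r.ships_license_copy:
        issues.append("include a copy of the license agreement")
    if r.distributes_model and not r.model_name.startswith("Llama 3"):
        issues.append('distributed model name must begin with "Llama 3"')
    return issues
```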

Do I need to attribute if I only use the model internally?

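Often not, strictly speaking, but document everything anyway. Apache 2.0’s conditions apply when you redistribute the work or a derivative of it, so purely internal use usually doesn’t trigger its notice requirements. That boundary is easy to cross. Sharing a fine-tuned model with another company, or shipping it inside software your customers install, counts as redistribution. Other licenses draw the line differently: Meta’s Llama 3 license, for example, also applies when you make a product or service that uses the model available to others. Record the base model, its license, and every modification even for internal deployments, so you’re ready when your use changes.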

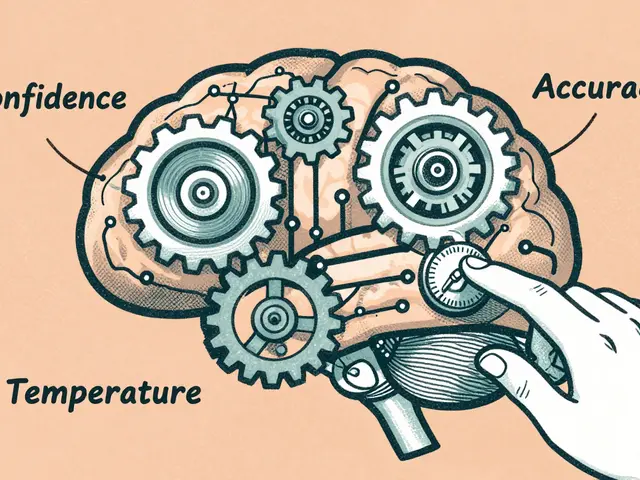

What’s the difference between fine-tuning and training from scratch?

Fine-tuning starts with an existing model and adjusts its weights using your data. That’s still a derivative work under most licenses. Training from scratch means building a new model without using the original weights. That’s usually not a derivative, but it’s extremely rare. Most businesses fine-tune, which means they inherit the original license obligations.

Can I sell a product built on an open-source AI model?

Yes, if the license allows commercial use and you follow attribution rules. Apache 2.0, MIT, and BSD licenses permit sales. GPL does too, but you must make the complete corresponding source code available to everyone who receives the product. Always verify the license before building a product. Selling without compliance is a lawsuit waiting to happen.

Is Google Gemma safer than ChatGPT for enterprise use?

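Yes, if you deploy it locally. Gemma’s weights are released under Google’s Gemma Terms of Use, which permit commercial use, and you can run it on your own servers without sending data to Google. With ChatGPT, consumer plans, free and paid, may use your conversations for training unless you opt out. OpenAI’s business offerings, such as ChatGPT Team, ChatGPT Enterprise, and the API, don’t train on your data by default. For compliance, local deployment beats cloud access every time.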

poonam upadhyay

24 February, 2026 - 18:07 PM

Oh my god, this post is like a horror movie for legal teams-except the monster is a tiny Apache 2.0 license file buried in a GitHub repo. I once used Mistral for a client project and thought, 'Eh, it's just a model, who cares?' Two weeks later, my lawyer called me at 3 a.m. screaming about 'unattributed derivative works.' I had to delete 14 fine-tuned models, rewrite 300 pages of documentation, and apologize to my CTO like I'd set his cat on fire. Attribution isn't optional. It's a tattoo you didn't ask for but now can't remove.

Shivam Mogha

24 February, 2026 - 20:59 PM

Just read the license. Done.

mani kandan

25 February, 2026 - 21:10 PM

I appreciate how this breaks down the real-world consequences, not just legal jargon but actual business fallout. The part about the European SaaS company losing $2.1 million? That’s not a cautionary tale. That’s a wake-up call wrapped in a court order. I’ve seen teams skip attribution because ‘no one’s checking,’ only to get flagged during a vendor audit. It’s not about trust; it’s about traceability. And honestly? Automating attribution isn’t a luxury anymore. It’s the new DevOps baseline. If your CI/CD pipeline doesn’t spit out license notices with every build, you’re already behind.

Also, local deployment isn’t just about security; it’s about sovereignty. When your AI runs on your hardware, your data stays yours. No cloud vendor can claim ownership, no TOS can bury you in fine print. Gemma on a single GPU? That’s not just efficient; it’s empowering.

And yes, fine-tuning = derivative. Always. Even if you change one weight. The license doesn’t care how ‘minor’ you think it is. That’s the whole point: the original creators didn’t give you freedom to ignore them. They gave you freedom to build, on their terms.

One thing I’d add: start a model inventory spreadsheet. Not a fancy tool. Just a Google Sheet. Model name. License. Version. Date deployed. Changes made. Who approved it. That’s your first line of defense. Simple. Sustainable. Survival-ready.

Rahul Borole

27 February, 2026 - 01:21 AM

Thank you for this comprehensive and urgently necessary overview. The rise of open-source generative AI has outpaced institutional compliance frameworks, and the consequences are no longer theoretical. Organizations that treat licensing as an afterthought are not merely taking risks; they are actively exposing themselves to existential liability.

It is imperative that legal, engineering, and procurement teams operate in synchronized alignment. A single unattributed model can trigger regulatory scrutiny, contractual breach, and reputational damage that spans global jurisdictions. The examples cited, from the EU AI Act penalties to the $2.1 million litigation, are not outliers; they are harbingers.

Our enterprise has implemented a mandatory pre-deployment compliance checklist modeled after H2O.ai’s framework, integrated directly into our Kubernetes deployment manifests. Every containerized AI service now carries embedded license metadata, automated attribution headers in API responses, and a signed attestation from the responsible engineer. This is not overhead. This is due diligence.

Furthermore, we have discontinued the use of all MIT-licensed models in production environments. While permissive, the lack of patent protection renders them unacceptable for commercial deployment. Apache 2.0 remains our gold standard. GPL remains off-limits unless full open-sourcing of proprietary logic is strategically aligned with business objectives, something we have yet to encounter.

Proactive compliance is not a cost center. It is a competitive advantage. Companies that institutionalize this discipline will outlast those who gamble on negligence.

Sheetal Srivastava

27 February, 2026 - 09:15 AM

Ugh, I can't believe we're still having this conversation. The entire premise is so... basic. If you're deploying generative AI without a full-scale IP governance framework, you're not a technologist; you're a liability magnet. And let's be honest, most teams don't even understand what a 'derivative work' means in the context of weight-space interpolation. It's not just fine-tuning; it's semantic entanglement. You're not 'training' on data; you're co-creating a latent space that inherits the original model's DNA. That's not a metaphor. That's a legal fact under copyright jurisprudence.

And attribution? Please. You think slapping a footer in your UI is enough? No. You need cryptographic provenance trails. Chain-of-custody logs. Model lineage graphs. NIST SP 800-190 compliance. If you're not using SBOMs (Software Bill of Materials) with SPDX identifiers for every LLM variant, you're operating in a legal gray zone that's about to get painted red by regulators.

And don't even get me started on 'internal use.' That's a myth perpetuated by engineers who think GDPR doesn't apply to their sandbox. Wrong. If your fine-tuned LLaMA 3 is processing EU citizen data, even anonymously, it triggers Article 30 obligations. You need a DPA. You need a Data Protection Impact Assessment. You need a legal opinion signed by someone who actually read the GDPR recitals.

Open-source AI isn't free. It's a compliance minefield with a glittery logo. And if you're not ready to invest in a full-stack legal engineering team? Then don't touch it. Seriously. Walk away. The cost of a single violation isn't just financial; it's career-ending.