Best PHP AI Scripts - Page 6

Scaling for Reasoning: How Thinking Tokens Are Rewriting LLM Performance Rules

Thinking tokens are transforming how LLMs reason by targeting inference-time bottlenecks. Unlike traditional scaling, they boost accuracy on math and logic tasks without retraining, but at a high compute cost.

Measuring Hallucination Rate in Production LLM Systems: Key Metrics and Real-World Dashboards

Learn how to measure hallucination rates in production LLM systems using real-world metrics like semantic entropy and RAGAS. Discover what works, what doesn’t, and how top companies are reducing factuality risks in 2025.

Why Multimodality Is the Next Big Leap in Generative AI

Multimodal AI combines text, images, audio, and video to understand context the way humans do, making generative AI smarter, faster, and more accurate than text-only systems. Here's how it's already changing healthcare, customer service, and marketing.

Code Ownership Models for Vibe-Coded Repos: Avoiding Orphaned Modules

Vibe coding with AI tools like GitHub Copilot is speeding up development but leaving behind orphaned modules that no one understands or owns. Learn the three proven ownership models that prevent production disasters and how to enforce them today.

Threat Modeling for Vibe-Coded Applications: A Practical Workshop Guide

Vibe coding speeds up development but introduces serious security risks. This guide shows how to run a lightweight threat modeling workshop to catch AI-generated flaws before they reach production.

Legal and Licensing Considerations for Deploying Open-Source Large Language Models

Open-source LLMs can save millions in API costs, but only if you follow the license rules. Learn how MIT, Apache 2.0, and GPL licenses affect commercial use, training data risks, and compliance steps to avoid lawsuits.

Model Compression Economics: How Quantization and Distillation Cut LLM Costs by 90%

Quantization and distillation cut LLM inference costs by up to 95%, enabling affordable AI on edge devices and budget clouds. Learn how these techniques work, when to use them, and what hardware you need.

How to Prevent Sensitive Prompt and System Prompt Leakage in LLMs

System prompt leakage is now a top AI security threat, letting attackers steal hidden instructions from LLMs. Learn how to stop it with proven techniques like output filtering, instruction defense, and external guardrails.

Multimodal Generative AI: Models That Understand Text, Images, Video, and Audio

Multimodal generative AI understands text, images, audio, and video together, making it smarter than older AI systems. Learn how models like GPT-4o and Llama 4 work, where they’re used, and why they’re changing industries in 2025.

Retail and Generative AI: How AI Is Transforming Product Copy, Merchandising, and Visual Assets

Generative AI is transforming retail by automating product copy, personalizing merchandising, and creating virtual try-ons. Learn how top retailers are using AI to boost conversions, cut costs, and stay ahead without losing brand voice.

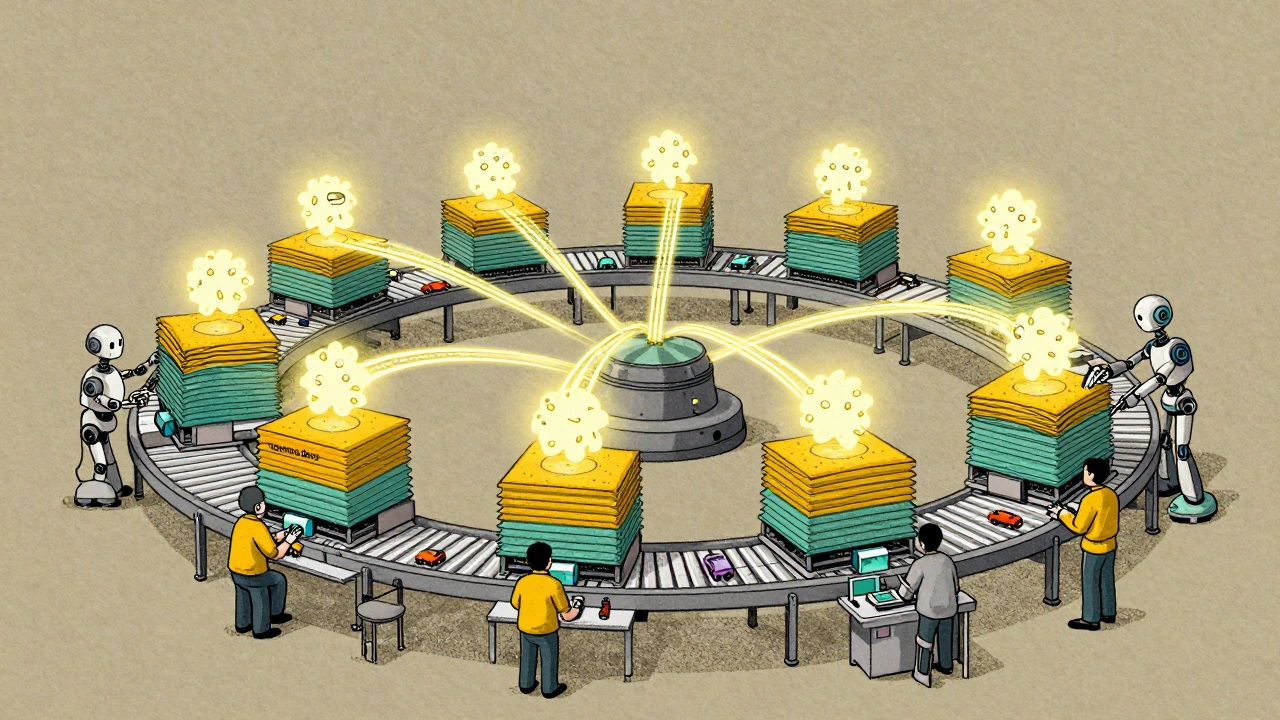

Model Parallelism and Pipeline Parallelism in Large Generative AI Training

Model and pipeline parallelism enable training of massive generative AI models by splitting them across multiple GPUs. Learn how these techniques overcome GPU memory limits and power models like GPT-3 and Claude 2.

Few-Shot vs Fine-Tuned Generative AI: How Product Teams Should Decide

Learn how product teams should choose between few-shot learning and fine-tuning for generative AI. Real cost, performance, and time comparisons for practical decision-making.
