Best PHP AI Scripts - Page 4

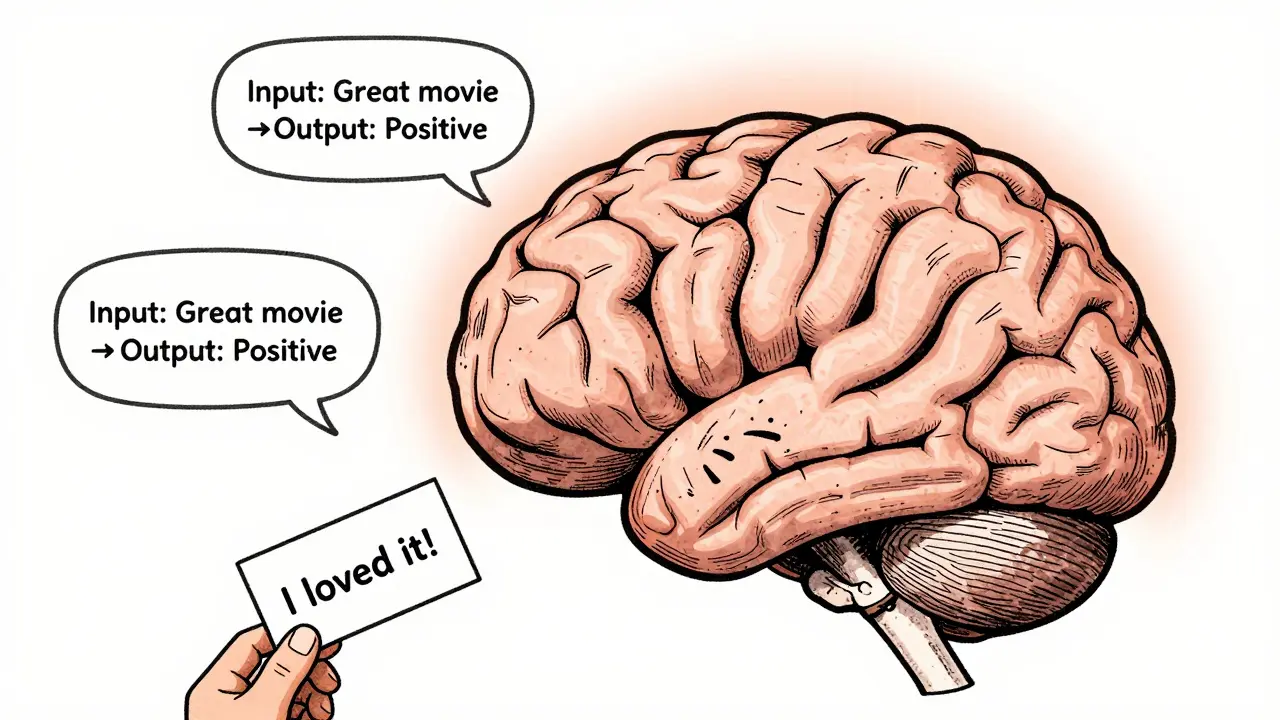

In-Context Learning in Large Language Models: How LLMs Learn from Prompts Without Training

In-context learning lets large language models perform new tasks just by seeing examples in prompts, with no training needed. Discover how it works, why it's replacing fine-tuning, and how to use it effectively.

Read More

Curriculum and Blending: How to Mix Datasets for Better Large Language Models

Curriculum learning improves LLM performance by sequencing training data from simple to complex. This method boosts accuracy, cuts compute costs, and works best on structured tasks like math and code. It's becoming standard in modern AI training pipelines.

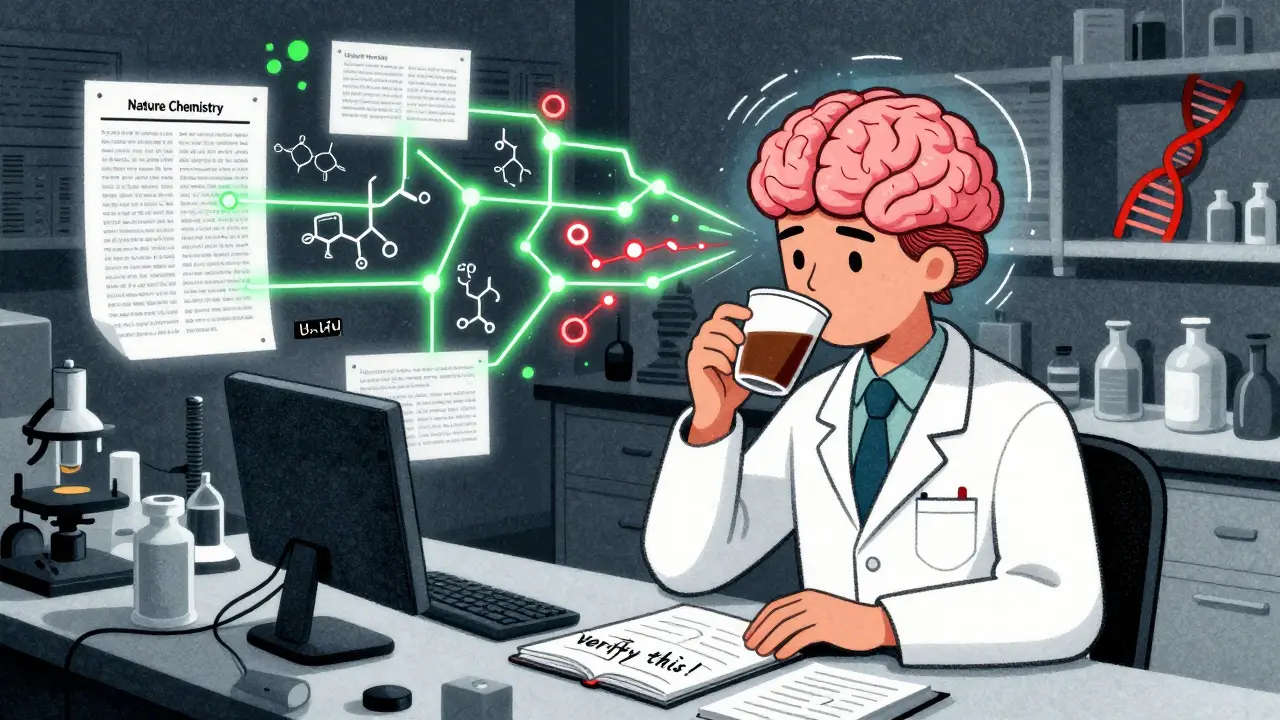

Read More

Scientific Workflows with Large Language Models: How Hypotheses and Methods Are Changing Research

Large language models are transforming scientific research by automating literature reviews, generating hypotheses, and designing experiments. But they come with serious risks: hallucinations, errors, and overreliance. Learn how Sci-LLMs work, where they excel, and how to use them safely.

Read More

Internal Marketplaces for Vibe-Coded Components and Services

Internal marketplaces for vibe-coded components let non-engineers build and share AI-generated tools safely. With proper governance, companies save hundreds of hours, reduce shadow IT, and turn every employee into a creator.

Read More

Long-Context Risks in Generative AI: Distortion, Drift, and Lost Salience

Long-context AI models can process massive amounts of text, but they struggle with distortion, drift, and lost salience, especially in the middle of documents. Learn how these risks undermine reliability and what's being done to fix them.

Read More

Post-Training Quantization for Large Language Models: 8-Bit and 4-Bit Methods Explained

Post-training quantization cuts LLM memory use and speeds up inference by 2-3x without retraining. Learn how 8-bit and 4-bit methods like SmoothQuant, AWQ, and GPTQ make it possible, and what you need to know to use them.

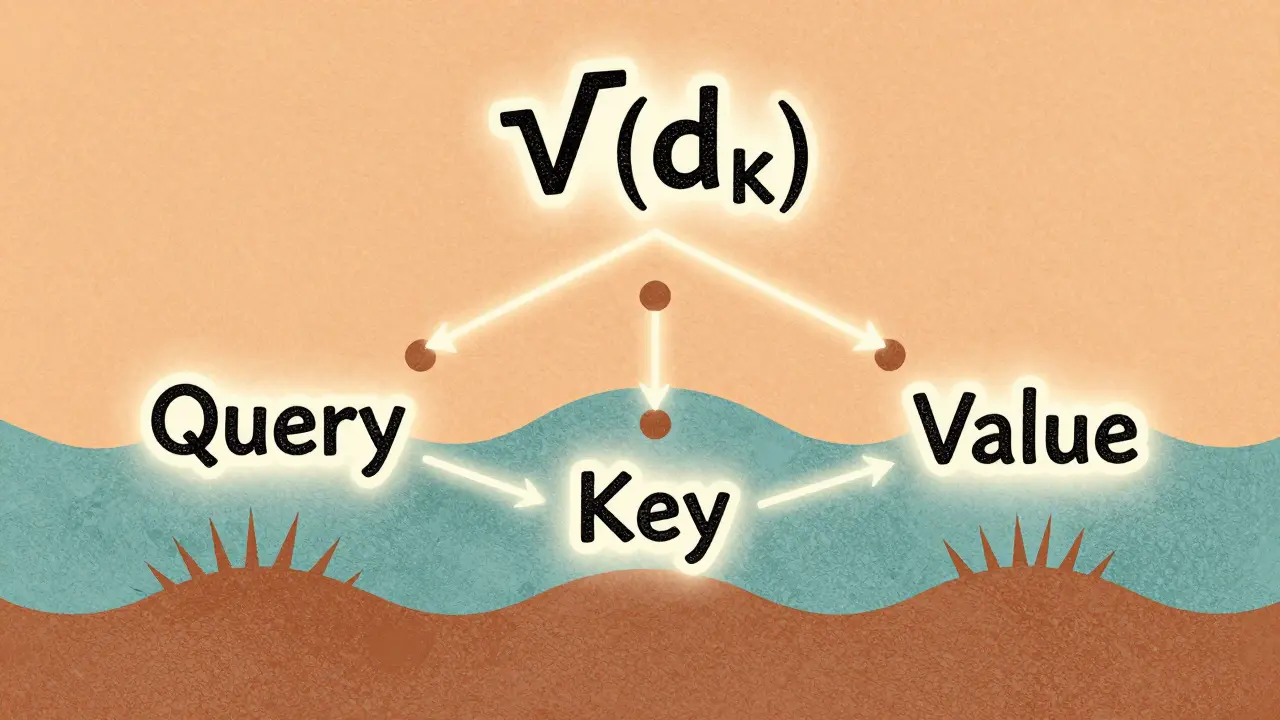

Read More

Scaled Dot-Product Attention Explained for Large Language Model Practitioners

Scaled dot-product attention is the core mechanism behind modern LLMs like GPT and Llama. Learn why the 1/√(d_k) scaling is non-negotiable, how it prevents training collapse, and what pitfalls to avoid in practice.

Read More

Ethical AI Agents for Code: How Guardrails Enforce Policy by Default

Ethical AI agents for code are designed to refuse illegal or unethical commands by default, using policy-as-code architecture to enforce compliance without human intervention. This approach is becoming the new standard for trustworthy AI in government, finance, and development.

Read More

Safety by Design in Generative AI: How to Embed Protections into Product Architecture

Safety by Design embeds child protection and harm prevention directly into generative AI architecture, from training data to real-time filtering. This isn't optional. It's the only way to build AI that doesn't become a weapon.

Read More

Transparency and Explainability in Large Language Model Decisions

Transparency and explainability in large language models are critical for trust and fairness. Without knowing how decisions are made, AI risks reinforcing bias and eroding public trust - especially in high-stakes areas like finance and healthcare.

Read More

Data Augmentation for LLM Fine-Tuning: Synthetic and Human-in-the-Loop Approaches

Data augmentation boosts LLM fine-tuning by generating realistic training examples using synthetic methods and human feedback. Learn how synthetic data and human-in-the-loop approaches improve accuracy, reduce costs, and work with LoRA for efficient model adaptation.

Read More

Citations and Sources in Large Language Models: What They Can and Cannot Do

LLMs can generate convincing citations, but most are fake. Learn why LLMs hallucinate sources, how often they get them wrong, and how to use these models safely without trusting their references.

Read More