Remember when writing a simple API endpoint took an hour of boilerplate? That era is dead. By early 2026, developers aren't just asking Large Language Models to write snippets; they are handing over entire repositories to models like GPT-5.2 or Gemini 3 Pro. The shift isn't just about speed; it's about scale. But with great power comes a messy reality: your AI assistant might introduce a critical security flaw while refactoring your authentication logic. You need to know what these models can actually do, where they break, and how to keep your codebase secure.

The State of Code Generation in 2026

We have moved past the "wow" factor of GPT-3. Today, code generation is a utility, like electricity. The difference lies in precision. In January 2026, benchmarks like LiveCodeBench set a clear bar: scores above 85% indicate excellent capability, while scores above 70% are considered production-ready for most tasks. Leading closed-source models clear that bar comfortably: OpenAI’s GPT-5.2 scores 89%, showing near-human reliability on standard programming challenges.

But it’s not just about raw accuracy. It’s about context. Google’s Gemini 3 family changed the game with its massive context window of up to 2 million tokens. This allows you to upload an entire library, video instructions, and multiple folders simultaneously. The model doesn’t just see a function; it sees the whole project. This holistic view helps identify bugs caused by interactions across dozens of files, something older models struggled with because they only looked at isolated snippets.
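
To judge whether a repository will actually fit in a window that size, a rough estimate is usually enough. The sketch below walks a directory and divides the character count by four, a common rule of thumb for English text and code. Real tokenizers differ by model, so `CHARS_PER_TOKEN`, the extension list, and the 2-million-token budget are all assumptions to adjust.

```python
import os

CHARS_PER_TOKEN = 4          # rough heuristic; real tokenizers vary per model
CONTEXT_BUDGET = 2_000_000   # assumed window size, from the figure above
SOURCE_EXTENSIONS = {".py", ".ts", ".js", ".go", ".rs", ".java", ".md"}

def estimate_tokens(text: str) -> int:
    """Approximate token count from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)

def estimate_repo_tokens(root: str) -> int:
    """Sum estimated tokens over source files under root."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Skip VCS metadata and vendored dependencies; they rarely help the model.
        dirnames[:] = [d for d in dirnames if d not in {".git", "node_modules"}]
        for name in filenames:
            if os.path.splitext(name)[1] in SOURCE_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    total += estimate_tokens(f.read())
    return total

def fits_in_context(root: str, budget: int = CONTEXT_BUDGET) -> bool:
    """True if the estimated repository size is within the token budget."""
    return estimate_repo_tokens(root) <= budget
```

Calling `fits_in_context("path/to/repo")` gives a quick go/no-go before you pay to upload a whole project; when it returns `False`, send only the directories relevant to the bug.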

Open Source vs. Closed Source: The Real Trade-offs

You might think paying for API access is the easiest route, but the open-source landscape has caught up dramatically. Models like Alibaba’s Qwen3.5-397B-A17B and Zhipu AI’s GLM-5 now rival proprietary tools in performance. GLM-5, for instance, achieves state-of-the-art scores on software engineering benchmarks like SWE-bench. It uses DeepSeek Sparse Attention (DSA) to reduce compute costs while handling long contexts efficiently.

| Model | Type | Key Strength | Context Window | Best For |
|---|---|---|---|---|
| GPT-5.2 | Closed | Reasoning & Accuracy (89% on LiveCodeBench) | Standard | General purpose, rapid prototyping |
| Gemini 3 Pro | Closed | Holistic Project View | Up to 2 Million Tokens | Complex refactoring, multi-file debugging |
| GLM-5 | Open Source | Agent Workflows & Cost Efficiency | Long (via DSA) | Enterprise self-hosting, terminal coding |
| Ling-1T | Open Source | Front-end Aesthetics & Tool Use | 128K Native | UI/UX generation, visual components |

Choosing between them depends on your constraints. If you prioritize privacy and control, self-hosting GLM-5 or Qwen3.5 makes sense, though you’ll need serious GPU infrastructure (think 1TB+ memory for ultra-long contexts). If you want convenience and don’t mind vendor lock-in, GPT-5.2 offers a frictionless experience via simple API calls. However, be aware that closed-source pricing can be unpredictable, and you lose visibility into how the model processes your data.
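
One way to make these trade-offs explicit is a small routing function that your tooling consults before sending a request. The sketch below is hypothetical: the `Task` fields, the 128K threshold, and the backend labels are illustrative assumptions, not vendor guidance or real API model IDs.

```python
from dataclasses import dataclass

@dataclass
class Task:
    contains_proprietary_code: bool
    estimated_tokens: int

GENERAL_CONTEXT_LIMIT = 128_000    # assumed point where a large-context model pays off
LARGE_CONTEXT_LIMIT = 2_000_000    # Gemini 3 Pro figure quoted above

def choose_backend(task: Task) -> str:
    """Return a backend label (placeholders, not real model IDs)."""
    if task.contains_proprietary_code:
        # Privacy outranks convenience: keep the code on your own hardware,
        # chunking the work if it exceeds what your deployment can hold.
        return "self-hosted (GLM-5 or Qwen3.5)"
    if task.estimated_tokens > LARGE_CONTEXT_LIMIT:
        return "split the task"        # too big for any single window
    if task.estimated_tokens > GENERAL_CONTEXT_LIMIT:
        return "cloud: Gemini 3 Pro"
    return "cloud: GPT-5.2"
```

Checking privacy first is the key design choice: a large context window is never a reason to send code that is not allowed to leave your infrastructure.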

The Rise of Autonomous Coding Agents

The biggest shift in 2026 isn't just generating code; it's agents acting on it. Autonomous agents can clone repositories, create branches, write code, run tests, and fix errors until they succeed. GLM-5 excels here, focusing heavily on complex agent workflows including web browsing and terminal-based coding environments. This means the AI doesn't just suggest a fix; it implements it, runs the test suite, and iterates if it fails.
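
The implement, test, iterate cycle can be sketched as a bounded loop. In this minimal version, `propose_patch` is a hypothetical stand-in for a model call and the tests are plain Python callables; a real agent would edit files and shell out to the project's test runner instead.

```python
from typing import Callable, List, Optional, Tuple

Candidate = Callable[[int], int]
# A test returns an error message on failure, or None on success.
Test = Callable[[Candidate], Optional[str]]

def run_tests(candidate: Candidate, tests: List[Test]) -> List[str]:
    """Run every test and collect failure messages."""
    failures = []
    for test in tests:
        try:
            err = test(candidate)
        except Exception as exc:  # a crashing candidate is just another failure
            err = f"exception: {exc!r}"
        if err:
            failures.append(err)
    return failures

def agent_loop(propose_patch: Callable[[List[str]], Candidate],
               tests: List[Test], max_iters: int = 5) -> Tuple[Optional[Candidate], int]:
    """Propose, test, and feed failures back until tests pass or the budget ends."""
    feedback: List[str] = []
    for i in range(1, max_iters + 1):
        candidate = propose_patch(feedback)   # hypothetical model call
        feedback = run_tests(candidate, tests)
        if not feedback:
            return candidate, i               # success after i attempts
    return None, max_iters                    # give up and escalate to a human

# Hypothetical stub model: buggy on the first try, fixed once it sees failures.
def stub_propose(feedback: List[str]) -> Candidate:
    return (lambda x: x * 2) if feedback else (lambda x: x * 2 + 1)

tests: List[Test] = [lambda f: None if f(3) == 6 else f"f(3) returned {f(3)}, expected 6"]
fixed, attempts = agent_loop(stub_propose, tests)
```

The `max_iters` cap matters as much as the loop itself: without it, an agent that cannot find a fix will keep spending compute indefinitely.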

InclusionAI’s Ling-1T represents another frontier. With a trillion-parameter architecture using Mixture-of-Experts (MoE), it achieves around 70% tool-call accuracy without extensive fine-tuning. It ranks first among open-source models on ArtifactsBench because it produces not just functional code but visually refined front-end layouts. This hybrid approach, combining syntax, function, and aesthetics, is crucial for modern full-stack development.
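
The efficiency of Mixture-of-Experts comes from sparse routing: a gate scores every expert, but only the top k actually run for each token. The toy layer below shows that mechanism with scalar "experts" and hand-picked gate logits. It is a conceptual sketch of top-k gating in general, not Ling-1T's router, whose expert count and k are not given here.

```python
import math
from typing import Callable, List, Tuple

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)                              # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x: float, gate_logits: List[float],
                experts: List[Callable[[float], float]], k: int = 2) -> Tuple[float, List[int]]:
    """Send x to the k experts with the highest gate logits and mix their outputs."""
    # In a real layer the logits come from a learned projection of the token state.
    top = sorted(range(len(experts)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Renormalize the gate weights over the selected experts only.
    weights = softmax([gate_logits[i] for i in top])
    # Only the selected experts run; the others cost no compute for this token.
    y = sum(w * experts[i](x) for w, i in zip(weights, top))
    return y, top

experts = [lambda v: v, lambda v: 2 * v, lambda v: -v, lambda v: v * v]
y, used = moe_forward(3.0, gate_logits=[0.1, 2.0, -1.0, 1.0], experts=experts, k=2)
print(used, round(y, 3))   # experts 1 and 3 ran; 0 and 2 were skipped
```

With k fixed, compute per token stays roughly constant as you add experts, which is how MoE models grow total parameters without a matching growth in inference cost.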

Security Risks You Can't Ignore

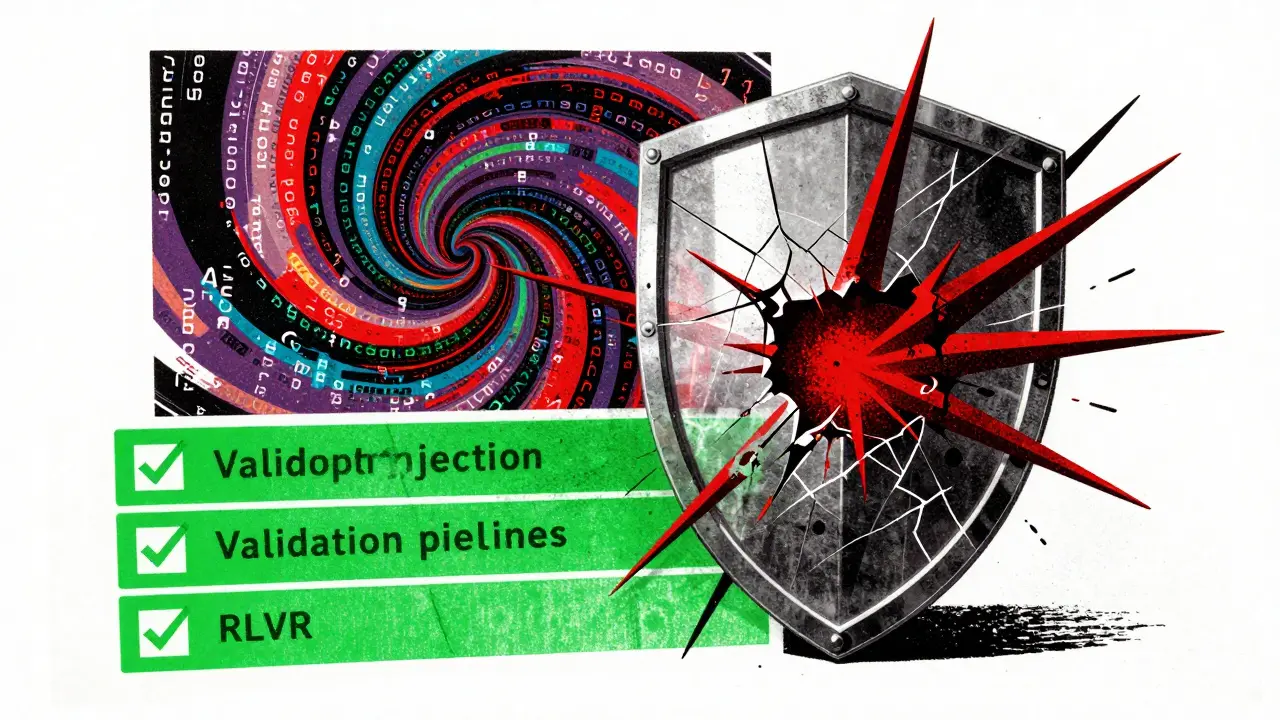

Here is the hard truth: LLMs are probabilistic engines, not deterministic compilers. They hallucinate. When applied to code, this means they can generate vulnerable code that looks correct. Common risks include:

- Prompt Injection Vulnerabilities: Malicious inputs can trick the model into ignoring safety guidelines or revealing internal logic.

- Data Leakage: If you feed proprietary code into a public API, there is always a risk that fragments could end up in future training datasets unless you use enterprise-grade isolation.

- Outdated Practices: Models trained on vast corpora may suggest deprecated libraries or insecure patterns that were common years ago but are now dangerous.

- Adversarial Attacks: Attackers can craft specific prompts to force the model to generate backdoors or bypass authentication checks.
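
To make "vulnerable code that looks correct" concrete, here is a classic pattern an assistant can produce: building SQL with string formatting. Both functions below return the right row for ordinary input, but only the parameterized one survives a crafted username. The table and data are made up for the demo; the `?` placeholder is the style Python's built-in `sqlite3` module uses.

```python
import sqlite3

def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
    conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 0), ("root", 1)])
    return conn

def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # Looks fine, but the input is spliced into the SQL text itself.
    query = f"SELECT name, is_admin FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()

def find_user_safe(conn: sqlite3.Connection, name: str):
    # The driver passes the value separately, so it cannot change the query.
    return conn.execute(
        "SELECT name, is_admin FROM users WHERE name = ?", (name,)
    ).fetchall()

conn = make_db()
attack = "nobody' OR '1'='1"
leaked = find_user_unsafe(conn, attack)   # the OR clause matches every row
blocked = find_user_safe(conn, attack)    # no user has that literal name
```

Tests that only use normal names like `"alice"` pass for both versions, which is exactly why this bug slips through review when nobody tests hostile input.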

The integration of agentic workflows adds another layer of risk. If an agent has terminal access and is tasked with "fixing the bug," it might inadvertently execute a destructive command or install a malicious package if the underlying model misinterprets the intent. This is one reason Reinforcement Learning from Verifiable Rewards (RLVR) is becoming critical. RLVR trains AI systems using measurable, verifiable feedback rather than subjective human preferences. Code suits this well because much of its correctness can be checked automatically: if the tests pass and the security scan is clean, the reward is positive. This shift toward verification is essential for building trust in autonomous coding.
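
A common mitigation for the terminal-access risk is a policy check between the agent and the shell. The sketch below allowlists a few tools and subcommands and rejects shell metacharacters. The allowlist itself is a hypothetical example you would tune per project, and it complements, rather than replaces, running the agent in a sandbox.

```python
import shlex
from typing import List, Tuple

# Hypothetical policy: tools the agent may run; None means any arguments.
ALLOWED = {
    "pytest": None,
    "ls": None,
    "git": {"status", "diff", "add", "commit"},   # no push, reset, or clean
}
FORBIDDEN_CHARS = set(";|&`$><")   # blocks chaining, substitution, redirects

def check_command(cmd: str) -> Tuple[bool, str]:
    """Return (allowed, reason) for a command line proposed by an agent."""
    if any(c in FORBIDDEN_CHARS for c in cmd):
        return False, "shell metacharacters are not allowed"
    try:
        argv: List[str] = shlex.split(cmd)
    except ValueError as exc:       # e.g. unbalanced quotes
        return False, f"unparseable: {exc}"
    if not argv:
        return False, "empty command"
    tool, args = argv[0], argv[1:]
    if tool not in ALLOWED:
        return False, f"{tool!r} is not on the allowlist"
    subcommands = ALLOWED[tool]
    if subcommands is not None and (not args or args[0] not in subcommands):
        return False, f"{tool} subcommand not allowed"
    return True, "ok"
```

An allowlist is deliberately stricter than a denylist: `pip install` is refused simply because `pip` was never approved, so the agent cannot pull in an unvetted package.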

Implementation Strategies for Developers

To leverage these capabilities safely, adopt a hybrid approach. Use local models for sensitive, small-scale tasks where privacy is paramount. Route complex architectural planning or large-scale refactoring to powerful cloud providers like Gemini 3 Pro, which can handle the entire codebase context. Always enforce strict validation pipelines. Never commit AI-generated code directly to production. Run it through static analysis tools, security scanners, and manual review.
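
As a cheap first gate before dedicated security scanners and human review, Python's standard `ast` module can flag calls that often appear in insecure or outdated generated code. The denylist below is a small hypothetical sample, not a complete rule set.

```python
import ast
from typing import List, Tuple

# Hypothetical sample of risky calls, matched by dotted name as written in source.
RISKY_CALLS = {
    "eval": "arbitrary code execution",
    "exec": "arbitrary code execution",
    "pickle.loads": "unsafe deserialization of untrusted data",
    "hashlib.md5": "weak hash; avoid for passwords or signatures",
    "os.system": "shell injection risk; prefer subprocess with a list",
}

def dotted_name(node: ast.AST) -> str:
    """Turn Name/Attribute chains like os.path.join into 'os.path.join'."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = dotted_name(node.value)
        return f"{base}.{node.attr}" if base else ""
    return ""

def scan(source: str) -> List[Tuple[int, str, str]]:
    """Return (line, call, reason) for each risky call found in source."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = dotted_name(node.func)
        if name in RISKY_CALLS:
            findings.append((node.lineno, name, RISKY_CALLS[name]))
        # subprocess.*(..., shell=True) is another frequent offender.
        if name.startswith("subprocess.") and any(
            kw.arg == "shell" and isinstance(kw.value, ast.Constant) and kw.value.value is True
            for kw in node.keywords
        ):
            findings.append((node.lineno, name, "shell=True enables injection"))
    return sorted(findings)

generated = """
import hashlib, subprocess
digest = hashlib.md5(password.encode()).hexdigest()
subprocess.run(user_cmd, shell=True)
"""
for line, call, reason in scan(generated):
    print(f"line {line}: {call} -> {reason}")
```

Because it matches names as written, an aliased import such as `from hashlib import md5` slips through; that gap is one reason this belongs in front of a real scanner, not in place of one.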

Also, consider the specialization trend. General-purpose models are good, but specialized models for specific ecosystems, like Rust or Swift experts, understand memory management and security nuances better. As the market matures, expect general-purpose models to coexist with deep specialists for particular languages.

Future Trajectory

Looking ahead, the focus will shift from raw parameter counts to efficiency and trust. MoE architectures will become standard, allowing larger models to run faster and cheaper. Multimodal integration will let models understand code in visual contexts, such as UI mockups or system diagrams. Most importantly, security and verifiability will become non-negotiable features. As code generation moves into mission-critical applications, the industry will demand higher standards for transparency and reliability.

Is GPT-5.2 better than open-source models for coding?

GPT-5.2 leads in reasoning and accuracy with an 89% score on LiveCodeBench, making it excellent for general-purpose tasks. However, open-source models like GLM-5 and Qwen3.5 now match or exceed it in specific areas like cost-efficiency, context handling, and agent workflows. Choose GPT-5.2 for ease of use and top-tier reasoning; choose open-source for privacy, customization, and lower inference costs.

What is the biggest security risk of using LLMs for code generation?

The biggest risk is introducing subtle vulnerabilities that look correct. LLMs can hallucinate insecure patterns, suggest deprecated libraries, or be tricked by prompt injection attacks. Additionally, feeding proprietary code into public APIs poses data leakage risks. Always validate AI-generated code with automated security scans and manual reviews.

How does Gemini 3 Pro's context window help with debugging?

Gemini 3 Pro supports up to 2 million tokens, allowing it to ingest entire projects, including multiple files, folders, and even video instructions. This holistic view enables the model to identify bugs resulting from interactions across different parts of the codebase, which smaller context windows miss because they only analyze isolated snippets.

What is RLVR in the context of code generation?

Reinforcement Learning from Verifiable Rewards (RLVR) is a training method that uses objective, measurable feedback instead of subjective human preferences. For code, this means the model is rewarded based on whether the code passes tests, compiles correctly, or meets security standards. This improves reliability and reduces hallucinations in critical applications.
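
The reward signal can be as simple as a binary check. The sketch below runs a candidate solution together with its tests in a separate Python process and returns 1.0 only if every assertion passes within a time limit. The binary reward, the timeout, and the use of a plain subprocess rather than a hardened sandbox are simplifying assumptions, not a description of any particular training pipeline.

```python
import subprocess
import sys

def verifiable_reward(candidate_code: str, test_code: str, timeout_s: float = 5.0) -> float:
    """Return 1.0 if the candidate passes its tests, else 0.0.

    Syntax errors, failed assertions, crashes, and timeouts all score 0.0.
    """
    program = candidate_code + "\n\n" + test_code
    try:
        result = subprocess.run(
            [sys.executable, "-c", program],
            capture_output=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return 0.0                      # infinite loops earn nothing
    return 1.0 if result.returncode == 0 else 0.0

tests = "assert add(2, 3) == 5\nassert add(-1, 1) == 0"
good = "def add(a, b):\n    return a + b"
buggy = "def add(a, b):\n    return a - b"
```

Here `verifiable_reward(good, tests)` scores 1.0 and `verifiable_reward(buggy, tests)` scores 0.0, with no human judgment involved in either case.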

Can I self-host these large models on my own hardware?

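Yes, but it requires significant resources. Frontier open-weight models like Qwen3.5-397B-A17B and GLM-5 can need 1TB or more of GPU memory for ultra-long contexts. Their architectures help with speed and cost: Qwen3.5-397B-A17B is a Mixture-of-Experts (MoE) model that activates only about 17B of its 397B parameters per token, and GLM-5 uses DeepSeek Sparse Attention to cut long-context compute. Every parameter still has to be held in memory, though, so substantial hardware remains a requirement. Many teams use a hybrid approach, running smaller models locally and routing heavy tasks to the cloud.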
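
A back-of-the-envelope calculation shows why. Weight memory is roughly total parameters times bytes per parameter, and with MoE every expert must stay resident even though only a fraction is active per token. The sketch below applies this to the 397B total and 17B active parameters implied by the Qwen3.5-397B-A17B name. It ignores the KV cache and activations, which grow with context length and push the real total higher.

```python
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(total_params: float, precision: str = "bf16") -> float:
    """Memory for model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return total_params * BYTES_PER_PARAM[precision] / 1e9

TOTAL_PARAMS = 397e9    # every expert must be loaded
ACTIVE_PARAMS = 17e9    # parameters used per token (the "A17B" in the name)

for precision in ("bf16", "int8", "int4"):
    print(f"{precision}: ~{weight_memory_gb(TOTAL_PARAMS, precision):,.0f} GB of weights")
print(f"active fraction per token: {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")
```

At BF16 the weights alone come to about 794 GB, so once a long-context KV cache is added, the 1TB-class figure is plausible; quantizing to 4 bits cuts the weight footprint to roughly a quarter of that, usually at some cost in accuracy.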