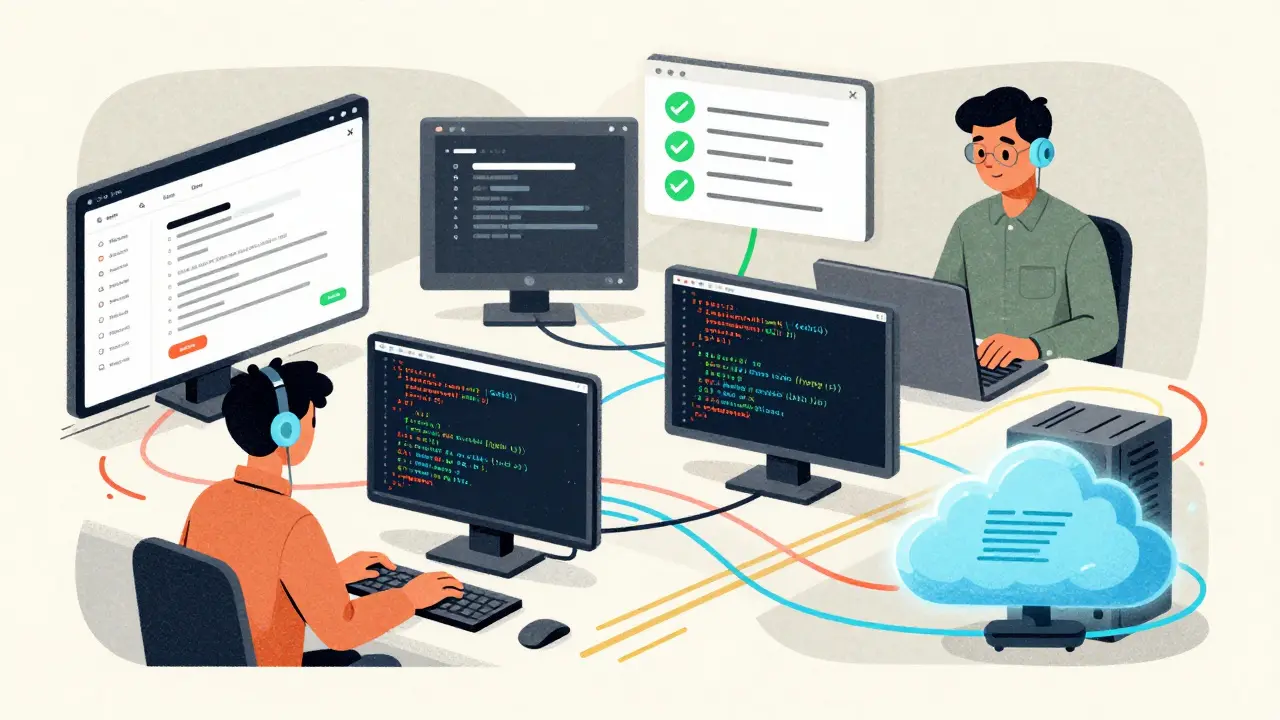

Imagine you’re trying to build a website from scratch. You could ask one AI assistant to handle everything: design, code, testing, deployment. But it’s like asking one person to be a designer, a coder, a QA tester, and a project manager all at once. It’s messy. It’s slow. And it often fails. Now imagine instead that you assign each task to a specialist: one agent designs the UI, another writes clean React code, a third runs automated tests, and a fourth deploys to the cloud, all talking to each other in real time, correcting mistakes, and building on each other’s work. That’s what multi-agent systems with LLMs are doing today. They’re not just smarter AI. They’re organized teams of AI agents, each with a job, working together like a well-oiled machine.

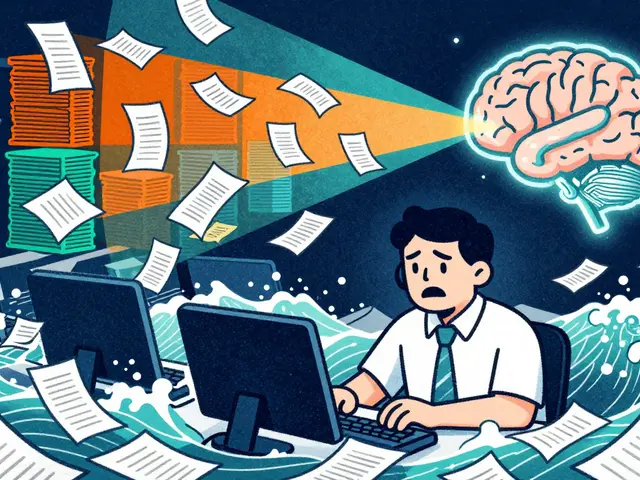

Why Single LLMs Hit Their Limits

Single large language models (LLMs) are powerful. They can write essays, answer questions, and even generate code. But when you ask them to solve something complex (analyzing climate data, debugging a 10,000-line software system, or planning a supply chain across five continents), they start to struggle. Why? Because they’re trying to do too much at once. They don’t have memory that lasts. They lose context. They hallucinate. And they get stuck in loops. Research from OpenReview (K3n5jPkrU6) and the arXiv survey (2501.06322) shows that when you push a single LLM beyond 5,000 tokens of context, its accuracy drops sharply. That’s why engineers stopped relying on one model to do everything. The breakthrough came when teams started breaking big problems into smaller pieces and assigning each piece to a different agent.

How Multi-Agent Systems Work

At its core, a multi-agent system with LLMs has four key parts:

- Task Breakdown: The system receives a high-level request (e.g., “Design a sustainable energy grid for a city”) and splits it into subtasks.

- Role Assignment: Each subtask gets assigned to an agent with a specific role, such as Data Analyst, Urban Planner, Cost Estimator, or Regulatory Compliance Checker.

- Collaboration: Agents communicate with each other, share findings, challenge assumptions, and refine outputs. This isn’t just back-and-forth messaging. It’s structured reasoning.

- Output Assembly: A final agent gathers all contributions, checks for contradictions, and delivers a polished result.
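The four parts above can be sketched in a few lines of Python. This is a minimal illustration, not any framework's API: `fake_llm`, `break_down`, `collaborate`, `assemble`, and `run_pipeline` are hypothetical names, the roles and subtasks are hard-coded, and the "model" is a stub that only echoes its prompt.

```python
from typing import Callable

LLM = Callable[[str, str], str]


def fake_llm(role: str, prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return f"[{role}] {prompt}"


def break_down(request: str) -> dict[str, str]:
    """Task breakdown and role assignment: map each subtask to a role."""
    return {
        "Data Analyst": f"collect demand data for '{request}'",
        "Cost Estimator": f"estimate the budget for '{request}'",
    }


def collaborate(plan: dict[str, str], llm: LLM) -> list[str]:
    """Collaboration: each agent is told how many findings came before it."""
    findings: list[str] = []
    for role, subtask in plan.items():
        shared = f" (prior findings: {len(findings)})" if findings else ""
        findings.append(llm(role, subtask + shared))
    return findings


def assemble(findings: list[str]) -> str:
    """Output assembly: a real assembler would also check for contradictions."""
    return "\n".join(findings)


def run_pipeline(request: str, llm: LLM = fake_llm) -> str:
    return assemble(collaborate(break_down(request), llm))


report = run_pipeline("a sustainable energy grid")
print(report)
```

In a real system the breakdown step would itself be a model call, and the collaboration step would pass the actual findings to later agents rather than a count.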

Three Leading Frameworks and How They Differ

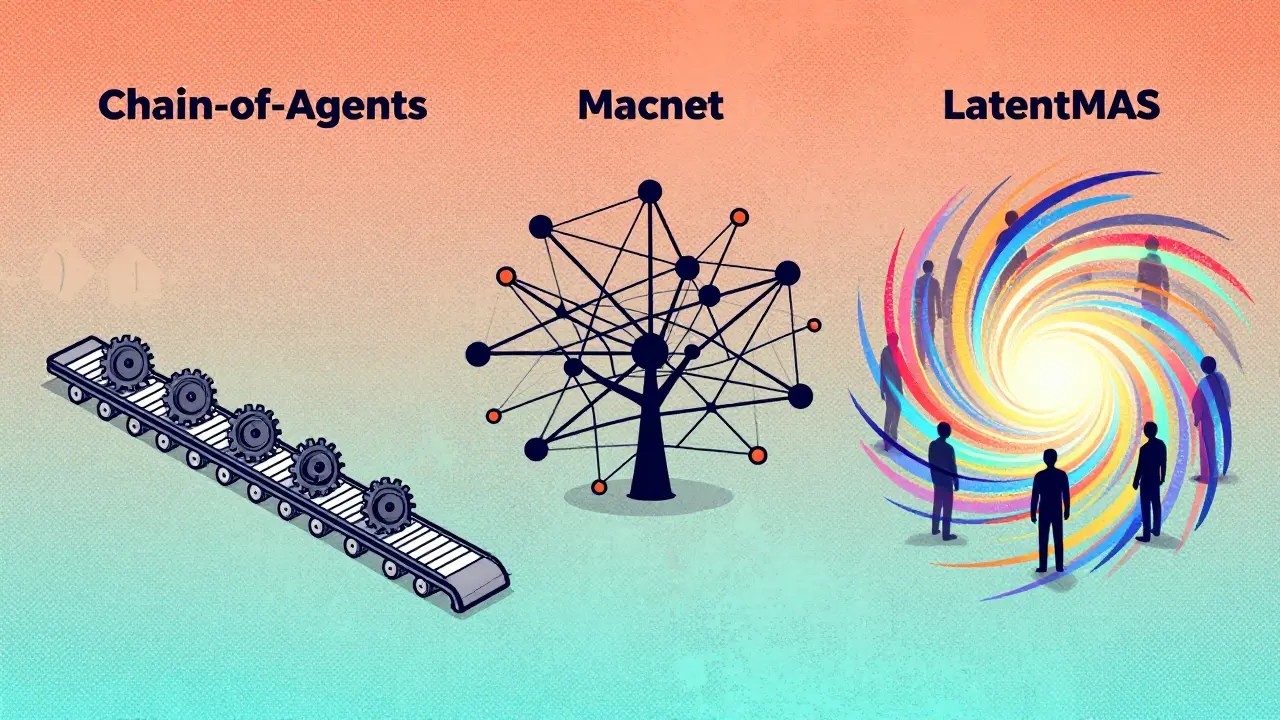

As of late 2025, three major frameworks dominate the space, each with a unique approach:

| Framework | Core Approach | Strengths | Weaknesses | Token Efficiency |
|---|---|---|---|---|
| Chain-of-Agents (CoA) | Sequential collaboration | 10.4% better than RAG on long-context tasks; simple to implement | 35% higher API cost than single-agent; slow for parallel tasks | Standard (no reduction) |
| MacNet | Directed acyclic graph (DAG) topology | 15.2% improvement on creative tasks; scales to 1,000+ agents | 2.3x slower with 100 agents; complex setup | Standard (no reduction) |
| LatentMAS | Latent space collaboration (no text exchange) | 14.6% higher accuracy; 70.8% fewer tokens; 4x faster inference | Harder to debug; requires advanced training | 70.8%-83.7% reduction |

Chain-of-Agents, introduced by Google in January 2025, works like an assembly line. Agent 1 writes a draft. Agent 2 refines it. Agent 3 fact-checks. It’s reliable, transparent, and easy to trace. But every step means another API call, and that adds up in cost.
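The assembly-line hand-off can be sketched as a loop that feeds each stage's output into the next and records a trace. This follows the draft, refine, fact-check description above rather than Google's published implementation; `run_chain` and the three lambda stages are hypothetical stand-ins for model calls.

```python
from typing import Callable

Stage = Callable[[str], str]


def run_chain(task: str, stages: list[tuple[str, Stage]]) -> tuple[str, list[str]]:
    """Run stages in order, passing each output to the next stage."""
    trace: list[str] = []
    current = task
    for name, stage in stages:
        current = stage(current)  # in a real system, one model call per stage
        trace.append(f"{name} -> {current}")
    return current, trace


# Stub stages; each would wrap a prompt and an API call in practice.
stages = [
    ("drafter", lambda text: f"draft of {text}"),
    ("refiner", lambda text: f"refined {text}"),
    ("fact_checker", lambda text: f"checked {text}"),
]

final, trace = run_chain("solar report", stages)
print(final)
print(f"model calls: {len(trace)}")
```

The trace is what makes this pattern easy to audit, and its length is also the number of billable calls, which is where the extra cost comes from.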

MacNet, developed by OpenBMB, uses a network of agents connected like a web. Some agents talk to five others. Some only talk to one. This irregular structure actually performs better than rigid hierarchies. It’s ideal for creative tasks like simulating social dynamics or designing new products. But setting up the network? That’s a full-time job.
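To make the DAG idea concrete, here is a small sketch that runs agents in dependency order using Python's standard `graphlib` module. It is not MacNet's code: the topology, the agent names, and `run_agent` are invented for illustration, and the agent is a stub rather than a model call.

```python
from graphlib import TopologicalSorter

# Hypothetical topology: each agent maps to the set of agents it depends on.
graph = {
    "researcher": set(),
    "designer": {"researcher"},
    "critic": {"researcher"},
    "editor": {"designer", "critic"},
}


def run_agent(name: str, inputs: dict[str, str]) -> str:
    """Stub agent: reports which upstream outputs it received."""
    upstream = ", ".join(sorted(inputs)) or "the task"
    return f"{name} built on {upstream}"


def run_dag(graph: dict[str, set[str]]) -> dict[str, str]:
    """Execute every agent after all of its dependencies have finished."""
    outputs: dict[str, str] = {}
    for name in TopologicalSorter(graph).static_order():
        inputs = {dep: outputs[dep] for dep in graph[name]}
        outputs[name] = run_agent(name, inputs)
    return outputs


outputs = run_dag(graph)
print(outputs["editor"])
```

Agents with no dependency between them (here `designer` and `critic`) could run in parallel; this sketch runs them one at a time for simplicity.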

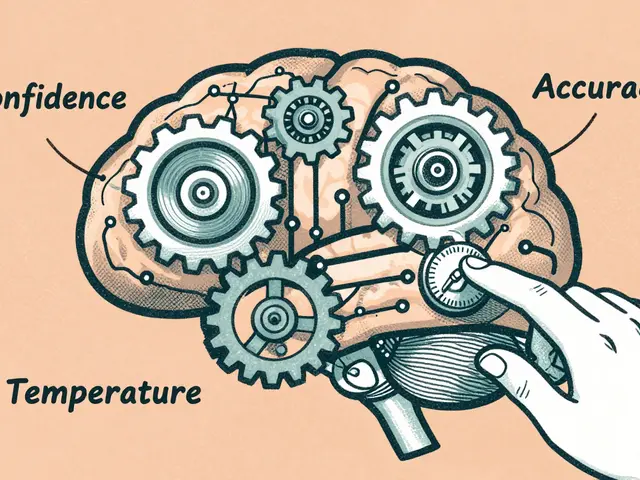

LatentMAS is the wildcard. Instead of agents sending text back and forth, they exchange compressed numerical vectors in a shared “latent space.” Think of it like two people thinking in pictures instead of words. This cuts down on token usage dramatically: up to 83.7% fewer tokens. That’s huge for companies billed per token. It’s also faster. But because there’s no text to read, debugging becomes a black box. You know it works. You just don’t know why.

Real-World Use Cases

These systems aren’t just lab experiments. They’re already being used in production:

- Climate Modeling: SuperAnnotate deployed a 12-agent system that continuously exchanges weather, satellite, and policy data. It now predicts local flooding risks with 92% accuracy, up from 68% with a single model.

- Enterprise Software Debugging: A Fortune 500 company used MacNet to analyze a legacy Java system. One agent mapped dependencies. Another scanned for security flaws. A third suggested fixes. The result? 47 hours of manual work cut to 6 hours.

- Legal Document Review: An AI law firm now uses a five-agent team: one reads contracts, another flags clauses, a third checks jurisdiction rules, a fourth compares to past cases, and a fifth writes a summary. Accuracy jumped 31%.

These aren’t hypotheticals. They’re happening now. And they’re not replacing humans; they’re giving humans superpowers. Legal teams now review 10x more documents. Engineers fix bugs before users even notice them.

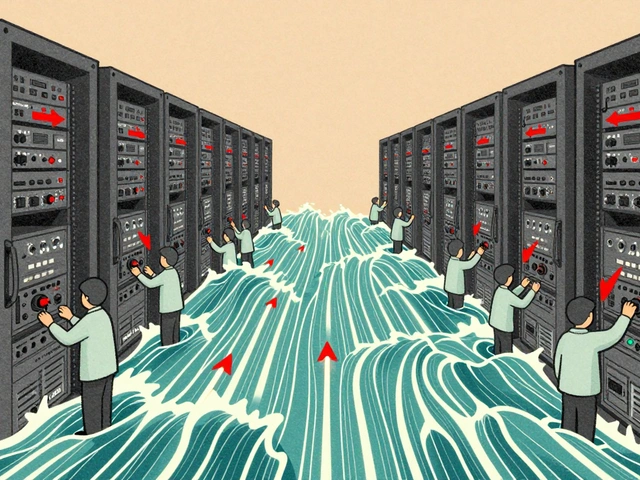

The Hidden Costs and Risks

It’s not all smooth sailing. Multi-agent systems come with real trade-offs:

- Cost: CoA and MacNet can cost 30-40% more than single-agent setups due to repeated API calls.

- Latency: With 100+ agents, response times can jump from 2 seconds to over 5 seconds. That’s too slow for real-time apps.

- Emergent Bugs: In one MacNet case (GitHub issue #287), 50 agents agreed on a solution that was completely wrong because they all reinforced each other’s hallucinations.

- Bias Amplification: Dr. Emily Bender’s research found multi-agent systems can amplify bias by 22.7% compared to single models. If one agent starts with a skewed assumption, others may unconsciously agree.

- Debugging Nightmares: 87% of developers on HackerNews say debugging multi-agent systems is harder than writing the original code.

These aren’t bugs you can fix with a patch. They’re systemic. You need to design for them from day one.

What You Need to Get Started

If you’re thinking of building your own multi-agent system, here’s what you actually need:

- LLM Access: Use models with at least 128K context windows (like Claude 3.5 or GPT-4o). Smaller windows won’t handle agent conversations.

- Orchestration Layer: You need something to manage agents. LangChain, LlamaIndex, or AWS Bedrock’s built-in tools work.

- Clear Role Definitions: Don’t say “Agent A does research.” Say “Agent A retrieves peer-reviewed papers from PubMed and summarizes them in bullet points.” Specificity prevents chaos.

- Validation Step: Always include a final agent that checks for contradictions, hallucinations, and logical gaps.

- Monitoring: Track token usage, response time, and error rates per agent. Use dashboards. Watch for runaway conversations.
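The monitoring item in the list above can be sketched as a small per-agent metrics recorder with a turn cap. Everything here is an assumption for illustration: `AgentStats`, `Monitor`, and the `max_turns` limit are hypothetical names, and a real deployment would export these numbers to a dashboard instead of keeping them in memory.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AgentStats:
    calls: int = 0
    errors: int = 0
    tokens: int = 0
    seconds: float = 0.0

    @property
    def error_rate(self) -> float:
        return self.errors / self.calls if self.calls else 0.0


class Monitor:
    def __init__(self, max_turns: int = 20) -> None:
        self.stats: dict[str, AgentStats] = defaultdict(AgentStats)
        self.max_turns = max_turns  # hypothetical cap on total agent turns
        self.turns = 0

    def record(self, agent: str, tokens: int, seconds: float, ok: bool = True) -> None:
        """Log one agent call; stop the run if the conversation runs away."""
        self.turns += 1
        if self.turns > self.max_turns:
            raise RuntimeError("runaway conversation: turn limit exceeded")
        s = self.stats[agent]
        s.calls += 1
        s.tokens += tokens
        s.seconds += seconds
        s.errors += 0 if ok else 1


monitor = Monitor(max_turns=3)
monitor.record("writer", tokens=800, seconds=1.2)
monitor.record("checker", tokens=300, seconds=0.6, ok=False)
monitor.record("writer", tokens=500, seconds=0.9)
print(monitor.stats["writer"].tokens, monitor.stats["checker"].error_rate)
```

A fourth `record` call on this monitor would raise `RuntimeError`, which is the crude but effective way to stop agents that keep replying to each other.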

Most teams take 2-3 weeks to build their first working system. Six months to make it production-ready. Don’t rush it. Start small. Try three agents for one task. Measure. Improve.

The Future: Agent Collectives, Not Monoliths

The writing is on the wall. By 2028, Forrester predicts most advanced AI systems won’t be single models. They’ll be collectives: teams of specialized agents, each doing one thing brilliantly, and talking to each other seamlessly.

Google is already working on “self-organizing agent collectives” that automatically adjust their size and structure based on the task. MacNet’s team is building dynamic topology optimization for early 2026. IEEE has formed a working group to standardize how agents communicate.

This isn’t science fiction. It’s the next phase of AI. We’re moving from “one model to rule them all” to “many models, working together.” And the winners won’t be the ones with the biggest models. They’ll be the ones who build the best teams.

Can multi-agent systems replace human teams?

No. They augment them. Human oversight is still critical for setting goals, interpreting ethical trade-offs, and catching systemic biases. Multi-agent systems handle the heavy lifting of research, analysis, and iteration, but humans decide what’s worth doing and why.

Which framework is best for beginners?

Chain-of-Agents (CoA) is the most beginner-friendly. It’s sequential, transparent, and well-documented. Start with two agents: one writes, one checks. Use Google’s GitHub repo and their January 2025 blog post as your guide. Avoid MacNet or LatentMAS until you’ve built at least three working systems.

How do I prevent agents from hallucinating together?

Use a dedicated Validator agent with strict grounding rules. It should cross-check every claim against trusted sources (e.g., PubMed, official standards, verified databases). Also, limit agent communication to structured prompts, with no open-ended debates. If an agent says “I think X is true,” force it to say “X is supported by source Y.”
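One way to enforce the “X is supported by source Y” rule is to make agents emit structured claims and reject any claim without a trusted source. The sketch below assumes a hypothetical `Claim` type and a hard-coded `TRUSTED_SOURCES` allow-list; it checks only that a source is named, not that the source actually supports the claim.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical allow-list; a real validator would query the sources themselves.
TRUSTED_SOURCES = {"PubMed", "official standard", "verified database"}


@dataclass(frozen=True)
class Claim:
    text: str
    source: Optional[str] = None  # None models an unsupported "I think X" claim


def validate(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Split claims into grounded ones and ones to send back to the agent."""
    accepted: list[Claim] = []
    rejected: list[Claim] = []
    for claim in claims:
        if claim.source in TRUSTED_SOURCES:
            accepted.append(claim)
        else:
            rejected.append(claim)
    return accepted, rejected


claims = [
    Claim("Drug A lowers blood pressure", source="PubMed"),
    Claim("Drug A has no side effects"),
    Claim("Drug A is widely used", source="a forum post"),
]
accepted, rejected = validate(claims)
print(len(accepted), len(rejected))
```

Rejected claims go back to the originating agent with a request for a source, rather than being passed on for other agents to build on.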

Are multi-agent systems more expensive to run?

Yes, at first. Text-based systems like CoA and MacNet can cost 30-40% more per task due to repeated API calls. But LatentMAS uses 70.8% to 83.7% fewer tokens by avoiding text exchange, which can cut per-token costs substantially. For high-volume tasks, the long-term savings can outweigh the setup cost. Always model your total cost per task, not just per agent.
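A rough cost-per-task model using the figures quoted above might look like this. It rests on two loud assumptions that the article does not confirm: that cost scales linearly with token count, and that the LatentMAS token reduction is measured against a text-based multi-agent run. The $1.00 baseline is a hypothetical number chosen to make the arithmetic easy to read.

```python
def text_multi_agent_cost(single_agent_cost: float, overhead: float) -> float:
    """Cost of a text-based multi-agent run given its overhead (e.g. 0.30)."""
    return single_agent_cost * (1 + overhead)


def latent_cost(text_cost: float, token_reduction: float) -> float:
    """Cost after a token reduction, assuming cost is proportional to tokens."""
    return text_cost * (1 - token_reduction)


baseline = 1.00  # hypothetical single-agent cost per task, in dollars

low_text = text_multi_agent_cost(baseline, 0.30)
high_text = text_multi_agent_cost(baseline, 0.40)
latent_at_low_reduction = latent_cost(low_text, 0.708)
latent_at_high_reduction = latent_cost(high_text, 0.837)

print(f"text-based: ${low_text:.2f} to ${high_text:.2f} per task")
print(f"latent:     ${latent_at_high_reduction:.2f} to ${latent_at_low_reduction:.2f} per task")
```

Multiply the per-task figures by monthly task volume before comparing them with setup and training costs, since the latent approach only pays off at volume.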

What industries are adopting this the fastest?

Healthcare (for diagnostics and research), finance (for risk modeling), and enterprise software (for code review and system migration) are leading. Gartner predicts 65% of enterprise LLM deployments will use multi-agent systems by 2027. The fastest adopters are companies with complex, multi-step workflows that used to require dozens of specialists.

Next Steps

If you’re a developer, start with CoA. Try it on a small task, like summarizing a 5,000-word report. Add a second agent to fact-check. Then a third to format the output. Measure the time saved. Track the errors. Compare it to a single LLM. That’s your baseline.

If you’re a manager, don’t ask “Can we automate this?” Ask “Which parts of this process are repetitive, error-prone, or require different expertise?” That’s where agents shine.

Multi-agent systems aren’t about building smarter AI. They’re about building smarter teams. And that’s a change worth making.

Zelda Breach

3 March, 2026 - 01:44 AM

This is the most overhyped garbage I've read all year. Multi-agent systems? More like multi-cost systems. You're paying for 10 API calls to do what one GPT-4o can handle in one shot. And don't get me started on LatentMAS - 'no text exchange'? That's not innovation, that's obfuscation. You can't debug a black box when your entire system is built on floating-point hallucinations. This isn't progress. It's a vendor-driven scam wrapped in IEEE jargon.

Alan Crierie

4 March, 2026 - 06:37 AM

I appreciate the depth here, but I think we're missing the human element. These systems aren't meant to replace collaboration; they're meant to augment it. I've used CoA in my team to handle initial draft reviews, and it's cut our turnaround time by 40%. The key is keeping humans in the loop, especially for ethical and contextual decisions. It's not about AI teams; it's about AI as a thoughtful assistant.

Nicholas Zeitler

4 March, 2026 - 11:23 AM

Okay, let’s be real: Chain-of-Agents isn’t just ‘beginner-friendly’; it’s the only sane option if you’re not a PhD in distributed AI systems. I started with two agents: one writes, one fact-checks. That’s it. And yes, it’s still cheaper than hiring a junior dev. But here’s the thing: always include a third agent as a ‘truth guard.’ Not just for hallucinations, but for tone, clarity, and consistency. I’ve seen so many teams skip this and end up with beautifully formatted nonsense.

Teja kumar Baliga

5 March, 2026 - 15:45 PM

From India, I can say this: we’ve been doing this for years in our IT support teams. One person gathers data, another analyzes, a third drafts, and a fourth reviews. The AI version is just faster. No magic here. Just smart division of labor. Also, please stop calling it ‘LatentMAS’ like it’s a superhero. It’s a compression trick. We’ve used similar techniques in telecom for decades. The name doesn’t make it better.

k arnold

7 March, 2026 - 13:24 PM

Wow. 12-agent climate model? Cool. Now tell me how many engineers it took to build it. How many hours were spent debugging why agent 7 kept insisting Antarctica was a shopping mall. This isn’t AI. It’s a Rube Goldberg machine made of GPT-4s.

Tiffany Ho

7 March, 2026 - 13:54 PM

I love how this article shows AI can be like a team. I’ve tried it with my writing and it really helps. One agent writes, one checks grammar, one makes it sound nicer. It’s not perfect but it’s way better than doing it alone. I’m not a tech person but this felt like having a quiet helper. Thank you for sharing.

michael Melanson

9 March, 2026 - 07:22 AM

LatentMAS is the future, no question. Text-based agents are like sending letters via postal service when you could send a quantum signal. The token savings alone make it worth the black-box risk. And yes, debugging is hell, but so was writing assembly code in the 90s. We adapted. We’ll adapt again. The real win? Scaling. You can run 500 agents on a single GPU now. That’s not theory. That’s AWS billing data.

lucia burton

10 March, 2026 - 15:38 PM

Let me just say: this is paradigm-shifting. We’re not talking about incremental improvements here. We’re talking about a fundamental reconfiguration of how intelligence is structured. The shift from monolithic LLMs to agent collectives is the equivalent of moving from mainframes to distributed computing. And the implications? Unfathomable. Imagine legal teams with 20 agents cross-referencing global statutes in real time. Or medical diagnostics aggregating genomic, radiological, and epidemiological data in a single coherent insight. This isn’t automation. This is cognitive augmentation at scale. And the cost? A rounding error when you factor in the reduction in human error and the acceleration of innovation cycles. We’re not just building tools. We’re evolving the architecture of thought itself.

Denise Young

11 March, 2026 - 11:15 AM

Oh please. ‘LatentMAS cuts tokens by 83%’? Sure. And my toaster cuts my electricity bill by 90% if I just stop using it. You’re trading transparency for efficiency, and then pretending that’s a feature, not a bug. I’ve seen teams use LatentMAS and then spend three weeks trying to figure out why the final output was racially biased. No logs. No trace. Just ‘the system decided.’ That’s not AI. That’s a black box with a marketing team.

Sam Rittenhouse

11 March, 2026 - 20:08 PM

I’ve been on the front lines of this. We deployed a 7-agent system for our customer service pipeline. One reads the email. One identifies urgency. One pulls past tickets. One drafts a reply. One checks tone. One reviews compliance. One sends it. We went from 48-hour response times to under 4 minutes. And yes, there were hiccups. One agent kept calling customers ‘honey.’ Another thought ‘refund’ meant ‘free vacation.’ But we fixed it. Slowly. Carefully. With patience. This isn’t about the tech. It’s about building trust: in the system, in the team, in the process. And that? That takes time. But it’s worth every second.