When you type a prompt into an AI chatbot, the system doesn't read your words like a human does. It sees a long list of numbers. This transformation from text to numbers is called tokenization, which is the process of breaking down input text into smaller units that neural networks can process efficiently. Without this step, large language models would struggle to handle the infinite variety of human language. The strategy you choose for tokenization directly impacts how fast your model runs, how much it costs to operate, and how well it understands different languages.

If you are building or fine-tuning an LLM, understanding these strategies is not just academic; it’s practical. A poor choice here can make your application twice as slow or significantly more expensive for non-English users. Let’s look at how the three dominant methods work, why they differ, and which one fits your specific needs.

The Core Problem: Why We Need Subword Segmentation

In the early days of natural language processing, systems tried to treat every unique word in a dictionary as a single unit. This approach hit a wall quickly. If a model encounters a word it hasn’t seen before, such as a new slang term, a typo, or a rare piece of technical jargon, it has no way to represent it. This is known as the out-of-vocabulary (OOV) problem.

To fix this, researchers turned to subword segmentation. Instead of storing millions of whole words, tokenizers break words into smaller, reusable pieces. For example, the word "unbelievable" might be split into "un", "believ", and "able". If the model knows those parts, it can represent the whole word even if it never saw "unbelievable" during training. This balances lexical coverage with computational efficiency, allowing models to handle rare vocabulary without exploding their memory requirements.
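To make the idea concrete, here is a minimal sketch of splitting a word against a fixed subword vocabulary using greedy longest-match. The function name `segment`, the `<unk>` marker, and the toy vocabulary are assumptions for illustration; real tokenizers learn their vocabulary from data and use more careful matching rules.

```python
def segment(word, vocab):
    """Greedy longest-match-first split of `word` into pieces from `vocab`."""
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest remaining substring first, then shrink it.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                pieces.append(word[start:end])
                start = end
                break
        else:
            # No known piece starts here: emit an unknown marker for one character.
            pieces.append("<unk>")
            start += 1
    return pieces


# Hypothetical toy vocabulary; a trained tokenizer would hold tens of thousands of pieces.
TOY_VOCAB = {"un", "believ", "break", "able"}

print(segment("unbelievable", TOY_VOCAB))  # ['un', 'believ', 'able']
print(segment("unbreakable", TOY_VOCAB))   # ['un', 'break', 'able']
```

Neither full word is in the vocabulary, yet both are covered by reusing the same three or four pieces.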

Byte-Pair Encoding (BPE): The Industry Standard

Byte-Pair Encoding is a compression-based algorithm that iteratively merges the most frequent pairs of characters or bytes into single tokens until a predefined vocabulary size is reached. Philip Gage introduced it in 1994 as a data compression technique, and Sennrich et al. adapted it to subword segmentation for neural machine translation in 2016. It became the go-to method for modern LLMs after OpenAI adopted a byte-level variant for GPT-2 and carried it forward to GPT-3 and later models.

Here is how it works in practice:

- The tokenizer starts with a basic alphabet of all individual characters found in the training data.

- It scans the corpus to find the most frequently occurring pair of adjacent symbols.

- It merges that pair into a new symbol and adds it to the vocabulary.

- This process repeats until the vocabulary reaches a set limit, such as 50,000 tokens.
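The four steps above can be sketched as a toy trainer. This is a minimal illustration, not any production tokenizer's implementation: the names `merge_pair` and `train_bpe`, the word counts, and the tie-breaking rule are assumptions. The loop also stops after a fixed number of merges rather than at a target vocabulary size, since the final vocabulary is simply the starting alphabet plus one symbol per merge.

```python
from collections import Counter


def merge_pair(corpus, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, count in corpus.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + count
    return merged


def train_bpe(word_counts, num_merges):
    """Learn `num_merges` merge rules from a {word: frequency} mapping."""
    # Step 1: start from individual characters.
    corpus = {tuple(word): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        # Step 2: count every adjacent symbol pair, weighted by word frequency.
        pair_counts = Counter()
        for symbols, count in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += count
        if not pair_counts:
            break  # every word is already a single symbol
        # Step 3: merge the most frequent pair (ties broken alphabetically, an assumption).
        best = max(pair_counts, key=lambda p: (pair_counts[p], p))
        merges.append(best)
        corpus = merge_pair(corpus, best)
    # Step 4 is the loop bound: stop once enough merges have been learned.
    return merges


WORD_COUNTS = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
print(train_bpe(WORD_COUNTS, 3))  # [('s', 't'), ('e', 'st'), ('o', 'w')]
```

On this toy corpus the suffix "est" emerges after two merges because it is shared by the two most frequent words.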

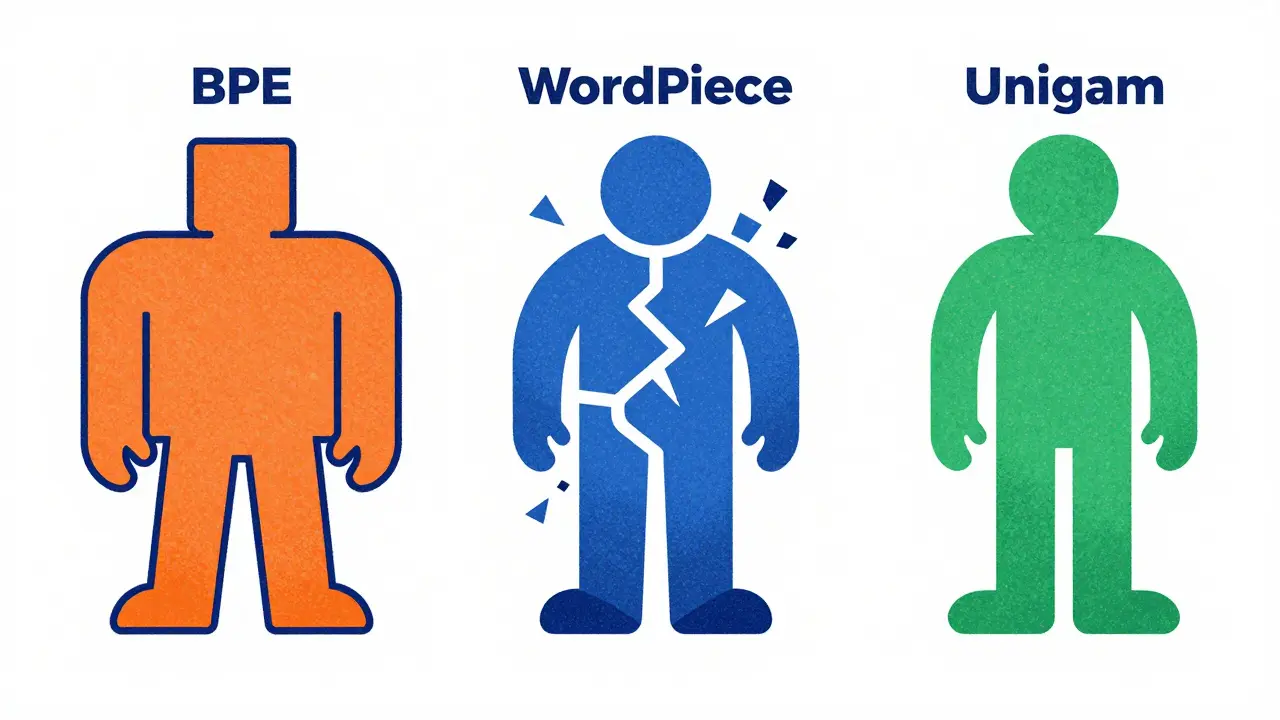

BPE is popular because it strikes a solid balance. Some reported comparisons find it the strongest choice for general-purpose language modeling, outperforming alternatives by about 7.3 percentage points of accuracy on masked token prediction tasks when paired with standard architectures. Most commercial LLMs, including earlier versions of GPT, rely on BPE. Its main strength is simplicity and robustness, but it can sometimes create arbitrary splits that don't align with linguistic roots.

WordPiece: Precision Over Frequency

WordPiece is a tokenization algorithm used primarily by Google's BERT models that selects merges based on likelihood scores rather than simple frequency counts. While BPE looks only at how often two symbols appear together, WordPiece also weighs how often each symbol appears on its own, favoring the merge that most increases the likelihood of the training data. This subtle difference changes how the tokenizer behaves.
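The contrast between the two selection rules can be sketched in a few lines. The scoring formula here, pair frequency divided by the product of the two symbols' frequencies, is one commonly described formulation of the WordPiece criterion and should be read as an assumption, not as Google's exact implementation. The function names and toy counts are hypothetical, and the `##` continuation prefix that BERT-style vocabularies use is omitted for brevity.

```python
from collections import Counter


def pair_and_symbol_counts(corpus):
    """`corpus` maps a tuple of symbols to how often that word occurs."""
    pair_counts, symbol_counts = Counter(), Counter()
    for symbols, count in corpus.items():
        for symbol in symbols:
            symbol_counts[symbol] += count
        for pair in zip(symbols, symbols[1:]):
            pair_counts[pair] += count
    return pair_counts, symbol_counts


def best_by_frequency(corpus):
    """BPE-style choice: the pair that occurs most often."""
    pair_counts, _ = pair_and_symbol_counts(corpus)
    return max(pair_counts, key=lambda p: (pair_counts[p], p))


def best_by_wordpiece_score(corpus):
    """WordPiece-style choice: pair frequency relative to its parts' frequencies."""
    pair_counts, symbol_counts = pair_and_symbol_counts(corpus)

    def score(pair):
        first, second = pair
        return pair_counts[pair] / (symbol_counts[first] * symbol_counts[second])

    return max(pair_counts, key=lambda p: (score(p), p))


TOY_CORPUS = {("t", "h", "e"): 10, ("t", "o"): 8, ("h", "a", "t"): 6, ("q", "u"): 3}
print(best_by_frequency(TOY_CORPUS))        # ('t', 'h')
print(best_by_wordpiece_score(TOY_CORPUS))  # ('q', 'u')
```

The frequency rule picks the common pair "th", while the likelihood-style score prefers "qu" because its two symbols never occur apart in this corpus.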

WordPiece tends to have higher "fertility," meaning it produces more tokens per word on average. Studies show WordPiece averages 1.7 tokens per word compared to BPE’s 1.4. At first glance, this sounds inefficient. More tokens mean longer sequences, which increase computation time and memory usage. However, this granularity preserves more detailed linguistic information. For tasks requiring deep semantic analysis or precise part-of-speech tagging, WordPiece’s finer-grained output can be beneficial.
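Fertility is straightforward to measure on your own data. The sketch below assumes whitespace-delimited words and accepts any callable that maps a string to a list of tokens. `chunk_tokenize` is a hypothetical stand-in, not a real tokenizer, so swap in the tokenizer you are evaluating.

```python
def chunk_tokenize(text, size=4):
    """Hypothetical stand-in tokenizer: cut each word into chunks of at most `size` characters."""
    return [word[i:i + size] for word in text.split() for i in range(0, len(word), size)]


def fertility(texts, tokenize):
    """Average number of tokens produced per whitespace-delimited word."""
    words = sum(len(text.split()) for text in texts)
    tokens = sum(len(tokenize(text)) for text in texts)
    if words == 0:
        raise ValueError("need at least one word to compute fertility")
    return tokens / words


SAMPLE = ["tokenizers split uncommon words"]
print(fertility(SAMPLE, chunk_tokenize))  # 9 tokens / 4 words = 2.25
```

Running the same function over a representative sample with two candidate tokenizers gives a direct comparison of expected sequence length.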

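Google uses WordPiece in BERT, and BERT-derived models such as DistilBERT and ELECTRA inherit it. (T5, by contrast, uses a SentencePiece Unigram tokenizer.) If your application prioritizes interpretability or detailed linguistic structure over raw speed, WordPiece is a strong candidate. Just be aware that the fertility figures above (1.7 versus 1.4 tokens per word) imply sequences roughly 21% longer than BPE's, which can add up in high-volume API calls.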

Unigram: Efficiency and Compression

Unigram is a probabilistic tokenization method that optimizes a fixed vocabulary to maximize the likelihood of the training data, resulting in superior compression efficiency. Unlike BPE and WordPiece, which build vocabularies through iterative merging, Unigram starts with a large candidate set and prunes it down based on statistical likelihood.
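Once a Unigram vocabulary and its token probabilities are fixed, segmenting a word means finding the split with the highest total probability, which a Viterbi-style dynamic program can do. The sketch below covers only that segmentation step, not the vocabulary pruning. The function name and toy probabilities are assumptions, and the probabilities are not normalized.

```python
import math


def viterbi_segment(word, log_probs):
    """Return the split of `word` that maximizes the sum of token log-probabilities."""
    n = len(word)
    best_score = [0.0] + [-math.inf] * n  # best_score[i]: best score for word[:i]
    back = [0] * (n + 1)                  # back[i]: start index of the last piece in that split
    for end in range(1, n + 1):
        for start in range(end):
            piece = word[start:end]
            if piece in log_probs and best_score[start] > -math.inf:
                score = best_score[start] + log_probs[piece]
                if score > best_score[end]:
                    best_score[end] = score
                    back[end] = start
    if best_score[n] == -math.inf:
        return None  # the word cannot be built from the vocabulary
    pieces, end = [], n
    while end > 0:
        pieces.append(word[back[end]:end])
        end = back[end]
    return pieces[::-1]


# Hypothetical token probabilities, chosen only for illustration.
PROBS = {"un": 0.1, "able": 0.1, "unable": 0.001,
         "u": 0.05, "n": 0.05, "a": 0.05, "b": 0.05, "l": 0.05, "e": 0.05}
LOG_PROBS = {token: math.log(p) for token, p in PROBS.items()}

print(viterbi_segment("unable", LOG_PROBS))  # ['un', 'able']
```

Here "un" + "able" (probability 0.01) beats both the rare whole word (0.001) and the character-by-character split, which is how a probabilistic vocabulary favors compact segmentations.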

Unigram shines in scenarios where compact representation matters. Benchmarks from Hugging Face show Unigram has a fertility score of just 1.2 tokens per word, making it the most efficient of the three major methods. In machine code processing tasks, Unigram demonstrated 22% better compression efficiency than BPE and 31% better than WordPiece. This means fewer tokens to process, leading to faster inference times and lower costs.

Many open-source models built on the SentencePiece library, including T5, ALBERT, and XLNet, use Unigram. If you are deploying an LLM on resource-constrained devices or need to minimize latency, Unigram is often the best choice. However, it may sacrifice some of the morphological nuance that WordPiece captures.

| Feature | Byte-Pair Encoding (BPE) | WordPiece | Unigram |
|---|---|---|---|
| Primary Use Case | General-purpose LLMs | Linguistic analysis & NLU | Speed-critical applications |
| Fertility (Tokens/Word) | ~1.4 | ~1.7 | ~1.2 |
| Merge Logic | Frequency-based merging | Likelihood-based merging | Likelihood-based pruning of a large candidate set |
| Notable Implementations | GPT-3, GPT-4 | BERT, DistilBERT, ELECTRA | T5, ALBERT, XLNet |
| Compression Efficiency | Moderate | Low | High |

The Hidden Cost: Language Bias and Efficiency Gaps

One of the most critical issues in modern tokenization is language bias. Most large language models are trained primarily on English data. This creates a significant efficiency gap. English text typically requires 1.3 tokens per word in optimized tokenizers, while languages with complex morphological structures, like Turkish or Swahili, may require 2.1 tokens per word.

This discrepancy isn't just a technical detail; it affects your bottom line. Research shows that non-English requests can cost up to 43% more in computational resources due to longer sequence lengths. For global enterprises, this means serving customers in low-resource languages is disproportionately expensive. Meta’s Llama 3 family addressed this by expanding its vocabulary to roughly 128,000 tokens, reportedly reducing the English-to-Swahili tokenization gap from 37% to 22%. When choosing a tokenizer, consider whether your audience speaks multiple languages and factor in these hidden costs.
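A quick back-of-the-envelope calculation shows how fertility feeds into token counts and spend. The function names and the price are hypothetical placeholders. Note also that this is per-word overhead: the same message often uses a different number of words in each language, so it will not map one-to-one onto per-request cost figures like the one quoted above.

```python
def tokens_needed(word_count, fertility):
    """Expected token count for a text of `word_count` words."""
    return word_count * fertility


def relative_token_overhead(base_fertility, other_fertility):
    """Fraction of extra tokens per word relative to the base language."""
    return other_fertility / base_fertility - 1.0


def estimated_cost(word_count, fertility, price_per_1k_tokens):
    """Cost estimate under a flat per-token price (the price is a placeholder)."""
    return tokens_needed(word_count, fertility) / 1000 * price_per_1k_tokens


# Fertility figures quoted in the text: ~1.3 for English, ~2.1 for Turkish or Swahili.
overhead = relative_token_overhead(1.3, 2.1)
print(f"{overhead:.1%} more tokens per word")  # 61.5% more tokens per word
```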

Practical Implementation Tips

Implementing a custom tokenizer doesn’t have to be a nightmare. Here are some actionable steps to ensure success:

- Start with pre-trained tokenizers: Building a tokenizer and a model to match it from scratch can take weeks. Using libraries like Hugging Face’s Transformers reduces implementation time by 63%. Extend or retrain the tokenizer on your domain-specific data later if needed.

- Preprocess your data: Normalizing text before tokenization improves downstream performance by 11.7%. For example, replacing varying address values in code datasets with sequential identifiers reduces outliers and boosts accuracy.

- Watch whitespace handling: Improper handling of spaces and newlines can inflate token counts by 15-20%. Always verify how your tokenizer treats whitespace to avoid unexpected cost spikes.

- Select vocabulary size wisely: Larger vocabularies improve coverage but slow down inference. Sean Trott, a computational linguist, notes that this trade-off is the most critical decision point. Aim for a size that covers 99% of your target corpus without bloating the model.
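As an illustration of the preprocessing tip above, the sketch below replaces hexadecimal addresses with sequential identifiers so that the same address always maps to the same placeholder. The regular expression, the `ADDR_n` placeholder format, and the function name are assumptions; adapt them to the address format in your own dataset.

```python
import re

# Assumes addresses look like 0x followed by hex digits.
ADDRESS = re.compile(r"0x[0-9a-fA-F]+")


def normalize_addresses(text):
    """Replace each distinct address with ADDR_0, ADDR_1, ... in order of first appearance."""
    ids = {}

    def replace(match):
        address = match.group(0).lower()  # treat 0x7FFD and 0x7ffd as the same address
        if address not in ids:
            ids[address] = f"ADDR_{len(ids)}"
        return ids[address]

    return ADDRESS.sub(replace, text)


line = "mov rax, 0x7ffd1234; call 0x401000; mov rbx, 0x7FFD1234"
print(normalize_addresses(line))  # mov rax, ADDR_0; call ADDR_1; mov rbx, ADDR_0
```

Because arbitrary hex strings tend to fragment into many rare subword pieces, collapsing them into a handful of stable placeholders keeps token counts predictable.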

Future Directions: Beyond Fixed Vocabularies

The field is moving toward more sophisticated approaches. Dynamic vocabulary allocation, predicted to improve non-English efficiency by 15-20% by 2026, allows models to adjust their token sets based on context. Additionally, neural tokenization approaches are emerging, learning segmentation directly from raw bytes. These methods could eventually eliminate fixed vocabularies altogether, offering seamless multilingual support without the current efficiency penalties.

For now, however, BPE, WordPiece, and Unigram remain the pillars of LLM infrastructure. Understanding their strengths and weaknesses helps you build faster, cheaper, and more inclusive AI applications.

What is the main difference between BPE and WordPiece?

The main difference lies in how they select merges. BPE merges the most frequent adjacent symbol pairs, while WordPiece selects merges based on likelihood scores derived from the training data, weighing a pair's frequency against the frequency of its parts. By the fertility figures cited above, WordPiece also produces more tokens per word on average.

Which tokenizer is best for multilingual applications?

Unigram generally offers better compression efficiency across diverse languages due to its lower fertility score. However, models with larger vocabularies like Llama 3.2 (128,000 tokens) perform better for non-English texts by reducing the token count disparity compared to English-only optimized tokenizers.

How does vocabulary size affect model performance?

Larger vocabularies allow for more specific representations, improving lexical coverage and reducing the number of tokens needed for common words. However, they also increase memory usage and slow down inference. The optimal size depends on the trade-off between coverage and speed required for your specific use case.
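One way to make the coverage side of this trade-off concrete is to ask how many of the most frequent units are needed to cover a target share of a corpus. The sketch below is a rough heuristic: the function name, the 99% default, and the toy counts are assumptions, and in practice the counts would come from your own corpus.

```python
from collections import Counter


def smallest_vocab_for_coverage(token_counts, target=0.99):
    """Smallest number of most-frequent tokens whose occurrences reach `target` coverage."""
    total = sum(token_counts.values())
    covered = 0
    for size, (_, count) in enumerate(token_counts.most_common(), start=1):
        covered += count
        if covered / total >= target:
            return size
    return len(token_counts)


# Hypothetical frequency counts for a tiny corpus.
COUNTS = Counter({"the": 900, "cat": 95, "zyx": 5})
print(smallest_vocab_for_coverage(COUNTS))  # 2
```

If the required size keeps climbing steeply as the target approaches 100%, the remaining entries are mostly rare items that add memory cost for little coverage.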

Why do non-English texts cost more to process?

Most tokenizers are trained primarily on English data, leading to bias. Languages with complex morphology often require more subwords to represent the same amount of information, resulting in longer sequences. Since most LLM APIs bill by token count, and compute scales with sequence length, these longer sequences translate to higher costs.

Can I change the tokenizer of a pre-trained model?

Yes, but it requires careful handling. You can replace the tokenizer component in frameworks like Hugging Face Transformers. However, changing the tokenizer alters the input distribution, so you may need to continue pre-training or fine-tune the model to adapt to the new token embeddings.