Technology in AI: How Modern Systems Power LLMs, Security, and Generative Tools

When we talk about technology here, we mean the systems and methods used to build, deploy, and secure artificial intelligence applications. Also known as AI infrastructure, it’s what makes large language models actually work in the real world, not just in research papers. This isn’t about flashy gadgets. It’s about the hidden layers: how thousands of GPUs talk to each other, how models stay private during use, and why your AI chatbot doesn’t spill your data all over the internet.

Behind every smart AI tool is a stack of core technologies. Large language models (LLMs) are AI systems trained on massive text datasets to understand and generate human-like language. They’re the engine, but they need the right fuel and brakes. That’s where distributed training comes in: the process of splitting model training across many machines to handle huge datasets and complex calculations. Often implemented as multi-GPU training, it’s what lets companies train large models in a practical amount of time. Without it, you’re stuck waiting weeks for a single model to learn. And when training is done? AI security takes over: the practices and tools that protect models from tampering, data leaks, and malicious use. It includes LLM supply chain security, which keeps your AI from becoming a backdoor for hackers. You can’t just drop a model into production and hope for the best. Containers, weights, and dependencies all need checking. Even the data you feed it has to comply with laws like GDPR or PIPL, or you risk fines.

Generative AI doesn’t just write text. It creates images, videos, and even entire UIs, though generated UIs stay consistent only if you control the design system. It needs truthfulness checks so it doesn’t state falsehoods. It needs retrieval systems so it answers from your own data, not guesswork. And it needs ethical guardrails so teams and users trust it. This collection dives into every layer: how attention mechanisms let models understand context, how encryption-in-use keeps your prompts private, how redaction tools block harmful outputs, and why switching models is sometimes smarter than compressing them. You’ll find real-world benchmarks, deployment traps, and fixes for hallucinations. Not theory, but what’s working right now.

Whether you’re deploying a model on-prem, tuning a prompt, or securing a container, the technology behind it all is the same. And if you’re building with PHP, you need to know how these systems talk to your code. Below, you’ll find deep dives into every piece that matters—no fluff, no hype, just the tech that actually moves the needle.

Long-Context Risks in Generative AI: Distortion, Drift, and Lost Salience

Long-context AI models can process massive amounts of text, but they struggle with distortion, drift, and lost salience, especially in the middle of documents. Learn how these risks undermine reliability and what’s being done to fix them.

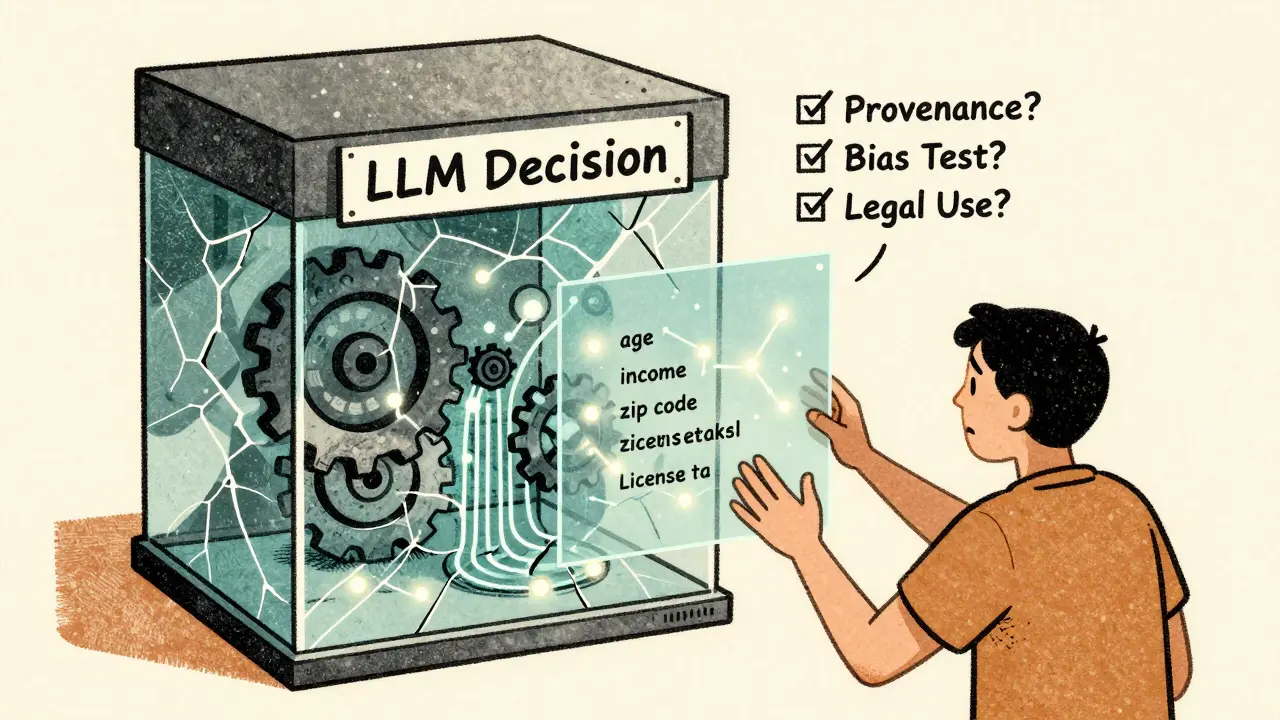

Transparency and Explainability in Large Language Model Decisions

Transparency and explainability in large language models are critical for trust and fairness. Without knowing how decisions are made, AI risks reinforcing bias and eroding public trust, especially in high-stakes areas like finance and healthcare.

Citations and Sources in Large Language Models: What They Can and Cannot Do

LLMs can generate convincing citations, but most are fake. Learn why AI hallucinates sources, how often it gets them wrong, and how to use LLMs safely without trusting their references.

Pretraining Objectives in Generative AI: Masked Modeling, Next-Token Prediction, and Denoising

Masked modeling, next-token prediction, and denoising are the three core pretraining methods powering today’s generative AI. Each excels at different tasks, from understanding text to generating images. Learn how they work, where they shine, and why hybrid approaches are the future.
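To make the difference between the three objectives concrete, here is a minimal sketch of how each one turns raw data into an (input, target) training pair. It is a toy illustration only: the function names, the `[MASK]` placeholder, and the masking probability are assumptions for this example and do not come from any particular library or from the linked article.

```python
import random

MASK = "[MASK]"  # hypothetical placeholder token for this sketch


def masked_modeling_example(tokens, mask_prob=0.3, seed=0):
    """Hide random tokens; the targets are the hidden tokens by position."""
    rng = random.Random(seed)
    inputs, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            inputs.append(MASK)
            targets[i] = tok  # the model must recover this token
        else:
            inputs.append(tok)
    return inputs, targets


def next_token_examples(tokens):
    """Every prefix of the sequence predicts the token that follows it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def denoising_example(values, noise_scale=0.1, seed=0):
    """Corrupt a clean signal with Gaussian noise; the target is the clean signal."""
    rng = random.Random(seed)
    noisy = [v + rng.gauss(0.0, noise_scale) for v in values]
    return noisy, list(values)


if __name__ == "__main__":
    sentence = ["the", "model", "reads", "text"]
    print(masked_modeling_example(sentence))
    print(next_token_examples(sentence))
    print(denoising_example([0.0, 0.5, 1.0]))
```

In all three cases the supervision comes from the data itself. What changes is which part is hidden or corrupted and what the model is asked to reconstruct.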

How Training Duration and Token Counts Affect LLM Generalization

Training duration and token counts don't guarantee better LLM generalization. What matters is how sequence lengths are structured during training. Learn why variable-length training beats raw scale and how to avoid common pitfalls.

Multi-Agent Systems with LLMs: How Specialized AI Agents Collaborate to Solve Complex Problems

Multi-agent systems with LLMs use specialized AI agents working together to solve complex tasks better than any single model. Learn how frameworks like Chain-of-Agents, MacNet, and LatentMAS enable collaboration, role specialization, and efficiency gains.

How to Detect Fabricated References in Large Language Model Outputs

Fabricated references from AI models are slipping into real research papers. Learn how to detect them, why they happen, and what institutions must do to stop them before science loses its foundation.

Accessibility Regulations for Generative AI: WCAG Compliance and Assistive Features

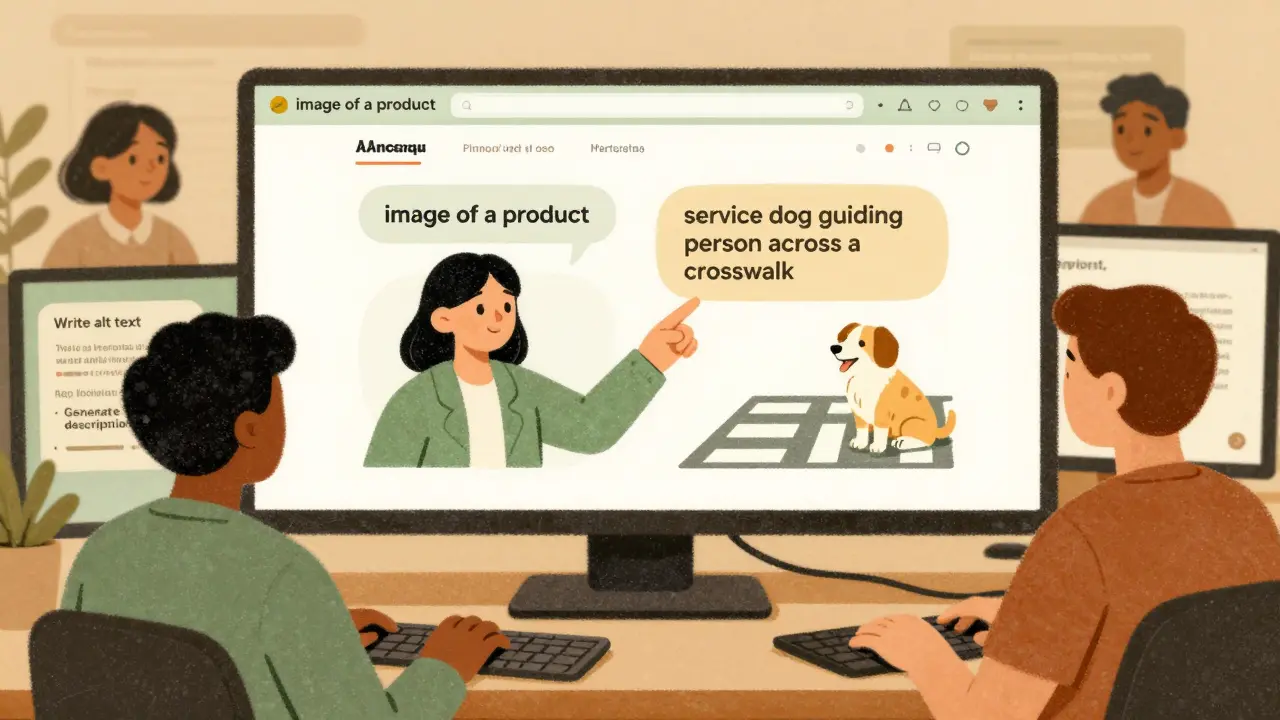

Generative AI must follow WCAG accessibility standards just like human-created content. Learn how to comply with legal requirements, avoid lawsuits, and build inclusive AI systems that work for everyone.

How Combining RAG with Decoding Strategies Improves LLM Accuracy

Combining RAG with advanced decoding strategies like Layer Fused Decoding and entropy-based weighting drastically reduces LLM hallucinations. This approach grounds responses in live data while guiding word-by-word generation for higher accuracy.

Life Sciences Research with Generative AI: Protein Design and Literature Reviews

Generative AI is revolutionizing life sciences by designing entirely new proteins for medicine and industry, going beyond what nature evolved. From cancer therapies to plastic-eating enzymes, this is how AI is reshaping biology.

GPU Selection for LLM Inference: A100 vs H100 vs CPU Offloading

H100 GPUs now outperform A100s and CPU offloading for LLM inference, offering faster responses, lower cost per token, and better scalability. Choose H100 for production, A100 only for small models, and avoid CPU offloading for real-time apps.

Energy Efficiency in Generative AI Training: Sparsity, Pruning, and Low-Rank Methods

Sparsity, pruning, and low-rank methods slash energy use in generative AI training by 30-80% without sacrificing accuracy. Learn how these techniques are reshaping sustainable AI development.
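As a rough illustration of why these techniques reduce compute, the sketch below counts the parameters of a dense layer against a rank-`r` factorization and applies simple magnitude pruning to a weight list. The function names and layer sizes are assumptions chosen for this example. Parameter count is only a proxy for energy use, so this arithmetic does not reproduce the 30-80% figure quoted above.

```python
def dense_params(d_in, d_out):
    """Parameters in a full d_in x d_out weight matrix."""
    return d_in * d_out


def low_rank_params(d_in, d_out, rank):
    """Parameters when the matrix is factored as (d_in x rank) @ (rank x d_out)."""
    return rank * (d_in + d_out)


def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)  # number of weights to drop
    if k == 0:
        return list(weights)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    full = dense_params(4096, 4096)
    factored = low_rank_params(4096, 4096, rank=64)
    print(full, factored, full // factored)  # 16777216 524288 32
    print(magnitude_prune([0.5, -0.1, 0.02, -2.0], sparsity=0.5))  # [0.5, 0.0, 0.0, -2.0]
```

Whether the smaller parameter count translates into real energy savings depends on hardware support for sparse or factored operations, which this sketch does not model.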
