When you ask a generative AI model to read a 100-page legal contract, a 50,000-word research paper, or a year’s worth of customer support logs, it’s easy to assume it remembers everything. After all, models like Google’s Gemini 1.5 Pro (a large language model with a 1 million token context window, capable of processing entire books in a single prompt) can handle astonishingly long inputs. But here’s the catch: the longer the context, the more likely the model is to misremember, misinterpret, or outright ignore what matters most.

What Happens When AI Forgets What’s in the Middle?

The biggest surprise for most users isn’t that AI makes mistakes; it’s where it makes them. Research from LongBench (a standardized evaluation framework for long-context AI performance, launched in 2024) shows that when a model processes 64,000 tokens, its accuracy drops to just 52.7% for information buried in the middle 30% of the text. Meanwhile, facts at the very start or end are recalled correctly over 78% of the time. This isn’t a glitch. It’s called the Lost in the Middle effect: a well-documented phenomenon where generative AI models assign significantly less attention to information located in the central portion of long contexts.

Think of it like reading a novel on a screen that fades out the middle pages. You can still remember the first chapter and the last chapter. But the twist in chapter 17? The key clue? Gone. That’s what happens inside the attention heads of transformer models. Their architecture, built on self-attention, was never designed for this scale. The math is brutal: as context length doubles, computational load quadruples. At 1 million tokens, the model has to evaluate on the order of a trillion pairwise relationships. It can’t do it all. So it cuts corners. And the middle pays the price.
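To make the quadratic growth concrete, here is a minimal sketch that counts the entries in a single full self-attention score matrix. The function name `pairwise_comparisons` is made up for illustration, and the count ignores real-world optimizations such as sparse or windowed attention, so treat it as back-of-the-envelope arithmetic rather than a cost model.

```python
def pairwise_comparisons(num_tokens: int) -> int:
    """Entries in one full self-attention score matrix (every token vs. every token)."""
    return num_tokens * num_tokens


# Doubling the context length quadruples the number of pairs.
for n in (16_000, 32_000, 64_000, 1_000_000):
    print(f"{n:>9,} tokens -> {pairwise_comparisons(n):,} pairs")
```

Going from 32,000 to 64,000 tokens multiplies the pair count by four, and 1 million tokens lands at 10^12 pairs.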

Distortion: When AI Rewrites Reality

Distortion is when the model doesn’t just forget; it changes what it remembers. A 2024 study from AI21 Labs (an AI research company that developed Jamba 1.5, a model focused on context optimization) found that when context exceeds 32,000 tokens, factual errors increase by 23.4%. That means if you feed the model a detailed financial report with 12 specific metrics, it might confidently report 10 of them correctly… and invent two others.

One real-world example comes from JPMorgan Chase (a global financial services firm that implemented long-context AI for regulatory document review). Their AI model, trained on 50,000-token SEC filings, misinterpreted a key term about capital reserves in the middle of a document. The error wasn’t obvious; it looked plausible. But it led to an incorrect risk score, which was only caught after manual review. This isn’t rare. According to Gartner (a research and advisory company that tracks enterprise AI adoption), 63% of companies using long-context AI for document processing reported at least one critical error due to distortion in the last six months.

Drift: The Slow Slide Into Wrong Answers

Drift is subtler. It doesn’t happen in one mistake. It happens over time. Imagine asking a model to summarize a 100,000-token legal transcript. At first, it gets the facts right. Then, as it processes more context, it starts to blend details from unrelated sections. By the end, it’s answering a question that wasn’t asked, using evidence that wasn’t there.

Reddit users on r/MachineLearning (a community where AI practitioners discuss technical challenges and model behavior) documented this in June 2024. One user tested Llama3 70B (a large open-source language model developed by Meta, widely used for long-context tasks) on a 50,000-token engineering spec. The model’s first summary was accurate. After adding 20,000 more tokens of related data, its output became 41% less relevant. It wasn’t hallucinating; it was drifting. The model lost its anchor.

This is why Dr. Ori Gersht (AI Research Director at AI21 Labs, who has published extensively on attention mechanisms in LLMs) says, “Simply increasing context length without addressing attention mechanisms creates false confidence.” He’s right. Companies think they’re getting better reasoning. What they’re getting is more noise.

Lost Salience: The Invisible Blind Spot

Lost salience isn’t just about forgetting. It’s about ignoring what’s important. The Vectara Context Engineering study (a 2024 analysis of attention head behavior across long contexts) found that critical information placed exactly halfway through a 64,000-token context receives 37% less attention than information at the start or end. That’s not a bug; it’s a feature of how attention weights decay over distance.

One user on r/LocalLLaMA (a community focused on local deployment of large language models) shared a chilling story. Their law firm used Llama3 70B to scan a 64,000-token contract. A clause about liability limits was buried at token 42,000. The model never flagged it. The firm signed the contract. Six months later, they were hit with a $250,000 lawsuit.

This isn’t about the model being “dumb.” It’s about the architecture. Transformer models don’t have memory like humans. They have attention scores. And those scores get diluted. The middle of a long context is a black hole for relevance.

What’s Being Done About It?

The industry isn’t ignoring this. Anthropic (a research company focused on AI safety and reliability) claims that Claude 3.5 Sonnet (a 2024 model with a 200,000-token context window and improved attention mechanisms) reduces Lost in the Middle effects by 22% compared to earlier versions. Google is working on “adaptive attention allocation” in Gemini 1.5 Ultra (an upcoming model expected to improve middle-context retention), set to launch later in 2025.

But the real breakthroughs aren’t in bigger context windows; they’re in smarter ways to use them. Context distillation (a technique that extracts only the most relevant information from long contexts before feeding it to the model) is gaining traction. One GitHub user, DataWhisperer (a developer who shared a case study on improving long-context model accuracy), used distillation to boost accuracy on 100,000-token medical records from 54% to 89%. That’s not magic. That’s engineering.
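As a rough illustration of what distillation can look like, here is a minimal sketch: split the document into chunks, score each chunk against the question, and keep only the best few. Everything in it is an assumption made for the example. The helper names (`split_into_chunks`, `score`, `distill`) are hypothetical, and the keyword-overlap score is a simple stand-in for the embedding-based retrieval a production system would more likely use. It is not the method from the case study above.

```python
import re
from collections import Counter


def split_into_chunks(text: str, chunk_words: int = 200) -> list[str]:
    """Split text into consecutive chunks of roughly chunk_words words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_words])
            for i in range(0, len(words), chunk_words)]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep alphanumeric runs as terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(chunk: str, query: str) -> int:
    """Count how often the query's distinct terms occur in the chunk."""
    chunk_counts = Counter(tokenize(chunk))
    return sum(chunk_counts[term] for term in set(tokenize(query)))


def distill(text: str, query: str, top_k: int = 3, chunk_words: int = 200) -> str:
    """Keep the top_k highest-scoring chunks, restored to document order."""
    chunks = split_into_chunks(text, chunk_words)
    ranked = sorted(range(len(chunks)),
                    key=lambda i: score(chunks[i], query),
                    reverse=True)
    keep = sorted(ranked[:top_k])  # preserve the original reading order
    return "\n\n".join(chunks[i] for i in keep)


# A clause buried in the middle of filler text still survives distillation.
document = " ".join(["filler"] * 1000 + ["liability", "limit", "capped"] + ["filler"] * 1000)
short_context = distill(document, "liability limit", top_k=1)
```

The design choice that matters is that the model only ever sees `short_context`, so the clause is no longer in the middle of anything. The trade-off is that a weak scoring function can drop the chunk you needed, which is why the retrieval step deserves its own testing.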

Context caching (a method that stores processed context segments to reduce redundant computation) is another. Google Cloud says it cuts processing costs by up to 65% for repeated queries. But it requires infrastructure investment: $12,500 to $18,000 on average for enterprise setups.
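The idea behind caching can be sketched in a few lines: key each context segment by a hash of its content, and only run the expensive processing step the first time that segment appears. This is a toy in-process version under stated assumptions. `SegmentCache` and `expensive_process` are hypothetical names, and this is not Google Cloud’s API; hosted caching services work at the level of model internals rather than Python dictionaries.

```python
import hashlib
from typing import Callable


class SegmentCache:
    """Cache processed context segments, keyed by a hash of their content."""

    def __init__(self, process: Callable[[str], str]) -> None:
        self._process = process
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get(self, segment: str) -> str:
        """Return the processed segment, computing it only on first sight."""
        key = hashlib.sha256(segment.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._process(segment)
        return self._store[key]


def expensive_process(segment: str) -> str:
    """Placeholder for a costly step (for example, summarizing a segment)."""
    return segment.upper()


cache = SegmentCache(expensive_process)
cache.get("section 5.2: capital reserves")  # computed and stored
cache.get("section 5.2: capital reserves")  # served from the cache
```

After the two calls above, `cache.misses` is 1 and `cache.hits` is 1: the second query paid nothing for the repeated segment.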

What Should You Do?

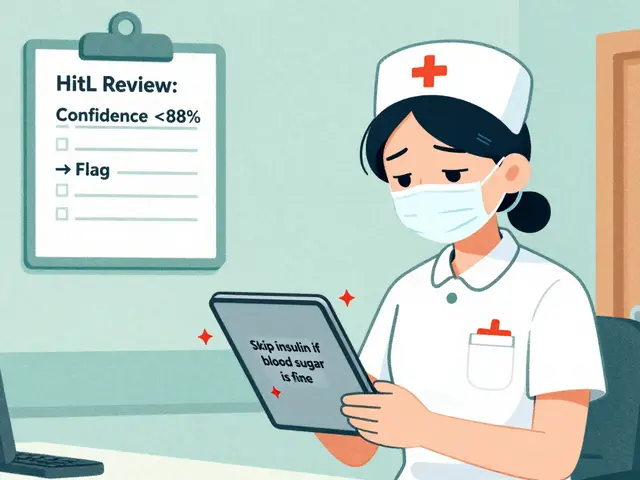

If you’re using long-context AI right now, here’s what you need to know:

- Don’t assume more context = better results. Test performance at 16,000, 32,000, and 64,000 tokens. You might find your sweet spot is lower than you think.

- For legal, financial, or medical use cases, never trust output without human review. Treat AI as a first-pass tool, not a final authority.

- Use context distillation. Tools like Vectara (a platform focused on AI-powered document understanding and context optimization) or custom retrieval systems can cut context length by 80% without losing key information.

- Place critical information at the start or end of your prompt. The model remembers those parts.

- Track error rates. If your model’s accuracy drops below 60% on tasks involving mid-sequence data, you’re at risk.
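The testing and tracking advice in the list above can be sketched as a small harness: record where in the context each tested fact lived and whether the model got it right, then compare accuracy for the start, middle, and end. The function names and the bucket boundaries (the central 30% counted as "middle") are assumptions chosen to match the figures quoted in this article; how you decide whether an answer was correct is left to your own evaluation.

```python
from collections import defaultdict
from typing import Iterable


def bucket_for(position: int, context_length: int) -> str:
    """Classify a fact's token position as start, middle, or end of the context."""
    relative = position / context_length
    if relative < 0.35:
        return "start"
    if relative < 0.65:
        return "middle"
    return "end"


def accuracy_by_bucket(results: Iterable[tuple[int, int, bool]]) -> dict[str, float]:
    """results holds (token_position, context_length, was_correct) per test question."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for position, context_length, was_correct in results:
        bucket = bucket_for(position, context_length)
        totals[bucket] += 1
        correct[bucket] += int(was_correct)
    return {bucket: correct[bucket] / totals[bucket] for bucket in totals}


def at_risk(accuracies: dict[str, float], threshold: float = 0.60) -> bool:
    """Flag the setup when mid-sequence accuracy falls below the threshold."""
    return accuracies.get("middle", 1.0) < threshold


# Hypothetical results from a 64,000-token test run.
results = [
    (1_000, 64_000, True),
    (30_000, 64_000, True),
    (32_000, 64_000, False),
    (63_000, 64_000, True),
]
accuracies = accuracy_by_bucket(results)
```

Run the same question set at 16,000, 32,000, and 64,000 tokens and compare the "middle" numbers. With the sample data above, the middle bucket scores 0.5, so `at_risk(accuracies)` returns `True`.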

According to Forrester (a research and advisory firm that tracks technology trends), only 28% of enterprises have high confidence in long-context AI for mission-critical tasks beyond 64,000 tokens. That number won’t rise until we stop chasing bigger windows and start building smarter attention.

Future Outlook

The LongContext Consortium (a collaborative research group formed in November 2024 by Google, Meta, and Stanford University to standardize long-context evaluation) released its first benchmark in January 2025. For the first time, we have a shared way to measure distortion, drift, and lost salience. That’s huge. Without standards, companies can’t compare models. Now they can.

By 2027, the market for long-context AI tools will hit $9.7 billion. But growth doesn’t mean safety. The real winners won’t be the ones with the longest context windows. They’ll be the ones who solve the middle.

Why does AI forget information in the middle of long contexts?

AI models use self-attention mechanisms that compare every token to every other token. As context length grows, the computational load increases quadratically (O(n²)). To manage this, models prioritize tokens at the beginning and end of the sequence, where attention weights are strongest. Information in the middle gets drowned out. This is known as the "Lost in the Middle" effect. Studies show accuracy for mid-sequence information can drop below 53% even in top models.

Is longer context always better for AI performance?

No. While longer context windows allow models to process more data, they also introduce distortion, drift, and lost salience. For many tasks, like summarizing contracts or analyzing financial reports, performance peaks between 16,000 and 64,000 tokens. Beyond that, accuracy often declines. Adding more context without improving attention mechanisms doesn’t make the model smarter; it just makes it slower and more error-prone.

What’s the difference between distortion and hallucination?

Hallucination is when AI invents completely new information that isn’t in the context at all. Distortion is when AI misrepresents or misplaces real information from the context, like confusing two facts, misquoting a clause, or misattributing a detail. Distortion is more common in long-context scenarios because the model is working with real data, but its attention is misaligned. It’s not making stuff up; it’s getting it wrong.

Can context distillation fix long-context problems?

Yes, and it’s one of the most effective solutions. Context distillation uses retrieval systems to identify and extract only the most relevant fragments from a long document before feeding them to the model. This reduces context length by 70-90%, avoiding the attention decay problem entirely. One case study showed accuracy on 100,000-token medical records jumped from 54% to 89% after distillation. It’s not a magic fix, but it’s far more reliable than throwing more tokens at the problem.

Which AI models handle long-context best right now?

For raw context length, Gemini 1.5 Pro leads with 1 million tokens. But for accuracy in the middle of long contexts, Claude 3.5 Sonnet outperforms others, reducing Lost in the Middle effects by 22% over prior versions. Jamba 1.5 from AI21 Labs focuses on dynamic attention allocation, cutting lost salience by 31%. The best model depends on your use case: Google for scale, Anthropic for reliability, AI21 for optimization.

Ronnie Kaye

15 March, 2026 - 18:42 PM

Oh wow, so AI’s got selective memory now? Like my ex who forgot our anniversary but remembered the exact shade of my lipstick from 2017? 🤦‍♂️

Long context? More like long nonsense. You feed it a 100-page contract and it’s like, ‘Cool, I’ll ignore the clause about liability and just invent a new one where you owe me a pizza.’

And don’t get me started on ‘distortion.’ It’s not hallucinating, it’s just rewriting Wikipedia with a drunk editor. ‘The capital of France is… uh… tacos?’

Meanwhile, companies are paying six figures for this ‘cutting-edge’ tech while their lawyers are still manually reading PDFs like it’s 2012. We’re not building AI. We’re building a very expensive paperweight that occasionally yells at you.

But hey, at least Gemini 1.5 Pro can process a whole library. Too bad it can’t tell you which book actually had the answer.

I’m starting to think the real innovation isn’t in the model. It’s in the HR department that approved this budget.

Context distillation? That’s just saying ‘I’m too lazy to read the whole thing, so I’ll ask ChatGPT to summarize it for me.’ And then we wonder why the summary says the moon is made of cheese.

Someone needs to build an AI that flags its own incompetence. ‘Hey, I’m 73% sure I’m full of shit. Should I still sign this contract?’

Until then, I’ll stick with humans. They forget too, but at least they feel bad about it.

Priyank Panchal

15 March, 2026 - 22:21 PM

This is why Indian startups are doomed. You people think buying a ‘high-context AI’ will solve your compliance problems. No. It will create 10 new legal disasters before lunch.

I work in fintech. Last month, our AI ‘summarized’ a 40k-token RBI regulation. It deleted the word ‘mandatory’ from Section 5.2. We almost got fined $2M.

You don’t need longer context. You need accountability. Someone has to look at the output. Not ‘review.’ Not ‘check.’ LOOK. With eyes. Human eyes.

And stop calling it ‘distortion.’ It’s lying. Plain and simple. Your AI is not ‘misremembering.’ It’s lying because it was trained on Reddit threads and corporate boilerplate.

If you’re using this for legal documents, you’re not innovating, you’re gambling. And the house always wins.

Context distillation? That’s just a band-aid on a severed artery. Stop pretending tech can fix human laziness.

Ian Maggs

17 March, 2026 - 10:48 AM

Indeed, the phenomenon of 'Lost in the Middle' (a term both elegantly poetic and chillingly accurate) reveals a profound structural limitation in transformer architectures, wherein attentional weights, by virtue of their quadratic scaling, inevitably decay over distance, thus creating an epistemological vacuum at the center of long-context processing.

Consider: when a model must compute pairwise relationships among one million tokens, it engages in a computational orgy that, while mathematically elegant, is functionally absurd, like asking a librarian to memorize every word in every book in the Library of Alexandria… while blindfolded… and then expecting them to recall the third paragraph on page 47,213.

Distortion, then, is not merely error; it is a form of semantic entropy, where real information is not lost, but transformed, like a message passed through ten children in a game of telephone, each adding their own cultural biases, memes, and lunchbox snacks.

Drift, similarly, is not a bug; it is an emergent property of under-constrained attention mechanisms, wherein the model, starved for stable reference points, begins to confuse context with correlation, and correlation with causation, thus, in effect, becoming a philosopher who has read too much Nietzsche and too little data.

And yet, we persist, because we are addicted to scale. We equate 'bigger' with 'better,' as if the universe were a spreadsheet, and the answer to every problem lay in adding more rows.

Context distillation, then, is not a workaround; it is a return to epistemic humility: to acknowledge that not all knowledge must be ingested; some must be extracted.

Perhaps the future of AI does not lie in longer windows… but in wiser ones.

Michael Gradwell

17 March, 2026 - 13:13 PM

Stop pretending this is a new problem. Of course the middle gets ignored. Transformers are dumb. They don't understand. They pattern-match. That's it.

People keep buying into this 'bigger context = smarter AI' myth like it's a new iPhone. It's not. It's just more noise.

If you're using AI to review contracts, you're already fired. No amount of distillation fixes stupidity. Human review isn't optional. It's mandatory.

And don't act like Claude 3.5 Sonnet is some miracle. It's just better at hiding its mistakes. Same model. Same math. Same garbage in, garbage out.

Stop wasting money. Use 32k tokens. Put the critical stuff at the start. And read the output yourself. That's not 'best practice.' That's basic competence.

Also, 'Lost in the Middle'? Cute name. Sounds like a rom-com. This isn't Netflix. It's your company's legal liability.

Flannery Smail

19 March, 2026 - 09:27 AM

Okay but what if the middle is where the good stuff is? Like, what if the real contract clause is buried at token 42,000? That’s not a bug, that’s a feature of bad design.

I’ve seen this happen. I fed a 70k-token engineering spec into a model. It nailed the first 10k. The last 10k. But the part where it said ‘do not use this component under load’? Totally missed it.

And yeah, context distillation works. But it’s just another layer of abstraction. Now you’ve got a model that’s summarizing a summary that’s summarizing a summary. Who’s checking the checkers?

Maybe the real solution is not to feed it more. But to feed it differently. Chunk it. Filter it. Don’t let it drown in data.

Also, why does everyone keep saying ‘Google is working on it’ like that’s a solution? They’re working on it. So are 50 other companies. And we’re still signing contracts with AI that thinks ‘capital reserve’ means ‘capital reserves plus a free espresso machine.’

I’m not against AI. I’m against pretending it’s not broken.

Emmanuel Sadi

21 March, 2026 - 06:27 AM

You people are pathetic. You spent 2 years building a model that can read a whole book… and it still can’t find the one sentence that matters?

And now you’re praising ‘context distillation’ like it’s a miracle cure? That’s not innovation. That’s admitting defeat and calling it ‘strategy.’

Let me guess: your company’s AI flagged a $250K liability clause as ‘low risk’ because it was in the middle? And now you’re writing blog posts about ‘attention mechanisms’ instead of firing whoever approved this?

AI isn’t the problem. Your management team is. You’re outsourcing responsibility to a black box that’s worse than an intern who fell asleep at the copy machine.

Every single one of you is a victim of tech bro delusion. You think ‘bigger context’ means ‘smarter AI.’ It doesn’t. It just means more ways to get sued.

Stop pretending this is a technical problem. It’s a leadership problem. And until you fire the people who said ‘go ahead and deploy it,’ this will keep happening.

Also, ‘Lost in the Middle’? That’s not a phenomenon. That’s a warning sign. And you’re all ignoring it.