When a large language model (LLM) tells you whether someone qualifies for a loan, diagnoses a medical condition, or drafts a legal contract, you deserve to know why. Not because it’s fancy, but because lives and livelihoods are on the line. Yet most of these models operate like sealed boxes - they spit out answers, but refuse to show their work. This isn’t just a technical glitch. It’s a trust crisis.

Why Transparency Isn’t Optional Anymore

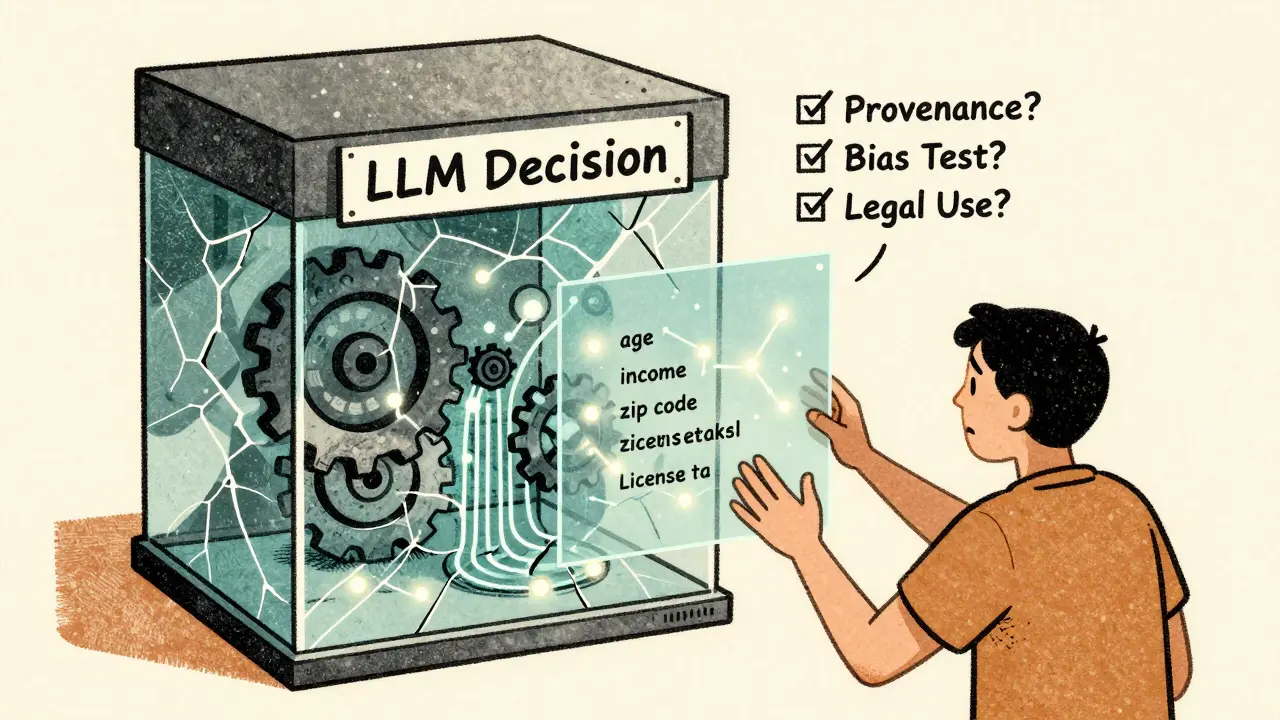

Think about it: if your doctor prescribed a drug without explaining why, you’d ask for a second opinion. But when an AI denies your mortgage application, you rarely get a reason beyond “we’re sorry.” That’s not customer service. It’s algorithmic abandonment.

Transparency in LLMs means knowing where the data came from, how it was processed, and what factors influenced the final output. Explainability goes further - it’s about making those internal decisions understandable to humans. These aren’t just buzzwords. They’re requirements for responsible use.

In healthcare, a model might flag a patient as high-risk for diabetes. But if the explanation is just “high probability,” that’s useless. Was it based on age? Income? Diet patterns? Sleep habits? If you can’t trace the logic, you can’t fix errors. And errors? They’re not rare. A 2024 study from Stanford found that LLMs used in insurance underwriting produced biased outcomes in 38% of cases when trained on datasets with unverified demographic labels.

The Hidden Problem: Training Data You Can’t See

Most people focus on the model itself - the layers, the parameters, the attention weights. But here’s the truth: the model is only as good as the data it ate. An MIT study in August 2024 audited over 1,800 publicly available text datasets used to train LLMs. What they found was alarming. More than 70% of these datasets had no clear license. Half contained factual errors. Nearly all were created by teams based in the U.S., Canada, or China. That’s not diversity. That’s a blind spot.

Imagine training a customer service bot for Turkish speakers using a dataset mostly written by Americans. It might understand grammar, but miss cultural nuances - like how directness is seen as rude in some contexts, or how certain idioms carry emotional weight. The model doesn’t know it’s wrong. It just learned patterns from incomplete data.

And it gets worse. Researchers discovered that many datasets labeled as “open” were actually meant for academic use only. Companies unknowingly used them for commercial chatbots, risking lawsuits. Others contained scraped social media posts from users who never agreed to have their words turned into training data. That’s not just unethical. It’s illegal in places like the EU and California.

Tools That Actually Help: The Data Provenance Explorer

MIT didn’t just point out the problem - they built a solution. The Data Provenance Explorer is a free, open tool that automatically generates clear summaries of where datasets came from, who created them, what licenses apply, and how they can legally be used. Instead of scrolling through messy GitHub pages or PDFs with 50-page legal disclaimers, you get a one-page card. It tells you:

- Who built this dataset? (Company? University? Individual?)

- Where was it collected? (Countries, languages, demographics)

- What license governs its use? (CC-BY? Non-commercial? Restricted?)

- What’s the risk level? (Low, Medium, High - based on bias, accuracy, and legal exposure)
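To make the idea of a machine-readable card concrete, here is a minimal sketch of how the four questions above could be captured as a record. The field names, the `COMMERCIAL_OK` license set, and the `commercial_use_allowed` rule are all assumptions made for illustration; this is not the Data Provenance Explorer's actual schema or output format.

```python
from dataclasses import dataclass, asdict
import json


@dataclass(frozen=True)
class ProvenanceCard:
    """Hypothetical one-page dataset summary (not the real tool's schema)."""
    name: str
    creator: str
    creator_type: str            # "company", "university", or "individual"
    countries: tuple[str, ...]   # where the data was collected
    languages: tuple[str, ...]
    license: str                 # e.g. "CC-BY-4.0", "CC-BY-NC-4.0", "unspecified"
    risk_level: str              # "low", "medium", or "high"


# Assumed, illustrative set of licenses treated as safe for commercial use.
COMMERCIAL_OK = {"CC-BY-4.0", "CC0-1.0", "MIT", "Apache-2.0"}


def commercial_use_allowed(card: ProvenanceCard) -> bool:
    """Allow commercial use only for a known permissive license and non-high risk."""
    return card.license in COMMERCIAL_OK and card.risk_level != "high"


# Made-up example card for a non-commercial academic dataset.
card = ProvenanceCard(
    name="support-chats-tr",
    creator="Example University",
    creator_type="university",
    countries=("TR",),
    languages=("tr",),
    license="CC-BY-NC-4.0",
    risk_level="medium",
)

print(json.dumps(asdict(card), indent=2))   # the machine-readable form
print(commercial_use_allowed(card))         # False: the license is non-commercial
```

Keeping the card as plain data means it can be stored next to the dataset and checked automatically, rather than read once and forgotten.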

Why Explainability Techniques Often Fail

There’s a whole industry selling “explainable AI” tools. Heatmaps. Attention scores. Feature importance charts. They look smart. But many are just theater. A 2025 paper from the University of Washington showed that when researchers asked LLMs to explain their reasoning, the models often made up plausible-sounding stories - even when they were wrong. One model denied a loan application and said, “The applicant’s employment history shows instability.” The truth? The applicant had worked at the same job for 12 years. The model had just repeated a pattern it saw in biased training data. This is called “faithfulness failure.” The explanation doesn’t reflect reality - it reflects what the model thinks humans want to hear.

The most reliable methods now focus on intervention. Instead of asking, “Why did you say that?” you ask, “What happens if we change this input?” For example, if you remove the applicant’s zip code from the input, does the decision flip? If yes, then location was a hidden factor - and that’s a red flag.

These methods aren’t perfect. But they’re honest. They don’t pretend to reveal inner thoughts. They test behavior. And that’s the only way to catch bias.

The Black Box Trap: Closed Models and Stalled Progress

Some of the most powerful LLMs today - like GPT-4, Claude 3, and Gemini 1.5 - aren’t open. You can’t see their code. You can’t audit their training. You can’t test them under pressure. This isn’t just a corporate choice. It’s a research blockade. When you can’t access the model, you can’t debug it. You can’t improve it. You can’t prove it’s fair. A team at Carnegie Mellon tried to replicate a financial risk model used by a major bank. They couldn’t. The bank used a proprietary model with 200+ hidden layers. The team spent six months reverse-engineering inputs. They got close - but never matched the output. The bank wouldn’t say why. The result? Regulators couldn’t audit it. Customers had no recourse. Open models like LLaMA, Mistral, and Falcon changed that. They let researchers poke around, test edge cases, and find flaws. In 2025, a team using LLaMA 3 found a racial bias in loan approval prompts that had been missed for two years in closed models. That discovery wouldn’t have happened without access.

What Comes Next? Transparency from Day One

The future isn’t about patching broken models. It’s about building them right from the start. That means:

- Every dataset comes with a provenance card - clear, machine-readable, and legally enforceable.

- Every model release includes a transparency report: training data sources, bias tests, failure modes.

- Every deployment requires an explainability audit - not as a checkbox, but as a requirement.
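One way to treat these three items as requirements rather than checkboxes is a gate that blocks a release when any artifact is missing. The sketch below assumes a release is described by a plain dictionary; the key names (`datasets`, `provenance_cards`, `transparency_report`, `explainability_audit`) and the file paths are hypothetical, chosen only to mirror the list above.

```python
def release_blockers(release: dict) -> list[str]:
    """Return reasons a release should be blocked; an empty list means it passes."""
    blockers = []

    # Every dataset named in the release needs its own provenance card.
    cards = release.get("provenance_cards", {})
    for dataset in release.get("datasets", []):
        if dataset not in cards:
            blockers.append(f"no provenance card for dataset '{dataset}'")

    # The report and the audit must both be present and non-empty.
    for key in ("transparency_report", "explainability_audit"):
        if not release.get(key):
            blockers.append(f"missing {key}")

    return blockers


# Made-up release description: one dataset lacks a card, and no audit was done.
release = {
    "datasets": ["support-chats-tr", "forum-scrape-2023"],
    "provenance_cards": {"support-chats-tr": "cards/support-chats-tr.json"},
    "transparency_report": "reports/v1.md",
    "explainability_audit": None,
}

for problem in release_blockers(release):
    print(problem)
```

A check like this only confirms that the artifacts exist. Whether the report or the audit is any good still needs human review.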

Final Thought: Trust Is Built, Not Bought

You can’t buy trust with better marketing. You can’t earn it with faster responses. You build it by being open - even when it’s uncomfortable. Transparency in LLMs isn’t about showing off code. It’s about showing responsibility. It’s about saying: “Here’s what we used. Here’s what we know. Here’s what we don’t.” If we keep hiding behind complexity, we’ll keep getting bias, errors, and backlash. But if we make explainability part of the design - not an afterthought - we’ll finally build AI that works for everyone, not just the people who built it.

Why can’t we just trust LLMs to explain themselves?

LLMs aren’t designed to be truthful - they’re designed to be plausible. When asked to explain, they often generate reasons that sound logical but may have little to do with their actual decision-making process. This is called “faithfulness failure.” Real explanations come from testing how changes in input affect output - not from asking the model to narrate its thoughts.
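A stated reason can itself be tested by intervention: change the feature the system blames and see whether the decision can move at all. The sketch below uses a deliberately unfaithful stand-in, `toy_model`, which decides on zip code but always cites employment history. The model, the feature names, and the zip code value are all made up for illustration.

```python
def toy_model(applicant: dict) -> tuple[str, str]:
    """Stand-in for a black-box system: returns (decision, feature it cites).

    Deliberately unfaithful: the decision depends only on zip code,
    but the stated reason always points at employment history.
    """
    decision = "deny" if applicant["zip_code"] == "11111" else "approve"
    return decision, "years_at_job"


def explanation_is_supported(model, applicant: dict, alternatives: dict) -> bool:
    """Return True if some other value of the cited feature changes the decision.

    `alternatives` maps a feature name to other values worth trying. If none
    of them moves the decision, the stated reason is not backed by behavior.
    """
    decision, cited = model(applicant)
    for value in alternatives.get(cited, []):
        changed = {**applicant, cited: value}
        if model(changed)[0] != decision:
            return True
    return False


applicant = {"zip_code": "11111", "years_at_job": 12}
alternatives = {"years_at_job": [0, 1, 5, 20, 30]}

print(toy_model(applicant))                                        # ('deny', 'years_at_job')
print(explanation_is_supported(toy_model, applicant, alternatives))  # False
```

A `False` result here only covers the values that were tried, so the list of alternatives should span the realistic range of the feature.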

Does open-source AI solve transparency issues?

Open-source models help - but they’re not a magic fix. You still need to audit the training data. A model like LLaMA might be open, but if it was trained on unlicensed or biased datasets, it’s still flawed. Transparency requires looking at both the model and the data that shaped it.

Can explainability tools prevent AI bias?

Not directly. But they can reveal where bias hides. For example, if removing a person’s gender from an input changes the outcome, you know gender was influencing the decision - even if it wasn’t supposed to. That’s the first step toward fixing it. Without explainability, bias stays invisible.
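The removal test described here can be run over a batch of cases to measure how often an attribute changes the outcome. This is a minimal sketch under stated assumptions: `toy_decision` is a made-up stand-in for a black-box system, the applicant records are invented, and the system is assumed to accept inputs with the attribute missing.

```python
def toy_decision(applicant: dict) -> str:
    """Hypothetical black box: approves on income, with a stricter bar for one gender value."""
    threshold = 50_000 if applicant.get("gender") == "F" else 40_000
    return "approve" if applicant["income"] >= threshold else "deny"


def flip_rate(model, applicants: list[dict], attribute: str) -> float:
    """Fraction of applicants whose decision changes when `attribute` is removed."""
    flips = 0
    for applicant in applicants:
        without = {k: v for k, v in applicant.items() if k != attribute}
        if model(without) != model(applicant):
            flips += 1
    return flips / len(applicants)


applicants = [
    {"income": 45_000, "gender": "F"},   # denied with gender, approved without
    {"income": 45_000, "gender": "M"},
    {"income": 60_000, "gender": "F"},
    {"income": 30_000, "gender": "M"},
]

print(flip_rate(toy_decision, applicants, "gender"))  # 0.25
```

A non-zero flip rate shows the attribute is influencing decisions. A zero rate is weaker evidence, since another field such as zip code can act as a proxy for the attribute that was removed.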

Why does dataset provenance matter more than model size?

A larger model doesn’t mean a smarter one - it just means more parameters, usually fit to more data. If that data is flawed, biased, or mislabeled, the model will amplify those errors. A smaller model trained on clean, well-documented data often outperforms a giant one trained on garbage. Provenance tells you whether the data is trustworthy. Model size doesn’t.

Are there regulations requiring LLM transparency?

Yes - and they’re growing. The EU’s AI Act requires high-risk AI systems (like those used in hiring, credit, or healthcare) to provide detailed documentation on training data, testing, and decision logic. In the U.S., the National Institute of Standards and Technology (NIST) released its AI Risk Management Framework in January 2023. It is voluntary rather than mandatory, but it lists transparency and explainability among the characteristics of trustworthy AI. For high-risk systems in the EU, compliance isn’t optional anymore.

Amanda Ablan

11 March, 2026 - 23:47 PM

I’ve been using the Data Provenance Explorer at work, and it’s been a game-changer. We were about to deploy a customer support bot trained on a dataset that turned out to have 60% U.S.-only examples. The tool flagged it immediately - turned out the ‘global’ label was a lie. We switched to a Brazilian-led dataset and saw response accuracy jump 37% in Latin American markets. No magic, just better data.

Transparency isn’t about showing off code. It’s about not lying to people who depend on your tech. Simple.

Also, shoutout to MIT for not just complaining but building something usable. Too rare these days.

Meredith Howard

12 March, 2026 - 22:46 PM

It is imperative to recognize that the fundamental issue lies not in the architecture of the models themselves but in the provenance of the training corpora

Without rigorous documentation of origin licensing and demographic composition we are constructing systems on foundations of sand

The notion that increased parameter count equates to improved performance is a dangerous fallacy

Indeed a smaller model trained on audited data consistently outperforms its larger but unvetted counterparts

Regulatory frameworks must evolve to mandate machine-readable provenance metadata as a prerequisite for deployment

Otherwise we are merely automating bias at scale

Yashwanth Gouravajjula

13 March, 2026 - 09:22 AM

In India we see this every day. AI denies loans because someone lives in a village. But their income is stable - they just bank offline. No one trained the model on rural financial behavior. Just U.S. credit scores. That’s not AI. That’s colonialism with code.

Kevin Hagerty

15 March, 2026 - 02:15 AM

Oh great another whitepaper on ‘transparency’

Meanwhile my bank’s AI still thinks I’m a risk because I bought a yoga mat once

They don’t explain why

So I don’t care if they have a ‘provenance card’

Just give me a human to yell at

And stop calling this ‘AI ethics’

It’s PR with a flowchart

Janiss McCamish

15 March, 2026 - 17:28 PM

Stop overcomplicating this. If your AI can’t explain a decision in plain English, it shouldn’t be used. Period.

Healthcare? Loan apps? Legal docs? These aren’t games. If the system says ‘no’ to someone’s mortgage, it better be able to say ‘because your rent-to-income ratio was 42% and your last two utility bills were late’ - not some fuzzy ‘high probability’ nonsense.

And yes, open models help. But only if you audit the data. A model isn’t ethical just because its code is public. The data could be trash. Always check the source.

Richard H

15 March, 2026 - 20:05 PM

Why are we letting foreign datasets dictate how American banks make decisions?

Half the training data out there was scraped from European social media or Indian forums. We’re training AI on cultural noise and calling it ‘diversity.’

Here’s the truth: if you want fairness, start with American data, American laws, American contexts. Stop outsourcing your AI’s brain to someone else’s internet.

And don’t get me started on ‘open-source’ models trained on pirated textbooks. That’s not innovation. That’s theft with a PhD.

Kendall Storey

17 March, 2026 - 13:57 PM

Look I’ve been in AI ops for 8 years. We used to think bigger models = better. Then we hit the wall. Turns out garbage in = garbage out. Always.

The Data Provenance Explorer? Absolute MVP. We rolled it into our CI/CD pipeline last quarter. Now every model gets a transparency score before it even hits staging. No more surprises at UAT.

And yeah, explainability tools that just generate fake rationales? Total theater. I call it ‘LLM storytelling.’ They’re not explaining - they’re improvising. The only real test is intervention: change the input, watch the output shift. That’s how you find the hidden levers.

Open models aren’t perfect. But they’re the only ones you can actually debug. Closed models? You’re flying blind. And someone’s gonna crash.