Category: Software Development - Page 2

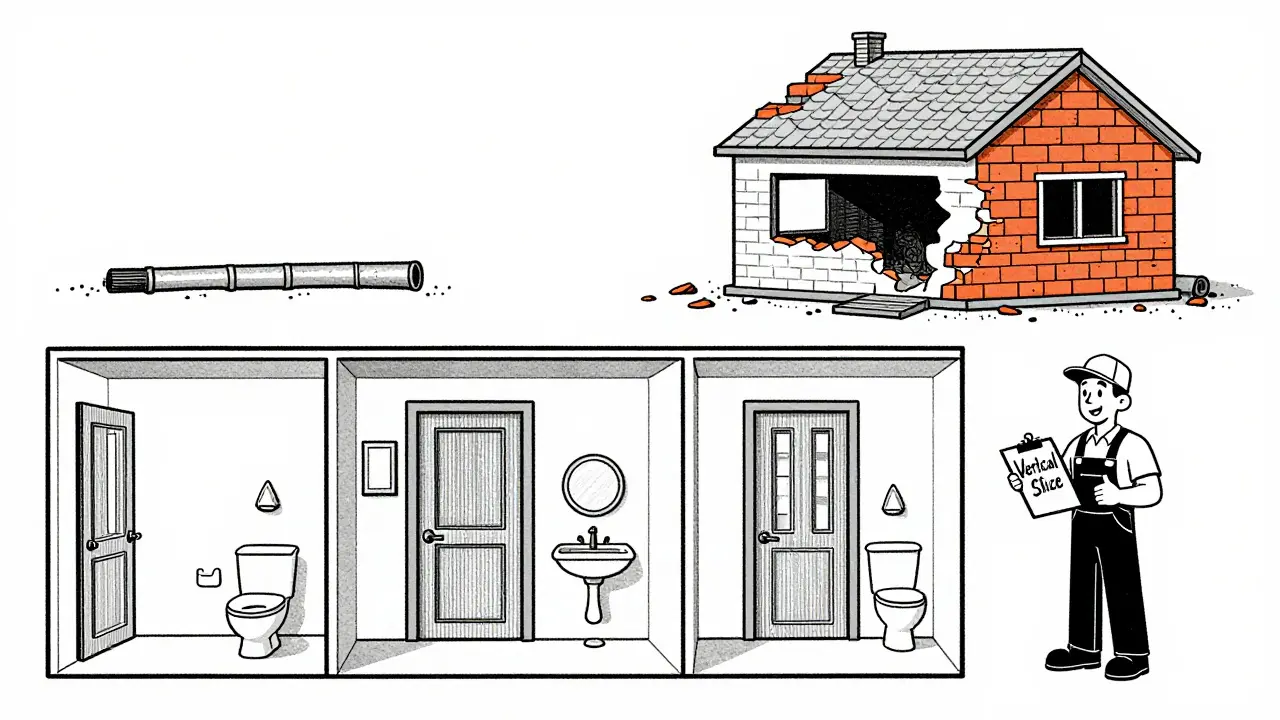

Scoping Prompts to Vertical Slices: End-to-End Over Feature Fragments

Vertical slicing delivers real user value by building end-to-end features instead of fragmented layers. Learn how this Agile method cuts development time by 40%, improves feedback, and transforms how teams ship software.

Replit: Cloud Development with AI Agents and One-Click Deploys for Vibe Coding

Replit transforms coding into a smooth, collaborative experience with cloud-based development, AI-powered agents, and one-click deploys. No setup is required: you code directly in the browser. It suits startups, education, and teams that want to ship faster. Learn how Replit’s AI handles 90% of foundational code and deploys apps instantly.

How to Use Vibe Coding for Frontend i18n and Localization

Learn how vibe coding speeds up frontend i18n setup with LLMs, and how proper prompting helps you avoid linguistic pitfalls. Includes real-world examples, best practices, and an explanation of tools like i18next. Discover why hybrid approaches are becoming standard for global apps.

Style Guides for Prompts: Achieving Consistent Code Across Sessions

A coding style guide ensures consistent, readable code across teams and sessions. Learn how to build a practical, tool-driven guide that reduces review time, cuts bugs, and keeps developers sane without overwhelming them with rules.

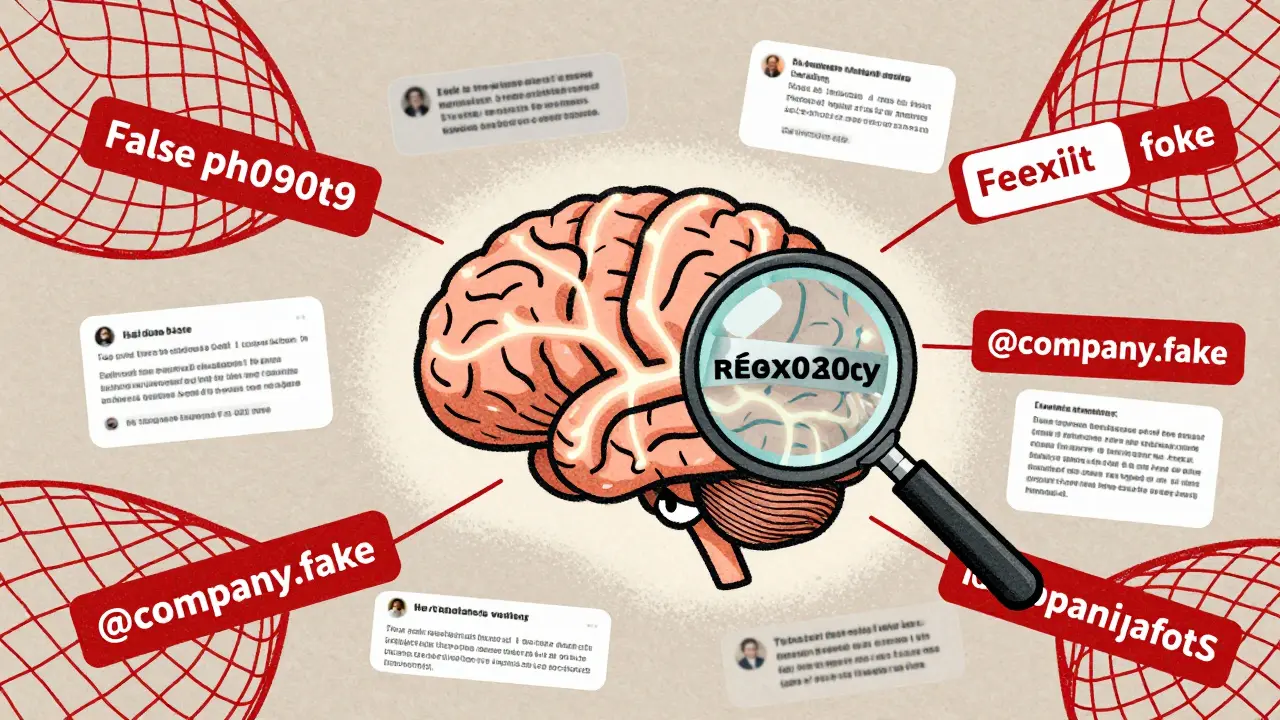

Post-Processing Validation for Generative AI: Rules, Regex, and Programmatic Checks to Stop Hallucinations

Post-processing validation catches generative AI hallucinations using rules, regex, and programmatic checks. Learn how to build a layered defense that stops fabricated content before it reaches users.
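A minimal sketch of the layered idea in Python: a rule layer (non-empty, length limit), a regex layer that extracts citations and ISO dates, and a programmatic layer that checks those extractions against ground truth. The `[doc:<id>]` citation format, the name `validate_answer`, and the 2,000-character limit are hypothetical choices for illustration, not the article's implementation.

```python
import re
from dataclasses import dataclass, field
from datetime import date

# Hypothetical citation format emitted by the generator: [doc:<id>]
CITATION_RE = re.compile(r"\[doc:(\w+)\]")
ISO_DATE_RE = re.compile(r"\b(\d{4})-(\d{2})-(\d{2})\b")


@dataclass
class ValidationResult:
    ok: bool
    errors: list[str] = field(default_factory=list)


def validate_answer(answer: str, known_doc_ids: set[str], max_chars: int = 2000) -> ValidationResult:
    errors: list[str] = []

    # Layer 1: simple rules on the raw text.
    if not answer.strip():
        errors.append("empty answer")
    if len(answer) > max_chars:
        errors.append(f"answer longer than {max_chars} characters")

    # Layer 2: regex extraction of structured claims.
    cited = CITATION_RE.findall(answer)
    dates = ISO_DATE_RE.findall(answer)
    if not cited:
        errors.append("no citations found")

    # Layer 3: programmatic checks against ground truth.
    for doc_id in cited:
        if doc_id not in known_doc_ids:
            errors.append(f"unknown citation: {doc_id}")
    for y, m, d in dates:
        try:
            date(int(y), int(m), int(d))  # raises ValueError for impossible dates
        except ValueError:
            errors.append(f"invalid date: {y}-{m}-{d}")

    return ValidationResult(ok=not errors, errors=errors)


result = validate_answer("Released on 2024-02-30 [doc:a1] [doc:zz]", {"a1", "b2"})
print(result.ok, result.errors)
# False ['unknown citation: zz', 'invalid date: 2024-02-30']
```

Collecting every error instead of stopping at the first one lets the caller decide whether to regenerate, repair, or block the response.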

Quantization-Aware Training for LLMs: How to Keep Accuracy While Shrinking Model Size

Quantization-aware training lets you shrink large language models to 4-bit precision while retaining most of their accuracy. Learn how it works, why it beats traditional methods, and how to use it in 2026.
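The core mechanism of quantization-aware training fits in a few lines of plain Python: the forward pass uses fake-quantized weights (rounded to a 4-bit integer grid, then dequantized), while a full-precision copy of the weights receives the updates through a straight-through estimator. This sketch assumes symmetric per-tensor scaling and a toy two-weight linear model; real QAT setups typically use per-channel scales, calibrated or learned ranges, and autograd, so treat it as an illustration rather than a recipe.

```python
def fake_quantize(weights: list[float], bits: int = 4) -> list[float]:
    """Symmetric per-tensor fake quantization: round to an integer grid, then dequantize."""
    qmax = 2 ** (bits - 1) - 1   # 7 for 4-bit
    qmin = -(2 ** (bits - 1))    # -8 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [max(qmin, min(qmax, round(w / scale))) * scale for w in weights]


def mse(weights, data):
    """Mean squared error of a linear model y = w . x."""
    return sum((sum(w * x for w, x in zip(weights, xs)) - y) ** 2 for xs, y in data) / len(data)


def qat_train(data, init_weights, lr=0.1, steps=200, bits=4):
    shadow = list(init_weights)  # full-precision weights that receive the updates
    for _ in range(steps):
        q = fake_quantize(shadow, bits)  # forward pass sees only quantized weights
        grads = [0.0] * len(shadow)
        for xs, y in data:
            err = sum(w * x for w, x in zip(q, xs)) - y
            for i, x in enumerate(xs):
                grads[i] += 2 * err * x / len(data)
        # Straight-through estimator: treat rounding as the identity in the
        # backward pass, so the gradient computed at q updates the shadow weights.
        shadow = [w - lr * g for w, g in zip(shadow, grads)]
    return fake_quantize(shadow, bits)  # the 4-bit weights you would deploy


data = [((1.0, 0.0), 0.6), ((0.0, 1.0), -0.3)]
w4 = qat_train(data, init_weights=[0.0, 0.0])
print(w4, mse(w4, data))
```

Because the loss is always computed with the quantized weights, training steers the full-precision weights toward values that still work after rounding, something quantizing only after training never sees.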

Memory Planning to Avoid OOM in Large Language Model Inference

Learn how memory planning techniques like CAMELoT and Dynamic Memory Sparsification reduce OOM errors in LLM inference without sacrificing accuracy, enabling larger models to run on standard hardware.
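To see why inference runs out of memory, a back-of-the-envelope budget helps: model weights plus the key-value (KV) cache, which grows linearly with sequence length and batch size. This is a generic estimate, not CAMELoT or Dynamic Memory Sparsification; the model dimensions, the 10% reserve for activations and fragmentation, and the helper names are assumptions.

```python
GIB = 2 ** 30


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int, seq_len: int,
                   batch: int, bytes_per_elem: int = 2) -> int:
    # Factor 2: each layer stores one key tensor and one value tensor.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem


def max_batch(gpu_bytes: int, weight_bytes: int, per_seq_kv_bytes: int,
              reserve_frac: float = 0.1) -> int:
    """Largest batch whose KV cache fits after the weights and a safety reserve."""
    usable = gpu_bytes * (1 - reserve_frac) - weight_bytes
    return max(0, int(usable // per_seq_kv_bytes))


# Hypothetical 8B-parameter model in fp16 (2 bytes per element).
per_seq = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=4096, batch=1)
weights = 8 * 10**9 * 2
print(per_seq / GIB)                          # 0.5
print(max_batch(24 * GIB, weights, per_seq))  # 13
```

Under these assumptions each 4,096-token sequence needs 2 × 32 × 8 × 128 × 4,096 × 2 bytes = 0.5 GiB of KV cache, so a 24 GiB GPU holds about 13 sequences after the weights and reserve. Techniques that compress or sparsify the KV cache shrink `per_seq_kv_bytes`, which is what allows longer contexts or larger batches to fit.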

Open Source in the Vibe Coding Era: How Community Models Are Shaping AI-Powered Development

Open-source AI models are reshaping software development through community-driven fine-tuning, offering customization and control that closed-source models can't match, especially in privacy-sensitive and legacy code environments.

Security Risks in LLM Agents: Injection, Escalation, and Isolation

LLM agents can act autonomously, making them powerful but vulnerable to prompt injection, privilege escalation, and isolation failures. Learn how these attacks work and how to protect your systems before it's too late.

Constrained Decoding for LLMs: How JSON, Regex, and Schema Control Improve Output Reliability

Learn how constrained decoding ensures LLMs generate valid JSON, regex-conforming, and schema-compliant outputs without manual fixes. See when it helps, when it hurts, and how to use it correctly.
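A toy version of the masking step at the heart of constrained decoding: at each step, every token that would stop the output from being a valid prefix of the allowed language is removed before the highest-scoring token is chosen. Here the "language" is just two allowed JSON strings, and `toy_logits` is a hypothetical stand-in for a model that prefers off-schema tokens; practical implementations compile JSON schemas or regexes into automata over the tokenizer's vocabulary rather than checking string prefixes.

```python
import json

# Hypothetical constraint: the output must be exactly one of these JSON strings.
ALLOWED = ['{"status": "ok"}', '{"status": "error"}']
# Hypothetical token vocabulary (real tokenizers have tens of thousands of tokens).
VOCAB = ['Sure! ', '{"status": ', '"ok"', '"error"', '"maybe"', '}']


def toy_logits(prefix: str) -> dict[str, float]:
    """Stand-in for a model: it prefers chatty or off-schema tokens."""
    return {'Sure! ': 5.0, '"maybe"': 4.0, '"ok"': 3.0, '"error"': 2.0,
            '{"status": ': 1.0, '}': 1.0}


def allowed_next(prefix: str, vocab: list[str], allowed: list[str]) -> list[str]:
    """Tokens that keep prefix + token a prefix of at least one allowed output."""
    return [t for t in vocab if any(a.startswith(prefix + t) for a in allowed)]


def constrained_greedy(logits_fn, vocab, allowed, max_steps=10) -> str:
    prefix = ""
    for _ in range(max_steps):
        if prefix in allowed:
            return prefix
        candidates = allowed_next(prefix, vocab, allowed)
        if not candidates:
            raise ValueError(f"no valid continuation after {prefix!r}")
        scores = logits_fn(prefix)
        # Masking step: pick the best-scoring token among the legal ones only.
        prefix += max(candidates, key=lambda t: scores[t])
    raise ValueError("output incomplete after max_steps")


out = constrained_greedy(toy_logits, VOCAB, ALLOWED)
print(out, json.loads(out))
# {"status": "ok"} {'status': 'ok'}
```

Unconstrained greedy decoding with these scores would start with `Sure! `; with the mask, the only legal first token is `{"status": `. The same example hints at when constraints can hurt: the model's favorite value (`"maybe"`) is outside the schema, so the mask silently forces a different answer.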

RAG Failure Modes: Diagnosing Retrieval Gaps That Mislead Large Language Models

RAG systems often appear to work but quietly fail due to retrieval gaps that mislead large language models. Learn the 10 hidden failure modes, from embedding drift to citation hallucination, and how to detect them before they cause real damage.
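One of the simplest checks for retrieval gaps is recall@k on a small labeled set: for each query, what fraction of the documents known to answer it appear in the retriever's top k? A sketch, assuming you have hand-labeled relevant document IDs; the `EvalCase` type, document IDs, and queries are hypothetical, and this check covers only the "relevant document never retrieved" failure, not problems such as citation hallucination.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    query: str
    relevant_ids: set[str]    # hand-labeled documents that answer the query (non-empty)
    retrieved_ids: list[str]  # ranked output of the retriever under test


def recall_at_k(case: EvalCase, k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    hits = case.relevant_ids.intersection(case.retrieved_ids[:k])
    return len(hits) / len(case.relevant_ids)


def find_retrieval_gaps(cases: list[EvalCase], k: int) -> list[str]:
    """Queries for which none of the relevant documents reached the top k."""
    return [c.query for c in cases if recall_at_k(c, k) == 0.0]


cases = [
    EvalCase("refund window", {"policy-7"}, ["policy-7", "faq-2", "blog-9"]),
    EvalCase("api rate limits", {"docs-3", "docs-4"}, ["blog-1", "docs-4", "faq-2"]),
    EvalCase("sso setup", {"guide-5"}, ["blog-1", "faq-2", "guide-5"]),
]
mean_recall = sum(recall_at_k(c, k=2) for c in cases) / len(cases)
print(mean_recall, find_retrieval_gaps(cases, k=2))
# 0.5 ['sso setup']
```

Re-running the same labeled set after changes such as re-embedding the corpus is one way to notice retrieval quality degrading before users do.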

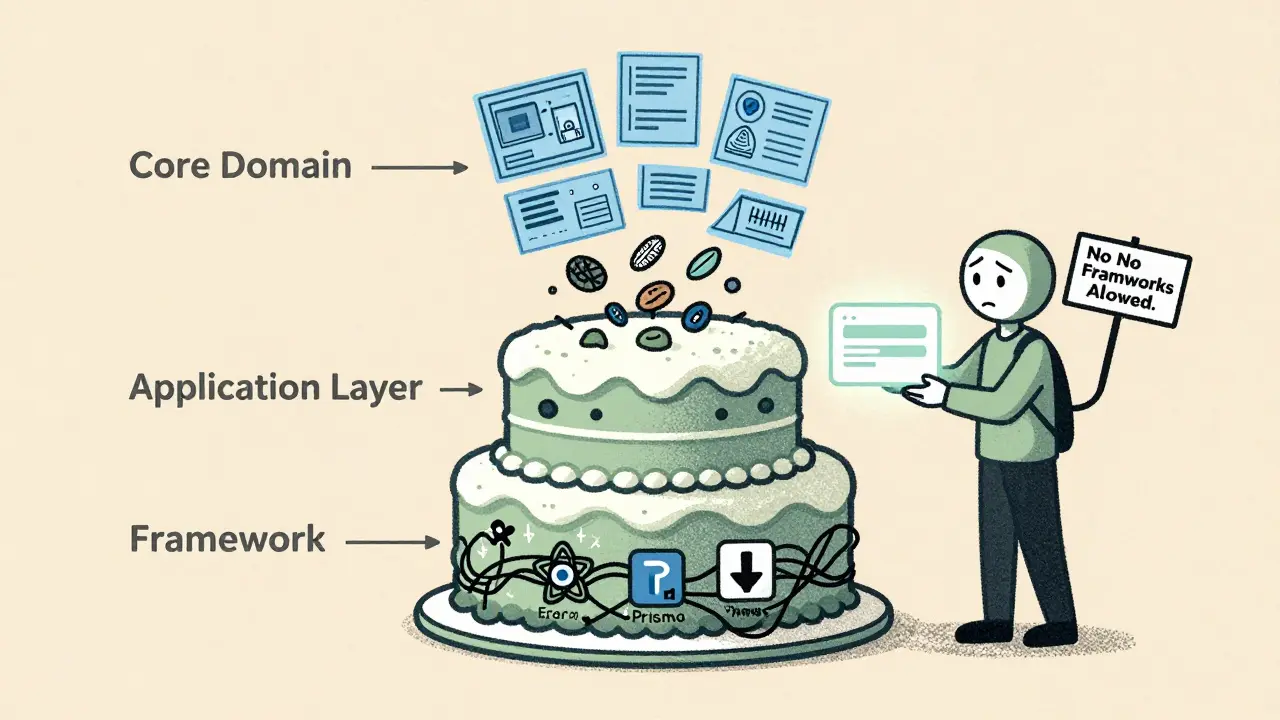

Clean Architecture in Vibe-Coded Projects: How to Keep Frameworks at the Edges

Clean Architecture keeps business logic separate from frameworks like React or Prisma. In vibe-coded projects, AI tools often mix them-leading to unmaintainable code. Learn how to enforce boundaries early and avoid framework lock-in.
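The boundary can be sketched as a port-and-adapter split. This is a Python analogue rather than React or Prisma code: a `typing.Protocol` plays the role of the port, the business rule depends only on that port, and the storage adapter lives at the edge. `Order`, `OrderRepository`, `apply_discount`, and `InMemoryOrderRepository` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Order:
    id: str
    total_cents: int


class OrderRepository(Protocol):
    """Port: the only persistence interface the business logic depends on."""
    def get(self, order_id: str) -> Optional[Order]: ...
    def save(self, order: Order) -> None: ...


def apply_discount(repo: OrderRepository, order_id: str, percent: int) -> Order:
    """Business rule: no web-framework or ORM imports, only the port."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    order = repo.get(order_id)
    if order is None:
        raise KeyError(order_id)
    discounted = Order(order.id, order.total_cents * (100 - percent) // 100)
    repo.save(discounted)
    return discounted


class InMemoryOrderRepository:
    """Adapter at the edge; an ORM-backed adapter would expose the same methods."""
    def __init__(self) -> None:
        self._orders: dict[str, Order] = {}

    def get(self, order_id: str) -> Optional[Order]:
        return self._orders.get(order_id)

    def save(self, order: Order) -> None:
        self._orders[order.id] = order


repo = InMemoryOrderRepository()
repo.save(Order("o1", 2000))
print(apply_discount(repo, "o1", 15))  # Order(id='o1', total_cents=1700)
```

Swapping in a database-backed adapter would mean writing another class with the same `get` and `save` methods; `apply_discount` and its tests would not change.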
