Category: Business Technology - Page 2

Infrastructure Requirements for Serving Large Language Models in Production

Serving large language models in production requires specialized hardware, dynamic scaling, and smart cost optimization. Learn the real infrastructure needs (VRAM, GPUs, quantization, and hybrid cloud strategies) that make LLMs work at scale.

Knowledge Management with Generative AI: Answer Engines Over Enterprise Documents

Generative AI is transforming enterprise knowledge management by turning document repositories into intelligent answer engines that deliver accurate, sourced responses to natural language questions, cutting search time by up to 75% and accelerating onboarding by 50%.

LLM Evaluation Gates Before Switching from API to Self-Hosted

Before switching from an LLM API to self-hosting, organizations must pass strict performance, cost, and security gates. Learn the key thresholds, real-world failure rates, and the 7-step evaluation process that separates success from costly mistakes.

Latency and Cost in Multimodal Generative AI: How to Budget Across Text, Images, and Video

Multimodal AI can boost accuracy, but it also drives up costs and latency. Learn how to budget across text, images, and video by optimizing token use, choosing the right hardware, and avoiding common overspending traps.

Privacy-Aware RAG: How to Protect Sensitive Data When Using Large Language Models

Privacy-Aware RAG protects sensitive data in AI systems by removing PII before it reaches large language models. Learn how it works, why it's critical for compliance, and how to implement it without losing accuracy.

Human-in-the-Loop Operations for Generative AI: Review, Approval, and Exceptions

Human-in-the-loop operations for generative AI ensure AI outputs are reviewed, approved, and corrected by people before deployment. Learn how top companies use structured workflows to balance speed, safety, and compliance.

Human-in-the-Loop Review for Generative AI: Catching Errors Before Users See Them

Human-in-the-loop review catches AI hallucinations before users see them, reducing errors by up to 73%. Learn how top companies use confidence scoring, domain experts, and smart workflows to prevent costly mistakes.

Human-in-the-Loop Review for Generative AI: Catching Errors Before Users See Them

Human-in-the-loop review catches dangerous AI hallucinations before users see them. Learn how it works, where it saves money and lives, and why automated filters alone aren't enough.

Measuring Hallucination Rate in Production LLM Systems: Key Metrics and Real-World Dashboards

Learn how to measure hallucination rates in production LLM systems using real-world metrics like semantic entropy and RAGAS. Discover what works, what doesn’t, and how top companies are reducing factuality risks in 2025.

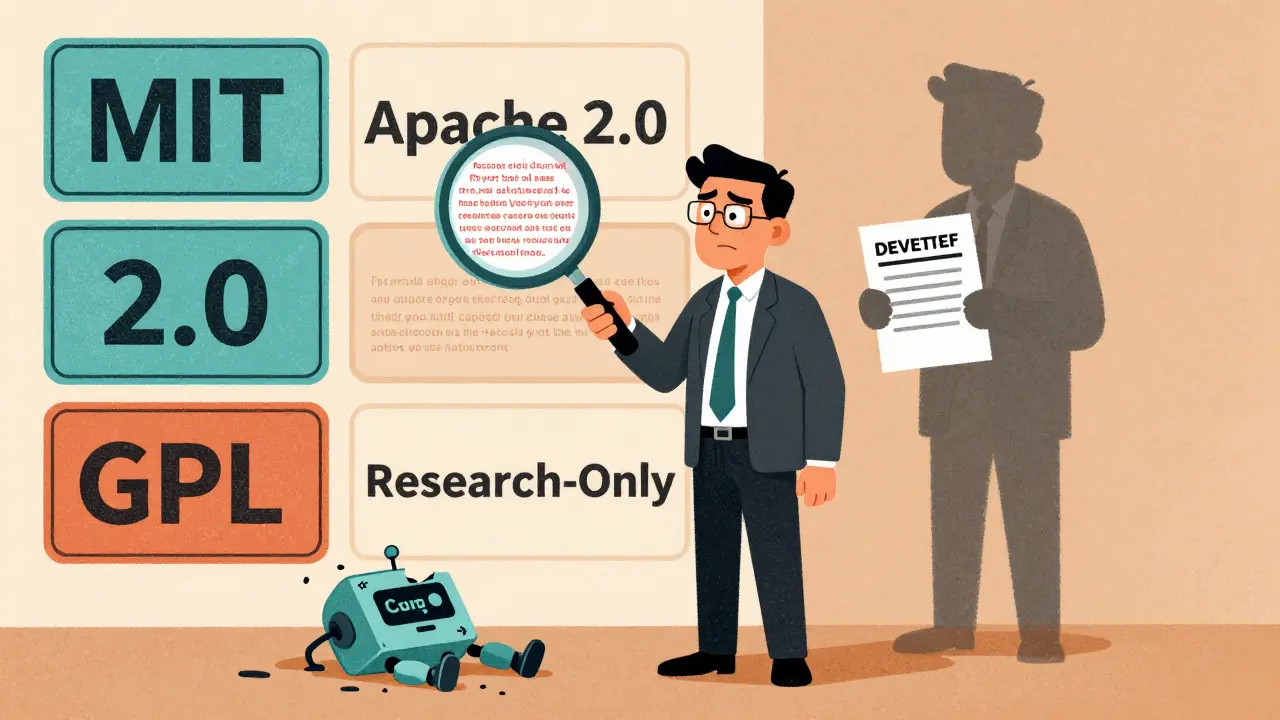

Legal and Licensing Considerations for Deploying Open-Source Large Language Models

Open-source LLMs can save millions in API costs, but only if you follow the license rules. Learn how MIT, Apache 2.0, and GPL licenses affect commercial use, training data risks, and compliance steps to avoid lawsuits.

Model Compression Economics: How Quantization and Distillation Cut LLM Costs by 90%

Quantization and distillation cut LLM inference costs by up to 95%, enabling affordable AI on edge devices and budget clouds. Learn how these techniques work, when to use them, and what hardware you need.

Retail and Generative AI: How AI Is Transforming Product Copy, Merchandising, and Visual Assets

Generative AI is transforming retail by automating product copy, personalizing merchandising, and creating virtual try-ons. Learn how top retailers are using AI to boost conversions, cut costs, and stay ahead without losing brand voice.
