Author: Calder Rivenhall - Page 5

Knowledge Management with Generative AI: Answer Engines Over Enterprise Documents

Generative AI is transforming enterprise knowledge management by turning document repositories into intelligent answer engines that deliver accurate, sourced responses to natural language questions - cutting search time by up to 75% and accelerating onboarding by 50%.

Security Risks in LLM Agents: Injection, Escalation, and Isolation

LLM agents can act autonomously, making them powerful but vulnerable to prompt injection, privilege escalation, and isolation failures. Learn how these attacks work and how to protect your systems before it's too late.

LLM Evaluation Gates Before Switching from API to Self-Hosted

Before switching from an LLM API to self-hosted, organizations must pass strict performance, cost, and security gates. Learn the key thresholds, real-world failure rates, and the 7-step evaluation process that separates success from costly mistakes.

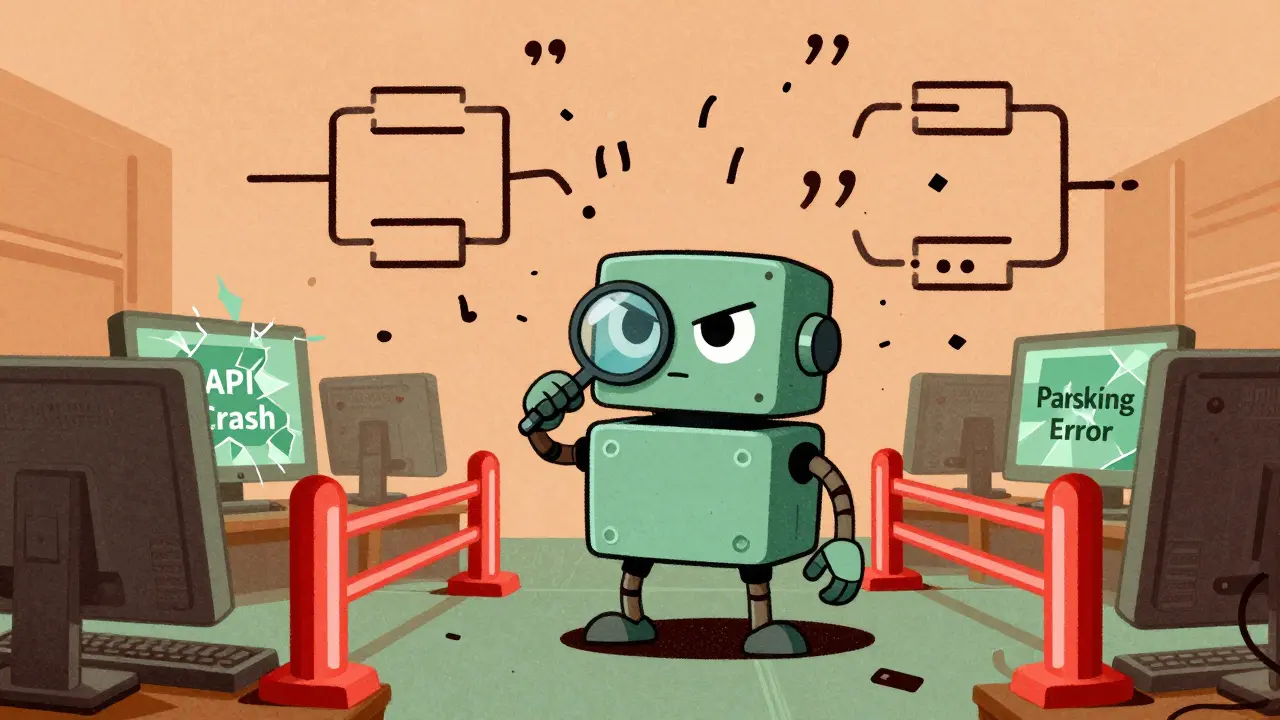

Constrained Decoding for LLMs: How JSON, Regex, and Schema Control Improve Output Reliability

Learn how constrained decoding ensures LLMs generate outputs that are valid JSON, match a regex, or comply with a schema, without manual fixes. See when it helps, when it hurts, and how to use it right.

Latency and Cost in Multimodal Generative AI: How to Budget Across Text, Images, and Video

Multimodal AI can boost accuracy but sharply increases cost and latency. Learn how to budget across text, images, and video by optimizing token use, choosing the right hardware, and avoiding common overspending traps.

RAG Failure Modes: Diagnosing Retrieval Gaps That Mislead Large Language Models

RAG systems often appear to work but quietly fail due to retrieval gaps that mislead large language models. Learn the 10 hidden failure modes - from embedding drift to citation hallucination - and how to detect them before they cause real damage.

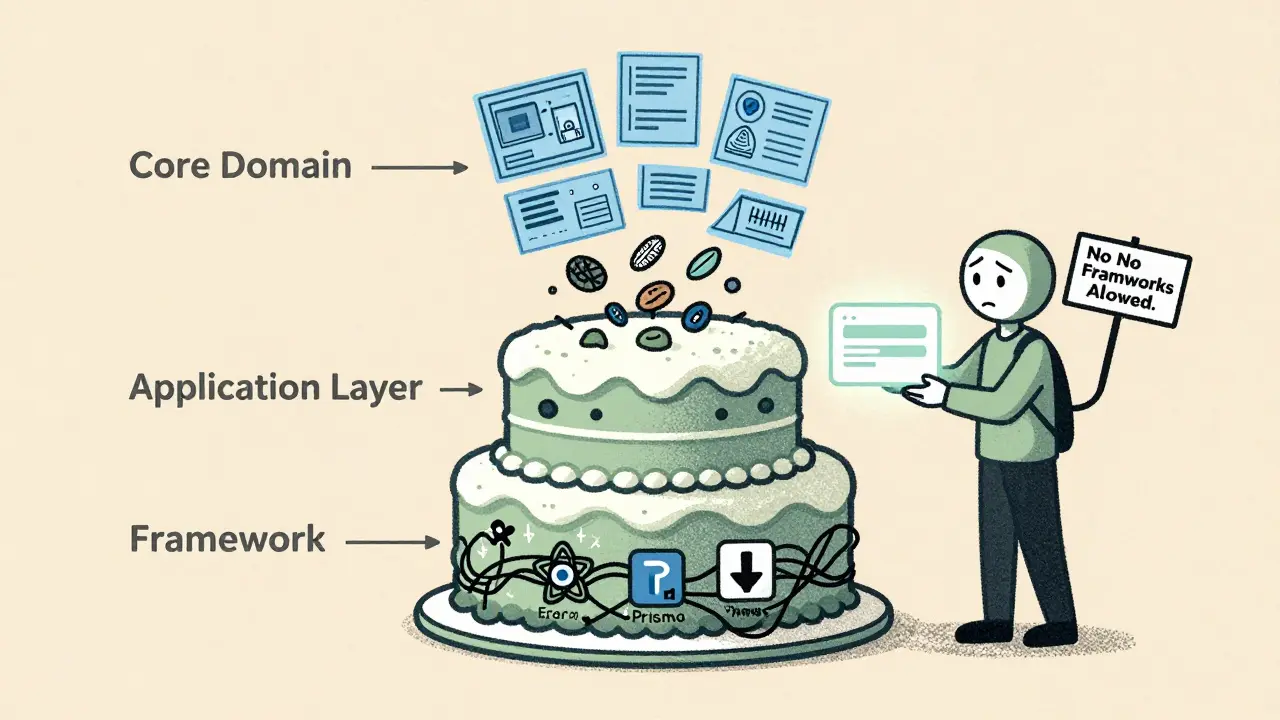

Clean Architecture in Vibe-Coded Projects: How to Keep Frameworks at the Edges

Clean Architecture keeps business logic separate from frameworks like React or Prisma. In vibe-coded projects, AI tools often mix them, leading to unmaintainable code. Learn how to enforce boundaries early and avoid framework lock-in.

Privacy-Aware RAG: How to Protect Sensitive Data When Using Large Language Models

Privacy-Aware RAG protects sensitive data in AI systems by removing PII before it reaches large language models. Learn how it works, why it's critical for compliance, and how to implement it without losing accuracy.

Productivity Uplift with Vibe Coding: What 74% of Developers Report

74% of developers report productivity gains with vibe coding, but real-world results vary wildly. Learn how AI coding tools actually impact speed, quality, and skill growth, and who benefits most.

Human-in-the-Loop Operations for Generative AI: Review, Approval, and Exceptions

Human-in-the-loop operations for generative AI ensure AI outputs are reviewed, approved, and corrected by people before deployment. Learn how top companies use structured workflows to balance speed, safety, and compliance.

Human-in-the-Loop Review for Generative AI: Catching Errors Before Users See Them

Human-in-the-loop review catches AI hallucinations before users see them, reducing errors by up to 73%. Learn how top companies use confidence scoring, domain experts, and smart workflows to prevent costly mistakes.

Human-in-the-Loop Review for Generative AI: Catching Errors Before Users See Them

Human-in-the-loop review catches dangerous AI hallucinations before users see them. Learn how it works, where it saves money and lives, and why automated filters alone aren't enough.
