Category: Technology - Page 2

Prompt Sensitivity in Large Language Models: Why Wording Changes Output

Small changes in how you phrase a prompt can drastically alter an AI's output. Learn why this happens, which models handle it best, and how to build more reliable prompts for real-world use.

Multilingual Performance of Large Language Models: How Transfer Learning Bridges Language Gaps

Multilingual large language models use transfer learning to understand multiple languages, but performance drops sharply for low-resource languages. Learn why, how new techniques like CSCL are helping, and what it means for global AI equity.

Privacy and Security Risks of Distilled Large Language Models - What You Must Know

Distilled LLMs are faster and cheaper, but they inherit the privacy risks of the larger models they are derived from. Learn how model compression creates hidden security flaws, and what you must do to protect your data.

Scaling for Reasoning: How Thinking Tokens Are Rewriting LLM Performance Rules

Thinking tokens are transforming how LLMs reason by targeting inference-time bottlenecks. Unlike traditional scaling, they boost accuracy on math and logic tasks without retraining, but at a high compute cost.

Why Multimodality Is the Next Big Leap in Generative AI

Multimodal AI combines text, images, audio, and video to understand context the way humans do, making generative AI smarter, faster, and more accurate than text-only systems. Here's how it's already changing healthcare, customer service, and marketing.

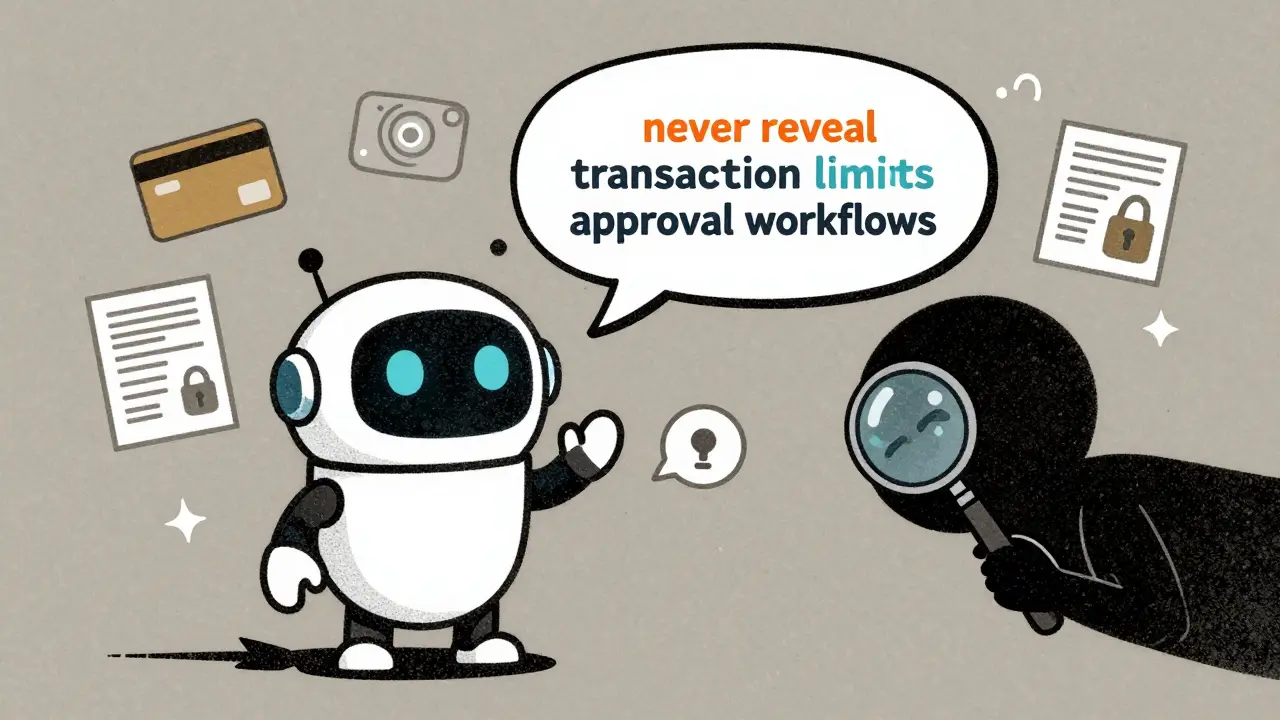

How to Prevent Sensitive Prompt and System Prompt Leakage in LLMs

System prompt leakage is now a top AI security threat, letting attackers steal hidden instructions from LLMs. Learn how to stop it with proven techniques like output filtering, instruction defense, and external guardrails.

Multimodal Generative AI: Models That Understand Text, Images, Video, and Audio

Multimodal generative AI understands text, images, audio, and video together, making it smarter than older AI systems. Learn how models like GPT-4o and Llama 4 work, where they're used, and why they're changing industries in 2025.

Model Parallelism and Pipeline Parallelism in Large Generative AI Training

Model and pipeline parallelism enable training of massive generative AI models by splitting them across multiple GPUs. Learn how these techniques overcome GPU memory limits and power models like GPT-3 and Claude 2.

Multi-Head Attention in Large Language Models: How Parallel Perspectives Power Modern AI

Multi-head attention lets large language models understand language from multiple angles at once, enabling breakthroughs in context, grammar, and meaning. Learn how it works, why it dominates AI, and what's next.

Confidential Computing for LLM Inference: How TEEs and Encryption-in-Use Protect AI Models and Data

Confidential computing uses hardware-based Trusted Execution Environments to protect LLM models and user data during inference. Learn how encryption-in-use with TEEs from NVIDIA, Azure, and Red Hat solves the AI privacy paradox for enterprises.

Error Analysis for Prompts in Generative AI: Diagnosing Failures and Fixes

Error analysis for prompts in generative AI helps diagnose why AI models give wrong answers and how to fix them. Learn the five-step process, key metrics, and tools that cut hallucinations by up to 60%.

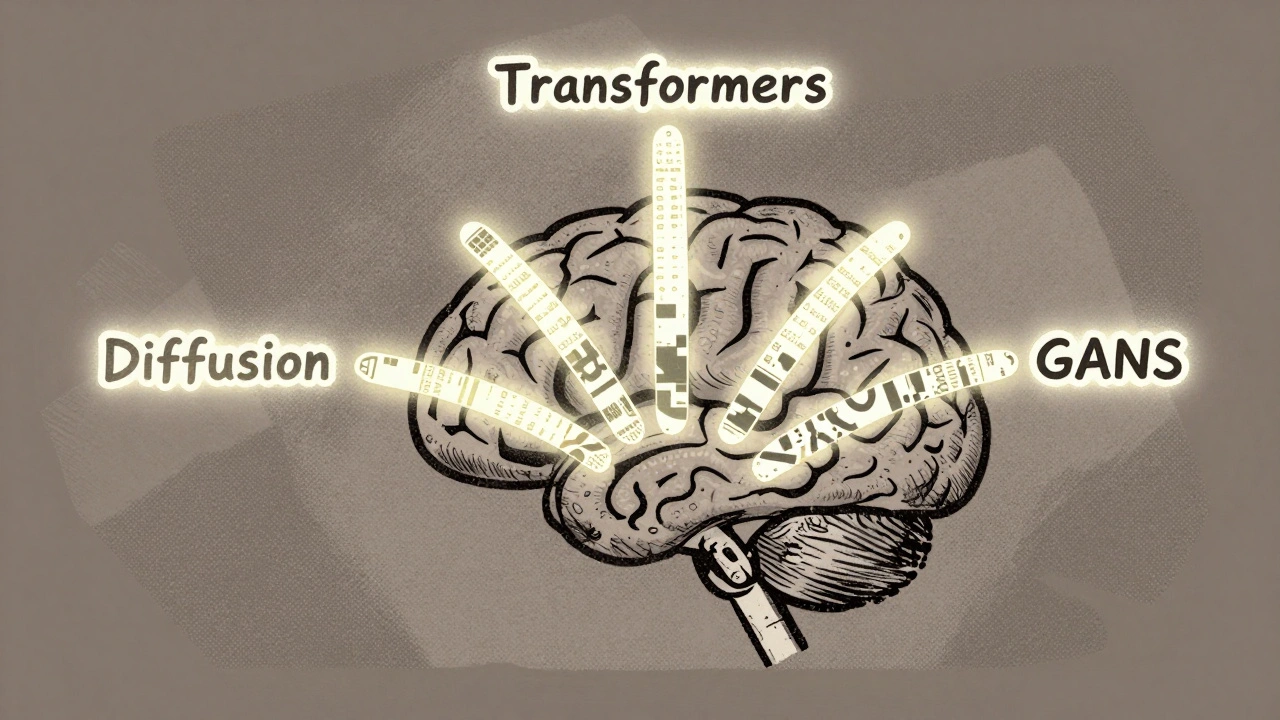

Foundational Technologies Behind Generative AI: Transformers, Diffusion Models, and GANs Explained

Transformers, Diffusion Models, and GANs are the three core technologies behind today's generative AI. Learn how each works, where they excel, and which one to use for text, images, or real-time video.
