Imagine trying to translate a complex sentence from French to English without ever looking back at the original text. You’d have to rely entirely on your memory of the first few words, hoping they contained all the necessary context for the rest of the sentence. That’s essentially what happens in neural networks when we lack a proper mechanism to connect input and output sequences dynamically. This is where Cross-Attention comes in. It is the critical bridge that allows decoder layers in transformer models to condition their outputs on information processed by the encoder.

In the world of large language models (LLMs) and sequence-to-sequence tasks, cross-attention isn’t just a nice-to-have feature; it’s the engine that makes accurate translation, summarization, and multimodal understanding possible. Without it, decoders would be blind to the specific details of the source data after the initial encoding phase. Let’s break down how this mechanism works, why it’s structured the way it is, and why you should care if you’re building or using modern AI systems.

The Anatomy of Cross-Attention

To understand cross-attention, we first need to look at the standard Transformer Architecture, which was introduced in the seminal paper "Attention Is All You Need." The architecture splits processing into two main components: an encoder and a decoder. The encoder processes the input sequence (like a French sentence), while the decoder generates the output sequence (the English translation).

Inside each decoder layer, there are three distinct sub-layers arranged in a specific order:

- Masked Self-Attention: The decoder looks at its own previous outputs. The "mask" ensures it only sees tokens generated up to the current position, preventing it from "cheating" by peeking at future tokens during training.

- Cross-Attention: This is the star of our show. Here, the decoder queries the encoder’s output to gather relevant context.

- Feed-Forward Network: A final step that processes the combined information from self-attention and cross-attention.

This ordering matters. First, the decoder refines its own internal state. Then, it reaches out to the encoder via cross-attention to pull in external context. Finally, the feed-forward network synthesizes everything. Because self-attention runs first, each query sent to the encoder already reflects what has been generated so far, which helps the model ground its next token in the right part of the source material.
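The three sub-layers can be sketched in a few lines of NumPy. This is a minimal single-head illustration, not a full implementation: the learned Q/K/V projections and layer-norm parameters are left out, and the helper names (`attention`, `layer_norm`, `decoder_layer`) and weight matrices (`w_ff1`, `w_ff2`) are made up for the example. The residual connection and normalization around each sub-layer follow the original Transformer design.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical safety
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v, mask=None):
    # Scaled dot-product attention; mask is True where attending is allowed.
    scores = q @ k.T / np.sqrt(q.shape[-1])
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)
    return softmax(scores) @ v

def layer_norm(x, eps=1e-5):
    # Normalization without learned gain/bias (simplification for the sketch).
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def decoder_layer(dec_x, enc_out, w_ff1, w_ff2):
    t = dec_x.shape[0]
    causal = np.tril(np.ones((t, t), dtype=bool))  # position i sees positions <= i
    # 1. Masked self-attention over the decoder's own sequence.
    x = layer_norm(dec_x + attention(dec_x, dec_x, dec_x, mask=causal))
    # 2. Cross-attention: queries from the decoder, keys/values from the encoder.
    x = layer_norm(x + attention(x, enc_out, enc_out))
    # 3. Position-wise feed-forward network with a ReLU.
    x = layer_norm(x + np.maximum(0, x @ w_ff1) @ w_ff2)
    return x

rng = np.random.default_rng(0)
dec_x = rng.normal(size=(4, 8))     # 4 decoder positions, width 8
enc_out = rng.normal(size=(6, 8))   # 6 encoder positions, width 8
w_ff1 = rng.normal(size=(8, 32))
w_ff2 = rng.normal(size=(32, 8))
out = decoder_layer(dec_x, enc_out, w_ff1, w_ff2)
print(out.shape)
```

Because of the causal mask, changing a later decoder token leaves the outputs at earlier positions untouched, while every position can still read the whole encoder output.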

How Queries, Keys, and Values Interact

Cross-attention operates using the same mathematical foundation as self-attention but with a twist in where the vectors come from. In self-attention, queries, keys, and values all originate from the same sequence. In cross-attention, they come from different places:

- Queries (Q): Generated from the decoder’s current state. These represent what the decoder is currently trying to generate or predict.

- Keys (K) and Values (V): Generated from the encoder’s output. These represent the available information from the source sequence.

Think of it like a library search. The decoder has a question (Query). The encoder provides the catalog of books (Keys) and the actual content of those books (Values). The cross-attention mechanism calculates how well each book matches the question. It computes the dot product of every query with every key (the matrix product $QK^T$), scales the result by $1/\sqrt{d_k}$ to keep gradients stable, and applies a softmax function across the keys to get attention weights. These weights determine how much of each Value vector contributes to the final output.
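Written out as a formula, the computation looks roughly like the following. The symbols $X_{\text{dec}}$ (decoder states), $H_{\text{enc}}$ (encoder output), and the projection matrices $W_Q$, $W_K$, $W_V$ are notation introduced here for illustration:

$$
Q = X_{\text{dec}} W_Q, \qquad K = H_{\text{enc}} W_K, \qquad V = H_{\text{enc}} W_V
$$

$$
\mathrm{CrossAttention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^T}{\sqrt{d_k}}\right) V
$$

The softmax is taken over the encoder positions, so each decoder position gets its own set of weights that sum to 1.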

This asymmetry, where Q comes from one place and K/V from another, is what enables conditioning. The decoder actively seeks out relevant parts of the encoder’s representation rather than passively receiving a fixed summary.
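Here is a minimal NumPy sketch of that asymmetry for a single attention head. The function name `cross_attention`, the projection matrices `w_q`, `w_k`, `w_v`, and the toy dimensions are assumptions made for the example; real models learn the projections and run several heads in parallel.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical safety
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(dec_states, enc_out, w_q, w_k, w_v):
    q = dec_states @ w_q                      # (T_dec, d_k): what the decoder asks
    k = enc_out @ w_k                         # (T_enc, d_k): what the encoder offers
    v = enc_out @ w_v                         # (T_enc, d_v): content to retrieve
    scores = q @ k.T / np.sqrt(k.shape[-1])   # (T_dec, T_enc)
    weights = softmax(scores, axis=-1)        # each row sums to 1 over encoder positions
    return weights @ v, weights

rng = np.random.default_rng(0)
d_model, d_k = 16, 8
dec_states = rng.normal(size=(3, d_model))    # 3 decoder positions
enc_out = rng.normal(size=(5, d_model))       # 5 encoder positions
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

context, weights = cross_attention(dec_states, enc_out, w_q, w_k, w_v)
print(context.shape, weights.shape)           # (3, 8) (3, 5)
```

The weight matrix has one row per decoder position and one column per encoder position, which is exactly the "which source token matters for this output token" table.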

Cross-Attention vs. Self-Attention: What’s the Difference?

It’s easy to confuse these two mechanisms since they share similar math. However, their roles are fundamentally different:

| Feature | Self-Attention | Cross-Attention |
|---|---|---|
| Source of Q, K, V | All from the same sequence | Q from Decoder; K, V from Encoder |
| Purpose | Understand relationships within a single sequence (e.g., syntax) | Align and map information between two different sequences |
| Location in Transformer | Both Encoder and Decoder | Decoder Only |
| Masking | Often masked in Decoder to prevent future leakage | Uses padding masks to ignore invalid encoder positions |

Self-attention helps a model understand that "bank" in "river bank" refers to land, not money, by looking at surrounding words. Cross-attention helps a model decide that the French word "rivière" should trigger the English concept of "river" by attending to the corresponding part of the encoded French sentence.
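One way to see the difference is that the same function covers both cases; only the inputs change. The sketch below uses a hypothetical helper `attention_weights` and random vectors as stand-ins for encoded French and partially decoded English tokens, with the learned projections omitted.

```python
import numpy as np

def attention_weights(query_seq, key_seq):
    # Softmax over scaled dot products between every query and every key.
    scores = query_seq @ key_seq.T / np.sqrt(query_seq.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)
    w = np.exp(scores)
    return w / w.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
french = rng.normal(size=(5, 8))    # stand-in for 5 encoder states
english = rng.normal(size=(3, 8))   # stand-in for 3 decoder states

self_w = attention_weights(french, french)     # same sequence on both sides
cross_w = attention_weights(english, french)   # decoder queries, encoder keys

print(self_w.shape)    # (5, 5): square, sequence against itself
print(cross_w.shape)   # (3, 5): rectangular, target against source
```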

Why Cross-Attention Matters for Multimodal AI

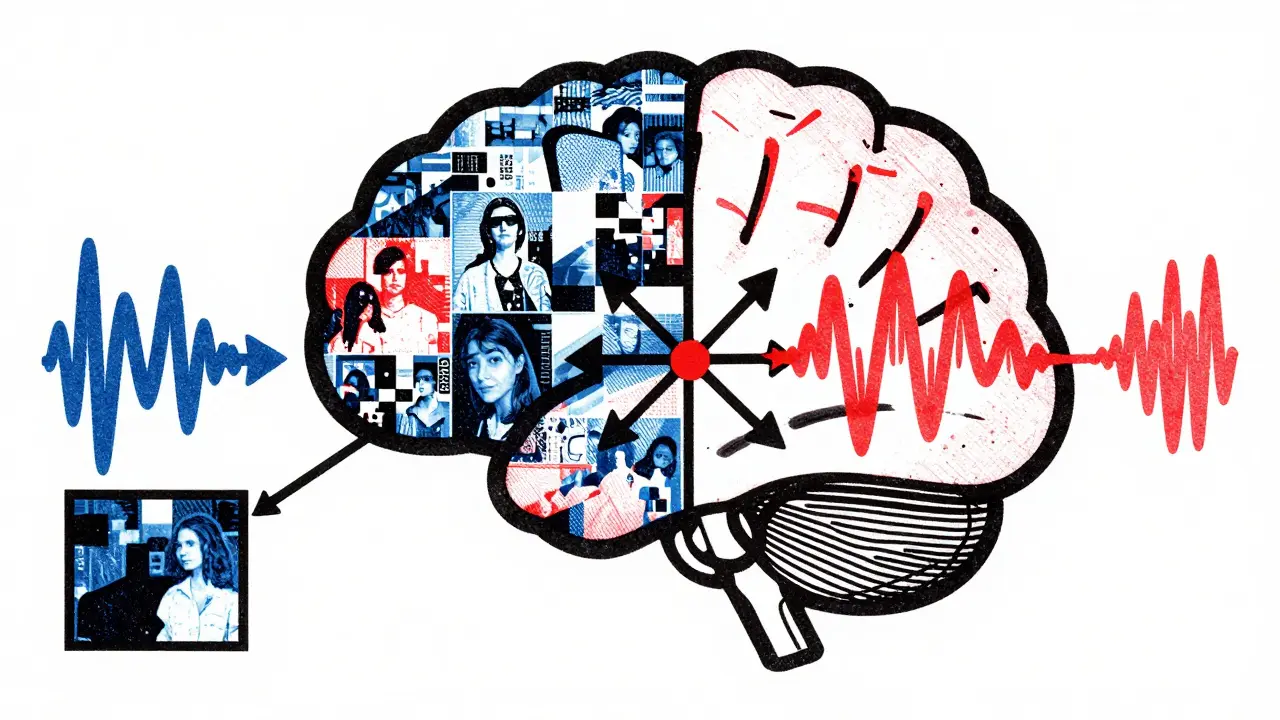

While cross-attention started in machine translation, its real power shines in Multimodal Learning. Today’s AI doesn’t just process text. It handles images, audio, and video simultaneously. How does a model know which part of an image corresponds to which word in a caption? Cross-attention.

In multimodal architectures, you might have separate encoders for vision and language. The decoder can use cross-attention to attend to both visual features and textual tokens. There are two common ways to implement this:

- Concatenated KV Pairs: Combine visual and textual encoder outputs into a single sequence of keys and values. The decoder attends to this mixed pool collectively.

- Separate Cross-Attention Layers: Use one cross-attention layer for visual context and another for textual context. This gives finer control over how each modality influences the output.
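Both patterns can be sketched with toy arrays. The example assumes the vision and text encoders already project to the same width `d`, skips learned projections, and stacks the two separate layers sequentially with residual connections, which is only one of several possible arrangements. All names here are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attend(q, k, v):
    return softmax(q @ k.T / np.sqrt(q.shape[-1])) @ v

rng = np.random.default_rng(0)
d = 8
dec = rng.normal(size=(4, d))   # 4 decoder positions
img = rng.normal(size=(9, d))   # e.g. 9 image patch features
txt = rng.normal(size=(6, d))   # 6 text token features

# Pattern 1: concatenate both modalities into one key/value pool.
kv = np.concatenate([img, txt], axis=0)        # (15, d)
out_concat = attend(dec, kv, kv)

# Pattern 2: one cross-attention step per modality, applied in sequence.
h = dec + attend(dec, img, img)                # visual context first
out_separate = h + attend(h, txt, txt)         # then textual context

print(out_concat.shape, out_separate.shape)    # (4, 8) (4, 8)
```

In the concatenated version, image patches and text tokens compete inside a single softmax. In the separate version, each modality gets its own attention distribution, which is where the finer control comes from.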

Libraries like Hugging Face Transformers ship encoder-decoder and vision-language models built on these patterns, making it easier for developers to build models that can describe images, answer questions about documents, or generate code from diagrams.

Practical Challenges: Masks and Stability

Implementing cross-attention isn’t just about writing the matrix multiplications. You have to handle edge cases carefully.

Padding Masks: Input sequences often vary in length. To batch them efficiently, shorter sequences are padded with special tokens. If the decoder attends to these padding tokens, it introduces noise. Cross-attention applies a mask to set the attention scores of padding positions to negative infinity before the softmax step. This ensures the model ignores irrelevant padding and focuses only on actual content.
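A minimal sketch of the masking step is shown below. The function name `masked_cross_attention` and the boolean array `key_is_valid` are assumptions for the example, and the code assumes every source sequence has at least one real token; a fully masked row would produce NaNs.

```python
import numpy as np

def masked_cross_attention(q, k, v, key_is_valid):
    scores = q @ k.T / np.sqrt(q.shape[-1])                     # (T_dec, T_enc)
    # Padding positions get -inf so they receive exactly zero weight.
    scores = np.where(key_is_valid[None, :], scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
q = rng.normal(size=(2, 4))   # 2 decoder positions
k = rng.normal(size=(5, 4))   # 5 encoder positions, the last 2 are padding
v = rng.normal(size=(5, 4))
key_is_valid = np.array([True, True, True, False, False])

out, w = masked_cross_attention(q, k, v, key_is_valid)
print(w.round(3))             # the last two columns are all zeros
```

Since the padded columns carry zero weight, whatever values sit in the padded rows of `k` and `v` have no effect on the output.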

Numerical Stability: The scaling factor $1/\sqrt{d_k}$ is not optional. Without it, dot products between high-dimensional vectors can become very large. Large values cause the softmax function to saturate, resulting in near-zero gradients. This stalls learning. Scaling keeps the values in a range where gradients flow smoothly, allowing the model to train effectively.
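The effect is easy to observe numerically. The sketch below assumes queries and keys with roughly unit-variance components, in which case raw dot products have a standard deviation near $\sqrt{d_k}$. It compares how peaked the softmax is with and without scaling; the sizes and variable names are arbitrary choices for the demonstration.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

rng = np.random.default_rng(0)
d_k = 512
q = rng.normal(size=(100, d_k))   # 100 queries with unit-variance entries
k = rng.normal(size=(10, d_k))    # 10 keys with unit-variance entries

raw = q @ k.T                     # spread grows like sqrt(d_k)
scaled = raw / np.sqrt(d_k)       # spread stays near 1

# Average of the largest attention weight per query: near 1.0 means saturated.
peak_raw = softmax(raw).max(axis=-1).mean()
peak_scaled = softmax(scaled).max(axis=-1).mean()

print("std of raw scores:   ", raw.std())
print("std of scaled scores:", scaled.std())
print("mean peak weight, unscaled:", peak_raw)
print("mean peak weight, scaled:  ", peak_scaled)
```

Without scaling, most queries put nearly all of their weight on a single key, which is the regime where softmax gradients become tiny.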

The Bottleneck Problem: Why We Can’t Just Use Fixed Context

You might wonder: why not just compress the entire encoder output into a single vector and pass that to the decoder? Early sequence-to-sequence models built on LSTMs did exactly this. But this creates a severe bottleneck. A fixed-size vector cannot capture all the nuances of long or complex inputs, so information gets lost.

Cross-attention solves this by allowing the decoder to access the full encoder output at every step. Instead of relying on a compressed summary, the decoder can look up specific details as needed. For example, when translating a long legal document, the decoder might need to reference a clause mentioned ten sentences earlier. Cross-attention lets it jump directly to that part of the encoder’s representation, maintaining precision over long distances.
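The contrast can be illustrated with a toy example. Mean pooling is used here purely as a stand-in for a fixed summary vector (an LSTM encoder would produce its final hidden state instead), and the random arrays are placeholders for real encoder and decoder states.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

rng = np.random.default_rng(0)
enc_out = rng.normal(size=(6, 8))       # 6 encoder positions
dec_queries = rng.normal(size=(3, 8))   # 3 decoding steps

# Fixed context: one summary vector, reused unchanged at every decoding step.
fixed_context = np.repeat(enc_out.mean(axis=0, keepdims=True), 3, axis=0)

# Cross-attention: each decoding step builds its own context from all positions.
weights = softmax(dec_queries @ enc_out.T / np.sqrt(enc_out.shape[-1]))
attn_context = weights @ enc_out

print(np.allclose(fixed_context[0], fixed_context[2]))   # True: same vector each step
print(np.allclose(attn_context[0], attn_context[2]))     # False: context varies per step
```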

Future Directions: Efficiency and Sparse Attention

As models grow larger, cross-attention becomes computationally expensive. Because every decoder token attends to every encoder token, the cost scales with the product of the decoder and encoder sequence lengths, which is quadratic when both grow together. Researchers are exploring solutions:

- Sparse Attention: Limiting the number of encoder positions each decoder token attends to, based on heuristics or learned patterns.

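- Efficient Variants: Using IO-aware implementations like FlashAttention, which compute exact attention but reorganize memory access to run faster, alongside approximate methods that trade a small amount of accuracy for speed.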

- Hierarchical Processing: Lower decoder layers handle basic alignments, while higher layers focus on abstract relationships, reducing redundant calculations.

These innovations aim to keep cross-attention fast enough for real-time applications like live translation or interactive chatbots.
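A back-of-the-envelope count shows why the sequence lengths matter. The helper `score_entries` and its `window` parameter are hypothetical: `window` models a sparse scheme in which each decoder token attends to a fixed budget of encoder positions, and the count covers a single attention head.

```python
def score_entries(t_dec, t_enc, window=None):
    """Number of query-key scores one cross-attention head computes."""
    per_query = t_enc if window is None else min(window, t_enc)
    return t_dec * per_query

dense = score_entries(1024, 1024)                 # 1_048_576
doubled = score_entries(2048, 2048)               # 4_194_304, 4x for 2x the length
sparse = score_entries(2048, 2048, window=128)    # 262_144

print(dense, doubled, sparse)
```

Doubling both lengths quadruples the dense cost, while a fixed window makes the cost grow only linearly with the decoder length.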

What is the primary role of cross-attention in a transformer model?

The primary role of cross-attention is to allow the decoder to condition its output generation on the encoder’s processed input. It acts as a bridge, enabling the decoder to retrieve relevant context from the source sequence dynamically, which is essential for tasks like machine translation and multimodal learning.

How does cross-attention differ from self-attention?

In self-attention, queries, keys, and values all come from the same sequence, helping the model understand internal relationships. In cross-attention, queries come from the decoder, while keys and values come from the encoder, allowing the model to align and map information between two different sequences.

Why is the scaling factor $1/\sqrt{d_k}$ important in cross-attention?

The scaling factor prevents dot products from becoming excessively large, which would cause softmax outputs to saturate and gradients to vanish. By keeping values in a stable range, it ensures smooth gradient flow during backpropagation, facilitating effective training.

Can cross-attention be used in multimodal models?

Yes, cross-attention is foundational in multimodal AI. It allows decoders to attend to features from multiple modalities, such as images and text, either by concatenating their key-value pairs or using separate cross-attention layers for each modality.

What happens if padding masks are not applied in cross-attention?

Without padding masks, the decoder might attend to padding tokens added to match sequence lengths. This introduces noise and distracts the model from actual content, leading to poorer performance and inaccurate predictions.