Tag: encoder-decoder transformers

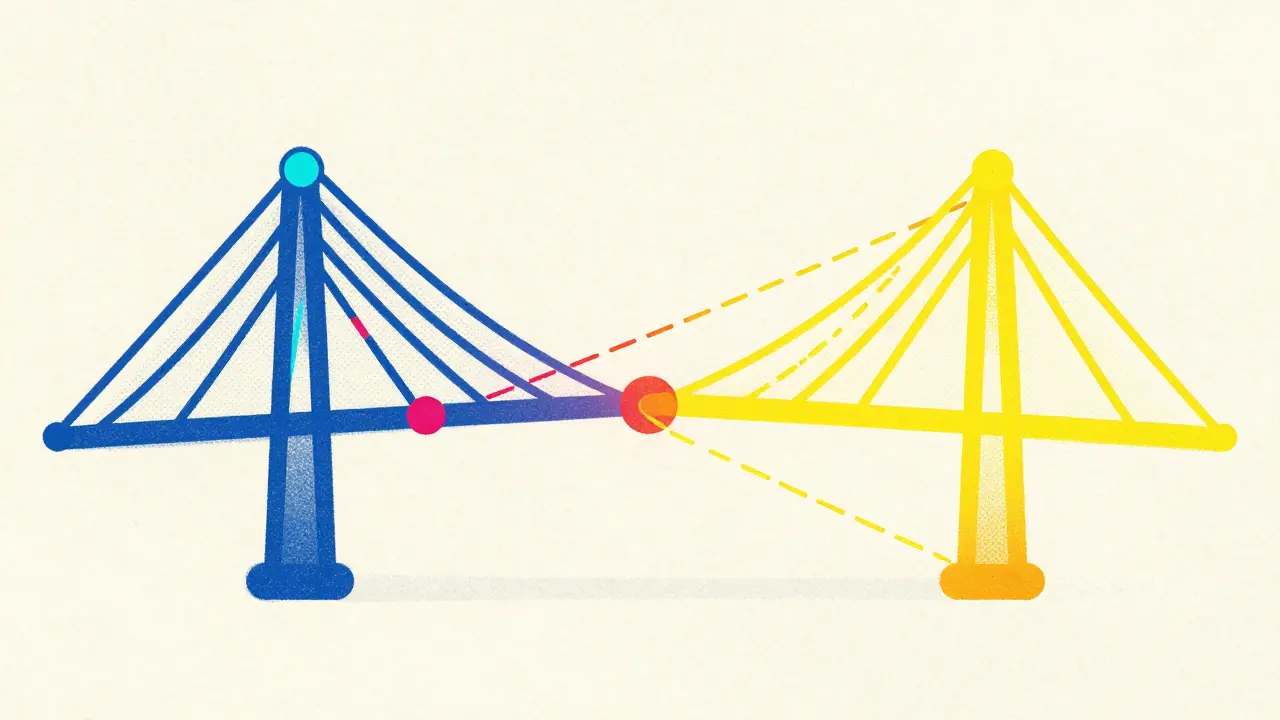

Cross-Attention in Encoder-Decoder Transformers: When LLMs Need Conditioning

Explore how cross-attention bridges the encoder and decoder in transformers, letting the decoder condition on the encoded input for tasks such as translation and multimodal AI.
