When an AI assistant tells you that a specific medication interacts with your current prescription, you don't just want to nod and move on. You need proof. In the world of Large Language Models, which are advanced AI systems capable of generating human-like text based on vast amounts of training data, this demand for proof is driving a massive shift toward source citation and evidence linking. It’s no longer enough for an AI to sound confident; it needs to show its work.

The core problem isn’t just hallucinations, those wild guesses where an AI makes up facts. The deeper issue is trust. Users, regulators, and professionals need to audit the reliability of AI statements. Whether it’s a doctor checking medical guidelines or a student verifying a historical date, the ability to trace a claim back to a credible source is what separates a useful tool from a dangerous liability. This article breaks down how these citation systems work, why they matter, and how they’re built to keep AI honest.

Why Source Attribution Is Non-Negotiable

Think about the last time you read a news article without sources. You probably scrolled past it or questioned its validity. With AI, the stakes are higher because the output is generated in real-time, often blending internal knowledge with live web searches. When an LLM combines parametric knowledge (what it learned during training) with non-parametric data (real-time online research), the resulting mix can be messy if not properly attributed.

In high-stakes fields like healthcare, law, and finance, vague answers aren’t an option. If an AI provides advice on tax deductions, it must cite the specific code section. If it suggests a treatment plan, it needs to reference peer-reviewed studies. Without this link to evidence, users cannot verify if the information is current, accurate, or biased. This verification process is essential for mitigating intellectual property issues and ethical concerns, ensuring that the AI isn’t just parroting misinformation but building arguments on solid ground.

How LLMs Find and Link Sources

So, how does an AI actually attach a link to a sentence? It’s not magic; it’s a complex pipeline involving several technical phases. Most modern systems use a combination of pre-hoc and post-hoc methods to handle citations.

Pre-hoc citation happens before the content is fully generated. The system identifies potential sources inline as it drafts the response. Imagine a librarian pulling books off the shelf while writing a summary. This method ensures that the evidence is present from the start, reducing the chance of making unsupported claims later.

Post-hoc citation occurs after the content is created. Here, the AI evaluates its own output against retrieved documents to refine and integrate citations. It’s like writing an essay first and then adding footnotes to support your arguments. A mixed approach, using both methods, is currently considered best practice. It creates robust content by initially retrieving relevant sources and then rigorously evaluating them against the final text.
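To make the post-hoc idea concrete, here is a minimal sketch in Python. It assumes the answer has already been generated and split into sentences, and it uses plain token overlap as a stand-in for whatever support check a production system would run. The function names, the `threshold` value, and the sample texts are all hypothetical.

```python
import re

def tokens(text):
    """Lowercased word set; a crude stand-in for a real support check."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap(claim, source):
    """Fraction of the claim's tokens that also appear in the source."""
    claim_tokens = tokens(claim)
    if not claim_tokens:
        return 0.0
    return len(claim_tokens & tokens(source)) / len(claim_tokens)

def cite_post_hoc(sentences, sources, threshold=0.6):
    """Attach the best-matching source ID to each sentence.

    Sentences whose best match falls below the (assumed) threshold
    get None, flagging them as unsupported instead of forcing a citation.
    """
    cited = []
    for sentence in sentences:
        best_id, best_score = max(
            ((sid, overlap(sentence, text)) for sid, text in sources.items()),
            key=lambda pair: pair[1],
        )
        cited.append((sentence, best_id if best_score >= threshold else None))
    return cited

sources = {
    "S1": "Aspirin can increase bleeding risk when taken with warfarin.",
    "S2": "Store tablets at room temperature.",
}
draft = [
    "Aspirin taken with warfarin can increase bleeding risk.",
    "Warfarin cures headaches.",
]
for sentence, source_id in cite_post_hoc(draft, sources):
    print(source_id, "|", sentence)
```

Leaving a sentence uncited when nothing supports it is a deliberate choice in this sketch: it avoids retrofitting a weak source onto a claim, which is the main risk of the post-hoc approach.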

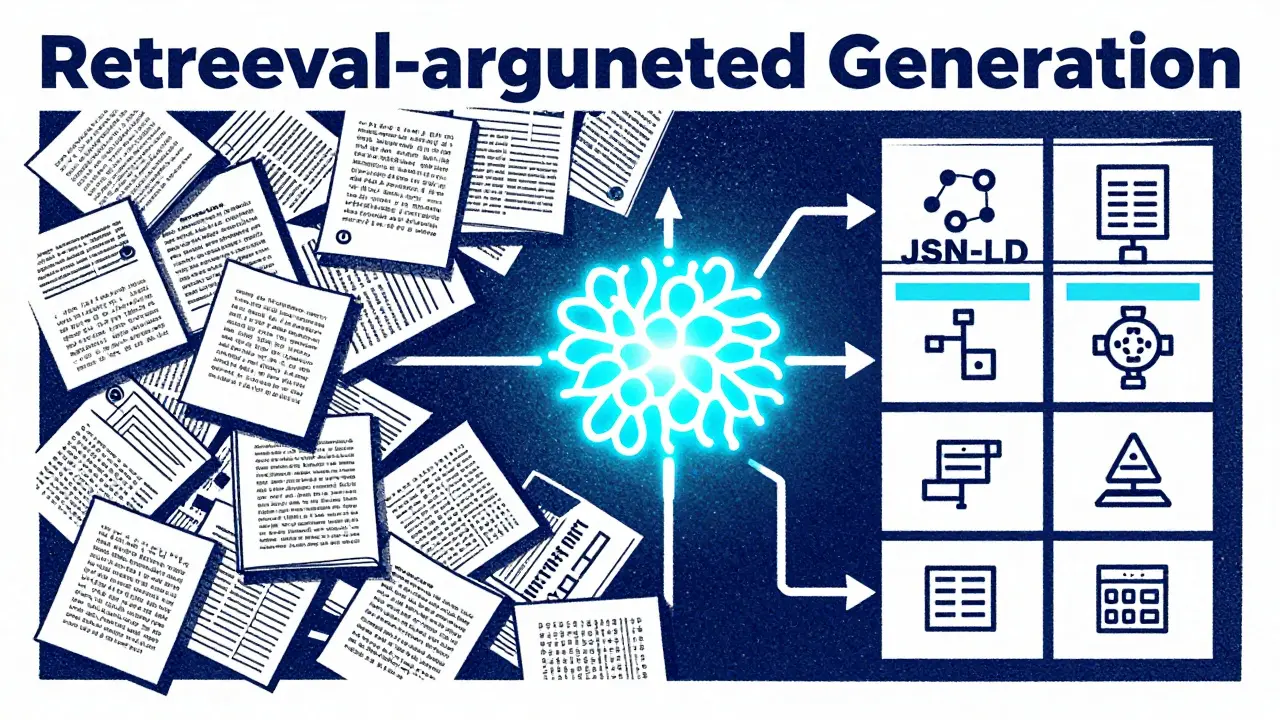

Under the hood, this relies heavily on Retrieval-Augmented Generation (RAG), a technical architecture that enhances LLMs by retrieving external information before generating responses. A typical RAG pipeline splits source documents into searchable chunks. When you ask a question, a semantic search engine finds the most relevant chunks, and the LLM uses those specific snippets to construct its answer, citing the exact paragraph where the info came from.
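The chunk-and-retrieve step can be sketched in a few lines of Python. This is an illustration under simplifying assumptions, not a production design: word-count cosine similarity replaces a real semantic (embedding-based) search, chunks are split on blank lines, and the document text and chunk ID scheme are made up for the example.

```python
import math
import re
from collections import Counter

def chunk_paragraphs(doc_id, text):
    """Split a document into paragraph chunks, each with a citable ID."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    return [{"id": f"{doc_id}#p{i + 1}", "text": p} for i, p in enumerate(paragraphs)]

def term_counts(text):
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, chunks, k=1):
    """Return the k chunks most similar to the query (assumed scoring)."""
    q = term_counts(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, term_counts(c["text"])), reverse=True)
    return ranked[:k]

DOC = (
    "Warfarin is an anticoagulant.\n\n"
    "Aspirin can increase bleeding risk when taken with warfarin.\n\n"
    "Store tablets at room temperature."
)
chunks = chunk_paragraphs("leaflet", DOC)
top = retrieve("Does aspirin interact with warfarin?", chunks, k=1)
# The chunk ID travels with the text, so the answer can cite the exact paragraph.
print(top[0]["id"], "->", top[0]["text"])
```

Because every chunk carries its own ID, whatever the model writes from that snippet can point back to the specific paragraph rather than to the document as a whole.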

The Role of Structured Data in Evidence Weighting

Not all sources are created equal, and citation systems treat them accordingly. The quality of a citation depends heavily on how well-structured the source material is. This is where structured data becomes critical: machine-readable markup added to web pages, using the Schema.org vocabulary in formats such as JSON-LD or RDFa, that helps search engines and other systems understand what the content is about.

When an LLM scans a webpage, it doesn’t just read text. It looks for structured signals. If a page has clear entity anchors, Q-IDs, or sameAs properties, the AI can verify connections between different pieces of information much faster and more accurately. For example, if two articles mention "Apple Inc." with the same unique identifier, the AI understands they refer to the same company, enabling multi-hop reasoning across documents.
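As a rough illustration of the "same identifier, same entity" check, the sketch below parses two JSON-LD snippets and compares their `sameAs` values. The snippets, the helper names, and the `example.org` URL are invented for the example; how a given LLM pipeline actually consumes this markup is not something this sketch claims to reproduce.

```python
import json

PAGE_A = """{"@context": "https://schema.org", "@type": "Organization",
  "name": "Apple Inc.", "sameAs": ["https://www.wikidata.org/wiki/Q312"]}"""

PAGE_B = """{"@context": "https://schema.org", "@type": "Corporation",
  "name": "Apple", "sameAs": "https://www.wikidata.org/wiki/Q312"}"""

# Hypothetical page about the fruit, with a made-up identifier URL.
PAGE_C = """{"@context": "https://schema.org", "@type": "Thing",
  "name": "Apple", "sameAs": "https://example.org/entity/apple-fruit"}"""

def same_as_ids(jsonld_text):
    """Collect sameAs URLs; the property may be a single string or a list."""
    data = json.loads(jsonld_text)
    value = data.get("sameAs", [])
    return {value} if isinstance(value, str) else set(value)

def refer_to_same_entity(a, b):
    """True when the two snippets share at least one sameAs identifier."""
    return bool(same_as_ids(a) & same_as_ids(b))

print(refer_to_same_entity(PAGE_A, PAGE_B))  # shared identifier
print(refer_to_same_entity(PAGE_A, PAGE_C))  # same name, different entity
```

Note that pages B and C carry the same `name`; only the identifier separates the company from the fruit, which is the point of anchoring entities explicitly.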

This structured data increases the evidential value of a source. During the evidence weighting phase, the AI decides which sources get visibility in the final response. It resolves contradictions by weighing credibility and structural connectivity. A source with rich, explicit metadata is far more likely to be cited than a plain-text blog post, even if the plain text contains similar information. This shifts the focus from simple keyword matching to deep semantic understanding.
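One way to picture evidence weighting is as a scoring function over candidate sources. The formula below is purely an assumption for illustration: the article does not specify how any real system combines these signals, and the `structure_bonus` value, field names, and URLs are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Source:
    url: str
    relevance: float          # 0..1, how well the source matches the question
    credibility: float        # 0..1, assumed prior trust in the publisher
    has_structured_data: bool # explicit markup such as JSON-LD present

def evidence_weight(source, structure_bonus=0.2):
    """Assumed scoring rule: relevance times credibility, plus a flat
    bonus when the page exposes structured data."""
    base = source.relevance * source.credibility
    return base + (structure_bonus if source.has_structured_data else 0.0)

def rank(sources):
    """Order candidate sources from strongest to weakest evidence."""
    return sorted(sources, key=evidence_weight, reverse=True)

candidates = [
    Source("https://example.org/plain-blog", 0.8, 0.7, False),
    Source("https://example.org/marked-up-reference", 0.8, 0.7, True),
]
for s in rank(candidates):
    print(round(evidence_weight(s), 2), s.url)
```

With identical relevance and credibility, the marked-up page ranks first here, mirroring the claim that structure can tip the balance between otherwise similar sources.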

| Methodology | Timing | Primary Benefit | Main Risk |
|---|---|---|---|
| Pre-hoc | During generation | Ensures evidence availability upfront | May limit creative flow |
| Post-hoc | After generation | Refines integration and accuracy | Risk of retrofitting weak sources |
| Mixed Approach | Both stages | Robustness and ethical compliance | Higher computational cost |

Evaluating Accuracy: The SourceCheckup Framework

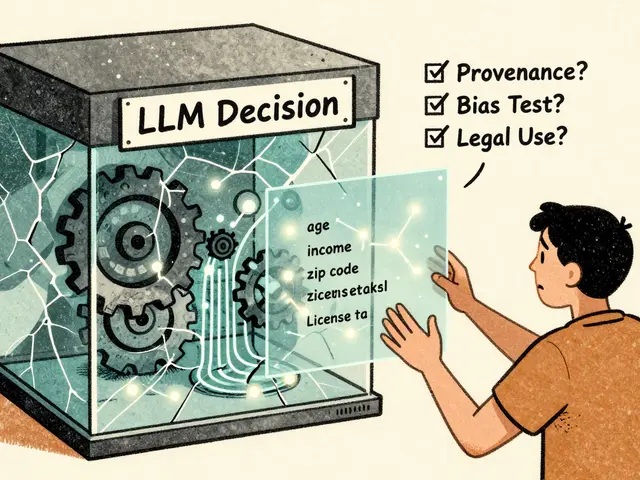

How do we know if these citations are actually good? We can’t just trust the AI to say it’s right. That’s why researchers have developed automated evaluation frameworks like SourceCheckup, an automated agent-based pipeline designed to evaluate the relevance and supportiveness of sources in LLM responses.

SourceCheckup works by analyzing statement-source pairings. It checks whether a specific claim made by the AI is truly supported by the linked document. In medical domains, the framework has tracked expert judgment closely: studies using SourceCheckup found an 88.7% agreement rate between the model’s evaluations and the consensus of US-licensed medical doctors. That level of agreement suggests automated checkers can approximate expert review of citations when given the right tools, though it does not replace that review.

To achieve this, researchers constructed a dedicated corpus of 58,000 medical-specific statement-source pairs. This dataset allows for longitudinal studies, tracking how model performance improves over time. It also helps measure precision: the percentage of cited sources that genuinely support the statements they are attached to, as opposed to generic lists of links. High precision means the AI is intentionally attributing claims, not just dumping URLs at the end of a paragraph.
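The two numbers discussed here, citation precision and agreement with human reviewers, are simple ratios. The sketch below shows the arithmetic on a tiny made-up sample; it is not SourceCheckup's code, and the labels and function names are hypothetical.

```python
def citation_precision(supported_flags):
    """Share of statement-source pairs where the source supports the statement."""
    if not supported_flags:
        return 0.0
    return sum(supported_flags) / len(supported_flags)

def agreement_rate(model_labels, human_labels):
    """Share of pairs where the automated verdict matches the human consensus."""
    if len(model_labels) != len(human_labels):
        raise ValueError("label lists must be the same length")
    if not model_labels:
        return 0.0
    matches = sum(m == h for m, h in zip(model_labels, human_labels))
    return matches / len(model_labels)

# Made-up verdicts for four statement-source pairs (True = supported).
model_verdicts = [True, True, False, True]
human_consensus = [True, False, False, True]

print(citation_precision(human_consensus))               # 0.5
print(agreement_rate(model_verdicts, human_consensus))   # 0.75
```

Precision describes the quality of the citations themselves, while the agreement rate describes how far the automated checker can be trusted to judge them; the two should be reported separately.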

Fixing Errors with SourceCleanup

Even with advanced systems, errors happen. Sometimes an AI will partially deviate from its source, creating a half-truth. To address this, developers use correction agents like SourceCleanup, a specialized LLM agent that modifies unsupported statements to align with their cited sources.

SourceCleanup takes a problematic statement and its corresponding source as input. It then rewrites the statement to ensure it is fully supported by the evidence. This is crucial for maintaining integrity in critical applications. If an AI says a drug has "minor side effects" but the source says "severe side effects," SourceCleanup steps in to correct the discrepancy before the user sees it. This layer of defense significantly boosts overall citation accuracy and user safety.

Building Authority Through Citations

For content creators and businesses, getting cited by LLMs is the new SEO. It’s distinct from traditional search engine ranking. Instead of chasing backlinks, you’re building authority through strategic practices. Creating pillar pages with comprehensive, well-structured data helps LLMs recognize your content as a primary source.

Adding evidence with structured data affects every stage of the LLM’s processing: retrieval, entity recognition, evidence weighting, and reasoning. If your website uses Schema.org markup correctly, you increase the likelihood that an AI will extract and cite your information accurately. This represents a shift from link building to authority development, where clarity and structure trump volume.

What is the difference between pre-hoc and post-hoc citation?

Pre-hoc citation identifies and retrieves sources during the content generation process, ensuring evidence is available upfront. Post-hoc citation evaluates and refines citations after the content is created, allowing for better integration and accuracy checks. A mixed approach is often recommended for optimal results.

How does structured data improve LLM citations?

Structured data formats like JSON-LD and Schema.org provide explicit references to entities and relationships. This helps LLMs verify connections between sources, resolve contradictions, and weigh evidence more accurately, leading to higher-quality citations.

What is SourceCheckup used for?

SourceCheckup is an automated framework that evaluates whether statements made by LLMs are truly supported by their cited sources. It measures precision and relevance, helping developers ensure their AI models provide accurate and verifiable information.

Can LLMs fix their own citation errors?

Yes, specialized agents like SourceCleanup can detect unsupported or partially supported statements and rewrite them to align strictly with the provided source material, improving overall accuracy and trustworthiness.

Why is evidence weighting important in AI responses?

Evidence weighting determines which sources are most credible and relevant to include in the final response. It helps resolve conflicts between contradictory sources and ensures that the AI prioritizes high-quality, structured information over vague or less reliable data.