Imagine being able to tell how well an artificial intelligence will perform before you ever finish training it. That sounds like science fiction, but it's the daily reality of modern AI development. By 2026, guesswork had largely given way to calculation. We call this process neural scaling, and it represents one of the most important shifts in how we build large language models. Instead of burning millions of dollars on trial and error, teams now use empirical mathematical laws to predict performance with remarkable accuracy.

This approach relies on a simple but powerful premise: the performance of a language model follows predictable patterns based on three things. First, there is the size of the model itself, measured by its number of parameters. Second, there is the amount of text the model reads during training, counted in tokens. Third, there is the total computation spent on training, which ties the first two together. When you adjust any one of these levers, you can estimate in advance how much the model's loss will improve. It is essentially a recipe for intelligence.

The Core Equation of Intelligence

To understand why neural scaling laws work, you have to look at the graph of loss versus compute. Loss measures how wrong the model's predictions are: for a language model it is usually the cross-entropy of its next-token predictions, which drops as the model assigns higher probability to the word that actually comes next. Early research showed that this relationship isn't random. If you plot loss against compute with both axes on a logarithmic scale, the points fall close to a straight line, which is the signature of a power law. That straight line is what lets us extrapolate to compute budgets far larger than any we have tried.
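Why a straight line? If loss follows a power law L = a·C^(-b), then log L = log a - b·log C, which is linear in log C with slope -b. The sketch below checks that numerically; the constants `A_COEF` and `B_EXP` are made-up illustrative values, not fitted results from any paper.

```python
import math

# Assumed power law L(C) = A_COEF * C**(-B_EXP); both constants are illustrative.
A_COEF = 50.0
B_EXP = 0.05

def loss(compute: float) -> float:
    """Loss predicted by the assumed power law for a compute budget in FLOPs."""
    return A_COEF * compute ** (-B_EXP)

def log_log_slopes(budgets: list[float]) -> list[float]:
    """Slope between consecutive points after taking log10 of both axes."""
    points = [(math.log10(c), math.log10(loss(c))) for c in budgets]
    return [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in zip(points, points[1:])
    ]

budgets = [1e18, 1e19, 1e20, 1e21, 1e22]
for slope in log_log_slopes(budgets):
    # Each slope comes out as -B_EXP (up to rounding): a straight line.
    print(f"{slope:.4f}")
```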

We can break this down into three critical variables that every developer needs to track:

- Model Size (N): The total number of adjustable parameters inside the neural network. Think of these as the synapses in a brain.

- Data Size (D): The total volume of training data, usually measured in tokens (for English text, a token averages roughly four characters, depending on the tokenizer).

- Compute Cost (C): The total floating-point operations performed to train the model.
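These three quantities are linked by a widely used rule of thumb for training dense transformers, C ≈ 6·N·D: roughly 2 FLOPs per parameter per token for the forward pass and 4 for the backward pass. A minimal sketch, with a hypothetical helper name:

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute with the C ≈ 6·N·D rule of thumb.

    The factor 6 counts about 2 FLOPs per parameter per token for the
    forward pass and about 4 for the backward pass.
    """
    return 6.0 * n_params * n_tokens

# Example: a 70-billion-parameter model trained on 1.4 trillion tokens.
c = training_flops(70e9, 1.4e12)
print(f"{c:.2e} FLOPs")  # -> 5.88e+23 FLOPs
```

The approximation ignores attention's sequence-length-dependent cost, so treat it as an order-of-magnitude estimate.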

The beauty of the law is that these variables are interconnected. Doubling the compute does not double the performance: under a power law, each doubling buys only a fixed fractional drop in loss, and only if the extra compute is spent sensibly on parameters and data, with attention to data quality and architecture efficiency. For years, the industry assumed that making the model bigger was always the right move. We were chasing GPT-3, which had 175 billion parameters, thinking size equaled success. While larger models did improve capabilities like few-shot learning, adding parameters alone eventually yielded diminishing returns.
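To see why doubling compute does not double performance, assume a pure power law L ∝ C^(-b). Each doubling then multiplies loss by the same factor 2^(-b), so the absolute gain shrinks with every doubling. The exponent (0.05) and the starting loss below are illustrative assumptions.

```python
B_EXP = 0.05      # assumed compute exponent, illustrative only
START_LOSS = 4.0  # hypothetical loss before the first doubling

def simulate_doublings(start_loss: float, b: float, steps: int) -> list[float]:
    """Loss after each successive doubling of compute under L ∝ C**(-b)."""
    ratio = 2.0 ** (-b)  # the same multiplicative factor at every doubling
    losses = [start_loss]
    for _ in range(steps):
        losses.append(losses[-1] * ratio)
    return losses

losses = simulate_doublings(START_LOSS, B_EXP, 5)
gains = [prev - nxt for prev, nxt in zip(losses, losses[1:])]
for step, (value, gain) in enumerate(zip(losses[1:], gains), start=1):
    print(f"doubling {step}: loss {value:.4f}, improvement {gain:.4f}")
```

Under this assumed exponent, each doubling trims loss by about 3.4% of its current value, so the absolute improvement keeps getting smaller.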

The Chinchilla Shift: Quality Over Bulk

In 2022, a paper introduced a concept that fundamentally changed our strategy. DeepMind's Chinchilla study demonstrated that throwing money at parameters wasn't enough. You have to balance the model size against the dataset size. A smaller model trained on more data can outperform a giant model starved of information. This insight corrected a major misconception in AI development. Before this, many companies were building massive models with too little data, leaving potential performance on the table.
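To make the trade-off concrete, here is a sketch using the parametric loss form fitted in the Chinchilla paper, L(N, D) = E + A/N^α + B/D^β. The constants are quoted from that fit for illustration only. The two configurations compare a 280-billion-parameter model trained on 300 billion tokens (roughly DeepMind's Gopher) with a 70-billion-parameter model trained on 1.4 trillion tokens, at broadly similar compute.

```python
# Parametric loss L(N, D) = E + A / N**ALPHA + B / D**BETA.
# Constants follow the fit reported in the Chinchilla paper; illustrative only.
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28

def predicted_loss(n_params: float, n_tokens: float) -> float:
    """Predicted pretraining loss for n_params parameters trained on n_tokens."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA

def training_flops(n_params: float, n_tokens: float) -> float:
    """Rough training compute using the C ≈ 6·N·D approximation."""
    return 6.0 * n_params * n_tokens

configs = {
    "280B params, 300B tokens": (280e9, 300e9),
    "70B params, 1.4T tokens": (70e9, 1.4e12),
}
for name, (n, d) in configs.items():
    print(f"{name}: ~{training_flops(n, d):.1e} FLOPs, loss ≈ {predicted_loss(n, d):.3f}")
```

Under this fit, the smaller model trained on more data lands at a lower predicted loss despite having a quarter of the parameters.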

This principle, often referred to as compute-optimal training, says that for a fixed compute budget, parameters and training tokens should grow in roughly equal proportion, which works out to about 20 training tokens per parameter. When we followed this path, models got better for the same budget. It meant we could reach state-of-the-art performance at lower cost and with a smaller carbon footprint. Instead of a generic "bigger is better" mantra, we adopted a precision-engineering mindset. A 70-billion-parameter model trained this way (Chinchilla, on 1.4 trillion tokens) outperformed the 530-billion-parameter Megatron-Turing NLG, which had been trained on far fewer tokens per parameter.
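A back-of-the-envelope version of compute-optimal allocation fits in a few lines. This sketch assumes two rules of thumb, C ≈ 6·N·D and D ≈ 20·N; substituting one into the other gives C ≈ 120·N², so N ≈ √(C/120). The function name `compute_optimal_split` is hypothetical.

```python
import math

TOKENS_PER_PARAM = 20.0      # approximate Chinchilla rule of thumb (assumption)
FLOPS_PER_PARAM_TOKEN = 6.0  # C ≈ 6·N·D approximation for dense transformers

def compute_optimal_split(compute_flops: float) -> tuple[float, float]:
    """Return (parameters, tokens) that spend compute_flops at ~20 tokens/param.

    Substituting D = 20·N into C = 6·N·D gives C = 120·N², so N = sqrt(C / 120).
    """
    n_params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens

n, d = compute_optimal_split(5.88e23)
print(f"params ≈ {n:.3g}, tokens ≈ {d:.3g}")  # params ≈ 7e+10, tokens ≈ 1.4e+12
```

Feeding in roughly Chinchilla's training budget recovers its 70B-parameter, 1.4T-token configuration, which is a useful sanity check on the two rules of thumb.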

| Variable | Role in Training | Optimal Strategy |
|---|---|---|
| Parameters (N) | Determines capacity to store knowledge | Scaled in proportion to data |
| Dataset Size (D) | Determines diversity and coverage of facts | Scaled up alongside model size (about 20 tokens per parameter), never starved |
| Compute (C) | Enables the learning process | Split between parameters and data to minimize loss for a fixed budget |

Beyond Pretraining: The Inference Revolution

If you think scaling only matters when we train a model, you are missing the newest frontier. Starting in late 2024 and through 2025, the focus shifted toward how the model thinks *after* training. Traditional scaling focused on the initial creation phase. However, newer reasoning models changed the game entirely. Systems like OpenAI's o1 and o3 series spend large amounts of compute at inference time, when the model is actually being used. Instead of answering immediately, these models take time to work through a problem before responding.

This phenomenon creates a different kind of scaling law. Here, performance scales with the compute the model spends generating a chain of thought. A complex math problem might lead a model to produce thousands of tokens of intermediate reasoning before giving a final answer. We see smooth improvements in reasoning capabilities as we allow the model to 'think' longer. This adds a new dimension to our predictive models: we are no longer just predicting how good a model will be at generation; we are predicting how effectively it can solve problems given more processing time.
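How OpenAI's reasoning models allocate inference compute internally is not public. A simpler, well-known way to trade inference compute for accuracy, majority voting over several independently sampled answers (often called self-consistency), shows the same basic shape. This sketch assumes each sample is independently correct with probability p and pessimistically treats all wrong answers as one competing answer. The function name is hypothetical.

```python
from math import comb

def majority_vote_accuracy(p: float, k: int) -> float:
    """Probability that a strict majority of k independent samples is correct.

    Assumes each sample is correct with probability p, samples are independent,
    and k is odd so there are no ties. Lumping every wrong answer together as
    a single competitor is a pessimistic simplification.
    """
    if k % 2 == 0:
        raise ValueError("use an odd number of samples to avoid ties")
    return sum(comb(k, i) * p**i * (1 - p) ** (k - i) for i in range(k // 2 + 1, k + 1))

for k in (1, 5, 25, 101):
    print(k, round(majority_vote_accuracy(0.6, k), 3))
```

With p = 0.6, accuracy climbs steadily as more samples (more inference compute) are spent. Under the same assumptions, a model that is usually wrong (p below 0.5) gets worse with voting, so this kind of scaling pays off most when the base model is already usually right.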

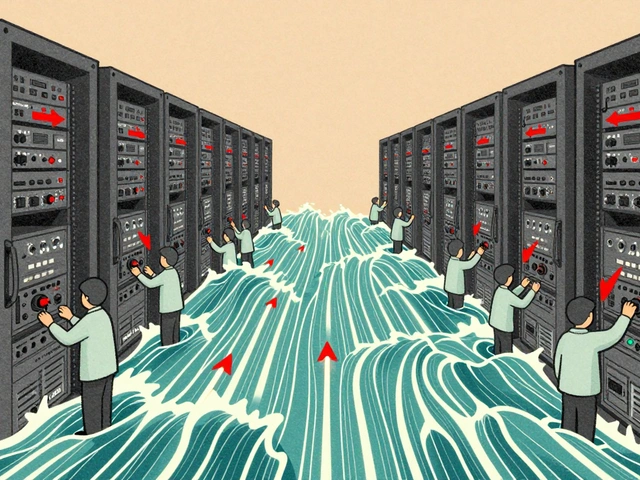

This shift impacts how we design hardware. The same accelerators (like NVIDIA's H100) handle both massively parallel training and inference, but long chains of thought move the bottleneck: generating each new token means reading the model weights and a growing cache of past tokens from memory, so memory bandwidth, rather than raw compute, often limits speed. The distinction between training and inference is blurring, because both are now planned with the same scaling principles.
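A rough way to see the memory pressure is to size the key/value (KV) cache, which a transformer keeps for every token already generated and reads again for each new token. This sketch assumes a hypothetical model with 80 layers, 8 key/value heads of dimension 128, and 16-bit storage; real models vary.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Size in bytes of the key/value cache for one sequence.

    The leading factor 2 is one key tensor plus one value tensor per layer;
    bytes_per_value=2 assumes 16-bit (fp16/bf16) storage.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical configuration: 80 layers, 8 KV heads of dimension 128.
for tokens in (1_024, 8_192, 32_768):
    size = kv_cache_bytes(80, 8, 128, tokens)
    print(f"{tokens:>6} tokens -> {size / 2**30:.2f} GiB")
```

Under these assumptions the cache grows linearly with length, reaching 10 GiB for a single 32,768-token chain of thought, and all of it has to be streamed through memory as each new token is generated.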

Planning With Precision

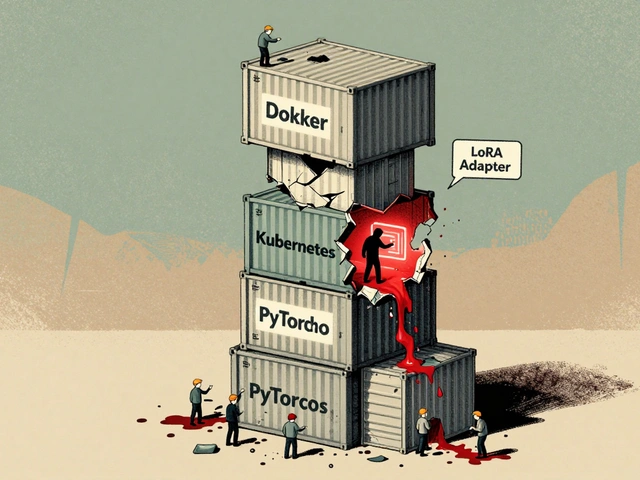

The most practical benefit of understanding these laws is financial planning. Large-scale pretraining is expensive, often costing tens of millions of dollars, and organizations typically get only one chance to get the architecture right before committing resources. By fitting scaling laws to small models trained on fractions of the data, we can predict, within a quantifiable margin, where the big model will land.

This workflow has become standard in the industry. Teams train dozens of smaller models across a range of sizes and settings: different learning rates, batch sizes, and architectures. They fit a power-law curve to the results, then extrapolate it to the target scale. This reduces the risk of launching a model that falls short of expectations and turns model development from a gamble into a calculated investment.
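The fit-then-extrapolate step can be sketched with an ordinary least-squares line in log-log space. The "small runs" here are synthetic, generated from an assumed law 50·C^(-0.05) with a small fixed jitter standing in for run-to-run noise; they are not real measurements, and the function names are hypothetical.

```python
import math

def fit_power_law(compute: list[float], loss: list[float]) -> tuple[float, float]:
    """Fit L = a * C**(-b) by least squares on log10(C) and log10(L); returns (a, b).

    Assumes a pure power law with no irreducible-loss floor; many real fits
    add a constant term, which this sketch omits.
    """
    xs = [math.log10(c) for c in compute]
    ys = [math.log10(value) for value in loss]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    return 10**intercept, -slope

def predict_loss(a: float, b: float, compute: float) -> float:
    """Extrapolate the fitted curve to a new compute budget."""
    return a * compute ** (-b)

# Synthetic small runs: assumed law 50 * C**-0.05 times a fixed jitter.
small_compute = [1e18, 3e18, 1e19, 3e19, 1e20]
jitter = [1.01, 0.99, 1.005, 0.995, 1.0]
small_loss = [50 * c**-0.05 * j for c, j in zip(small_compute, jitter)]

a, b = fit_power_law(small_compute, small_loss)
target = 1e24
print(f"fitted b ≈ {b:.3f}; predicted loss at {target:.0e} FLOPs ≈ {predict_loss(a, b, target):.2f}")
```

Fitting in log space turns a curve fit into a straight-line fit, which needs nothing beyond the standard library; the trade-off is that a pure power law cannot capture a loss floor.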

We also use these predictions to identify where breaks in the law might occur. Sometimes a model crosses a threshold where it suddenly acquires emergent abilities: skills it didn't seem to possess before. These breaks aren't captured by a simple power-law fit, but knowing the baseline helps us spot when we've entered a new regime of capability.
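One hedged way to act on that baseline is to compare new measurements against the fitted curve and flag any that depart from it by more than a chosen tolerance. The 5% threshold, the baseline constants, and the observations below are all illustrative assumptions, and `flag_breaks` is a hypothetical name. Emergent abilities usually show up in downstream task metrics rather than pretraining loss, so in practice the check would run on whichever metric the baseline was fitted to.

```python
def flag_breaks(compute: list[float], observed: list[float],
                a: float, b: float, tolerance: float = 0.05) -> list[float]:
    """Return the compute budgets where an observed value departs from the
    fitted baseline a * C**(-b) by more than `tolerance` (relative error)."""
    flagged = []
    for c, obs in zip(compute, observed):
        expected = a * c ** (-b)
        if abs(obs - expected) / expected > tolerance:
            flagged.append(c)
    return flagged

# Illustrative baseline (a=50, b=0.05) and made-up observations; the last one
# falls well below the trend, the kind of departure worth investigating.
budgets = [1e18, 1e20, 1e22]
observed = [6.30, 5.01, 3.50]
print(flag_breaks(budgets, observed, a=50.0, b=0.05))  # -> [1e+22]
```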

FAQ: Questions About Scaling Laws

What exactly is a neural scaling law?

A neural scaling law is an empirical rule that predicts the performance of a language model from its size, the amount of data used to train it, and the compute spent. It generally shows that loss decreases smoothly, roughly as a power law, as these resources increase.

Why is the Chinchilla result important?

Chinchilla demonstrated that maximizing model parameters alone is inefficient: balancing model size with dataset size yields much better performance per dollar spent, which led to the concept of compute-optimal training.

Does scaling always mean bigger models?

Not necessarily. Recent trends show that scaling compute during inference (thinking time) can yield higher performance than just increasing the static size of the model weights.

Can scaling laws predict the future perfectly?

They usually extrapolate well within the range of scales and setups they were fitted on, but they can miss emergent behaviors or sudden architectural breakthroughs that change the slope of the curve.

How do companies use this today?

Companies run small experiments to fit curves and then predict the outcome of full-sized models, allowing them to allocate budgets precisely rather than guessing.

Kelley Nelson

28 March, 2026 - 11:50 AM

It is fascinating to observe how popular discourse continues to simplify complex mathematical realities into digestible soundbites.

One finds it rather tiresome that the article equates intelligence with mere computational efficiency without acknowledging the philosophical nuance.

True cognition cannot be reduced to loss curves and parameter counts alone.

We must look deeper than the superficial graphs presented in such glossy articles.

The concept of neural scaling assumes a linearity that does not exist in organic systems.

History has repeatedly shown us that exponential growth eventually plateaus due to external constraints.

These constraints are rarely discussed in such optimistic technological manifestos.

Furthermore, the reliance on compute as a primary metric ignores the quality of the training data itself.

Garbage in indeed remains garbage out regardless of the hardware prowess utilized.

It is disheartening that the industry focuses so heavily on quantity over semantic depth.

We ought to prioritize understanding over raw predictive power in any meaningful capacity.

The financial implications described are valid points of concern for budget holders.

However, the ethical implications of such planning remain entirely unaddressed in this text.

It is imperative that we do not lose sight of the human element involved in creation.

Simply calculating output does not equate to genuine learning or comprehension anywhere.

Gareth Hobbs

28 March, 2026 - 8:53 PM

They're lying about the scaling laws to sell us more chips!!!

Fredda Freyer

29 March, 2026 - 4:48 PM

While the skepticism regarding corporate transparency is understandable, the underlying mathematics hold up under rigorous peer review.

The phenomenon of diminishing returns is real and documented across numerous independent studies.

We see clear evidence of this in open-source releases versus proprietary benchmarks.

The gap often lies in optimization techniques rather than fundamental deception.

It is worth considering that the technology serves a broader purpose beyond profit margins.

Understanding these laws helps researchers allocate resources more ethically.

Efficiency leads to lower carbon footprints which benefits everyone in the ecosystem.

Perhaps the focus should shift toward open access to these training methodologies.

We could democratize knowledge rather than gatekeep it behind paywalls.

Zelda Breach

30 March, 2026 - 10:09 PM

This article reads like it was written by a middle school student who watched too many TED talks.

The lack of proper citation makes every single claim incredibly suspect to anyone with actual expertise.

Stop trying to convince us that math magic exists in 2026.

Real engineers know that empirical observation trumps pretty graphs every time.

Your formatting errors are showing up everywhere in the text sections.

Just admit you don't know what you're actually talking about here.

Aryan Gupta

31 March, 2026 - 11:27 PM

You made several run-on sentences that undermine your own credibility significantly.

However, I agree that the narrative feels curated for public consumption specifically.

The timing of this release aligns too perfectly with new funding cycles.

It feels suspiciously convenient for those holding the majority of shares.

We need to watch for signs of manipulation in future reports carefully.

Do not trust the headlines blindly when the sources are anonymous corporations.

The truth is usually hidden in the fine print nobody bothers reading.

Your frustration is valid even if the syntax needs improvement.

Alan Crierie

1 April, 2026 - 10:49 PM

Great discussion going on here today! 🌟 I really appreciate the diverse perspectives everyone is sharing.

Let's keep the conversation respectful and productive moving forward! 👏