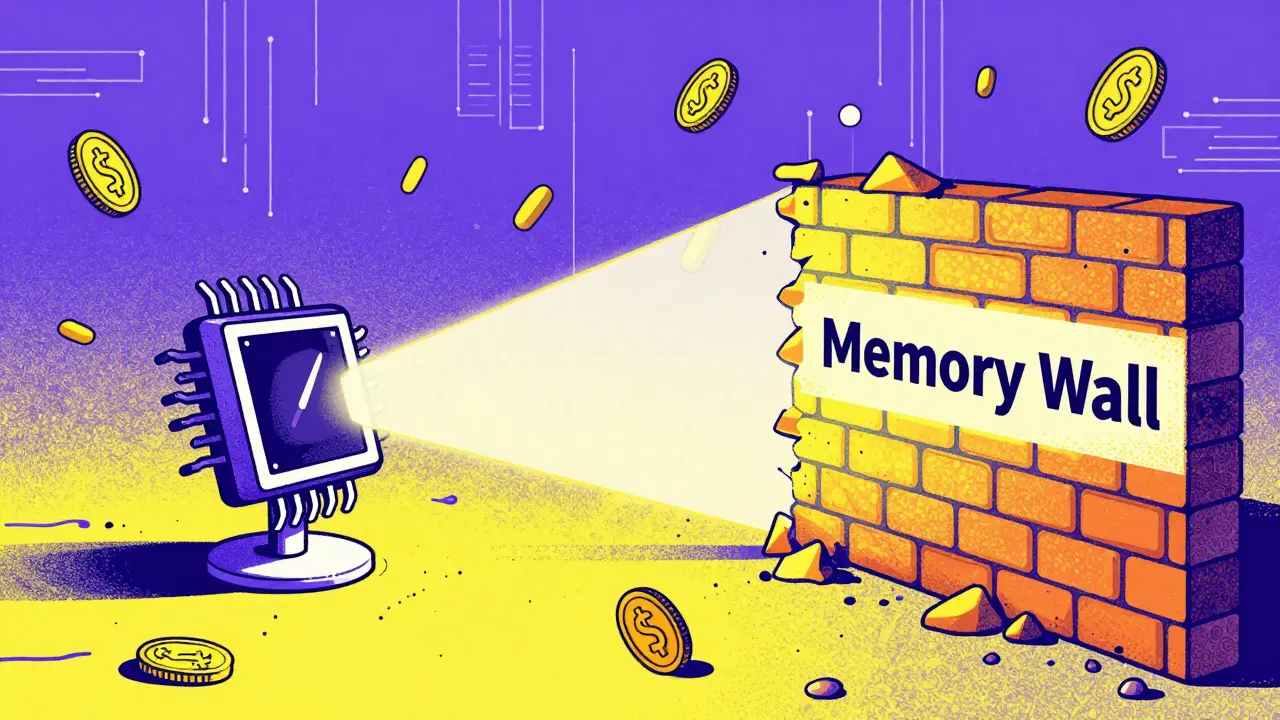

If you have ever run into an "Out of Memory" (OOM) error while trying to process a long document or a complex prompt, you have felt the pain of quadratic memory complexity. Standard attention requires memory that grows by the square of the sequence length. If you double your input, you quadruple your memory needs. Flash Attention changes this game by reducing the memory requirement to linear, meaning if you double the input, you only double the memory used. For anyone deploying LLM inference at scale, this is the difference between needing a cluster of H100s and running a model on a single high-end consumer GPU.

Why Standard Attention Slows Down Your Model

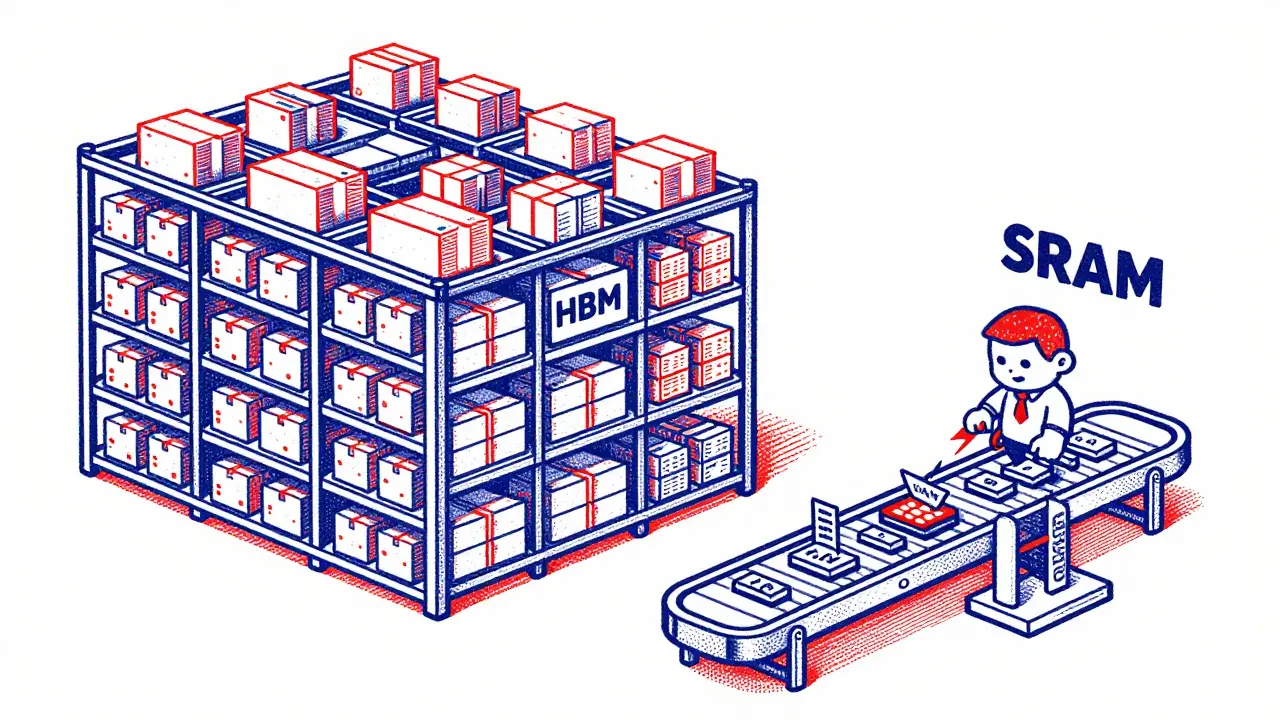

To understand why we need this optimization, we have to look at the GPU's memory hierarchy. A GPU has High Bandwidth Memory (HBM), which is large but relatively slow, and SRAM, which is incredibly fast but tiny (usually 100-200 MB). Standard attention computes a massive matrix, writes it to the slow HBM, reads it back to perform a softmax operation, and writes it again. This constant "shuttling" of data is where the time is lost.

Flash Attention stops this cycle using three clever tricks: tiling, recomputation, and kernel fusion. Instead of calculating the whole matrix at once, it breaks the work into small "tiles" that fit perfectly inside the fast SRAM. It does all the heavy lifting there and only writes the final result back to the HBM once. While it might seem like doing the same math twice (recomputation) would be slower, it is actually much faster because calculating a value in SRAM is orders of magnitude quicker than reading a value from HBM.

Comparing Flash Attention Versions and Performance

Since its release, the algorithm has evolved. The first version proved the concept, while FlashAttention-2 optimized how threads are scheduled on the GPU to squeeze out more speed. The latest iteration, FlashAttention-3, is specifically designed for the NVIDIA Hopper architecture (like the H100). It uses the Tensor Memory Accelerator (TMA) to move data asynchronously, meaning the GPU can prepare the next batch of data while it is still calculating the current one.

| Feature | Standard Attention | Flash Attention (v2/v3) |

|---|---|---|

| Memory Complexity | Quadratic O(n²) | Linear O(n) |

| Data Movement | Frequent HBM reads/writes | SRAM-centric (Tiled) |

| Speed (Inference) | Baseline | 2x to 4x Faster |

| Context Window | Limited by VRAM | Significantly Extended (e.g., 32K+) |

| Output Accuracy | Exact | Exact (Mathematically Identical) |

Real-World Impact on Model Deployment

This isn't just academic theory. In production, these optimizations allow developers to handle massive context windows. For example, early LLMs struggled with more than 2,000 tokens before hitting memory walls. Today, models like Claude 3 or GPT-4 Turbo handle tens of thousands of tokens, a shift made possible by these memory efficiencies. One engineer reported reducing the memory footprint of a Llama-2 7B model by 43% and nearly tripling the tokens processed per second on A100 GPUs.

From a cost perspective, this is a massive win. Reducing memory usage means you can use smaller, cheaper GPU instances or fit larger batches into the same hardware. Some enterprise teams have seen cloud training costs drop by 20% because their models could run more efficiently. It also helps with energy consumption, with some benchmarks showing a 37% reduction in power used per billion tokens trained.

How to Implement Flash Attention Today

The good news is that you don't need to write custom CUDA kernels from scratch to benefit from this. The

Hugging Face Transformers library has integrated this directly. If you are using a compatible GPU (Ampere architecture or newer), you can often activate it by adding a single argument to your model loading code: attn_implementation="flash_attention_2". The library will automatically handle the fallback to standard attention if your hardware isn't supported.

For those seeking maximum performance, NVIDIA NeMo and TensorRT-LLM provide vendor-optimized versions that can be another 15-20% faster than the open-source implementation on H100 hardware. Just keep in mind that you'll need the latest drivers (535.86.05+) and CUDA 11.8+ to get the most out of these tools.

Potential Pitfalls and Hardware Limits

While it feels like a magic bullet, there are a few things to watch out for. First, Flash Attention is highly optimized for NVIDIA's specific memory architecture. If you are running on AMD or Intel GPUs, you might not see the same gains, though support for other hardware is slowly expanding. Second, for very short sequences-typically under 256 tokens-the benefits are negligible because the overhead of moving data isn't the main bottleneck at that scale.

Another constraint is the flexibility of attention masks. Standard attention allows for very complex, custom masking patterns. Flash Attention is primarily designed for causal (like GPT) and non-causal (like BERT) patterns. If your specific use case requires a highly unusual masking strategy, you might find it harder to implement within the Flash Attention framework.

Does Flash Attention change the output of my model?

No. Unlike "Linear Attention" or other approximation methods, Flash Attention is an exact algorithm. It produces the same mathematical results as standard attention, meaning there is no loss in model accuracy or perplexity.

Which GPUs support Flash Attention?

It officially supports NVIDIA GPUs from the Ampere architecture (e.g., A100, RTX 3090) onwards. Performance is best on Hopper architecture (H100) and Ada Lovelace (RTX 4090), though FlashAttention-3 is specifically tuned for the H100.

Is it better than sparse attention?

Yes, in terms of quality. Sparse attention reduces computation by ignoring some tokens, which can lead to accuracy drops. Flash Attention speeds up the process without ignoring any data, maintaining full model quality while achieving similar or better speedups.

Why is it called "IO-aware"?

"IO" refers to Input/Output operations. The algorithm is called IO-aware because it focuses on reducing the number of times data is read from and written to the slow HBM, prioritizing the use of the fast on-chip SRAM.

How much VRAM can I actually save?

Savings increase with sequence length. At a 2K token sequence, you can see roughly 10x memory savings; at 4K tokens, it can reach 20x savings compared to the quadratic growth of standard attention.

Next Steps for Optimization

If you have already implemented Flash Attention and still face bottlenecks, your next move should be exploring quantization. Moving from FP16 to FP8 or INT4 precision can further slash memory usage and boost throughput. Combining Flash Attention with block-sparse techniques is also how some models are now pushing toward 1-million-token context windows.

For those on consumer hardware, ensure your drivers are fully updated and that you are using the latest version of the Transformers library. If you are still seeing OOM errors, try reducing your batch size or utilizing gradient checkpointing alongside Flash Attention to further optimize your training pipeline.

Elmer Burgos

7 April, 2026 - 15:45 PM

this is super helpful info thanks for sharing it with us

Jeroen Post

8 April, 2026 - 18:38 PM

funny how we focus on speed but ignore who actually controls the hardware silicon is the new gold and the h100 is just a leash for the masses to keep us dependent on the cloud

Jason Townsend

9 April, 2026 - 00:37 AM

exactly jeroen they want you thinking about sram and hbm so you dont notice the backdoors they are building into the tensor cores it is all about control

Angelina Jefary

9 April, 2026 - 08:58 AM

While your suspicions are probably correct, the lack of basic capitalization in this thread is absolutely appalling. It's "SRAM," not "sram," and "HBM" should be uppercase. Honestly, the intellectual decay is as rapid as the inference speed you're praising.

Antwan Holder

10 April, 2026 - 07:24 AM

The sheer tragedy of it all! We optimize these machines to think faster, yet we forget how to feel. We are sculpting an electronic god from the shards of our own fragmented attention, chasing a ghost of efficiency while our own souls remain bogged down in a quadratic complexity of sorrow and longing.

Is this not the ultimate irony? We carve out tiles in the SRAM of existence, desperately trying to avoid the slow crawl of our own mortality, only to realize that the result is mathematically identical to the void we started with. The H100 is not a chip; it is a tombstone for human spontaneity!

Jennifer Kaiser

10 April, 2026 - 08:19 AM

The obsession with raw speed often blinds us to the qualitative nature of the intelligence we're creating. Efficiency is a tool, not a destination. We must ask ourselves if the ability to process a million tokens actually increases our understanding of the world or if it simply allows us to be wrong at a much faster rate. The ethical implication of reducing the cost of intelligence to nearly zero is something the industry is ignoring in favor of benchmarks.

TIARA SUKMA UTAMA

10 April, 2026 - 19:09 PM

How much do you make a year doing this? Do you have a girlfriend?

Jasmine Oey

11 April, 2026 - 06:16 AM

Omg, I literally can't even imagine using a GPU without Flash Attention now! It's just so much more refined, you know? Like, if you're still using standard attention in 2024, are you even trying to be part of the elite tech circle? It's honestly a bit sad that some people don't get this. I’ve always had an innate sense for these things, and it’s just so obveous that this is the only way to move forward. Truly, the peasants are struggling with their OOM errors while we glide through 32k contexts like it's nothing. Just so dreamy!