The High Score Paradox

You look at the leaderboard. Your chosen Large Language Model ranks top tier. It scores 92% on math reasoning and dominates the coding benchmarks. You feel confident. You deploy it into your customer support chatbot or your internal dev tool, and suddenly, the magic vanishes. The model stumbles over simple tasks. Users complain the answers are wrong, generic, or hallucinated.

This isn't a glitch in your implementation. It is a fundamental disconnect sometimes called the "performance illusion." Analysis from early 2026 supports what many practitioners have suspected for years: scores from offline evaluation (a standardized assessment of model performance using fixed datasets) correlate poorly with real-world outcomes. We need to stop trusting raw benchmark numbers blindly if we want our AI projects to succeed.

Defining the Battlefield: Lab vs. Field

To understand why the scores lie, we first have to define the environments. In an academic setting, researchers run models through a gauntlet of highly engineered tests. These benchmarks (standardized datasets used to measure and compare machine learning models) often use multi-step prompting, extensive scaffolding, and multiple attempts to get the "right" answer. It is a controlled lab environment where every variable is tweaked for success.

In contrast, real-world production is messy. End-users do not write poetry to your API. They send short, natural language queries. Prompting is zero-shot, meaning no examples are given, and there are strict constraints on latency and cost. When you strip away the hand-holding of the academic test, the model has to stand on its own two feet. Often, it can't walk. The correlation between how well a model does in the lab and how it performs in the field is much weaker than the industry long assumed.
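To get a feel for how much multiple attempts alone can move a score, here is a minimal sketch. It assumes each attempt succeeds independently with the same probability, which is a simplification for illustration and not how any particular benchmark is scored. The function name is hypothetical.

```python
def success_with_attempts(p_single: float, attempts: int) -> float:
    """Probability that at least one of `attempts` tries succeeds.

    Assumes every attempt is independent and has the same success
    probability `p_single` (an illustrative simplification).
    """
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    # Complement of "every attempt fails".
    return 1.0 - (1.0 - p_single) ** attempts


if __name__ == "__main__":
    for k in (1, 3, 5, 10):
        print(f"{k:>2} attempts: {success_with_attempts(0.30, k):.2f}")
```

Under this assumption, a model that succeeds 30% of the time on a single try clears about 83% when it gets five tries. Production traffic usually gets one.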

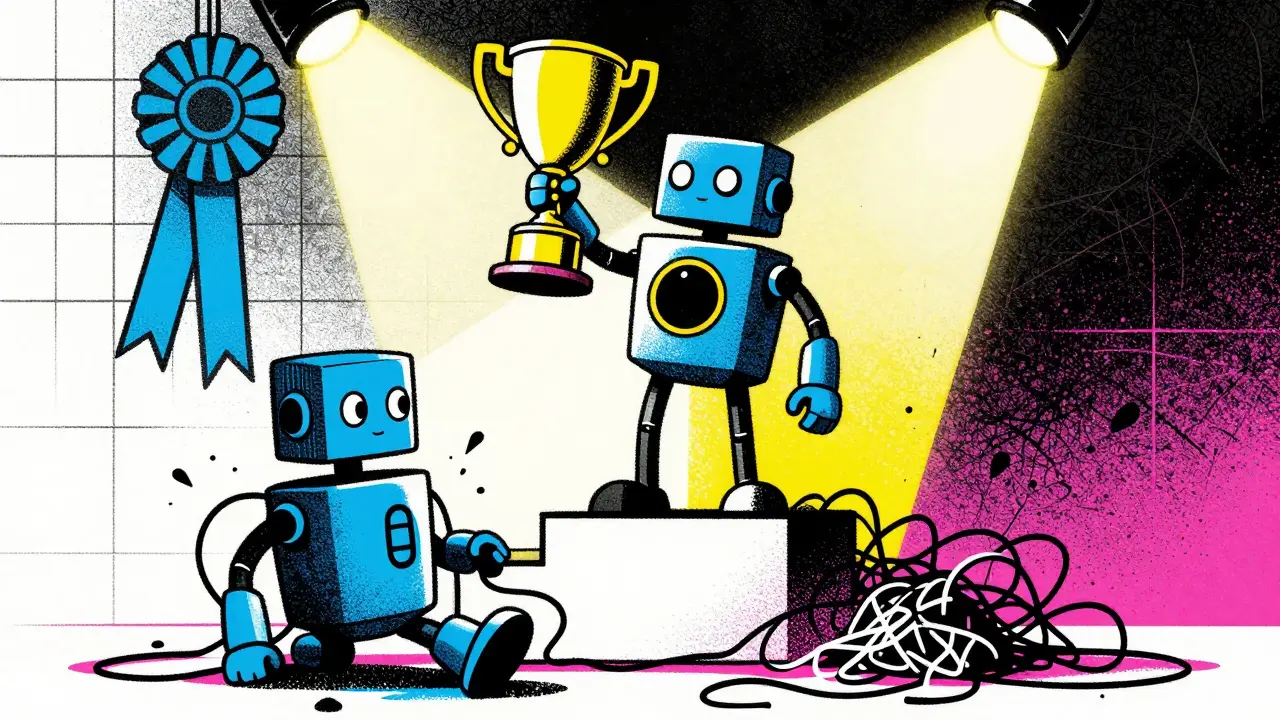

The Code Generation Evidence

One of the most striking pieces of evidence comes from code generation studies conducted in the lead-up to 2026. Researchers compared how models performed on synthetic benchmarks versus actual production codebases. On the synthetic tests, models achieved correctness rates between 84% and 89%. That looks incredible. However, when tested on real-world class-level code generation, the success rate plummeted to between 24% and 35%.

| Environment | Correctness Rate | Evaluation Conditions |
|---|---|---|
| Synthetic Benchmark | 84% - 89% | Multi-pass, Engineered Prompts |
| Real-World Code | 24% - 35% | Single-pass, Natural Input |

This 49 to 65 percentage point drop is critical. It suggests that the issue isn't necessarily overfitting to specific test examples. Rather, the model is over-reliant on the "test setup" itself. It expects the structured help that researchers give it, which users never provide. This creates a dangerous blind spot for CTOs and product managers reviewing vendor dashboards.
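The arithmetic behind that range is worth spelling out, because percentage points and relative percent are easy to confuse. The short sketch below uses only the four figures quoted above; the helper names are hypothetical.

```python
# Correctness ranges quoted in the text, in percent.
synthetic = (84, 89)   # synthetic benchmarks (low, high)
real_world = (24, 35)  # real-world class-level code (low, high)


def gap_range(high, low):
    """Smallest and largest possible gap, in percentage points."""
    smallest = high[0] - low[1]  # worst lab score vs best field score
    largest = high[1] - low[0]   # best lab score vs worst field score
    return smallest, largest


def relative_drop(before, after):
    """Drop expressed as a percent of the starting value."""
    return (before - after) / before * 100


if __name__ == "__main__":
    print(gap_range(synthetic, real_world))   # (49, 65)
    print(round(relative_drop(84, 35), 1))    # 58.3
    print(round(relative_drop(89, 24), 1))    # 73.0
```

So the absolute gap is 49 to 65 percentage points, while in relative terms the model loses roughly 58% to 73% of its benchmark correctness.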

Why Prompts Matter More Than Parameters

The root cause of this divergence usually lies in the interaction layer. Academic evaluations frequently employ sophisticated prompt engineering. They might feed the model several examples before asking for an answer (few-shot prompting) or guide it through a chain-of-thought process. This scaffolding inflates performance metrics.
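The difference between the two prompting styles is easy to see side by side. The sketch below builds a bare zero-shot prompt and a scaffolded few-shot prompt with a reasoning cue. The templates and function names are assumptions made for illustration, not the format of any specific benchmark.

```python
from typing import Sequence, Tuple

# (question, worked reasoning, final answer)
Example = Tuple[str, str, str]


def zero_shot_prompt(question: str) -> str:
    """What production traffic typically looks like: just the question."""
    return f"Question: {question}\nAnswer:"


def few_shot_cot_prompt(question: str, examples: Sequence[Example]) -> str:
    """Scaffolded prompt: worked examples first, then a reasoning cue."""
    parts = [
        f"Question: {ex_q}\nReasoning: {ex_reasoning}\nAnswer: {ex_a}"
        for ex_q, ex_reasoning, ex_a in examples
    ]
    parts.append(f"Question: {question}\nReasoning: Let's think step by step.")
    return "\n\n".join(parts)


if __name__ == "__main__":
    demos = [
        ("What is 2 + 3?", "2 plus 3 equals 5.", "5"),
        ("What is 4 * 6?", "4 times 6 equals 24.", "24"),
    ]
    print(zero_shot_prompt("What is 7 * 8?"))
    print("---")
    print(few_shot_cot_prompt("What is 7 * 8?", demos))
```

Evaluating the same model with both builders on the same questions is a simple way to measure how much of a reported score depends on the scaffolding.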

Enterprises operate differently. Cost constraints mean companies prefer single-prompt interactions. We cannot afford to send ten different variations of a user query to the server just to pick the best one, even if that works better in theory. Furthermore, regional languages suffer here. Even strong multilingual models show significant degradation when the elaborate scaffolding is removed. If you evaluate a model on English data with heavy prompting, then deploy it to handle Spanish requests with minimal context, you will see the benchmark numbers fail to predict the reality.

Training Alignment: Online vs. Offline

The gap extends beyond just testing; it affects how we train the models to behave well. In the realm of reinforcement learning for alignment, there is a clear distinction between online and offline approaches. Online algorithms sample responses from the model during training and score them as they go, typically with an explicit reward model. Offline approaches, which are easier to manage, rely on static datasets.

Studies comparing these methods found that online alignment generally outperforms offline approaches regarding optimization budget and KL divergence metrics. However, this doesn't mean offline methods are useless. Semi-online strategies, such as combining Direct Preference Optimization (DPO), a technique for aligning language models with human preferences, with on-policy data, can nearly match the performance of full online training.
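For readers who have not seen it, the standard DPO objective for a single preference pair can be sketched in a few lines. This shows the published loss only; the sequence log-probabilities are assumed to be computed elsewhere, and the default `beta` is an arbitrary illustrative value. In a semi-online setup the loss would stay the same while the pairs are sampled from a recent version of the policy instead of a fixed dataset.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(
    policy_logp_chosen: float,
    policy_logp_rejected: float,
    ref_logp_chosen: float,
    ref_logp_rejected: float,
    beta: float = 0.1,
) -> float:
    """DPO loss for one (chosen, rejected) pair of responses.

    Inputs are sequence log-probabilities under the policy being trained
    and under the frozen reference model.
    """
    chosen_margin = policy_logp_chosen - ref_logp_chosen
    rejected_margin = policy_logp_rejected - ref_logp_rejected
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(logits)) is the same as softplus(-logits).
    return softplus(-logits)


if __name__ == "__main__":
    # Policy identical to the reference: loss equals log(2).
    print(dpo_loss(-5.0, -5.0, -5.0, -5.0))
    # Policy has moved toward the chosen response: loss is lower.
    print(dpo_loss(-4.0, -6.0, -5.0, -5.0))
```

The loss falls both when the chosen response becomes more likely and when the rejected response becomes less likely, relative to the reference model.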

The nuance here is about gradients. Using negative gradients in alignment gives a boost to difficult cases where the model needs to increase the probability of low-probability responses. Without this, models tend to be safe but boring. With it, they become more capable. But remember: higher classification accuracy in a reward model does not always correlate with better real-world user satisfaction. We sometimes chase the wrong metric entirely.

Building a Reality-Based Evaluation Strategy

If the standard leaderboards are misleading, how do you vet a model for your business? The solution is to ground your evaluation in your actual usage patterns. You need to build custom test sets that mirror your application scenarios.

- Multicultural Inputs: Don't just test on clean English. Inject dialects, typos, and mixed-language inputs to stress-test robustness.

- Domain-Specific Scoring: Create scoring rubrics that align with business objectives, not generic correctness.

- Human-in-the-Loop Review: Automated metrics miss nuance. For critical workflows, human review must remain part of the quality loop.

- Continuous Monitoring: Track model drift over time. A model that worked in January might fail by June due to changing user intent.

Luckily, offline evaluation still has value because it offers repeatability and fast iteration speed. You shouldn't abandon it; you should just treat it as a necessary but insufficient filter. Use it for quick debugging, but validate any improvements with online evaluation under real conditions.
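One way to check how far an offline score can be trusted is to ask whether it ranks candidate models in the same order as their online results. The sketch below computes a Spearman rank correlation in plain Python. The function names are hypothetical, and the numbers in the usage section are placeholders, not measurements.

```python
from typing import List, Sequence


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1
        for j in range(pos, end + 1):
            result[order[j]] = avg_rank
        pos = end + 1
    return result


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (var_x * var_y) ** 0.5


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    return pearson(ranks(x), ranks(y))


if __name__ == "__main__":
    # Placeholder values for four hypothetical candidate models.
    offline_scores = [0.92, 0.88, 0.85, 0.80]
    online_success = [0.31, 0.35, 0.29, 0.33]
    print(f"Spearman correlation: {spearman(offline_scores, online_success):.2f}")
```

A value near 1 means the offline score is a usable proxy for picking between candidates; a value near 0 means it tells you little about which one will do better in production.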

The Latency Tradeoff

We often overlook the operational side of performance. Latency (the delay before a transfer of data begins following an instruction) is a primary metric for user experience. Offline models in this sense, meaning models that run on the device once downloaded, avoid network latency entirely. They are essential for edge computing scenarios like mobile apps, robots, and wearables where autonomy is key. There are no network delays.

Online cloud models, while potentially smarter due to larger parameter counts and fresh knowledge, introduce delay risks. Server load and unstable internet connections can ruin the experience even if the model is technically brilliant. You have to decide whether you want raw intelligence or immediate response, as these two qualities often pull in opposite directions during deployment.
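Whichever side of that tradeoff you lean toward, it helps to measure latency the same way for every candidate. The following sketch times repeated calls and reports nearest-rank percentiles. `fake_model_call` is a hypothetical stand-in that just sleeps; in a real test it would be replaced by the on-device or cloud call being compared.

```python
import math
import time
from typing import Callable, Dict, List, Sequence


def measure_latency(call: Callable[[], object], runs: int = 50) -> List[float]:
    """Wall-clock duration of each invocation, in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        durations.append((time.perf_counter() - start) * 1000.0)
    return durations


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile for 0 < pct <= 100."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def summarize(samples: Sequence[float]) -> Dict[str, float]:
    """Median and tail latency; the tail is what users tend to notice."""
    return {"p50": percentile(samples, 50), "p95": percentile(samples, 95)}


def fake_model_call() -> None:
    """Hypothetical stand-in for a real inference call."""
    time.sleep(0.001)


if __name__ == "__main__":
    print(summarize(measure_latency(fake_model_call, runs=20)))
```

Reporting p95 alongside the median matters here because server load and unstable connections mostly show up in the tail, not in the average.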

Getting this right means acknowledging that benchmark perfection is not the goal. Utility is. We need to shift our focus from how smart the model looks on paper to how reliable it is when the lights are on.

Frequently Asked Questions

Why do my model's benchmark scores differ so much from real results?

Benchmarks typically use engineered, multi-step prompts with scaffolding that does not exist in production. Real users provide natural, zero-shot inputs, causing a massive performance drop (sometimes more than 50 percentage points) compared to test results.

Should I ignore standard benchmarks completely?

No. Standard benchmarks provide a baseline for theoretical capability and allow for comparison between versions. However, they should never be used as the sole predictor of production success.

What is the best way to validate model performance?

Create a custom test set based on real historical user data. Include edge cases, errors, and domain-specific instructions. Combine automated scoring with periodic human review for quality assurance.

Does model size correlate with real-world reliability?

Not strictly. Larger models often score higher on general benchmarks but may not adapt better to specific niche tasks than smaller, fine-tuned models. Specificity matters more than raw parameter count in production.

How does latency impact evaluation strategy?

High-latency online models might provide better answers, but slow delivery hurts user experience. Edge-deployed offline models trade some intelligence for instant responsiveness, which is vital for robotics and mobile apps.

Vishal Gaur

28 March, 2026 - 02:15 AM

Wow, I read this and it makes sense, but people do not talk about it enough. When I look at my own projects I see the same issue happening all the time and it's really frustrating. The vendor promises you one thing, and then in reality you get something else that is totally different from what you expected. It feels like they are hiding some details behind the scenes so people don't know the truth about how bad the models actually perform without all the extra help. I wish we had more tools to test properly before we even think about deploying anything important into production environments for our clients. Maybe we need new standards that focus on zero-shot tasks only instead of these fancy engineered prompts that are useless in the wild. It's just sad to see companies spending millions on models that can't even handle basic typos from regular users. I think the article made a good point about latency too, since speed matters just as much as accuracy when customers are waiting around for answers. Overall though, I still think there is hope if we stop trusting the leaderboards so blindly and start testing locally. Also, the part about Spanish degradation was scary because localization is huge right now. We cannot ignore the drop in code generation rates either. It shows that scaffolding is the main thing keeping scores artificially high. Without the hand-holding the models fall apart completely. This disconnect is very dangerous for anyone who builds software today. I hope the industry learns from these findings soon enough.

Rajat Patil

30 March, 2026 - 00:41 AM

Your concerns are valid and well stated in your previous message. We must be careful when we trust data from automated tests alone. Simple verification methods are better for safety. I agree that custom sets help us find true issues. The risk of deployment failures is real without proper checks.

pk Pk

30 March, 2026 - 17:30 PM

Stop relying on leaderboards entirely until proven otherwise in your specific use case.

Nikhil Gavhane

1 April, 2026 - 07:45 AM

This is really eye-opening information, but it gives me hope because we finally understand the gap better than before. We can build better systems now that we know exactly where the failure points are located within the pipeline. It is great to see researchers focusing on real-world usage instead of just theoretical numbers on a dashboard somewhere. Hopefully this leads to cheaper and more reliable AI tools for everyone in the near future. The progress in understanding these gaps is a positive step forward for the field.

deepak srinivasa

2 April, 2026 - 22:24 PM

It is interesting to note how the scaffolding changes everything in the final output quality. The difference between synthetic and real data is quite large indeed. Production constraints seem to reveal the true limitations of current architectures.