Tag: LLM inference pricing

Estimating Monthly Costs for a Production Large Language Model Application

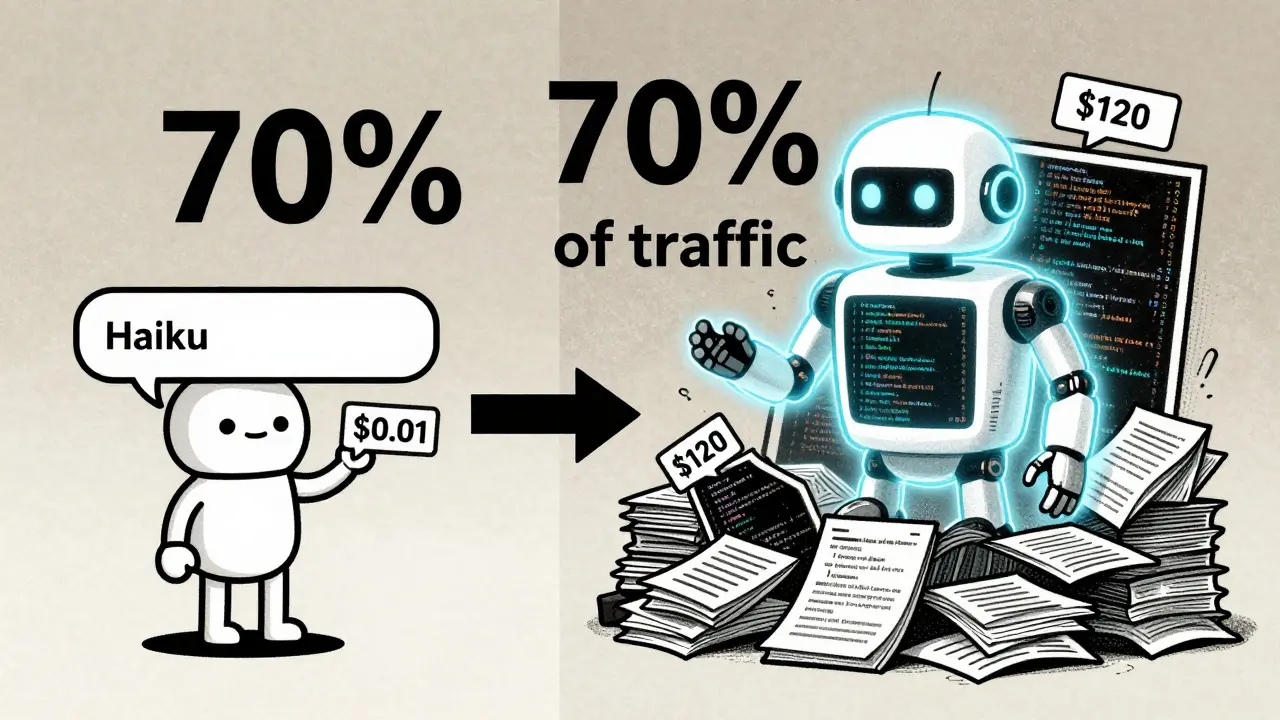

Estimating monthly costs for a production LLM application means accounting for infrastructure, model routing, and development expenses, not just API pricing. In 2026, a well-designed architecture can cut costs by as much as 90% compared to brute-force approaches.
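The estimate above can be sketched as a small calculation: per-token API spend, split across a large and a small model by a routing share, plus fixed monthly costs for infrastructure and development. This is a minimal sketch, not the article's method. All prices, token counts, the routing share, and the names `ModelPrice`, `token_cost` and `monthly_cost` are hypothetical placeholders that you should replace with your provider's rates and your own traffic data.

```python
from dataclasses import dataclass


@dataclass
class ModelPrice:
    # Hypothetical USD prices per million tokens; substitute real provider rates.
    input_per_m: float
    output_per_m: float


def token_cost(requests: int, in_tokens: int, out_tokens: int, price: ModelPrice) -> float:
    """API cost for `requests` calls with the given average input/output token counts."""
    per_request = in_tokens * price.input_per_m + out_tokens * price.output_per_m
    return requests * per_request / 1_000_000


def monthly_cost(
    requests: int,
    in_tokens: int,
    out_tokens: int,
    large: ModelPrice,
    small: ModelPrice,
    small_share: float,
    fixed_monthly: float,
) -> float:
    """Total monthly cost: routed API spend plus fixed infrastructure/development costs.

    `small_share` is the fraction of requests a (hypothetical) router sends to the
    cheaper model; 0.0 means every request goes to the large model (brute force).
    """
    small_reqs = round(requests * small_share)
    large_reqs = requests - small_reqs
    api = token_cost(large_reqs, in_tokens, out_tokens, large) + token_cost(
        small_reqs, in_tokens, out_tokens, small
    )
    return api + fixed_monthly


if __name__ == "__main__":
    # Illustrative numbers only, not measured prices or traffic.
    large = ModelPrice(input_per_m=10.0, output_per_m=30.0)
    small = ModelPrice(input_per_m=0.5, output_per_m=1.5)
    brute = monthly_cost(1_000_000, 1000, 200, large, small, small_share=0.0, fixed_monthly=2000.0)
    routed = monthly_cost(1_000_000, 1000, 200, large, small, small_share=0.8, fixed_monthly=2000.0)
    print(f"brute force: ${brute:,.2f}/month, routed: ${routed:,.2f}/month")
```

With these made-up inputs, routing 80% of traffic to the cheaper model drops the estimate from $18,000 to $5,840 per month. Actual savings depend entirely on your price gap, traffic mix, and how much of the bill is fixed cost.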
